
摘要:

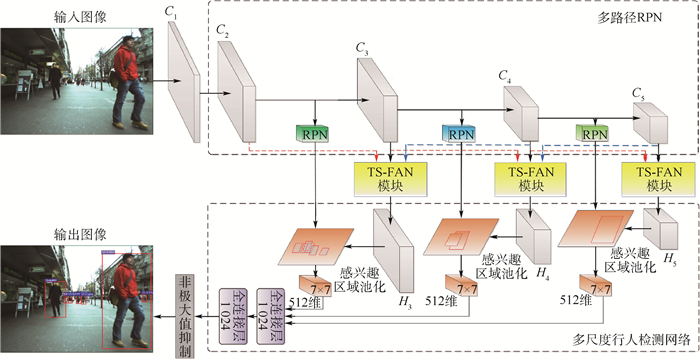

行人的空间尺度差异是影响行人检测性能的主要瓶颈之一。针对这一问题,提出了跨尺度特征聚合网络(TS-FAN)有效检测多尺度行人。首先,鉴于不同尺度空间呈现出的特征差异性,引入一种基于多路径区域建议网络(RPN)的尺度补偿策略,其在多尺度卷积特征层上自适应地生成一系列与其感受野大小相对应的候选目标尺度集。其次,考虑到不同层次卷积特征在视觉语义上的互补性,提出了跨尺度特征聚合网络模块,其通过横向连接、自上而下路径和由底向上路径,有效地聚合具有语义鲁棒性的高层特征和具有精确定位信息的低层特征,实现对卷积层特征的增强表示。最后,联合多路径RPN尺度补偿策略和跨尺度特征聚合网络模块,构建了一种尺度自适应感知的多尺度行人检测网络。实验结果表明,所提方法与当前一流的行人检测方法TLL-TFA相比,在整个Caltech公开测试数据集上(All:行人高度大于20像素)的行人漏检率降低到26.21%(提高了11.94%),尤其对于Caltech小尺寸行人子数据集上(Far:行人高度在20~30像素之间)的行人漏检率降低到47.30%(提高了12.79%),同时在尺度变化剧烈的ETH数据集上的效果也取得显著提升。

Abstract:Space scale variation of pedestrian instance is one of the main bottlenecks affecting pedestrian detection performance. For this issue, a Trans-Scale Feature Aggregation Network (TS-FAN) is proposed to effectively deal with multi-scale pedestrian detection. First, in view of the feature differences among different scale spaces, we introduce a scale compensation strategy based on multi-path Region Proposal Network (RPN). According to the effectiveness of the convolutional feature layers of different scales, a series of candidate regional scale sets are generated adaptively from the feature maps corresponding to the size of the receptive field. Second, considering the semantic complementarity of convolutional features at different levels, a trans-scale feature aggregation module is proposed to effectively aggregate with semantic robustness highllevel features and with accurate location information of low-level features and achieve enhanced representation ability of convolutional features, by aggregating horizontal connection, top-down path and bottom-up path. Finally, combining the multi-path RPN scale compensation strategy and trans-scale feature aggregation module, we construct a multi-scale pedestrian detection network by adaptive scale perception. The experimental results show that, compared with the state-of-the-art method TLL-TFA, the log-average miss rate of pedestrian detection on widely-used Caltech dataset is reduced to 26.21% (increased by 11.94%) for whole-scale pedestrians (above 20 pixel in height), and 47.30% (increased by 12.79%) for small-scale pedestrian (between 20-30 pixels in height). And the similar improvement is also achieved on ETH dataset with drastic scale variation.
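The aggregation idea described in the abstract (lateral connections, a top-down path, then a bottom-up path) can be illustrated with a minimal single-channel sketch. This is not the authors' module: the function names, the identity lateral connection, nearest-neighbour upsampling, 2x2 average pooling, and fusion by element-wise addition are all assumptions chosen to keep the example dependency-free. The names C3 to C5 and H3 to H5 follow the labels used in the tables of this paper; P3 to P5 denote the intermediate top-down maps.

```python
# Illustrative single-channel sketch of trans-scale feature aggregation.
# Assumption: a real network uses learned convolutions; here the lateral
# connection is the identity and fusion is element-wise addition.

def upsample2x(fm):
    """Nearest-neighbour 2x upsampling of a 2D feature map (list of rows)."""
    out = []
    for row in fm:
        wide = [v for v in row for _ in range(2)]  # repeat each value twice
        out.append(wide)
        out.append(list(wide))                     # repeat each row twice
    return out

def downsample2x(fm):
    """2x2 average pooling with stride 2 (height and width must be even)."""
    return [
        [(fm[i][j] + fm[i][j + 1] + fm[i + 1][j] + fm[i + 1][j + 1]) / 4.0
         for j in range(0, len(fm[0]), 2)]
        for i in range(0, len(fm), 2)
    ]

def add(a, b):
    """Element-wise sum of two feature maps of identical shape."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def aggregate(c3, c4, c5):
    """Fuse three pyramid levels, where each level halves the resolution."""
    # Top-down path: semantic information flows from coarse to fine levels.
    p5 = c5
    p4 = add(c4, upsample2x(p5))
    p3 = add(c3, upsample2x(p4))
    # Bottom-up path: localization detail flows from fine to coarse levels.
    h3 = p3
    h4 = add(p4, downsample2x(h3))
    h5 = add(p5, downsample2x(h4))
    return h3, h4, h5

if __name__ == "__main__":
    c3 = [[1.0] * 8 for _ in range(8)]
    c4 = [[1.0] * 4 for _ in range(4)]
    c5 = [[1.0] * 2 for _ in range(2)]
    h3, h4, h5 = aggregate(c3, c4, c5)
    print(h3[0][0], h4[0][0], h5[0][0])  # 3.0 5.0 6.0
```

With all-ones inputs, each output value simply counts how many levels contributed to it, which makes the two information paths easy to trace by hand.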


Table 1. Ablation experiment of RPN on the Caltech dataset

| Feature layer | R300/% (All subset) | R300/% (Far subset) | R300/% (Medium subset) | R300/% (Near subset) |
| --- | --- | --- | --- | --- |
| C3 | 87.7 | 71.5 | 90.6 | 91.9 |
| C4 | 92.8 | 75.2 | 95.9 | 97.7 |
| C5 | 82.4 | 59.7 | 85.4 | 95.2 |
| P34 | 95.5 | 89.1 | 96.8 | 97.3 |
| C34 | 95.3 | 93.7 | 95.7 | 97.9 |
| P45 | 92.9 | 76.2 | 96.3 | 97.3 |
| C45 | 93.3 | 77.3 | 97.7 | 97.9 |
| P345 | 93.7 | 91.1 | 94.5 | 93.4 |
| C345 | 97.2 | 93.7 | 97.7 | 97.9 |

Table 2. Validation of the effectiveness of trans-scale aggregated features on the Caltech dataset

| Method | Proposal | MR-2/% (Reasonable subset) | MR-2/% (Near subset) | MR-2/% (Medium subset) | MR-2/% (Far subset) |
| --- | --- | --- | --- | --- | --- |
| FPN-P3 | C3 | 31.29 | 43.31 | 31.75 | 54.06 |
| TS-FAN-H3 | C3 | 13.84 | 15.31 | 20.50 | 52.80 |
| FPN-P4 | C4 | 5.33 | 0.72 | 24.65 | 75.41 |
| TS-FAN-H4 | C4 | 5.12 | 0.47 | 20.08 | 65.50 |
| FPN-P5 | C5 | 28.45 | 2.05 | 75.82 | 100.00 |
| TS-FAN-H5 | C5 | 37.96 | 1.97 | 82.73 | 100.00 |
| TS-FAN-H3H4H5 | C4 | 6.16 | 1.57 | 17.24 | 50.38 |
| TS-FAN-H3H4H5 | C345 | 5.53 | 0.47 | 13.76 | 47.30 |

Table 3. Comparison of the proposed method with state-of-the-art methods on the Caltech dataset under different overlapping evaluation protocols

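| Method | MR-2/% (Reasonable subset) | MR-2/% (All subset) | MR-2/% (Near subset) | MR-2/% (Medium subset) | MR-2/% (Far subset) | MR-2/% (Partial subset) | MR-2/% (Heavy subset) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| FasterRCNN+ATT [28] | 10.33 | 54.51 | 1.43 | 40.75 | 90.94 | 22.29 | 45.18 |
| RPN+BF [29] | 9.58 | 64.66 | 2.26 | 53.93 | 100 | 24.23 | 74.36 |
| AR-Ped [35] | 6.45 | 58.83 | 1.37 | 49.31 | 100 | 11.93 | 48.80 |
| TLL-TFA [21] | 7.40 | 38.15 | 0.72 | 22.92 | 60.09 | 18.49 | 28.66 |
| TS-FAN (this paper) | 5.53 | 26.21 | 0.47 | 13.76 | 47.30 | 10.68 | 17.82 |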
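The MR-2 values reported in the tables are log-average miss rates. As commonly defined for the Caltech benchmark, this is the geometric mean of the miss rate sampled at nine false-positives-per-image (FPPI) values evenly spaced in log space between 10^-2 and 10^0. The sketch below shows that computation under stated assumptions: the function name and input layout are hypothetical, the curve is assumed to be sorted by ascending FPPI, and a reference point that the curve never reaches is assigned a miss rate of 1.0, which may differ from the handling in the official evaluation toolbox.

```python
import math

def log_average_miss_rate(fppi, miss_rate):
    """Geometric mean of miss rates at 9 log-spaced FPPI reference points.

    fppi      -- FPPI values of the curve, sorted in ascending order
    miss_rate -- miss rate at each FPPI value (fractions in [0, 1])
    """
    # Reference points 10^-2, 10^-1.75, ..., 10^0.
    refs = [10 ** (-2 + 0.25 * k) for k in range(9)]
    logs = []
    for ref in refs:
        mr = 1.0  # assumption: no curve point at or below ref means all missed
        for f, m in zip(fppi, miss_rate):
            if f <= ref:
                mr = m  # keep the miss rate at the largest FPPI <= ref
            else:
                break
        # Clamp to avoid log(0) when the miss rate is exactly zero.
        logs.append(math.log(max(mr, 1e-10)))
    return math.exp(sum(logs) / len(logs))

if __name__ == "__main__":
    # A flat curve at 25% miss rate yields a log-average of about 0.25.
    print(log_average_miss_rate([0.001, 0.1, 1.0], [0.25, 0.25, 0.25]))
```

Because the average is taken in log space, a detector is rewarded for keeping the miss rate low at the strict, low-FPPI end of the curve as well as at the permissive end.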

[1] REN S, HE K, GIRSHICK R, et al. Faster R-CNN: Towards real-time object detection with region proposal networks[C]//International Conference on Neural Information Processing Systems. Cambridge: MIT Press, 2015: 91-99.

[2] LIU W, ANGUELOV D, ERHAN D, et al. SSD: Single shot multibox detector[C]//European Conference on Computer Vision. Berlin: Springer, 2016: 21-37. https://www.researchgate.net/publication/286513835_SSD_Single_Shot_MultiBox_Detector

[3] ZHANG X W, CHENG L C, LI B, et al. Too far to see? Not really!-Pedestrian detection with scale-aware localization policy[J]. IEEE Transactions on Image Processing, 2018, 27(8): 3703-3715. doi: 10.1109/TIP.2018.2818018

[4] GIRSHICK R, DONAHUE J, DARRELL T, et al. Rich feature hierarchies for accurate object detection and semantic segmentation[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2014: 580-587.

[5] DALAL N, TRIGGS B. Histograms of oriented gradients for human detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2005: 886-893.

[6] CHONG Y W, KUANG H L, LI Q Q. Two-stage pedestrian detection based on multiple features and machine learning[J]. Acta Automatica Sinica, 2012, 38(3): 375-381 (in Chinese). http://www.wanfangdata.com.cn/details/detail.do?_type=perio&id=zdhxb201203007

[7] SINGH B, DAVIS L. An analysis of scale invariance in object detection - SNIP[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 3578-3587.

[8] SINGH B, NAJIBI M, DAVIS L. SNIPER: Efficient multi-scale training[C]//International Conference on Neural Information Processing Systems. Cambridge: MIT Press, 2018: 9310-9320. https://www.researchgate.net/publication/325333259_SNIPER_Efficient_Multi-Scale_Training

[9] LIU S T, HUANG D, WANG Y H. Receptive field block net for accurate and fast object detection[C]//European Conference on Computer Vision. Berlin: Springer, 2018: 385-400. https://www.researchgate.net/publication/321210791_Receptive_Field_Block_Net_for_Accurate_and_Fast_Object_Detection

[10] LIU W, LIAO S C, HU W D, et al. Learning efficient single-stage pedestrian detectors by asymptotic localization fitting[C]//European Conference on Computer Vision. Berlin: Springer, 2018: 643-659. doi: 10.1007/978-3-030-01264-9_38

[11] HE K M, ZHANG X Y, REN S Q, et al. Deep residual learning for image recognition[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2016: 770-778.

[12] HONARI S, YOSINSKI J, VINCENT P, et al. Recombinator networks: Learning coarse-to-fine feature aggregation[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2016: 5743-5752. http://www.researchgate.net/publication/311609590_Recombinator_Networks_Learning_Coarse-to-Fine_Feature_Aggregation

[13] TAN H C, LI S H, LIU B, et al. Feature enhancement SSD for object detection[J]. Journal of Computer-Aided Design & Computer Graphics, 2019, 31(4): 63-69 (in Chinese). http://www.wanfangdata.com.cn/details/detail.do?_type=perio&id=jsjfzsjytxxxb201904007

[14] LIN T Y, DOLLAR P, GIRSHICK R, et al. Feature pyramid networks for object detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2017: 2117-2125. http://www.researchgate.net/publication/311573567_Feature_Pyramid_Networks_for_Object_Detection

[15] LIU S, QI L, QIN H F, et al. Path aggregation network for instance segmentation[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 8759-8768. http://www.researchgate.net/publication/323571076_Path_Aggregation_Network_for_Instance_Segmentation

[16] ZHAO Q J, SHENG T, WANG Y T, et al. M2Det: A single-shot object detector based on multi-level feature pyramid network[C]//AAAI Conference on Artificial Intelligence. Menlo Park: AAAI Press, 2019, 33: 9259-9266. https://www.researchgate.net/publication/335239949_M2Det_A_Single-Shot_Object_Detector_Based_on_Multi-Level_Feature_Pyramid_Network

[17] LI Y, CHEN Y, WANG N, et al. Scale-aware trident networks for object detection[C]//IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2019: 6054-6063. http://ieeexplore.ieee.org/document/9010716/

[18] LI J, LIANG X, SHEN S M, et al. Scale-aware fast R-CNN for pedestrian detection[J]. IEEE Transactions on Multimedia, 2017, 20(4): 985-996. http://www.wanfangdata.com.cn/details/detail.do?_type=perio&id=Arxiv000001335067

[19] DOLLAR P, WOJEK C, SCHIELE B, et al. Pedestrian detection: An evaluation of the state of the art[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2011, 34(4): 743-761. doi: 10.1109/TPAMI.2011.155

[20] ESS A, LEIBE B, GOOL L V. Depth and appearance for mobile scene analysis[C]//IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2007: 1-8. https://www.researchgate.net/publication/228797908_Depth_and_Appearance_for_Mobile_Scene_Analysis

[21] SONG T, SUN L Y, XIE D, et al. Small-scale pedestrian detection based on topological line localization and temporal feature aggregation[C]//European Conference on Computer Vision. Berlin: Springer, 2018: 536-551.

[22] WANG G, CHEN Y G, YANG S C, et al. Robust visual saliency detection method for infrared small target[J]. Journal of Beijing University of Aeronautics and Astronautics, 2015, 41(12): 2309-2318 (in Chinese). doi: 10.13700/j.bh.1001-5965.2014.0834

[23] ZHOU P, NI B B, GENG C, et al. Scale-transferrable object detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 528-537.

[24] LI X G, FU C P, LI X L, et al. Improved Faster R-CNN for multi-scale object detection[J]. Journal of Computer-Aided Design & Computer Graphics, 2019, 31(7): 1095-1101 (in Chinese). http://www.wanfangdata.com.cn/details/detail.do?_type=perio&id=jsjfzsjytxxxb201907005

[25] PEI W, XU Y M, ZHU Y Y, et al. The target detection method of aerial photography images with improved SSD[J]. Journal of Software, 2019, 30(3): 248-268 (in Chinese). http://www.wanfangdata.com.cn/details/detail.do?_type=perio&id=rjxb201903015

[26] XU B, NIU Y X, LYU J M. Object detection and segmentation algorithm in complex dynamic scene[J]. Journal of Beijing University of Aeronautics and Astronautics, 2016, 42(2): 310-317 (in Chinese). doi: 10.13700/j.bh.1001-5965.2015.0113

[27] LIU W, LIAO S C, REN W Q, et al. High-level semantic feature detection: A new perspective for pedestrian detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2019: 5187-5196. https://www.researchgate.net/publication/332263773_High-level_Semantic_Feature_DetectionA_New_Perspective_for_Pedestrian_Detection

[28] ZHANG S J, YANG J, SCHIELE B. Occluded pedestrian detection through guided attention in CNNs[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 6995-7003. https://www.researchgate.net/publication/329748825_Occluded_Pedestrian_Detection_Through_Guided_Attention_in_CNNs

[29] ZHANG L L, LIN L, LIANG X D, et al. Is Faster R-CNN doing well for pedestrian detection?[C]//European Conference on Computer Vision. Berlin: Springer, 2018: 618-634. https://www.researchgate.net/publication/305638304_Is_Faster_R-CNN_Doing_Well_for_Pedestrian_Detection

[30] ZHANG S S, BENENSON R, SCHIELE B. CityPersons: A diverse dataset for pedestrian detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2017: 3213-3221. https://www.researchgate.net/publication/320971355_CityPersons_A_Diverse_Dataset_for_Pedestrian_Detection

[31] DU X Z, EL-KHAMY M, LEE J, et al. Fused DNN: A deep neural network fusion approach to fast and robust pedestrian detection[C]//IEEE Winter Conference on Applications of Computer Vision. Piscataway: IEEE Press, 2017: 953-961. https://www.researchgate.net/publication/316948537_Fused_DNN_A_Deep_Neural_Network_Fusion_Approach_to_Fast_and_Robust_Pedestrian_Detection

[32] WANG S G, CHENG J, LIU H J, et al. PCN: Part and context information for pedestrian detection with CNNs[EB/OL]. (2018-04-12)[2020-01-27]. https://arxiv.org/abs/1804.04483.

[33] LIN C Z, LU J W, WANG G, et al. Graininess-aware deep feature learning for pedestrian detection[C]//European Conference on Computer Vision. Berlin: Springer, 2018: 732-747.

[34] DU X, EL-KHAMY M, MORARIU V I, et al. Fused deep neural networks for efficient pedestrian detection[EB/OL]. (2018-05-02)[2020-01-27]. https://arxiv.org/abs/1805.08688.

[35] BRAZIL G, LIU X M. Pedestrian detection with autoregressive network phases[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2019: 7231-7240. https://www.researchgate.net/profile/Xiaoming_Liu8/publication/329387855_Pedestrian_Detection_with_Autoregressive_Network_Phases/links/5c16ef8b299bf139c75e25f7/Pedestrian-Detection-with-Autoregressive-Network-Phases.pdf

[36] DOLLAR P, TU Z, PERONA P, et al. Integral channel features[C]//British Machine Vision Conference, 2009: 91.1-91.11. https://www.researchgate.net/publication/221259850_Integral_Channel_Features

[37] OUYANG W L, WANG X G. Joint deep learning for pedestrian detection[C]//IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2013: 2056-2063. https://www.researchgate.net/publication/261857512_Joint_Deep_Learning_for_Pedestrian_Detection

[38] ZENG X Y, OUYANG W L, WANG X G. Multi-stage contextual deep learning for pedestrian detection[C]//IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2013: 121-128. https://www.researchgate.net/publication/262400254_Multi-stage_Contextual_Deep_Learning_for_Pedestrian_Detection

[39] OUYANG W L, ZENG X Y, WANG X G. Modeling mutual visibility relationship in pedestrian detection[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2013: 3222-3229. https://www.researchgate.net/publication/253329150_Modeling_Mutual_Visibility_Relationship_in_Pedestrian_Detection

[40] TIAN Y L, LUO P, WANG X G, et al. Pedestrian detection aided by deep learning semantic tasks[C]//IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2015: 5079-5087. https://www.researchgate.net/publication/308806299_Pedestrian_detection_aided_by_deep_learning_semantic_tasks
