
摘要:

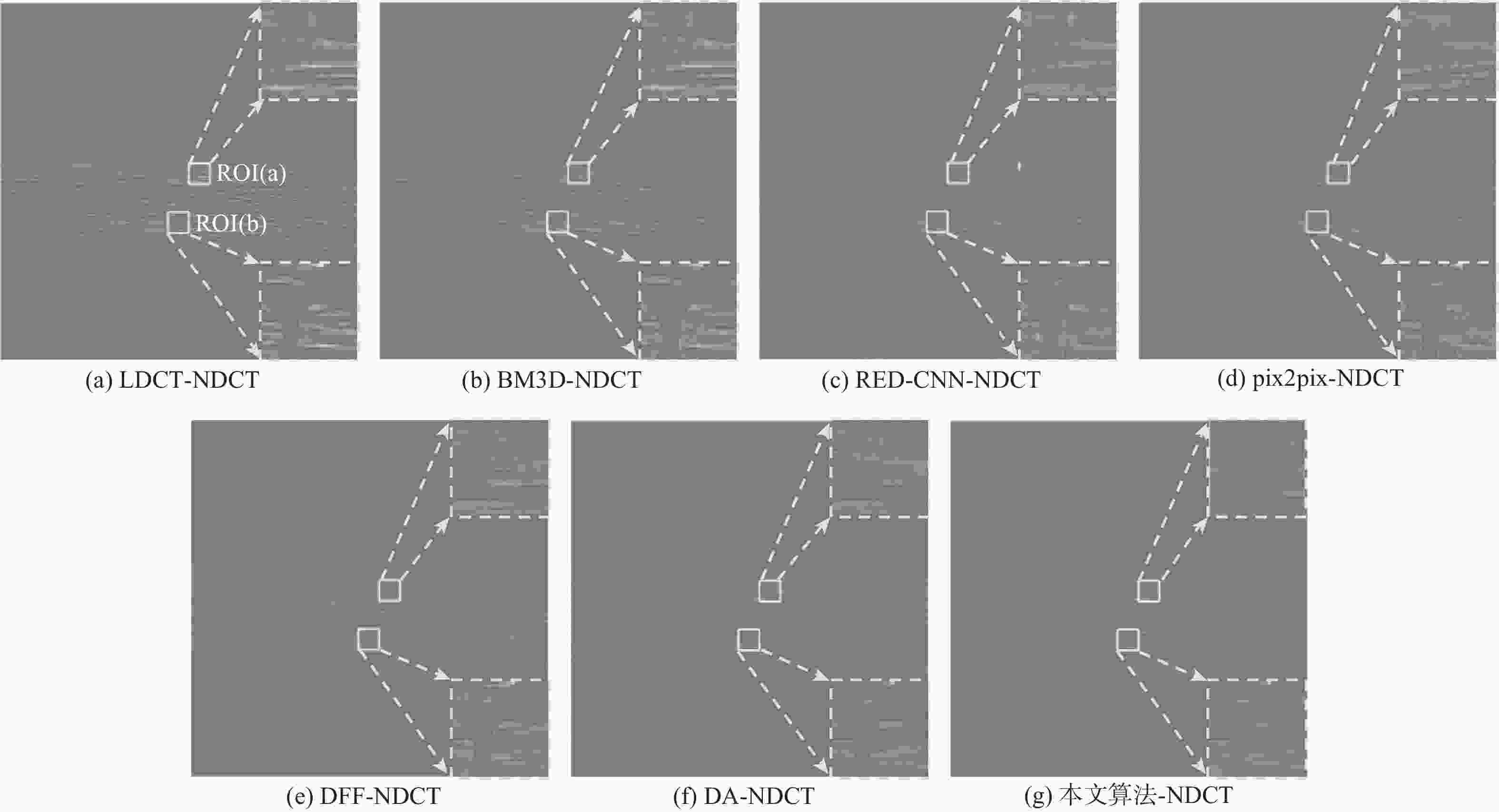

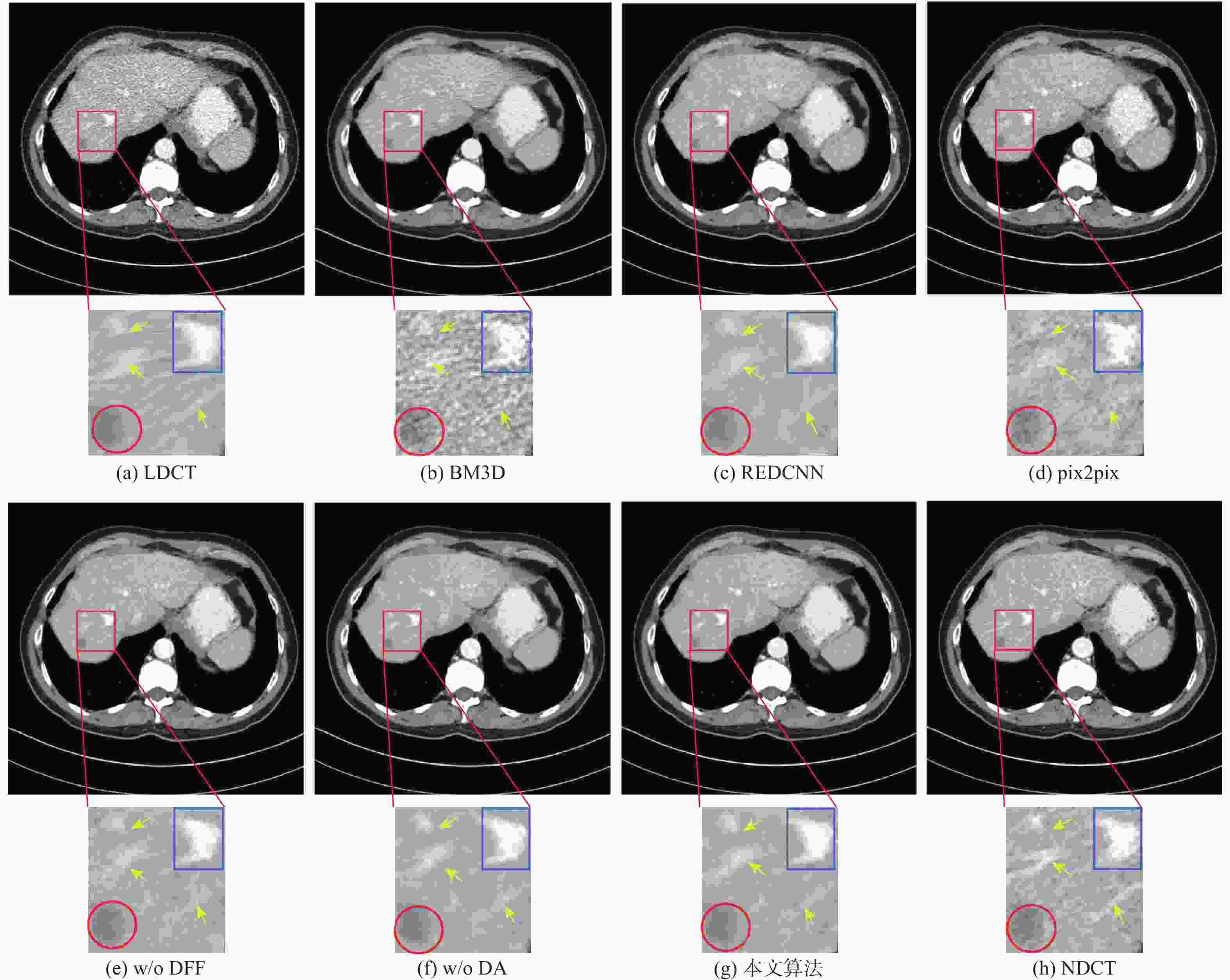

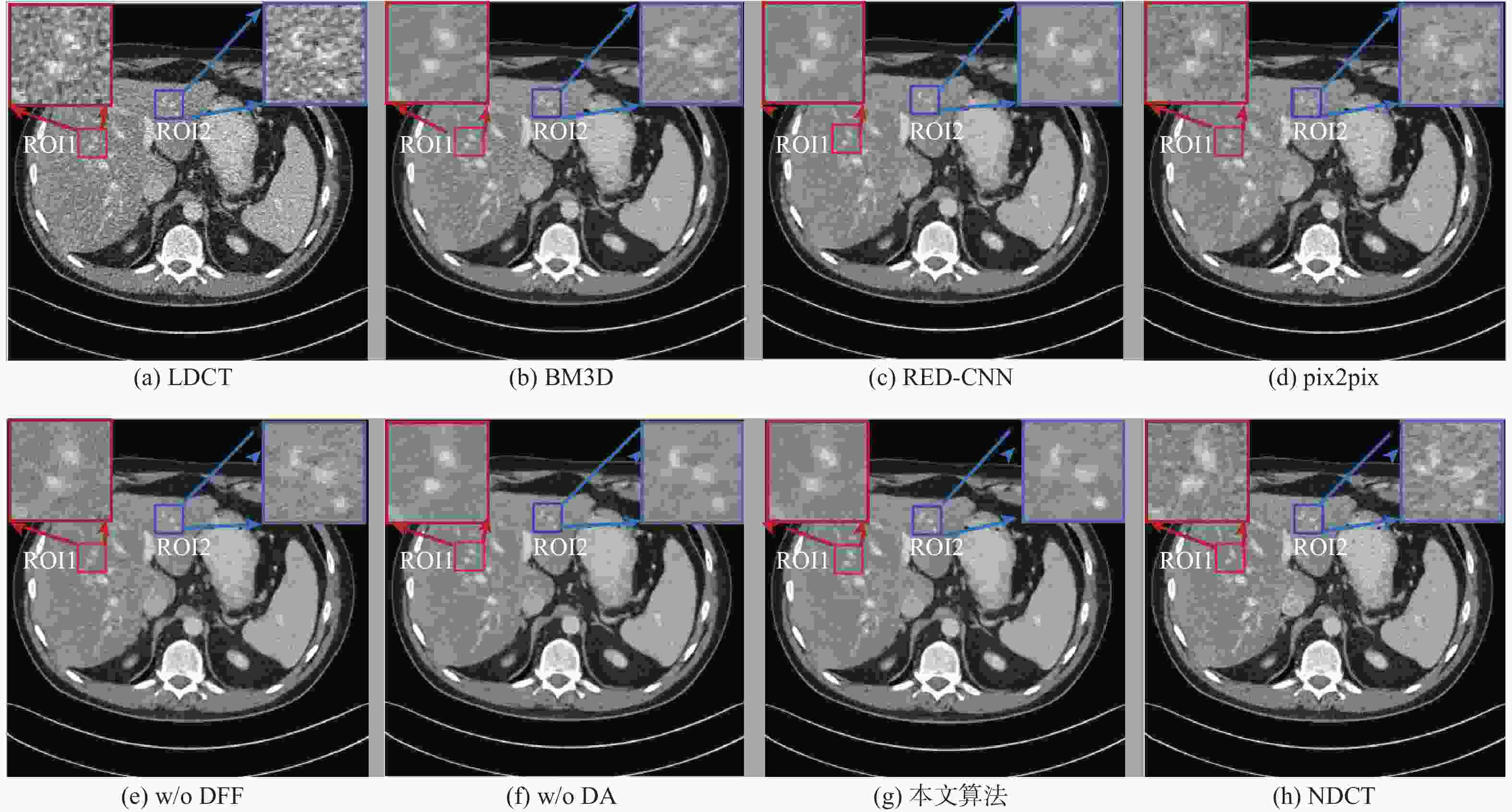

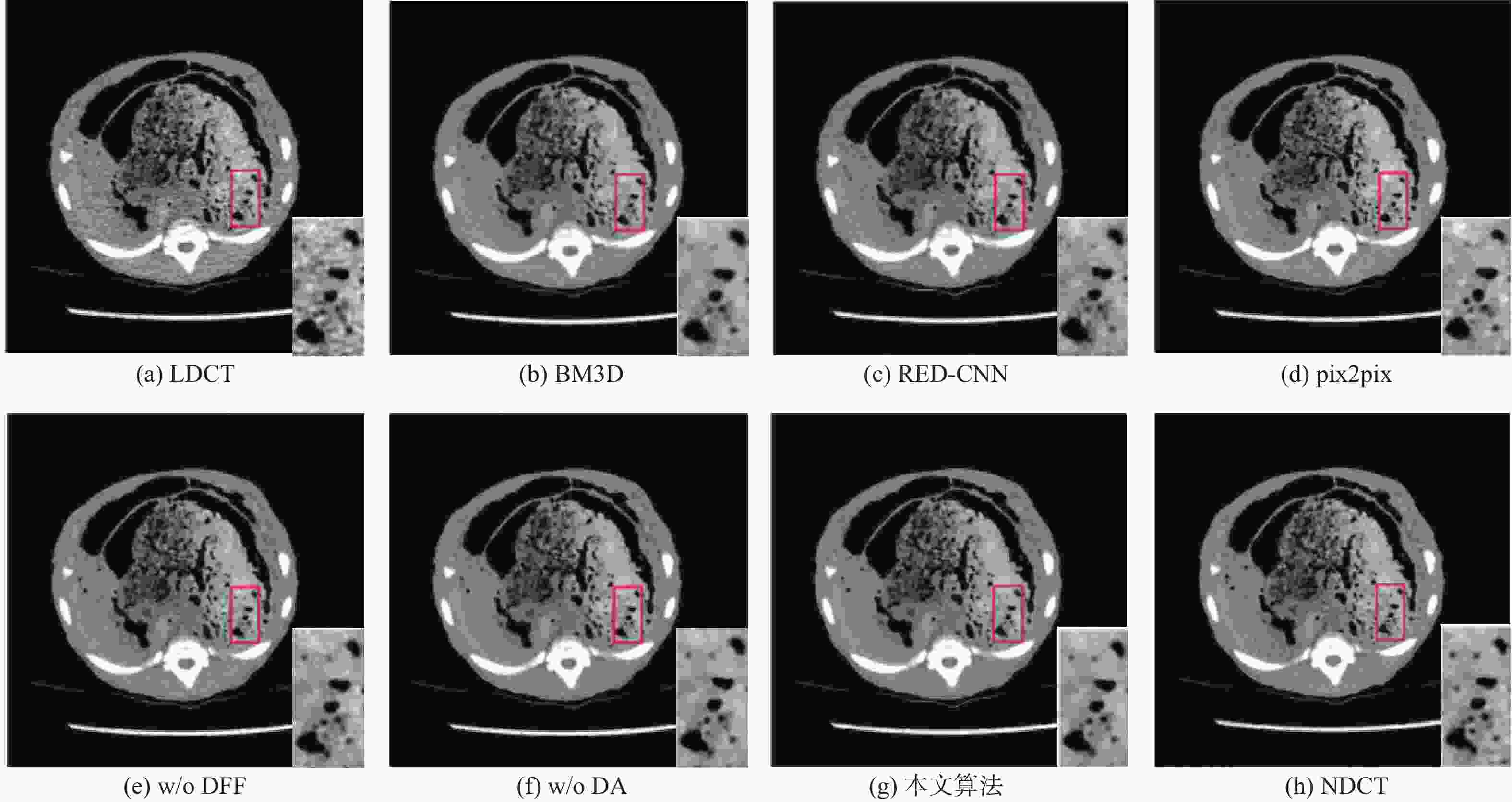

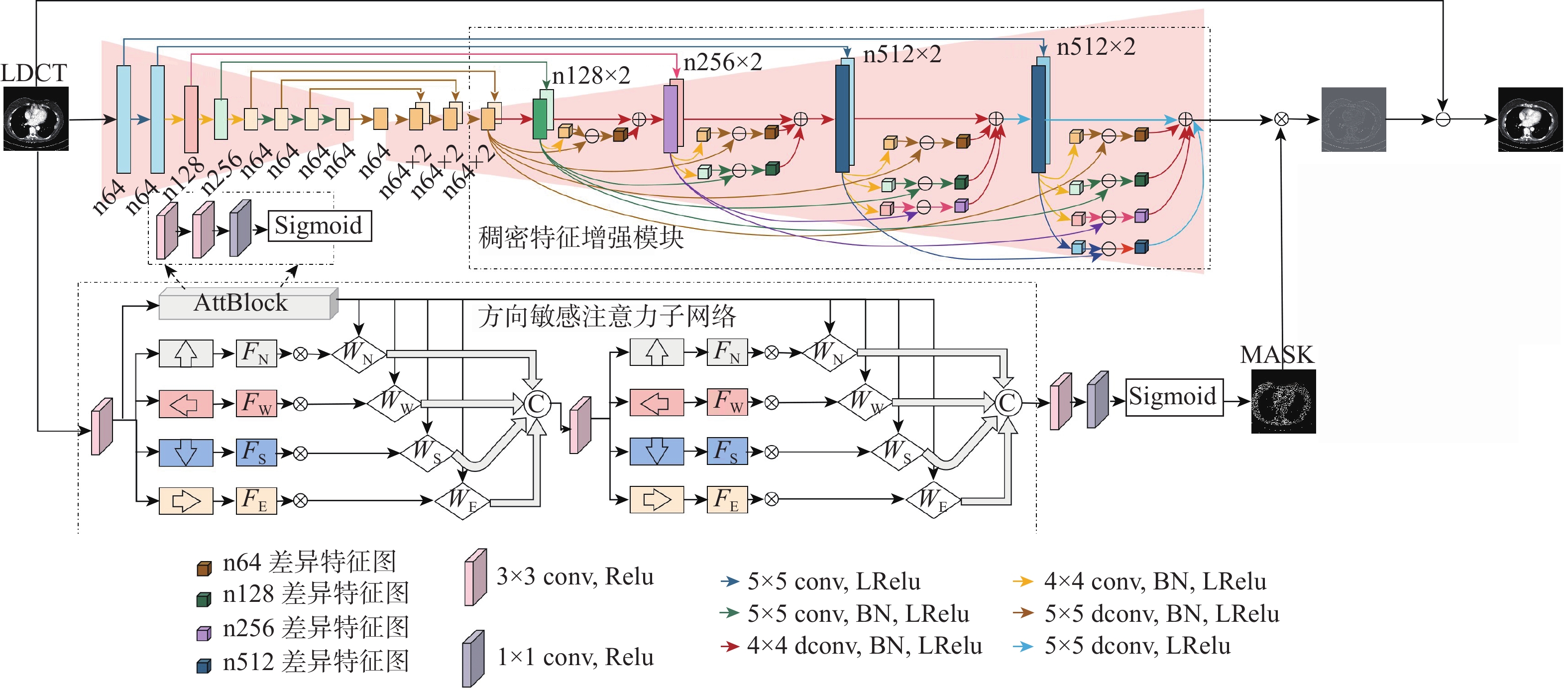

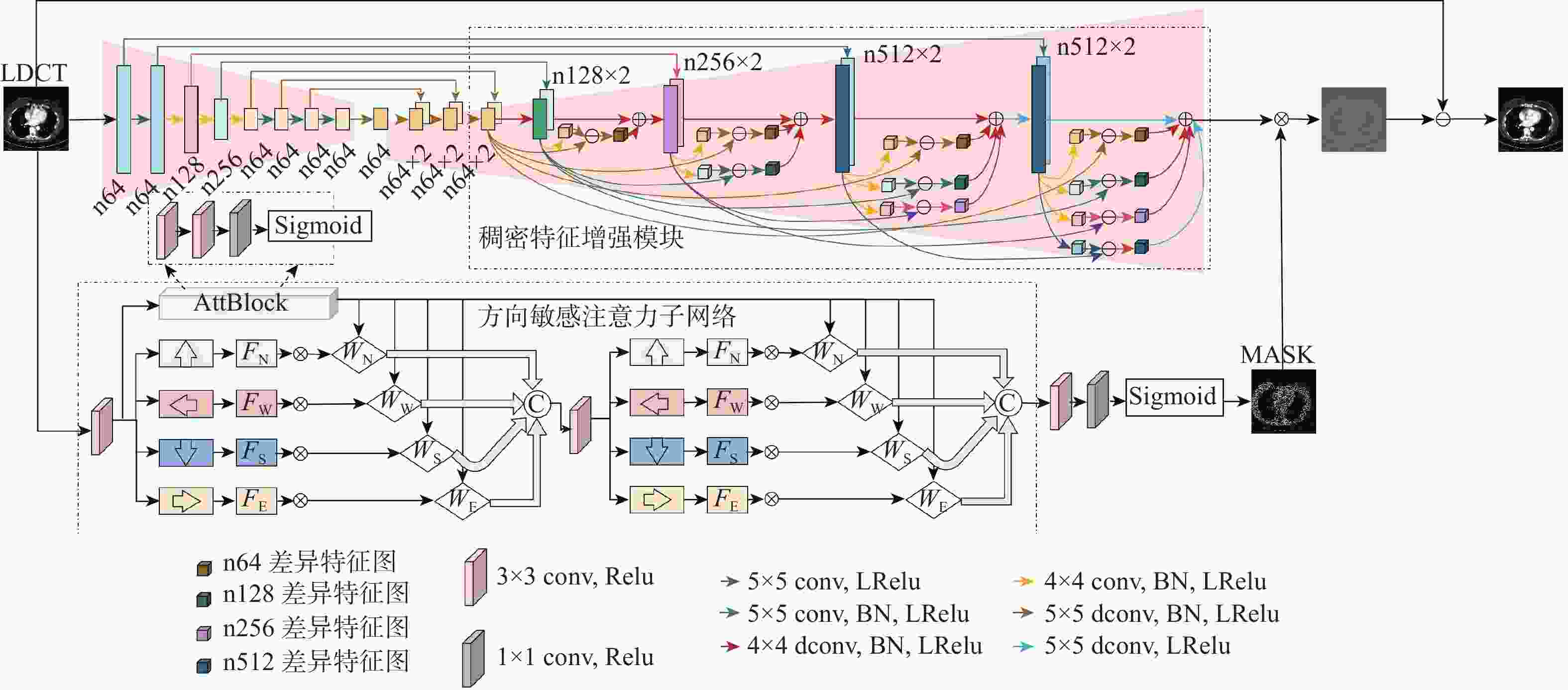

低剂量CT(LDCT)包含丰富组织结构、病理信息和分布极其不规律的噪声伪影,这2种信息的幅度值分布规律相似。因此,LDCT降噪任务易出现特征提取不充分、网络对噪声伪影方向特性敏感度不足及降噪结果过度平滑等问题。为此,应用U-Net网络作为去噪网络的基本模型,设计了一种基于伪影估计的LDCT降噪网络。所提网络模型主要包括主特征提取网络和方向敏感注意力子网络2部分。为充分利用不同尺度特征之间的差异性,提高特征提取有效性,在编解码U-Net结构基础上增加了一个稠密特征增强模块;为提高降噪网络对噪声伪影方向特征的敏感度,设计了一个方向敏感注意力子网络;为保障网络训练稳定性,设计了多种损失函数来共同优化网络训练过程。实验结果表明:与目前主流的LDCT降噪方法相比,所提方法降噪结果的视觉效果与量化指标均表现最佳。

Abstract:Low-dose CT (LDCT) contains abundant tissue structure, pathological information and noise artifacts with extremely irregular distribution.These two different types of information have comparable amplitude distributions. Therefore, the LDCT denoising task is prone to some problems, such as insufficient feature extraction, insufficient network sensitivity to the directional characteristics of noise artifacts, and excessive smoothing of the denoising results. In response to the above problems, this work uses the U-Net network as the basic model of the denoising network, and designs a LDCT denoising network based on artifact estimation. The proposed network mainly includes two parts: the main feature extraction network and the direction-sensitive attention sub-network.Firstly, to better use the differences between various scale features and increase the efficiency of feature extraction, we add a dense feature improvement module to the codec U-Net structure. Secondly, we design a direction-sensitive attention subnetwork to improve the sensitivity of the denoising network to the direction characteristics of the noise artifacts. Finally, to ensure the stability of network training, we utilize a variety of loss functions to optimize the network training process. The experimental results show that the proposed algorithm is superior to other mainstream LDCT denoising algorithms in terms of visual effects and quantitative indicators.
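The abstract describes an LDCT denoising network "based on artifact estimation". A common way to realize such a model is residual learning: the network predicts the noise/artifact map, and the denoised image is the LDCT input minus that estimate. The minimal pure-Python sketch below illustrates only this output formulation, under that assumption. The trained U-Net (with its dense feature enhancement module and direction-sensitive attention sub-network) is replaced by a hypothetical stand-in, `toy_artifact_estimator`, which simply treats the high-frequency residual left after a 3x3 mean filter as the "artifact"; `box_blur_3x3` and `denoise` are likewise illustrative names, not the paper's code.

```python
from typing import Callable, List

Image = List[List[float]]  # 2-D grayscale image as nested lists


def box_blur_3x3(img: Image) -> Image:
    """3x3 mean filter; border pixels are replicated."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            total = 0.0
            for di in (-1, 0, 1):
                for dj in (-1, 0, 1):
                    # Clamp indices so edge pixels are reused at the border.
                    ii = min(max(i + di, 0), h - 1)
                    jj = min(max(j + dj, 0), w - 1)
                    total += img[ii][jj]
            out[i][j] = total / 9.0
    return out


def toy_artifact_estimator(ldct: Image) -> Image:
    """HYPOTHETICAL stand-in for the trained network: high-frequency residual."""
    smooth = box_blur_3x3(ldct)
    return [[p - s for p, s in zip(rp, rs)] for rp, rs in zip(ldct, smooth)]


def denoise(ldct: Image, estimate_artifact: Callable[[Image], Image]) -> Image:
    """Artifact-estimation output: denoised = LDCT - estimated artifact."""
    artifact = estimate_artifact(ldct)
    return [[p - a for p, a in zip(rp, ra)] for rp, ra in zip(ldct, artifact)]


ldct = [[0.0, 0.0, 0.0], [0.0, 9.0, 0.0], [0.0, 0.0, 0.0]]
print(denoise(ldct, toy_artifact_estimator))
# → [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
```

With this toy estimator the output reduces to a plain 3x3 mean filter; the point of the formulation is that any estimator, including a trained network, can be plugged in as `estimate_artifact`.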


Keywords:

- low-dose CT
- image denoising
- U-Net
- attention mechanism
- noise estimation
- feature fusion


Table 1. Subjective evaluation scores

| Denoising method | Tissue identifiability | Noise suppression | Overall image quality |
|---|---|---|---|
| LDCT | 2.85 | 2.75 | 2.80 |
| BM3D | 2.95 | 3.15 | 3.10 |
| RED-CNN | 3.60 | 3.55 | 3.65 |
| pix2pix | 4.05 | 4.25 | 4.20 |
| Proposed method | 4.35 | 4.45 | 4.45 |
| NDCT | 4.90 | 4.95 | 4.95 |

Table 2. Mean PSNR and SSIM (mean ± SD) of four denoising methods on the Mayo test set

| Denoising method | PSNR (dB, mean ± SD) | SSIM (mean ± SD) |
|---|---|---|
| LDCT | 26.7891 ± 1.9782 | 0.8100 ± 0.0535 |
| BM3D | 30.2235 ± 1.9193 | 0.8557 ± 0.0455 |
| RED-CNN | 30.9054 ± 1.7647 | 0.8610 ± 0.0418 |
| pix2pix | 28.3183 ± 1.6599 | 0.8111 ± 0.0545 |
| Proposed method | 31.3091 ± 1.7442 | 0.8738 ± 0.0410 |
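The PSNR values and the MEAN±SD entries in Table 2 come from per-image scores aggregated over the test set, which can be sketched as follows. This is a minimal pure-Python sketch, not the evaluation code behind the table: the data range used for PSNR (for example a Hounsfield-unit window or intensities normalized to [0, 1]) and whether the SD is the sample or the population standard deviation are not stated in this section, so `data_range` is a caller-supplied assumption and `statistics.stdev` (sample SD) is only one possible choice.

```python
import math
import statistics
from typing import List, Sequence, Tuple

Image = List[List[float]]


def psnr(ref: Image, test: Image, data_range: float) -> float:
    """PSNR in dB: 10 * log10(data_range**2 / MSE); inf if the images are identical."""
    sq_err = [(r - t) ** 2 for rr, tr in zip(ref, test) for r, t in zip(rr, tr)]
    mse = sum(sq_err) / len(sq_err)
    if mse == 0.0:
        return math.inf
    return 10.0 * math.log10(data_range ** 2 / mse)


def mean_sd(scores: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of per-image scores (one 'MEAN±SD' cell)."""
    return statistics.mean(scores), statistics.stdev(scores)


ref = [[0.0, 0.0], [0.0, 0.0]]
noisy = [[0.0, 0.0], [0.0, 255.0]]
print(round(psnr(ref, noisy, data_range=255.0), 4))  # → 6.0206
```

In practice `psnr` would be evaluated once per test slice (denoised result against the NDCT reference) and the resulting list passed to `mean_sd`.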

Table 3. Denoising results of four denoising methods on two representative LDCT images

| Denoising method | Chest PSNR (dB) | Chest SSIM | Chest VIF | Chest IFC | Chest NQM | Abdomen PSNR (dB) | Abdomen SSIM | Abdomen VIF | Abdomen IFC | Abdomen NQM |
|---|---|---|---|---|---|---|---|---|---|---|
| LDCT | 23.0729 | 0.8288 | 0.4095 | 1.9985 | 16.9253 | 22.8869 | 0.6960 | 0.2432 | 1.9498 | 24.5226 |
| BM3D | 23.8703 | 0.8403 | 0.3688 | 1.7772 | 16.9187 | 27.1470 | 0.7612 | 0.2937 | 2.3825 | 25.7574 |
| RED-CNN | 25.2797 | 0.8764 | 0.3147 | 1.3950 | 16.3417 | 27.5432 | 0.7688 | 0.2933 | 2.3726 | 25.2474 |
| pix2pix | 26.4141 | 0.8700 | 0.3741 | 1.7694 | 17.4361 | 25.5831 | 0.7063 | 0.2632 | 2.0484 | 23.4076 |
| Proposed method | 28.0788 | 0.8951 | 0.4387 | 2.1998 | 19.0731 | 27.9521 | 0.7736 | 0.3078 | 2.5199 | 26.1564 |
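SSIM, reported in Tables 2 to 5, compares luminance, contrast and structure between a result and its reference. The function below evaluates the SSIM formula of Wang et al. once over the whole image, with the usual constants K1 = 0.01 and K2 = 0.03. This is a deliberate simplification for illustration: standard SSIM computes the same expression in local windows (commonly an 11x11 Gaussian with σ = 1.5) and averages the resulting map, so values from this sketch will not reproduce the tables. `global_ssim` is an illustrative name.

```python
from typing import List

Image = List[List[float]]


def global_ssim(x: Image, y: Image, data_range: float,
                k1: float = 0.01, k2: float = 0.03) -> float:
    """SSIM formula evaluated once over the whole image (no sliding window)."""
    xs = [v for row in x for v in row]
    ys = [v for row in y for v in row]
    n = len(xs)
    mu_x, mu_y = sum(xs) / n, sum(ys) / n
    var_x = sum((a - mu_x) ** 2 for a in xs) / n
    var_y = sum((b - mu_y) ** 2 for b in ys) / n
    cov_xy = sum((a - mu_x) * (b - mu_y) for a, b in zip(xs, ys)) / n
    # Stabilizing constants C1 = (K1 * L)^2 and C2 = (K2 * L)^2, L = data range.
    c1 = (k1 * data_range) ** 2
    c2 = (k2 * data_range) ** 2
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


img = [[0.1, 0.5], [0.9, 0.3]]
print(round(global_ssim(img, img, data_range=1.0), 6))  # → 1.0
```

Identical images score 1; structurally inverted images (negative covariance) score below 0.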

Table 4. Effect of network-structure ablation on performance

| Network structure | Mean SSIM | Mean PSNR (dB) |
|---|---|---|
| w/o DFF | 0.8570 | 30.4679 |
| w/o DA | 0.8722 | 31.2892 |
| Proposed method (full) | 0.8738 | 31.3091 |
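The contribution of each module in Table 4 is the drop from the full model to the corresponding ablated variant. The short sketch below computes these differences directly from the table values; the keys `w/o DFF` and `w/o DA` are the labels used in the table, while `full` and `ablation_drops` are hypothetical names introduced for illustration.

```python
from typing import Dict, Tuple

# Mean SSIM and mean PSNR (dB) copied from Table 4.
TABLE4: Dict[str, Tuple[float, float]] = {
    "w/o DFF": (0.8570, 30.4679),
    "w/o DA": (0.8722, 31.2892),
    "full": (0.8738, 31.3091),  # hypothetical key for the complete model
}


def ablation_drops(table: Dict[str, Tuple[float, float]],
                   full_key: str = "full") -> Dict[str, Tuple[float, float]]:
    """Return {variant: (SSIM drop, PSNR drop in dB)} relative to the full model."""
    full_ssim, full_psnr = table[full_key]
    return {
        name: (round(full_ssim - ssim, 4), round(full_psnr - psnr, 4))
        for name, (ssim, psnr) in table.items()
        if name != full_key
    }


print(ablation_drops(TABLE4))
# → {'w/o DFF': (0.0168, 0.8412), 'w/o DA': (0.0016, 0.0199)}
```

Read this way, removing DFF costs 0.0168 in mean SSIM and 0.8412 dB in mean PSNR, while removing DA costs 0.0016 and 0.0199 dB.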

Table 5. Mean PSNR and SSIM on the test set for different loss-function ablations

| Sub-losses used (of: artifact consistency loss, artifact mask loss, pixel-level L1 loss) | Mean SSIM | Mean PSNR (dB) |
|---|---|---|
| √ | 0.8726 | 31.2517 |
| √ | 0.8724 | 31.2734 |
| √ √ | 0.8733 | 31.2687 |
| √ √ | 0.8730 | 31.2873 |
| √ √ | 0.8728 | 31.2747 |
| √ √ √ | 0.8738 | 31.3091 |
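Table 5 ablates three sub-losses that are combined into one training objective. Their exact definitions are not given in this section, so the sketch below only illustrates the general pattern of a weighted sum of sub-losses with on/off switches, as used in such an ablation. `pixel_l1` assumes the denoised image is the LDCT input minus the estimated artifact; `artifact_mse` is a hypothetical placeholder for an artifact-level term and is not the paper's artifact consistency or artifact mask loss; all function names and the weights are illustrative assumptions.

```python
from typing import Callable, Dict, List

Image = List[List[float]]
# A sub-loss maps (ldct, ndct, estimated_artifact) to a scalar.
SubLoss = Callable[[Image, Image, Image], float]


def _flat(img: Image) -> List[float]:
    return [v for row in img for v in row]


def pixel_l1(ldct: Image, ndct: Image, est_artifact: Image) -> float:
    """Mean absolute error between (LDCT - estimated artifact) and the NDCT target."""
    denoised = [p - a for p, a in zip(_flat(ldct), _flat(est_artifact))]
    target = _flat(ndct)
    return sum(abs(d - t) for d, t in zip(denoised, target)) / len(target)


def artifact_mse(ldct: Image, ndct: Image, est_artifact: Image) -> float:
    """HYPOTHETICAL placeholder: MSE between estimated and reference artifact (LDCT - NDCT)."""
    ref_artifact = [p - q for p, q in zip(_flat(ldct), _flat(ndct))]
    est = _flat(est_artifact)
    return sum((e - r) ** 2 for e, r in zip(est, ref_artifact)) / len(est)


def total_loss(terms: Dict[str, SubLoss], weights: Dict[str, float],
               enabled: Dict[str, bool], ldct: Image, ndct: Image,
               est_artifact: Image) -> float:
    """Weighted sum of the enabled sub-losses; the switches mimic one ablation row."""
    return sum(weights[name] * fn(ldct, ndct, est_artifact)
               for name, fn in terms.items() if enabled.get(name, False))


terms = {"pixel_l1": pixel_l1, "artifact": artifact_mse}
weights = {"pixel_l1": 1.0, "artifact": 0.5}  # arbitrary illustrative weights
ldct, ndct, est = [[2.0, 4.0]], [[1.0, 2.0]], [[1.0, 1.0]]
print(total_loss(terms, weights, {"pixel_l1": True, "artifact": True}, ldct, ndct, est))  # → 0.75
```

Each row of an ablation table then corresponds to one `enabled` dictionary, with the network retrained under that objective.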

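Table 6. Training and test time of four denoising methods

| Denoising method | Training time (s) | Test time (s/image) | Iterations |
|---|---|---|---|
| BM3D | - | 1.2078 | - |
| RED-CNN | 2248.92 | 0.1041 | 100 |
| pix2pix | 31603.88 | 0.0679 | 200 |
| Proposed method | 85766.74 | 0.0147 | 100 |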
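The raw times in Table 6 are easier to compare once normalized. The sketch below converts them into training hours, seconds per iteration and test throughput in images per second. It assumes that the training time in the table was accumulated over the listed number of iterations; the hardware used is not stated in this section, and `TABLE6` and `normalize` are illustrative names.

```python
from typing import Dict

# Training time (s), test time (s per image) and iteration count copied from Table 6.
# BM3D is omitted because it has no training stage (test time 1.2078 s per image).
TABLE6 = {
    "RED-CNN": (2248.92, 0.1041, 100),
    "pix2pix": (31603.88, 0.0679, 200),
    "Proposed": (85766.74, 0.0147, 100),
}


def normalize(train_s: float, test_s_per_image: float, iterations: int) -> Dict[str, float]:
    """Convert one table row into hours, seconds per iteration and images per second."""
    return {
        "train_hours": train_s / 3600.0,
        "seconds_per_iteration": train_s / iterations,
        "test_images_per_second": 1.0 / test_s_per_image,
    }


for name, row in TABLE6.items():
    summary = normalize(*row)
    print(name, {k: round(v, 2) for k, v in summary.items()})
```

For the proposed method this gives roughly 23.8 h of training and about 858 s per iteration, but about 68 images/s at test time, compared with about 9.6 images/s for RED-CNN.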

