
Abstract:

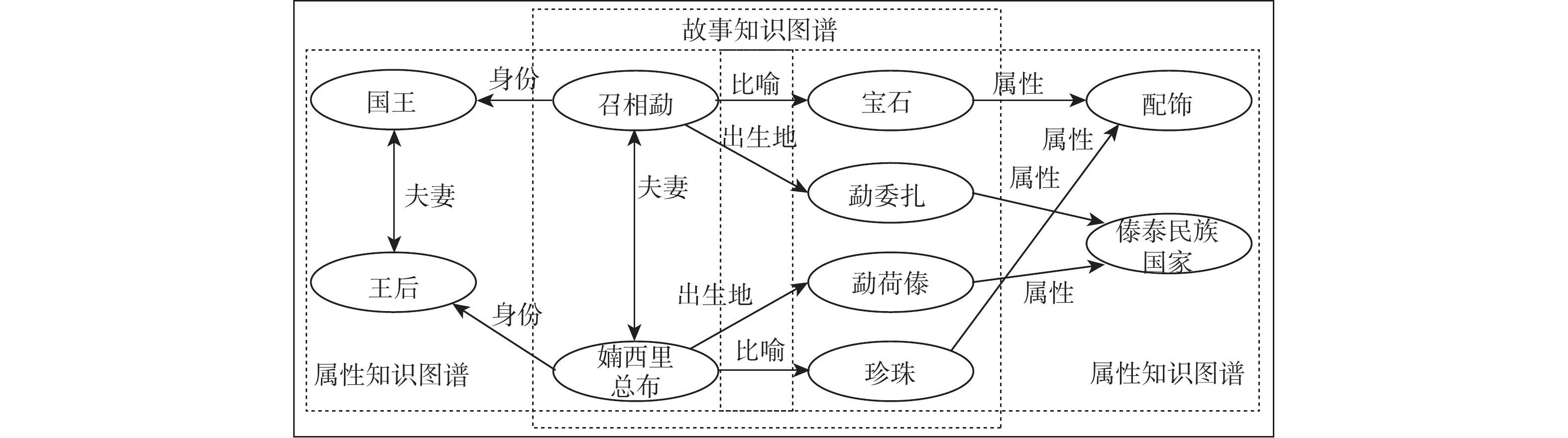

As a knowledge base for folk literature, the folklore knowledge graph (KG) shows promise for text understanding and knowledge services and has attracted wide attention. Folk literature is written in concise language, often in short sentences, and states only some of the relations among characters and things. In addition, a KG rarely covers all the knowledge in its domain, so it needs to be completed. Existing knowledge graph completion (KGC) methods do not make full use of the different neighborhood information of folklore entities or of the fine-grained semantics within relations. To address these problems, this paper proposes FolkKGC, a folklore KG completion model that fuses neighborhood information. First, a relation-aware attention gating mechanism fuses entity neighborhood information to obtain effective representations of folklore entities. Second, a relation learner based on cross-neighborhood similarity fuses the neighborhood information of relations to build fine-grained folklore relation representations. Finally, the relation representations are scored to complete the knowledge graph. A KG dataset is constructed from folk literature texts, and comparison and ablation experiments are conducted on it. FolkKGC achieves higher MRR and Hits@n than existing models, which verifies its effectiveness.
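The abstract describes the relation-aware attention gating only in words, so a minimal sketch may help fix the idea. The code below is an illustration under stated assumptions, not the paper's formulation: the dot-product attention score, the scalar sigmoid gate, and the names `gated_neighbor_fusion`, `gate_w`, and `gate_b` are all hypothetical choices made for this example.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    """Numerically stable softmax over a non-empty list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]


def gated_neighbor_fusion(entity, neighbors, task_relation, gate_w, gate_b=0.0):
    """Fuse an entity embedding with its neighborhood.

    entity        : embedding of the entity (list of floats)
    neighbors     : list of (relation_embedding, neighbor_embedding) pairs
    task_relation : embedding of the relation being completed
    gate_w, gate_b: parameters of a scalar gate (assumed form, learned in practice)

    Returns (fused_embedding, attention_weights, gate_value).
    """
    if not neighbors:
        # No neighborhood to fuse: keep the entity embedding unchanged.
        return list(entity), [], 0.0

    # Relation-aware attention: neighbors whose connecting relation is
    # similar to the task relation receive larger weights (assumed scoring).
    scores = [dot(task_relation, rel) for rel, _ in neighbors]
    weights = softmax(scores)

    # Attention-weighted sum of neighbor embeddings.
    dim = len(entity)
    context = [
        sum(w * nbr[i] for w, (_, nbr) in zip(weights, neighbors))
        for i in range(dim)
    ]

    # The gate decides how much neighborhood information to let through.
    gate = 1.0 / (1.0 + math.exp(-(dot(gate_w, context) + gate_b)))
    fused = [gate * c + (1.0 - gate) * e for c, e in zip(context, entity)]
    return fused, weights, gate


# Toy usage with 2-dimensional embeddings (values are arbitrary).
fused, weights, gate = gated_neighbor_fusion(
    entity=[1.0, 0.0],
    neighbors=[([1.0, 0.0], [0.0, 1.0]), ([0.0, 1.0], [1.0, 1.0])],
    task_relation=[1.0, 0.0],
    gate_w=[0.0, 0.0],
)
print(fused, weights, gate)
```

The gate lets the model fall back on the entity's own embedding when its neighborhood is sparse or noisy, which is the motivation usually given for gated aggregators in few-shot KGC.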

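Table 1. Example of folklore knowledge graph completion tasks

| Relation | Set | Head entity | Tail entity |
| --- | --- | --- | --- |
| $ {r_1} $ plays (演奏) | $ {S_{{r_1}}} $ | 俸改 | fairy flute (仙笛) |
| | $ {Q_{{r_1}}} $ | 杰罗 | harmonica (口琴)? |
| | | 荷花仙女 (Lotus Fairy) | twelve-string zither (十二弦琴)? |
| $ {r_2} $ mount (坐骑) | $ {S_{{r_2}}} $ | 十头王 (Ten-Headed King) | flying chariot (飞车) |
| | $ {Q_{{r_2}}} $ | 婻西里总布 | white elephant (白象)? |
| | | 撒赛歇 | dragon horse (龙马)? |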
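The task layout in Table 1 can be made concrete with a small data-structure sketch. It assumes that $S_r$ is the support set of known pairs and $Q_r$ the query set whose tail entities must be predicted, which is the usual few-shot KGC convention but is not spelled out here. The names `FewShotTask` and `build_task` are hypothetical, and the example simply takes the first K pairs as the support set rather than sampling them.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Pair = Tuple[str, str]  # (head entity, tail entity)


@dataclass
class FewShotTask:
    relation: str
    support: List[Pair]  # K reference pairs given to the model
    query: List[Pair]    # pairs whose tails are hidden at prediction time


def build_task(pairs_by_relation: Dict[str, List[Pair]], relation: str, k: int) -> FewShotTask:
    """Split the pairs of one relation into a K-shot support set and a query set."""
    pairs = pairs_by_relation[relation]
    if not 0 < k < len(pairs):
        raise ValueError("k must leave at least one support pair and one query pair")
    return FewShotTask(relation=relation, support=pairs[:k], query=pairs[k:])


# Entity pairs taken from Table 1; the query tails serve as gold answers.
pairs_by_relation = {
    "演奏": [("俸改", "仙笛"), ("杰罗", "口琴"), ("荷花仙女", "十二弦琴")],
    "坐骑": [("十头王", "飞车"), ("婻西里总布", "白象"), ("撒赛歇", "龙马")],
}

task = build_task(pairs_by_relation, "演奏", k=1)
print(task.support)
print(task.query)
```

With `k=1` this reproduces the 1-shot setting of the table: one known pair per relation and two queries to answer.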

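Table 2. Statistics of the FolkKG dataset

| Entities | Relations | Triples | Task relations | Training set | Validation set | Test set |
| --- | --- | --- | --- | --- | --- | --- |
| 1907 | 48 | 6106 | 40 | 28 | 8 | 4 |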

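Table 3. Results of comparative experiments

| Model category | Model | MRR 1-shot | MRR 3-shot | MRR 5-shot | Hits@10 1-shot | Hits@10 3-shot | Hits@10 5-shot | Hits@5 1-shot | Hits@5 3-shot | Hits@5 5-shot | Hits@1 1-shot | Hits@1 3-shot | Hits@1 5-shot |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Metric-learning FKGC models | GMatching[16] | 0.193 | - | - | 0.350 | - | - | 0.272 | - | - | 0.116 | - | - |
| | FAAN[17] | 0.227 | 0.302 | 0.333 | 0.411 | 0.463 | 0.511 | 0.319 | 0.388 | 0.424 | 0.137 | 0.218 | 0.245 |
| Meta-learning FKGC models | MetaR[19] | 0.207 | - | 0.241 | 0.406 | - | 0.458 | 0.313 | - | 0.355 | 0.101 | - | 0.141 |
| | GANA[20] | 0.331 | 0.354 | 0.382 | 0.571 | 0.577 | 0.653 | 0.448 | 0.464 | 0.536 | 0.206 | 0.237 | 0.256 |
| | RSCL[21] | 0.338 | - | 0.395 | 0.516 | - | 0.532 | 0.412 | - | 0.490 | 0.215 | - | 0.271 |
| | HiRe[22] | 0.341 | - | 0.435 | 0.538 | - | 0.627 | 0.453 | - | 0.608 | 0.219 | - | 0.311 |
| | NP-FKGC[23] | 0.343 | 0.360 | 0.418 | 0.557 | 0.569 | 0.593 | 0.460 | 0.476 | 0.574 | 0.225 | 0.244 | 0.383 |
| | FolkKGC | 0.358 | 0.392 | 0.463 | 0.595 | 0.614 | 0.694 | 0.498 | 0.506 | 0.646 | 0.240 | 0.272 | 0.394 |

Note: Bold numbers indicate the best results and underlined numbers the second-best results; "-" indicates that the model does not support the current K-shot setting.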

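Table 4. Results of ablation experiments

| Model | Module 1 | Module 2 | Module 3 | MRR 1-shot | MRR 3-shot | MRR 5-shot | Hits@10 1-shot | Hits@10 3-shot | Hits@10 5-shot | Hits@5 1-shot | Hits@5 3-shot | Hits@5 5-shot | Hits@1 1-shot | Hits@1 3-shot | Hits@1 5-shot |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| FolkKGC | √ | √ | √ | 0.358 | 0.392 | 0.463 | 0.595 | 0.614 | 0.694 | 0.498 | 0.506 | 0.646 | 0.240 | 0.272 | 0.394 |
| w/o ENA | × | √ | √ | 0.251 | 0.277 | 0.339 | 0.490 | 0.512 | 0.584 | 0.369 | 0.387 | 0.481 | 0.139 | 0.174 | 0.203 |
| GAT ENA | ⇆ | √ | √ | 0.306 | 0.315 | 0.380 | 0.545 | 0.579 | 0.657 | 0.428 | 0.446 | 0.546 | 0.182 | 0.194 | 0.260 |
| w/o RNL | √ | × | √ | 0.222 | 0.273 | 0.394 | 0.474 | 0.495 | 0.612 | 0.328 | 0.370 | 0.501 | 0.125 | 0.131 | 0.229 |
| GMatc SPL | √ | √ | ⇆ | 0.296 | 0.293 | 0.356 | 0.502 | 0.535 | 0.573 | 0.410 | 0.421 | 0.528 | 0.155 | 0.181 | 0.244 |
| GANA SPL | √ | √ | ⇆ | 0.344 | 0.352 | 0.431 | 0.573 | 0.596 | 0.662 | 0.460 | 0.489 | 0.593 | 0.212 | 0.224 | 0.324 |
| w/o ρ | √ | √ | ⇆ | 0.358 | 0.356 | 0.412 | 0.595 | 0.592 | 0.641 | 0.498 | 0.473 | 0.553 | 0.240 | 0.213 | 0.291 |

Note: Bold numbers indicate the best results and underlined numbers the second-best results; "-" indicates that the model does not support the current K-shot setting.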

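Table 5. Results of experiments with pre-embedding models

| Model category | Model | MRR 1-shot | MRR 3-shot | MRR 5-shot | Hits@10 1-shot | Hits@10 3-shot | Hits@10 5-shot | Hits@5 1-shot | Hits@5 3-shot | Hits@5 5-shot | Hits@1 1-shot | Hits@1 3-shot | Hits@1 5-shot |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Pre-embedding models | TransE[6] | 0.068 | 0.102 | 0.107 | 0.172 | 0.209 | 0.228 | 0.084 | 0.129 | 0.135 | 0.028 | 0.050 | 0.056 |
| | TransH[7] | 0.117 | 0.175 | 0.229 | 0.201 | 0.368 | 0.372 | 0.141 | 0.267 | 0.285 | 0.084 | 0.103 | 0.115 |
| | DistMult[11] | 0.113 | 0.139 | 0.150 | 0.208 | 0.216 | 0.236 | 0.133 | 0.172 | 0.190 | 0.073 | 0.101 | 0.099 |
| | ComplEx[12] | 0.124 | 0.168 | 0.187 | 0.231 | 0.274 | 0.292 | 0.184 | 0.211 | 0.212 | 0.092 | 0.133 | 0.105 |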
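The result tables report MRR and Hits@n. As a reminder of how these ranking metrics are usually computed, here is a minimal sketch. It assumes that each query yields the 1-based rank of the correct tail entity among the candidates; the function names and the example ranks are made up for illustration and are not taken from the experiments.

```python
from typing import Sequence


def mean_reciprocal_rank(ranks: Sequence[int]) -> float:
    """Average of 1/rank over all queries (ranks are 1-based)."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_n(ranks: Sequence[int], n: int) -> float:
    """Fraction of queries whose correct entity is ranked in the top n."""
    return sum(1 for r in ranks if r <= n) / len(ranks)


# Hypothetical ranks of the correct tail entity for four queries.
ranks = [1, 3, 12, 5]
print(mean_reciprocal_rank(ranks))
print(hits_at_n(ranks, 10), hits_at_n(ranks, 5), hits_at_n(ranks, 1))
```

Both metrics lie in [0, 1] and higher is better. MRR rewards placing the correct entity near the top of the ranking, while Hits@n only checks whether it falls within the top n.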

[1] EDELMANN A, WOLFF T, MONTAGNE D, et al. Computational social science and sociology[J]. Annual Review of Sociology, 2020, 46(1): 61-81.

[2] ZUO Y T. Treasury of Chinese folk literature: Collection of epics. Yunnan volume (1)[M]. Beijing: China Federation of Literary and Art Publishing House, 2022: 3-7 (in Chinese).

[3] JI S X, PAN S R, CAMBRIA E, et al. A survey on knowledge graphs: representation, acquisition, and applications[J]. IEEE Transactions on Neural Networks and Learning Systems, 2022, 33(2): 494-514.

[4] GUAN S P, JIN X L, JIA Y T, et al. Knowledge reasoning over knowledge graph: a survey[J]. Journal of Software, 2018, 29(10): 2966-2994 (in Chinese).

[5] LIU Z Y, SUN M S, LIN Y K, et al. Knowledge representation learning: a review[J]. Journal of Computer Research and Development, 2016, 53(2): 247-261 (in Chinese).

[6] BORDES A, USUNIER N, GARCIA-DURÁN A, et al. Translating embeddings for modeling multi-relational data[C]//Proceedings of the 27th Conference on Neural Information Processing Systems. Stateline: NeurIPS, 2013: 2787-2795.

[7] WANG Z, ZHANG J W, FENG J L, et al. Knowledge graph embedding by translating on hyperplanes[C]//Proceedings of the 28th AAAI Conference on Artificial Intelligence. Palo Alto: AAAI Press, 2014, 28(1): 1112-1119.

[8] LIN Y K, LIU Z Y, SUN M S, et al. Learning entity and relation embeddings for knowledge graph completion[C]//Proceedings of the 29th AAAI Conference on Artificial Intelligence. Austin: AAAI, 2015, 29(1): 2181-2187.

[9] SUN Z Q, DENG Z H, NIE J Y, et al. RotatE: knowledge graph embedding by relational rotation in complex space[C]//Proceedings of the 7th International Conference on Learning Representations. New Orleans: Ithaca, 2019: 1-18.

[10] NICKEL M, TRESP V, KRIEGEL H. A three-way model for collective learning on multi-relational data[C]//Proceedings of the 28th International Conference on Machine Learning. Williamstown: ACM, 2011: 809-816.

[11] YANG B S, YIH W T, HE X D, et al. Embedding entities and relations for learning and inference in knowledge bases[C]//International Conference on Learning Representations. San Diego: ICLR, 2015.

[12] TROUILLON T, WELBL J, RIEDEL S, et al. Complex embeddings for simple link prediction[C]//Proceedings of the 33rd International Conference on Machine Learning. New York: ACM, 2016: 2071-2080.

[13] TANG R C, XU Q C, TANG W Y, et al. Multilingual knowledge graph completion without aligned entity pairs[J]. Journal of Beijing University of Aeronautics and Astronautics, 2026, 52(1): 252-259 (in Chinese).

[14] JI X, WU T X, YANG Z W, et al. Power equipment defect prediction based on temporal knowledge graph[J]. Journal of Beijing University of Aeronautics and Astronautics, 2024, 50(10): 3131-3138 (in Chinese).

[15] MA H, WANG D Z. A survey on few-shot knowledge graph completion with structural and commonsense knowledge[J]. (2023-01-03)[2023-09-07]. https://doi.org/10.48550/arXiv.2301.01172.

[16] XIONG W H, YU M, CHANG S Y, et al. One-shot relational learning for knowledge graphs[C]//Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. Stroudsburg: ACL, 2018: 1980-1990.

[17] SHENG J W, GUO S, CHEN Z Y, et al. Adaptive attentional network for few-shot knowledge graph completion[C]//Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing. Stroudsburg: ACL, 2020: 1681-1691.

[18] VASWANI A, SHAZEER N, PARMAR N, et al. Attention is all you need[C]//Proceedings of the 31st Conference on Neural Information Processing Systems. Long Beach: NeurIPS, 2017: 6000-6010.

[19] CHEN M Y, ZHANG W, ZHANG W, et al. Meta relational learning for few-shot link prediction in knowledge graphs[C]//Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing. Stroudsburg: ACL, 2019: 4216-4225.

[20] NIU G L, LI Y, TANG C G, et al. Relational learning with gated and attentive neighbor aggregator for few-shot knowledge graph completion[C]//Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval. New York: ACM, 2021: 213-222.

[21] LI Y, YU K, ZHANG Y, et al. Learning relation-specific representations for few-shot knowledge graph completion[J]. (2023-03-22)[2023-09-20]. https://doi.org/10.48550/arXiv.2203.11639.

[22] WU H, YIN J, RAJARATNAM B, et al. Hierarchical relational learning for few-shot knowledge graph completion[C]//Proceedings of the 11th International Conference on Learning Representations. Virtual: Ithaca, 2022: 1-10.

[23] LUO L H, LI Y F, HAFFARI G, et al. Normalizing flow-based neural process for few-shot knowledge graph completion[C]//Proceedings of the 46th International ACM SIGIR Conference on Research and Development in Information Retrieval. New York: ACM, 2023: 900-910.

[24] CHUNG J, GULCEHRE C, CHO K, et al. Empirical evaluation of gated recurrent neural networks on sequence modeling[C]//Proceedings of the Conference on Neural Information Processing Systems. Montréal: NeurIPS, 2014.

[25] VELICKOVIC P, CUCURULL G, CASANOVA A, et al. Graph attention networks[C]//Proceedings of the 6th International Conference on Learning Representations. Ithaca: ICLR, 2018: 1-12.
