Visual-inertial navigation method based on semantic segmentation and geometric constraints in dynamic environment


摘要:

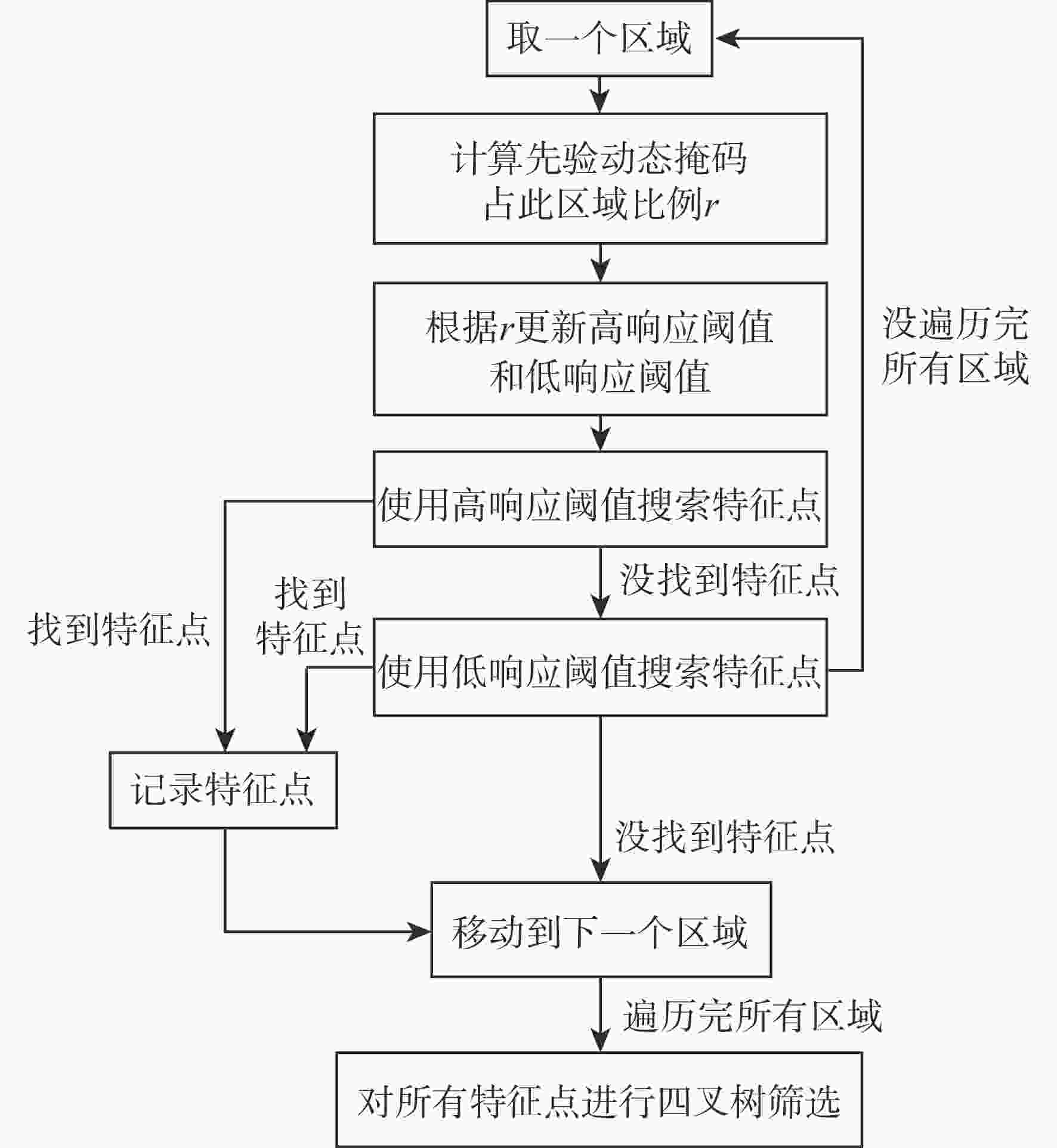

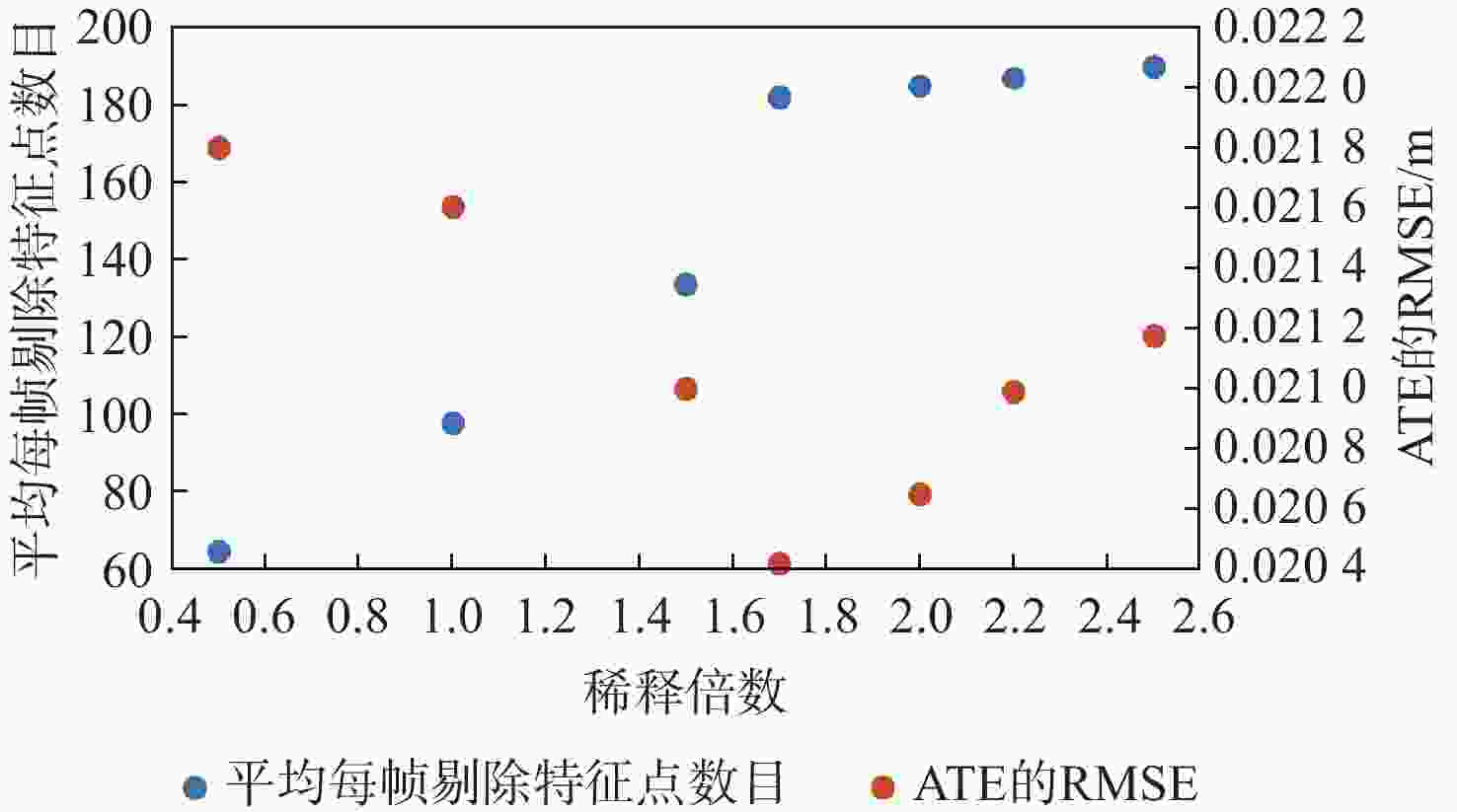

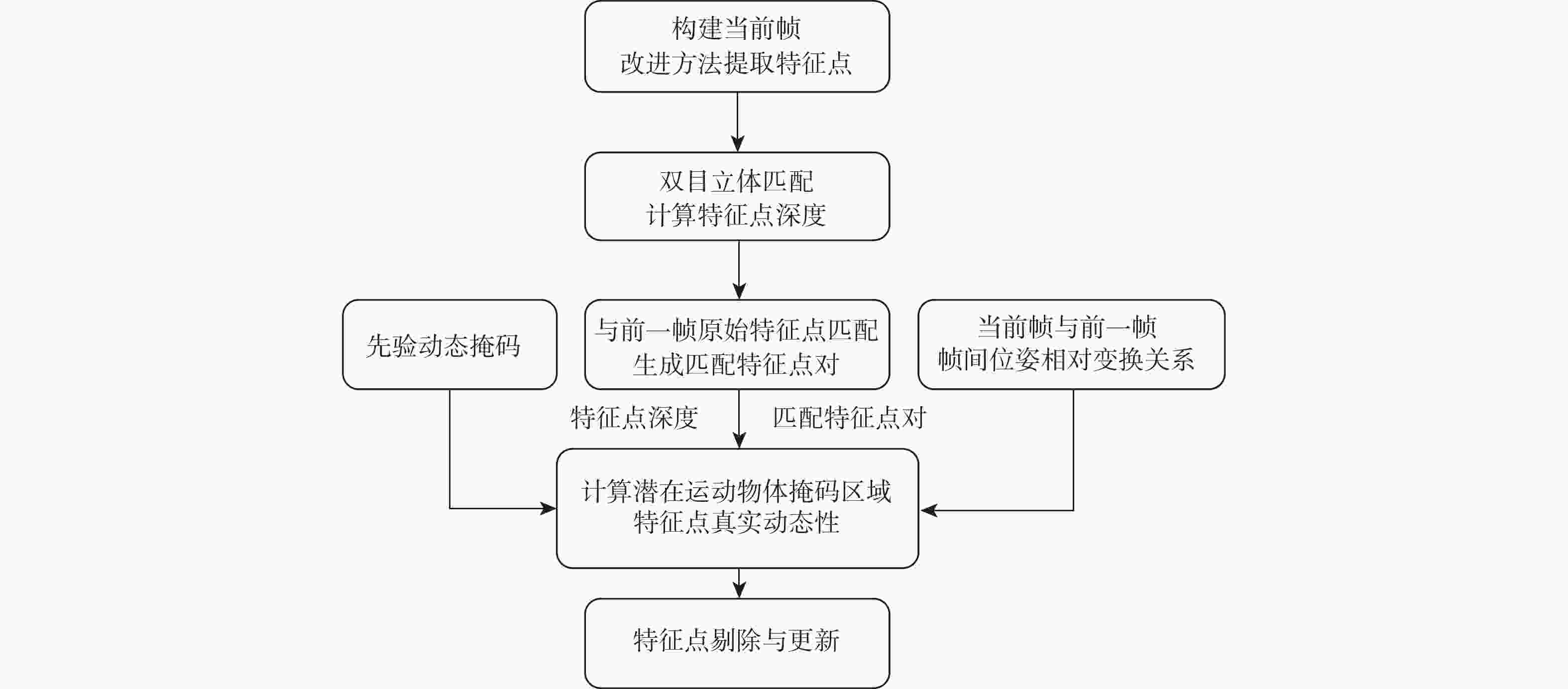

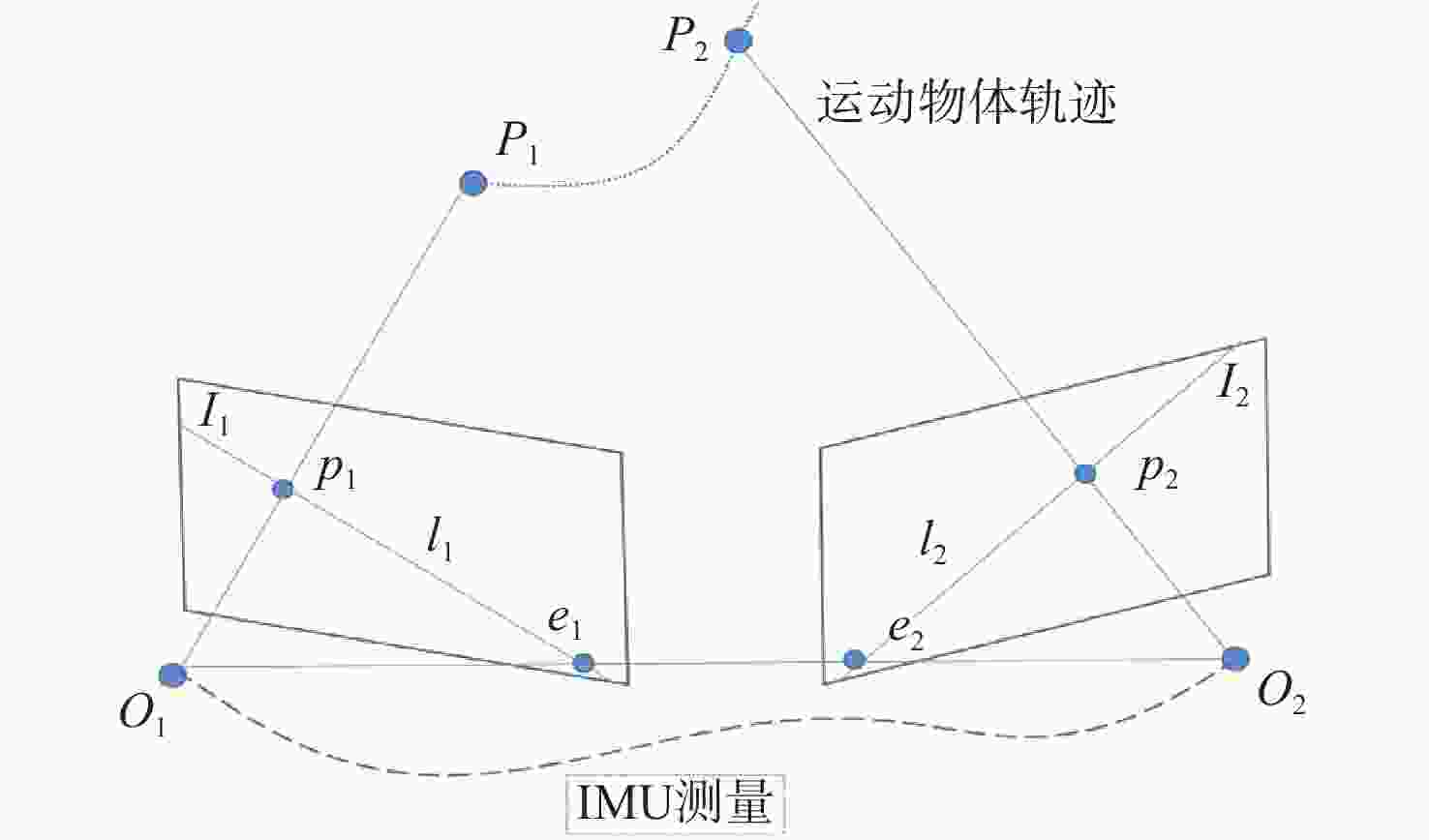

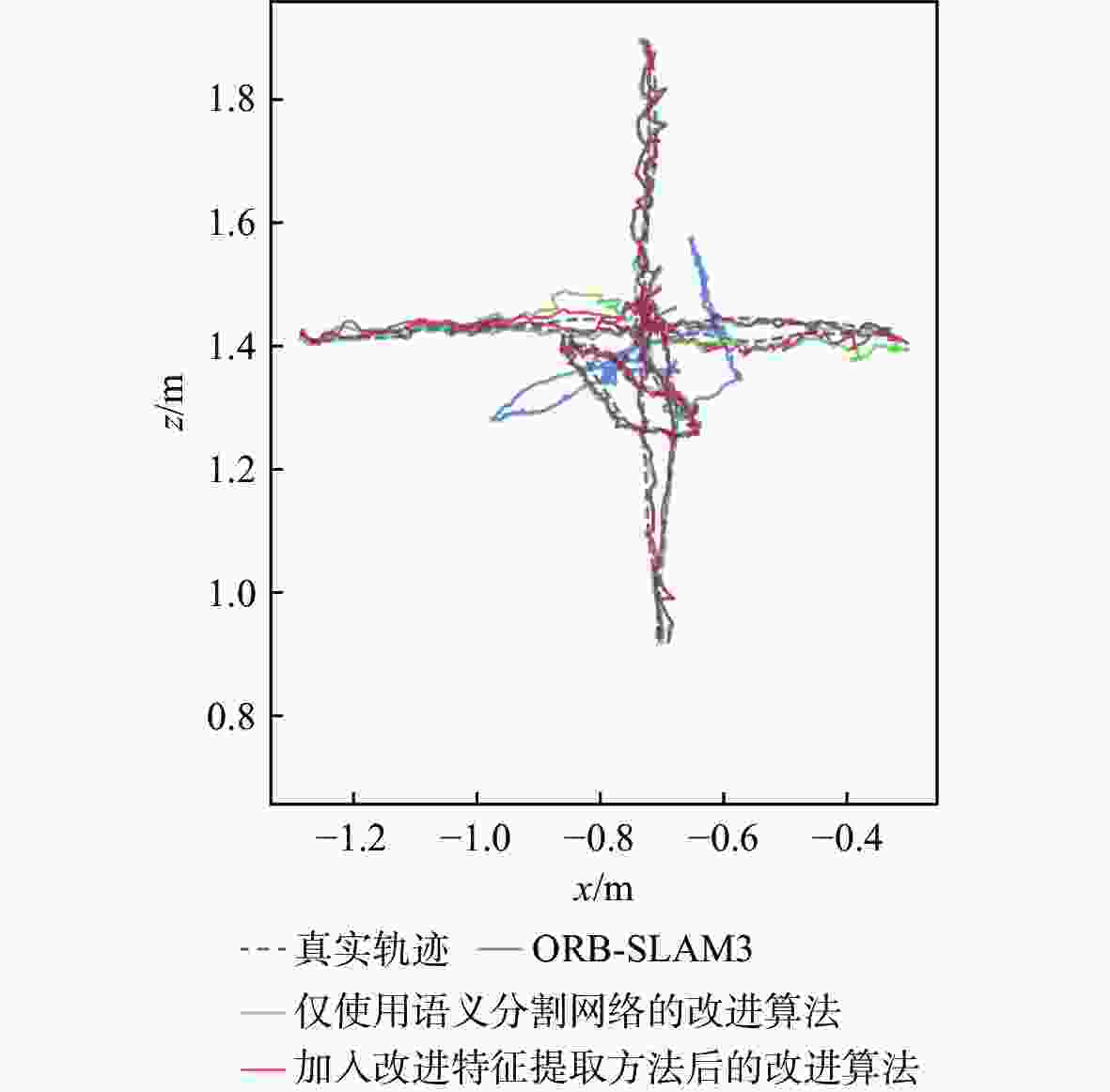

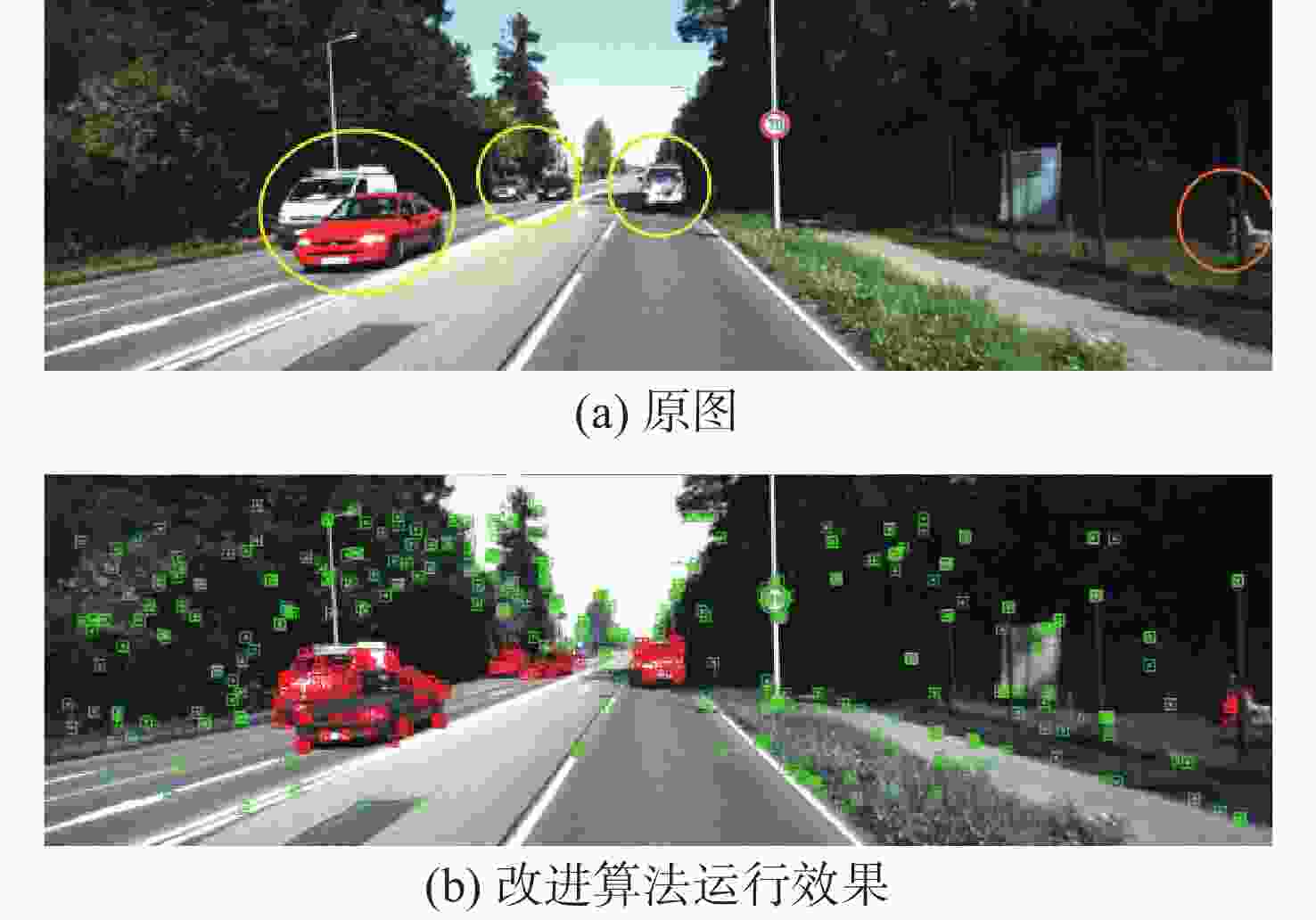

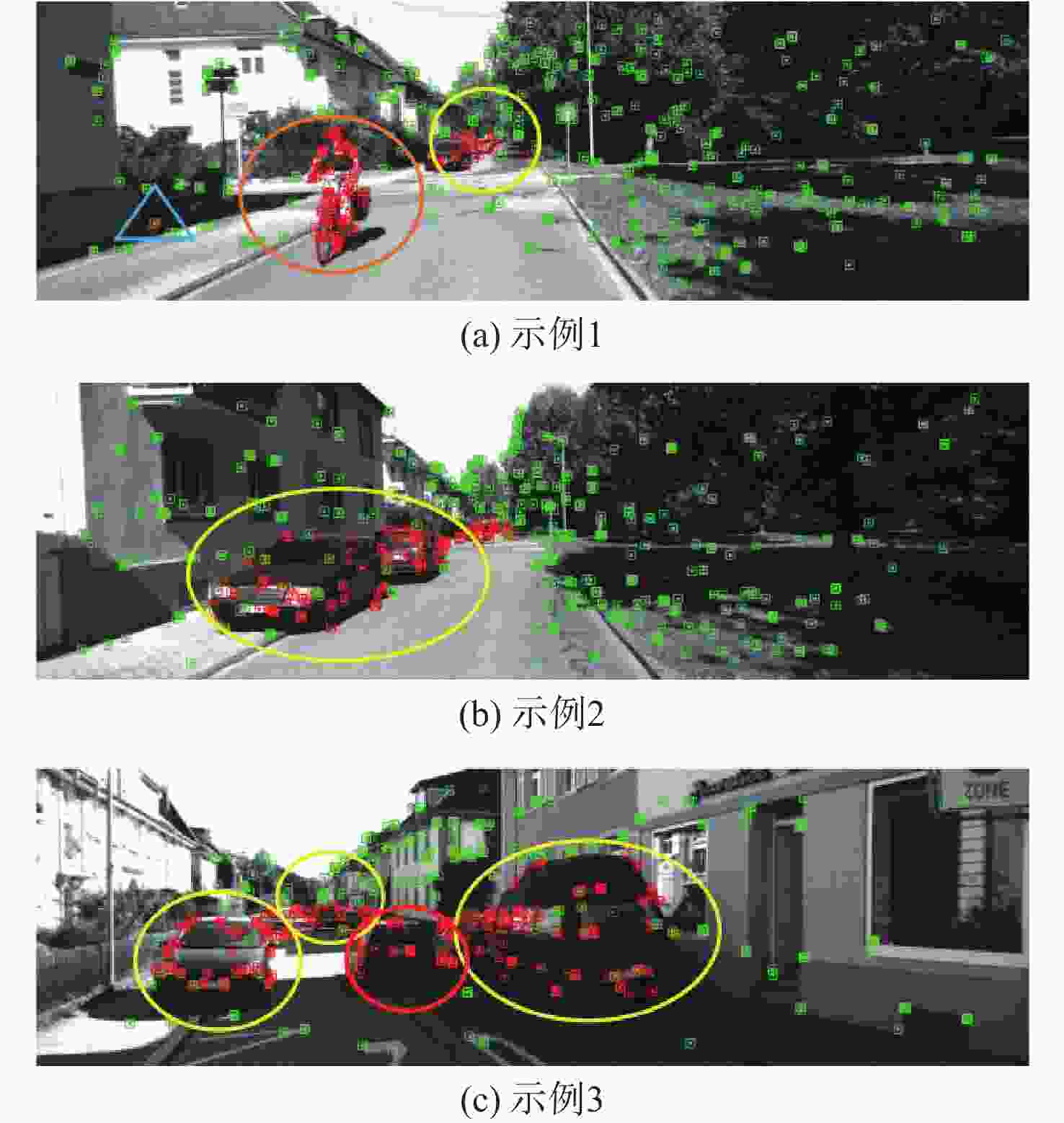

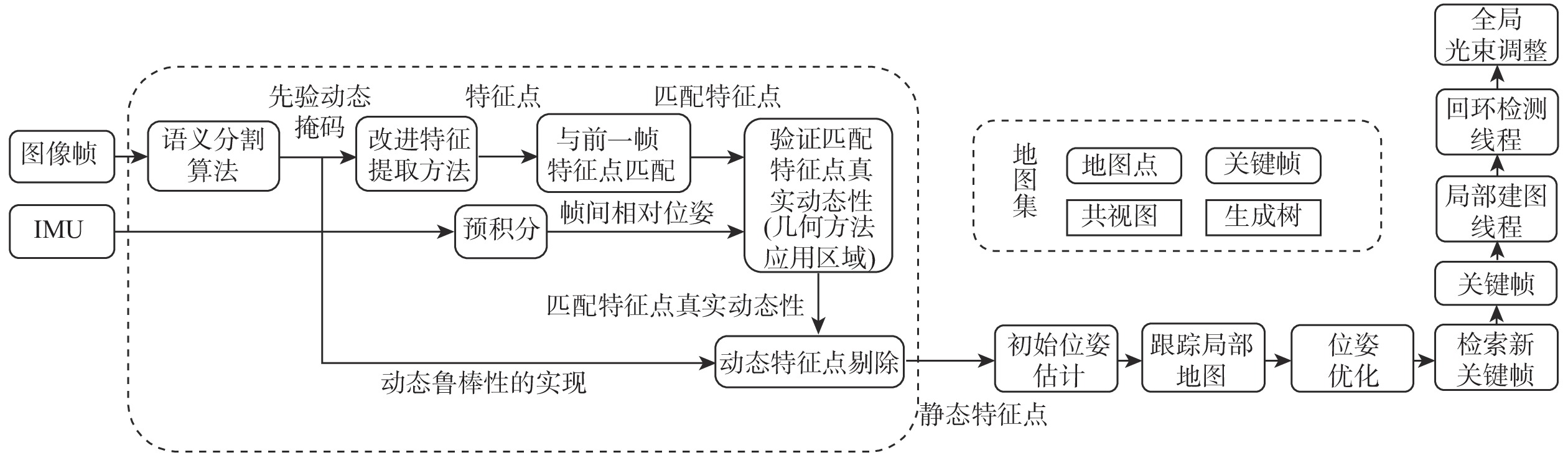

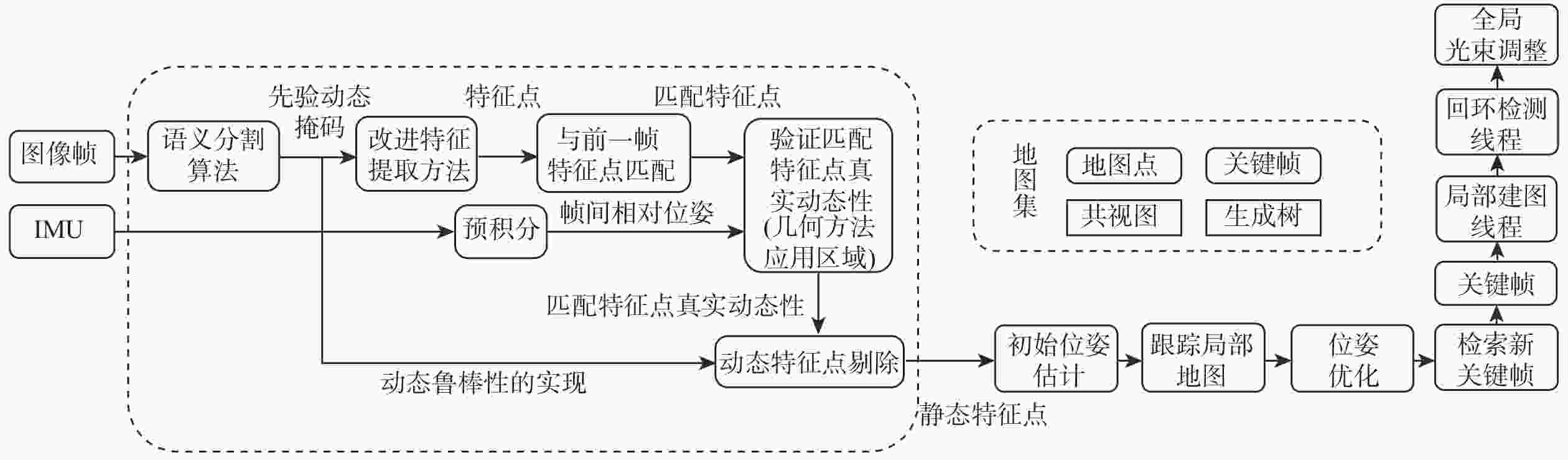

在实际同时定位与地图构建(SLAM)应用场景中,针对大量运动物体的成像特征点参与特征追踪进而降低算法精度和鲁棒性的问题,以及传统的以剔除动态特征为策略的动态SLAM方案中存在剩余静态特征不足而影响SLAM效果的问题,提出一种基于语义分割与几何约束的动态视觉/惯性组合导航方法。使用语义分割网络及不同类别物体的动态性信任程度生成先验动态掩码,并利用改进的抑制先验动态特征方法提取特征点,进而利用惯性测量单元(IMU)预积分分量结合几何约束技术判断特征点的真实动态性,并制定特征点剔除策略进行剔除,最终使用剩余静态特征点进行追踪与定位。相比于ORB-SLAM3,所提算法在室内动态场景数据集TUM下,定位精度平均提升了73.05%,在室外动态场景数据集KITTI下,定位精度平均提升了19.85%;与传统的动态SLAM算法对比,精度也更优。

Abstract:In the actual simultaneous localization and mapping (SLAM) application scenario, in order to solve the problem that a large number of imaging feature points of moving objects participate in feature tracking, which reduces the accuracy and robustness of the algorithm, as well as the problem that the traditional dynamic SLAM scheme with the strategy of eliminating dynamic features has insufficient residual static features and affects the SLAM effect, a dynamic vision-inertial integrated navigation method based on semantic segmentation and geometric constraints is proposed. A priori dynamic masks are created using the semantic segmentation network and the dynamic trust degree of various object types. Feature points are then extracted using an improved method of suppressing prior dynamic features. The real dynamic of feature points is then assessed using inertial measurement unit (IMU) pre-integration in conjunction with geometric constraint technology, and a feature point elimination strategy is developed for elimination. Finally, the remaining static feature points are used for tracking and positioning. Compared with the ORB-SLAM3, the positioning accuracy of the algorithm is improved by 73.05% on average in the indoor dynamic scene dataset TUM, and 19.85% in the outdoor dynamic scene dataset KITTI. Additionally, the accuracy is higher than that of the conventional dynamic SLAM approach.
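The two filtering stages named in the abstract (a prior dynamic mask built from per-class trust degrees, then a geometric check driven by an IMU-predicted pose) can be illustrated with a minimal sketch. Several things here are assumptions and not taken from the source: the class IDs, the trust values, the 0.5 threshold, and the choice of epipolar distance as the geometric constraint, which the abstract does not specify. The names `prior_dynamic_mask` and `epipolar_distance` are hypothetical.

```python
import numpy as np

# Hypothetical per-class dynamic trust degrees in [0, 1].
# These values are illustrative assumptions, not the paper's settings.
CLASS_TRUST = {0: 0.0,   # background
               1: 0.9,   # person
               2: 0.6}   # car


def prior_dynamic_mask(labels, class_trust, threshold=0.5):
    """Boolean mask, True where the class trust degree exceeds the threshold."""
    trust_map = np.zeros(labels.shape, dtype=float)
    for cls, trust in class_trust.items():
        trust_map[labels == cls] = trust
    return trust_map > threshold


def skew(v):
    """Skew-symmetric matrix so that skew(v) @ w equals the cross product v x w."""
    return np.array([[0.0, -v[2], v[1]],
                     [v[2], 0.0, -v[0]],
                     [-v[1], v[0], 0.0]])


def epipolar_distance(p1, p2, K, R, t):
    """Pixel distance from p2 to the epipolar line induced by p1.

    R, t map frame-1 coordinates to frame 2 (X2 = R @ X1 + t). In this
    sketch they stand in for a relative pose predicted from IMU
    pre-integration (an assumption about how the IMU term is used).
    """
    K_inv = np.linalg.inv(K)
    F = K_inv.T @ skew(t) @ R @ K_inv          # fundamental matrix
    x1 = np.array([p1[0], p1[1], 1.0])
    x2 = np.array([p2[0], p2[1], 1.0])
    line = F @ x1                              # epipolar line in image 2
    return abs(x2 @ line) / np.hypot(line[0], line[1])
```

In such a scheme, a feature inside the prior mask whose epipolar distance stays below a chosen pixel threshold could be kept as static, while one that exceeds it would be rejected as truly dynamic.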


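Table 1. Comparison of absolute trajectory errors of the algorithms on the TUM dataset

| Sequence | STD/m: ORB-SLAM3 (RGB-D mode) | STD/m: improved algorithm using only the semantic segmentation network | STD/m: improved algorithm with the improved feature extraction method | RMSE/m: ORB-SLAM3 (RGB-D mode) | RMSE/m: improved algorithm using only the semantic segmentation network | RMSE/m: improved algorithm with the improved feature extraction method | RMSE improvement rate 1/% | RMSE improvement rate 2/% |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| walking_xyz | 0.1146 | 0.0099 | 0.0083 | 0.2704 | 0.0223 | 0.0204 | 8.52 | 92.46 |
| walking_rpy | 0.0785 | 0.0350 | 0.0287 | 0.1608 | 0.0541 | 0.0487 | 9.98 | 69.71 |
| walking_halfsphere | 0.1658 | 0.0406 | 0.0331 | 0.2913 | 0.0548 | 0.0467 | 14.78 | 83.97 |
| walking_static | 0.0086 | 0.0033 | 0.0032 | 0.0152 | 0.0088 | 0.0082 | 6.82 | 46.05 |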
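As a reminder of what the STD and RMSE columns of an absolute trajectory error (ATE) table summarize, the following minimal sketch computes both statistics from per-pose translational errors. It assumes the estimated and ground-truth positions are already time-associated and aligned in a common frame, and it uses the population standard deviation; the source does not state which alignment or standard-deviation convention was used. `ate_stats` is a hypothetical helper name.

```python
import numpy as np


def ate_stats(est_xyz, gt_xyz):
    """Return (RMSE, STD) in metres of the per-pose translational error.

    Assumes both inputs are N x 3 arrays of associated, pre-aligned positions.
    """
    diff = np.asarray(est_xyz, dtype=float) - np.asarray(gt_xyz, dtype=float)
    err = np.linalg.norm(diff, axis=1)          # Euclidean error per pose
    rmse = float(np.sqrt(np.mean(err ** 2)))    # root mean square error
    std = float(np.std(err))                    # population standard deviation
    return rmse, std
```

RMSE penalizes large excursions more heavily than a mean would, which is why it is the figure usually quoted when comparing trajectories in dynamic scenes.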

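Table 2. Comparison of relative pose errors of the algorithms on the TUM dataset

| Sequence | STD/m: ORB-SLAM3 (RGB-D mode) | STD/m: improved algorithm using only the semantic segmentation network | STD/m: improved algorithm with the improved feature extraction method | RMSE/m: ORB-SLAM3 (RGB-D mode) | RMSE/m: improved algorithm using only the semantic segmentation network | RMSE/m: improved algorithm with the improved feature extraction method | RMSE improvement rate 1/% | RMSE improvement rate 2/% |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| walking_xyz | 0.0089 | 0.0091 | 0.0088 | 0.0170 | 0.0156 | 0.0153 | 1.92 | 10.00 |
| walking_rpy | 0.0303 | 0.0271 | 0.0230 | 0.0357 | 0.0341 | 0.0301 | 11.73 | 15.69 |
| walking_halfsphere | 0.0177 | 0.0151 | 0.0102 | 0.0240 | 0.0219 | 0.0188 | 14.16 | 21.67 |
| walking_static | 0.0125 | 0.0039 | 0.0037 | 0.0152 | 0.0074 | 0.0072 | 2.70 | 52.63 |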

Table 3. RMSE comparison of ATE of the improved algorithm and other dynamic SLAM algorithms on the TUM dataset (unit: m)

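Table 4. Comparison of absolute trajectory errors of the algorithms on the KITTI dataset

| Sequence | STD/m: ORB-SLAM3 (stereo visual-inertial mode) | STD/m: dynamic SLAM scheme without the geometric method | STD/m: dynamic SLAM algorithm based on semantic segmentation and geometric constraints | RMSE/m: ORB-SLAM3 (stereo visual-inertial mode) | RMSE/m: dynamic SLAM scheme without the geometric method | RMSE/m: dynamic SLAM algorithm based on semantic segmentation and geometric constraints | RMSE improvement rate 1/% | RMSE improvement rate 2/% |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 01 | 1.12 | 1.1455 | 0.9417 | 2.6673 | 2.5815 | 1.9759 | 23.46 | 25.92 |
| 04 | 0.1073 | 0.0558 | 0.0534 | 0.2283 | 0.1503 | 0.1469 | 2.26 | 35.65 |
| 07 | 0.5029 | 0.4991 | 0.4583 | 0.8926 | 0.9244 | 0.8583 | 7.15 | 3.84 |
| 09 | 0.5943 | 0.9086 | 0.5362 | 1.3086 | 1.8222 | 1.1254 | 38.24 | 14.00 |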
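The page does not define the two improvement-rate columns. The tabulated values are, however, consistent with a relative RMSE reduction: rate 1 measured against the intermediate variant and rate 2 against ORB-SLAM3. The sketch below works through that reading (an inference, not a stated definition) using the ATE RMSE values from Tables 1 and 4. The names `improvement_rate` and `mean_rate_vs_orbslam3` are hypothetical.

```python
def improvement_rate(baseline_rmse, improved_rmse):
    """Relative RMSE reduction in percent (assumed definition)."""
    return (baseline_rmse - improved_rmse) / baseline_rmse * 100.0


# ATE RMSE/m per sequence: (ORB-SLAM3, intermediate variant, full method).
TUM_ATE = {
    "walking_xyz": (0.2704, 0.0223, 0.0204),
    "walking_rpy": (0.1608, 0.0541, 0.0487),
    "walking_halfsphere": (0.2913, 0.0548, 0.0467),
    "walking_static": (0.0152, 0.0088, 0.0082),
}
KITTI_ATE = {
    "01": (2.6673, 2.5815, 1.9759),
    "04": (0.2283, 0.1503, 0.1469),
    "07": (0.8926, 0.9244, 0.8583),
    "09": (1.3086, 1.8222, 1.1254),
}


def mean_rate_vs_orbslam3(table):
    """Average of improvement rate 2 (full method vs. ORB-SLAM3) over sequences."""
    rates = [improvement_rate(orb, full) for orb, _, full in table.values()]
    return sum(rates) / len(rates)


if __name__ == "__main__":
    print(round(mean_rate_vs_orbslam3(TUM_ATE), 2))
    print(round(mean_rate_vs_orbslam3(KITTI_ATE), 2))
```

Under this reading, the per-sequence averages reproduce the 73.05% (TUM) and 19.85% (KITTI) figures quoted in the abstract, which suggests those headline numbers are means of the ATE rate 2 column.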

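Table 5. Comparison of relative pose errors of the algorithms on the KITTI dataset

| Sequence | STD/m: ORB-SLAM3 (stereo visual-inertial mode) | STD/m: dynamic SLAM scheme without the geometric method | STD/m: dynamic SLAM algorithm based on semantic segmentation and geometric constraints | RMSE/m: ORB-SLAM3 (stereo visual-inertial mode) | RMSE/m: dynamic SLAM scheme without the geometric method | RMSE/m: dynamic SLAM algorithm based on semantic segmentation and geometric constraints | RMSE improvement rate 1/% | RMSE improvement rate 2/% |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 01 | 0.0429 | 0.03 | 0.0242 | 0.0662 | 0.051 | 0.0457 | 10.39 | 30.97 |
| 04 | 0.0119 | 0.0115 | 0.0076 | 0.0224 | 0.0191 | 0.0156 | 18.32 | 30.36 |
| 07 | 0.01 | 0.0095 | 0.0085 | 0.0194 | 0.0176 | 0.0158 | 10.23 | 18.56 |
| 09 | 0.0114 | 0.0092 | 0.0091 | 0.0223 | 0.0198 | 0.0191 | 3.54 | 14.35 |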

Table 6. RMSE comparison of ATE of the improved algorithm and other dynamic SLAM algorithms on the KITTI dataset


