Intelligent representation method of shale pore structure based on semantic segmentation

-

摘要:

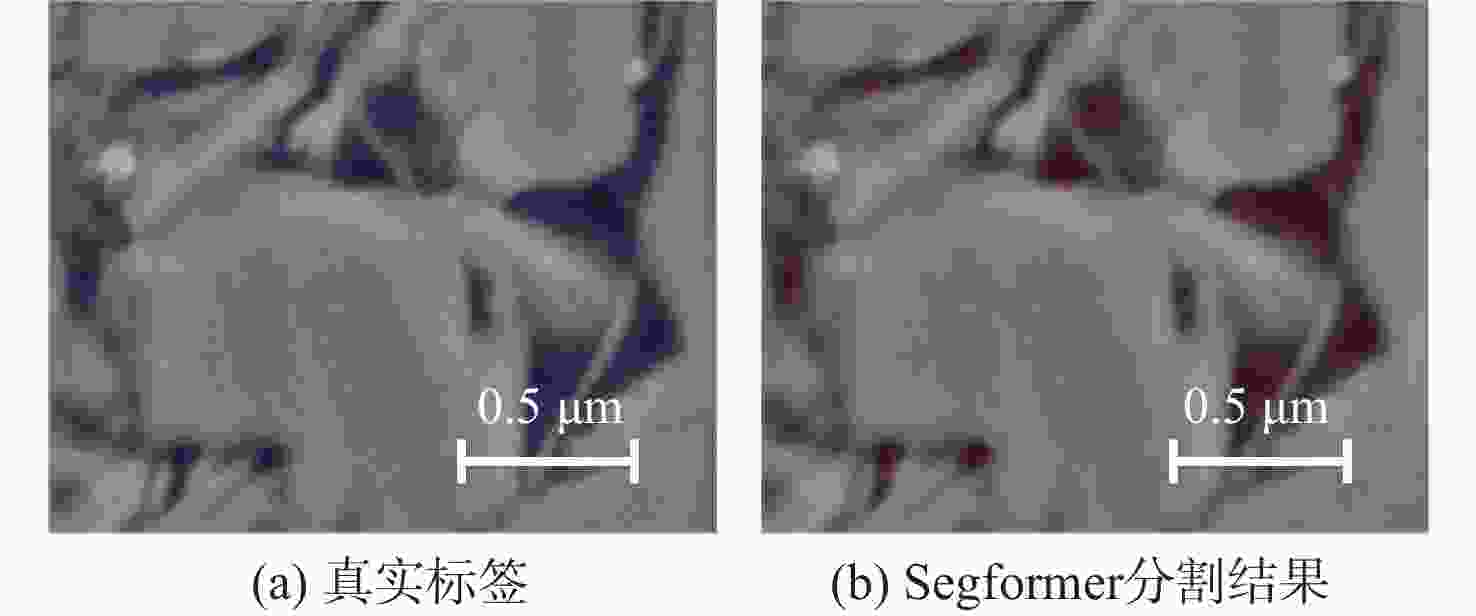

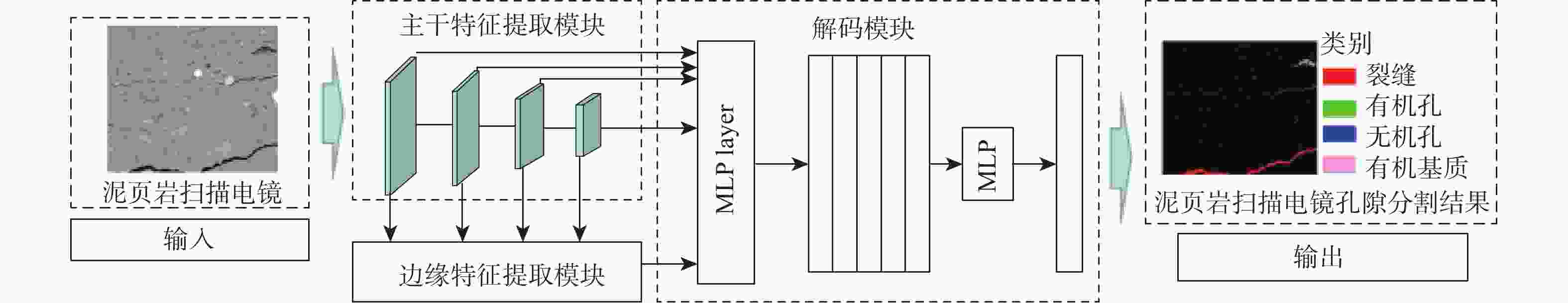

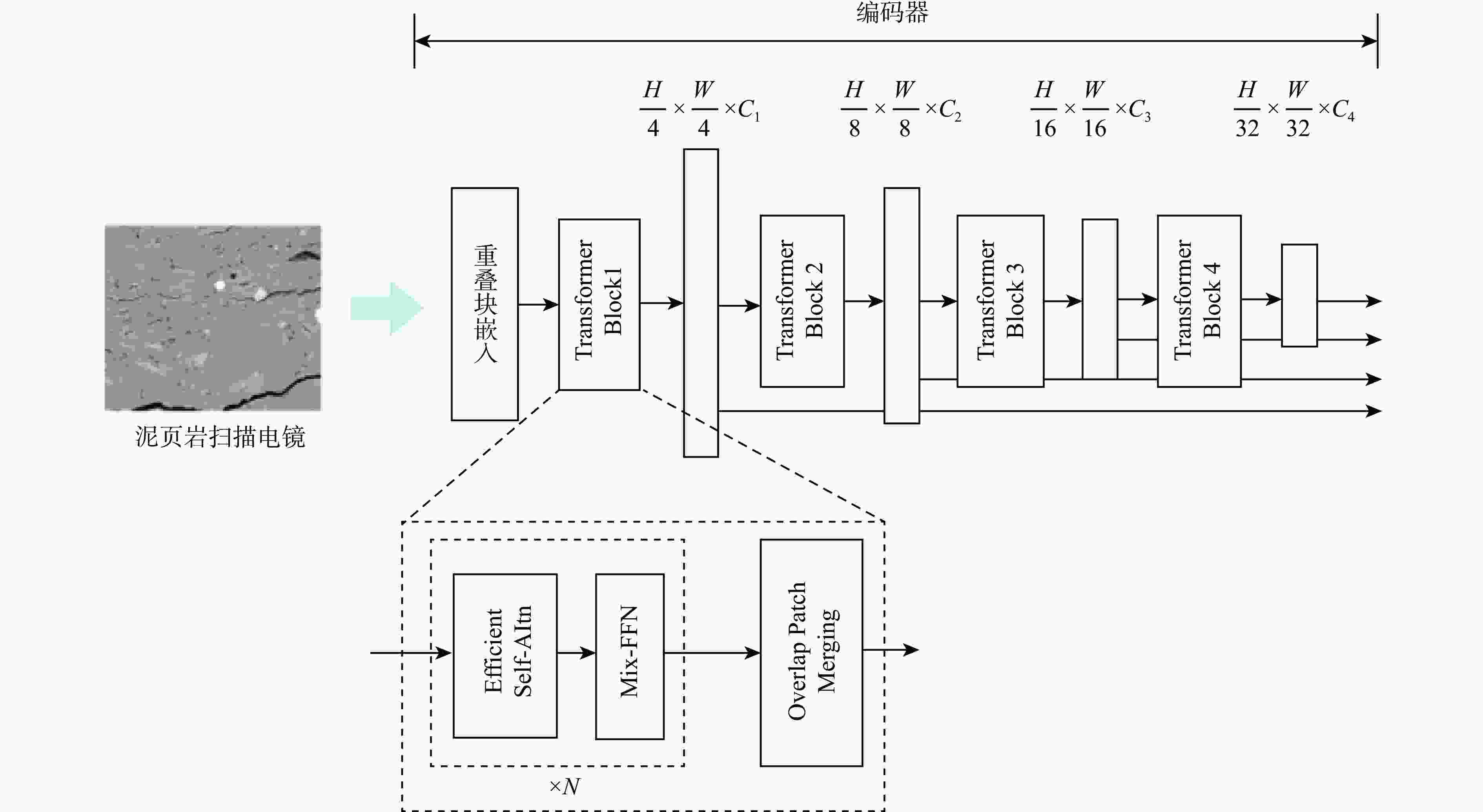

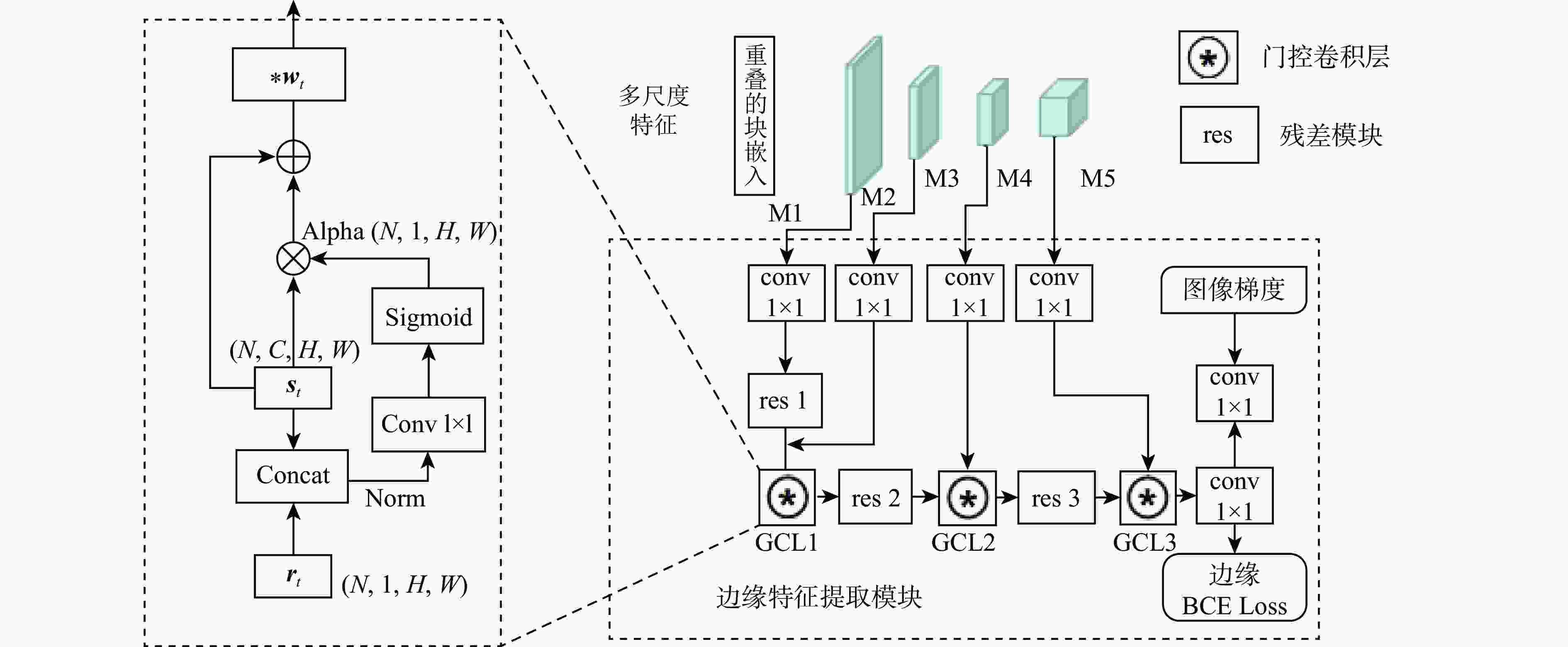

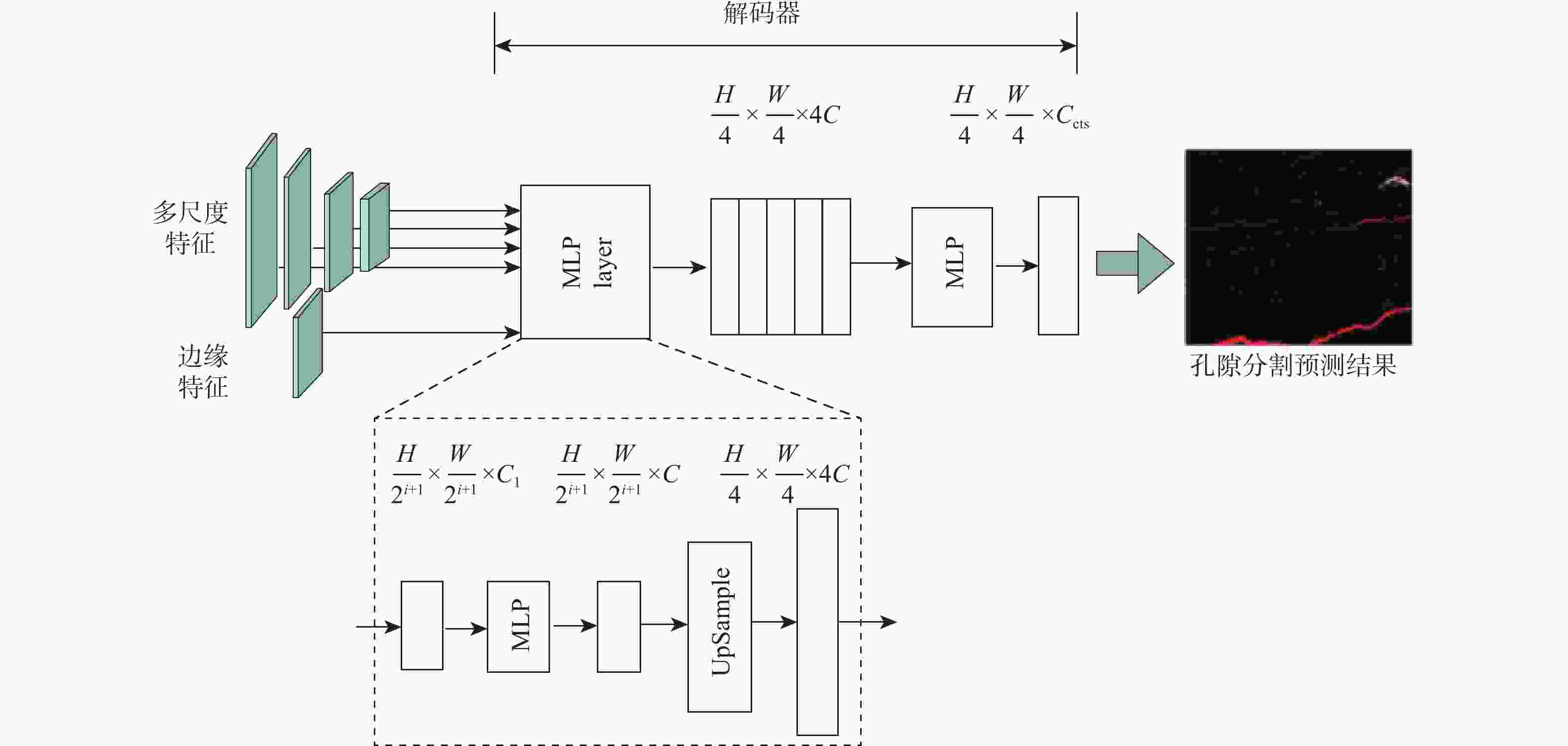

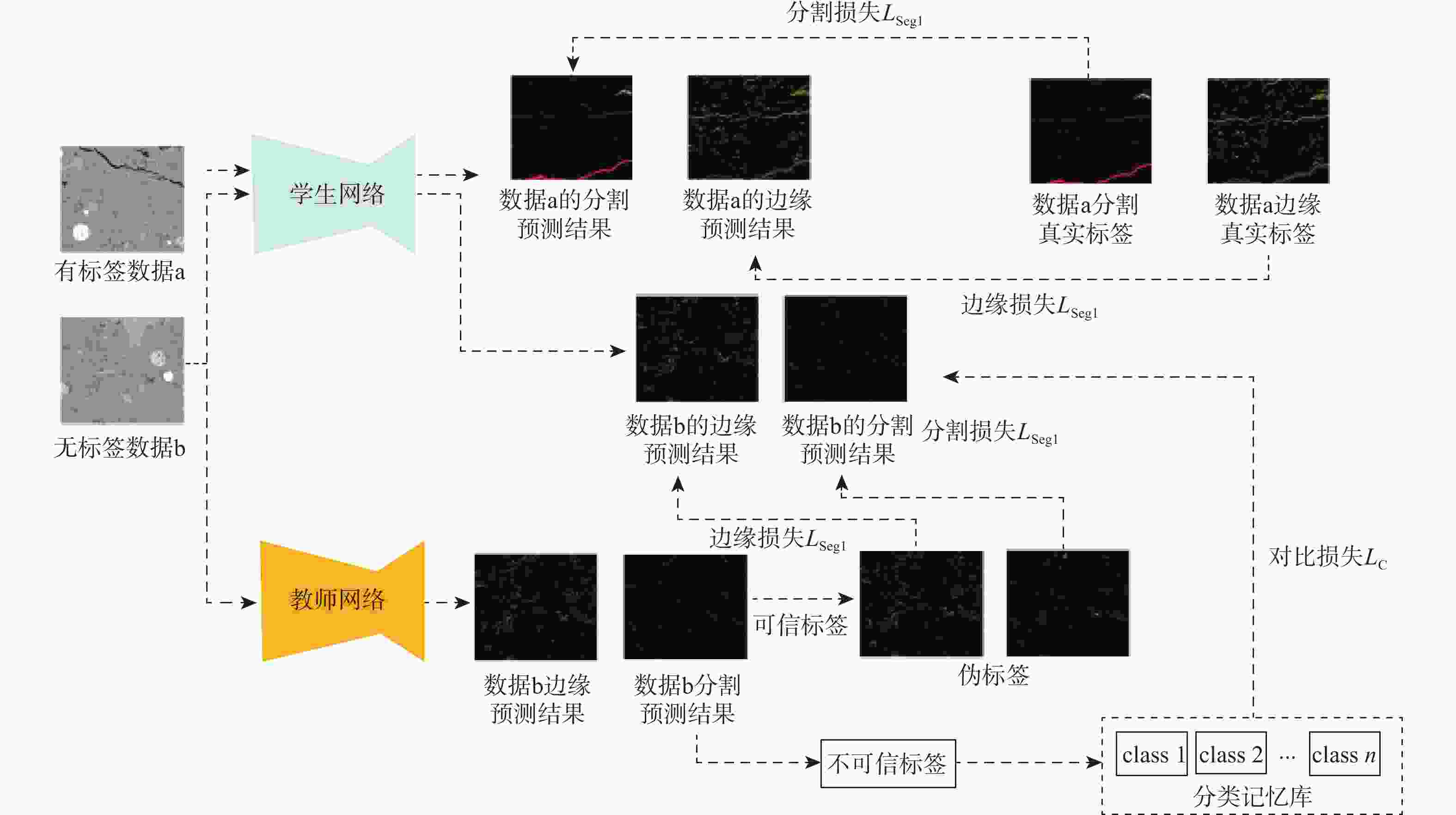

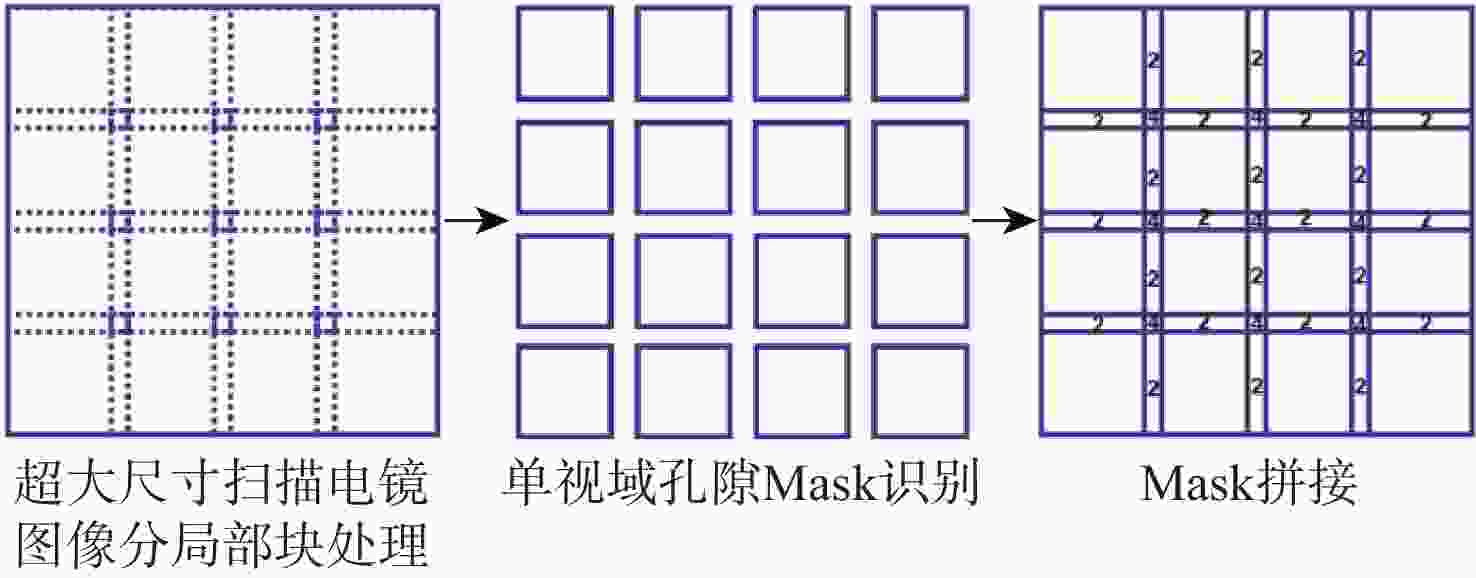

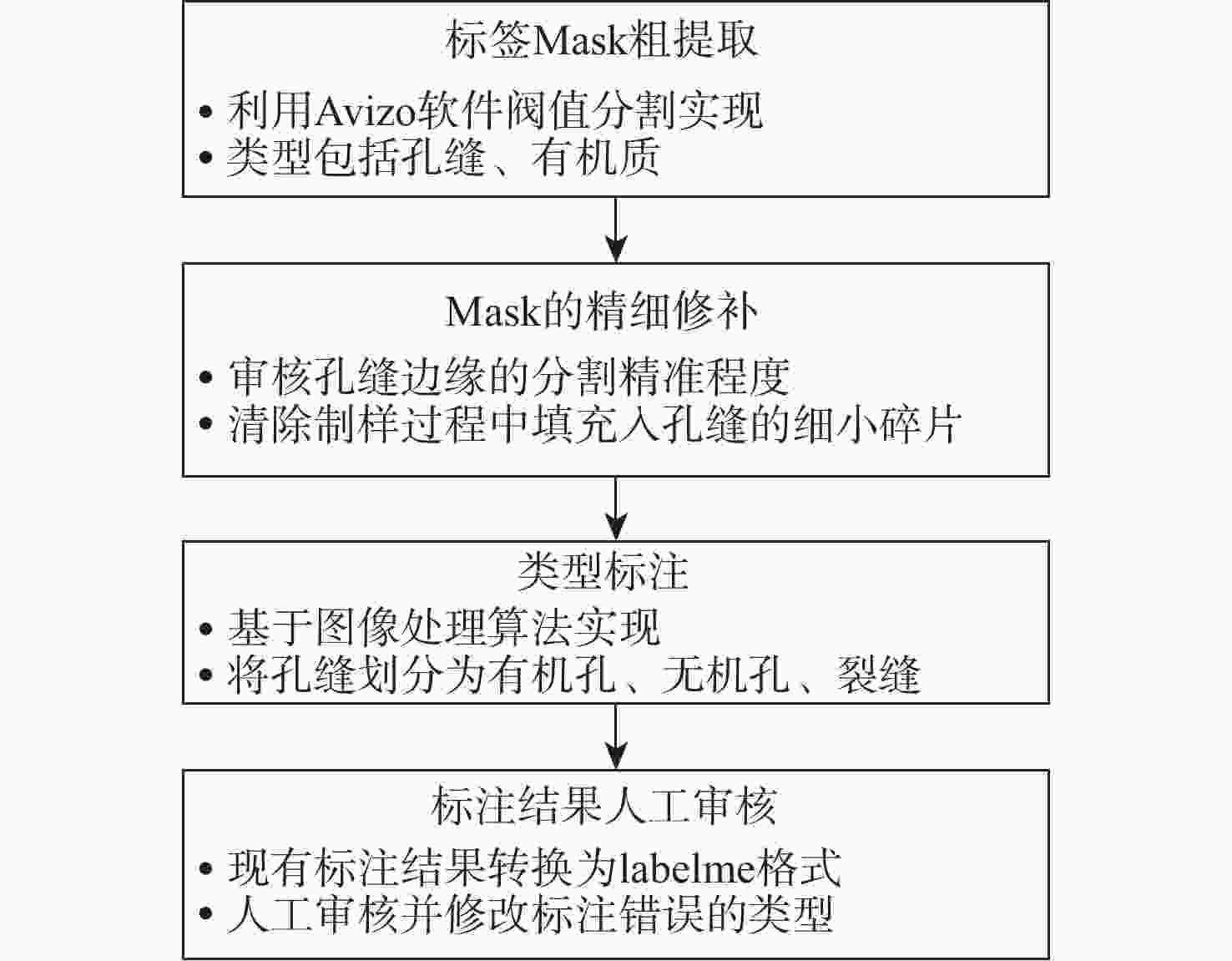

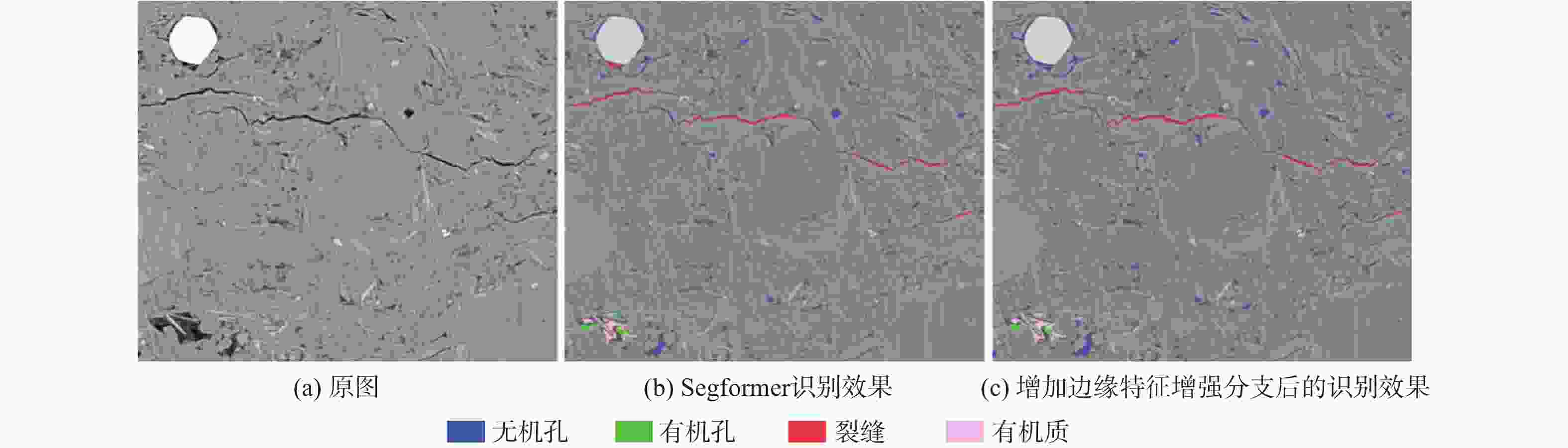

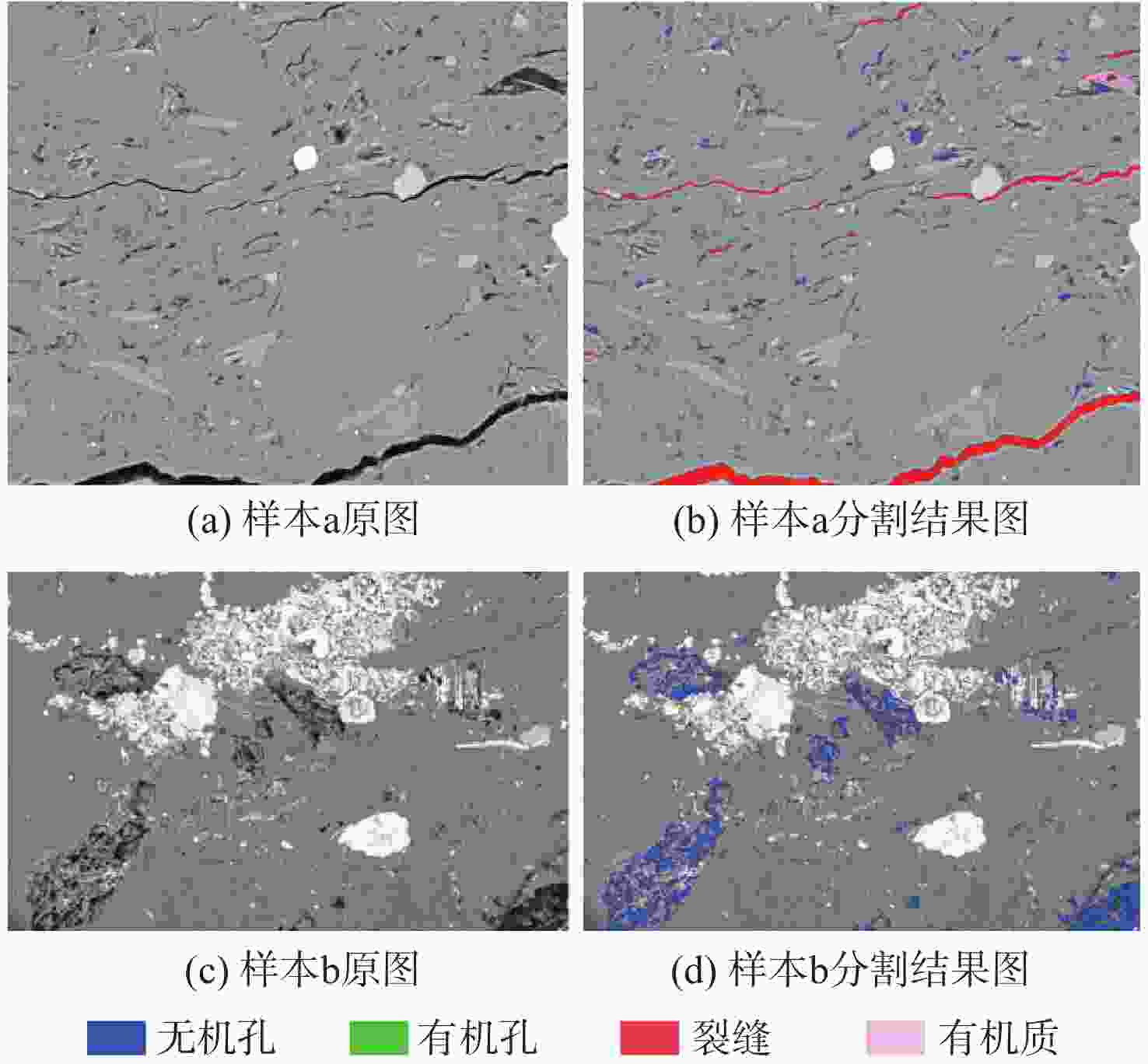

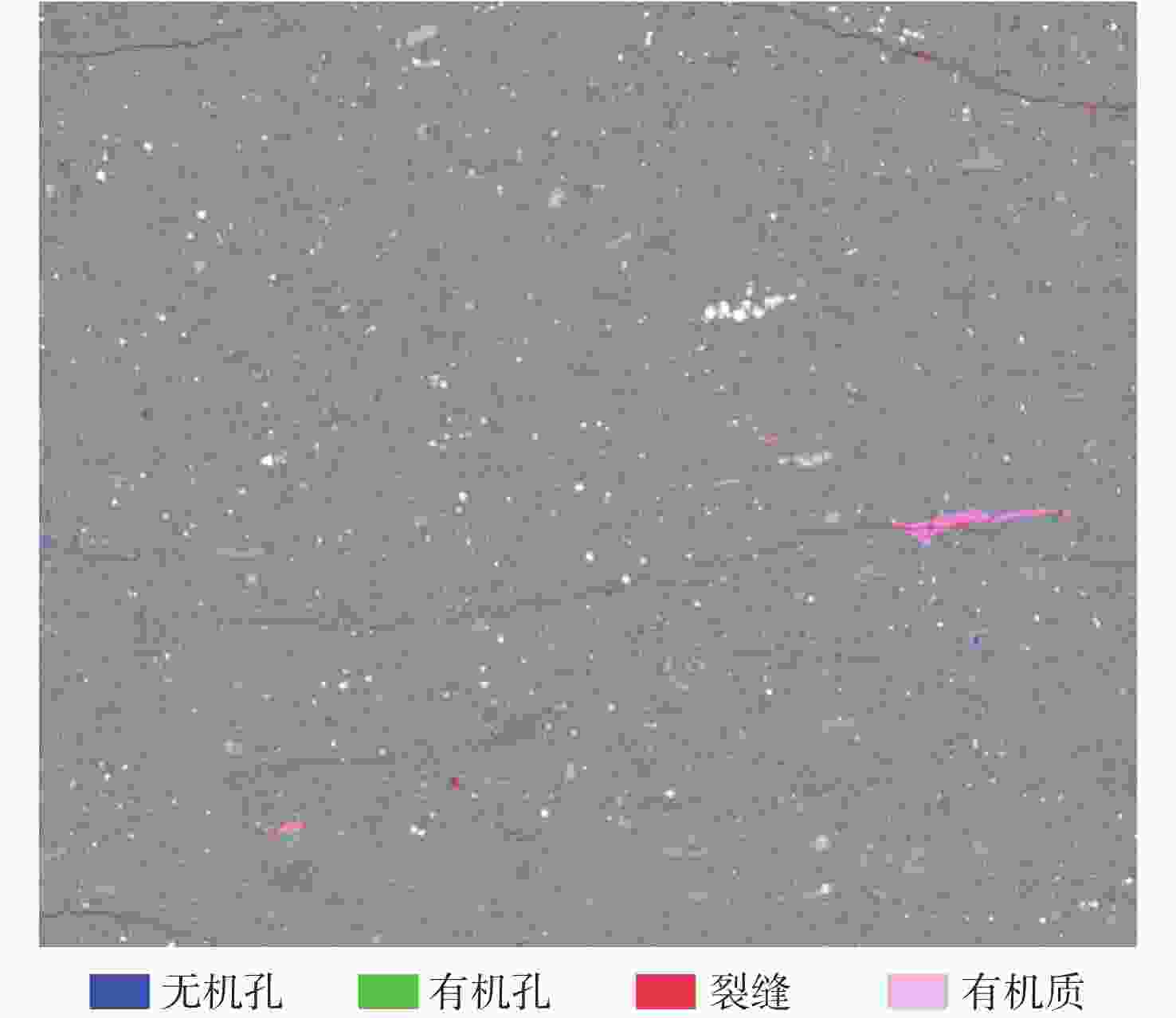

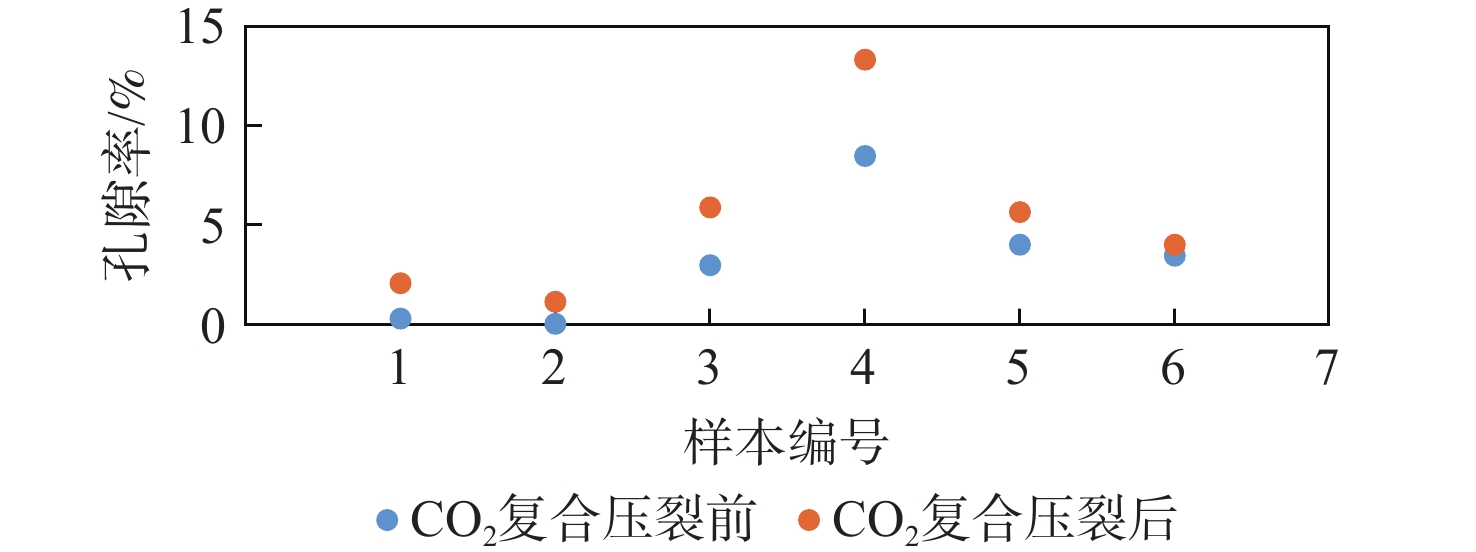

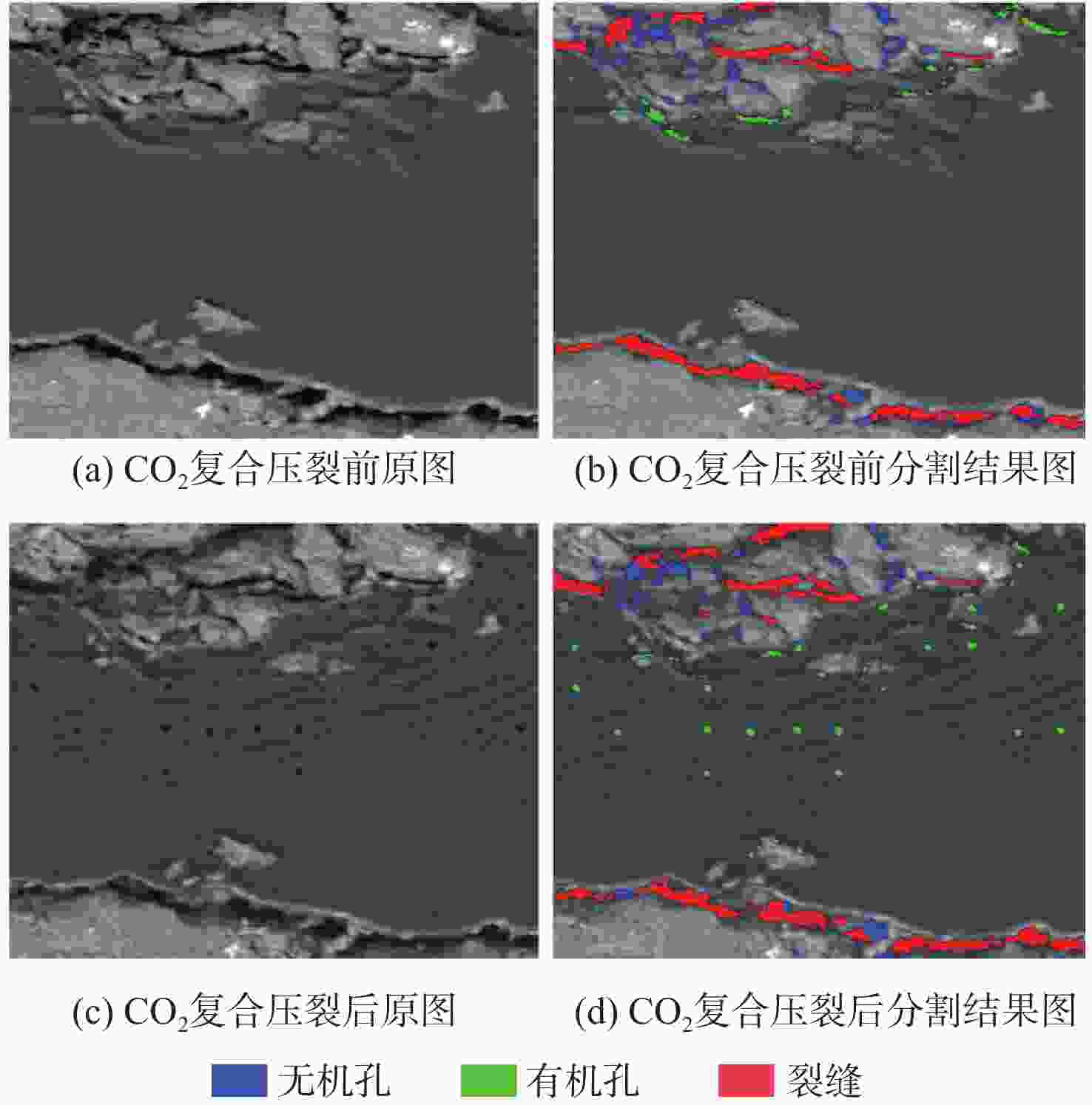

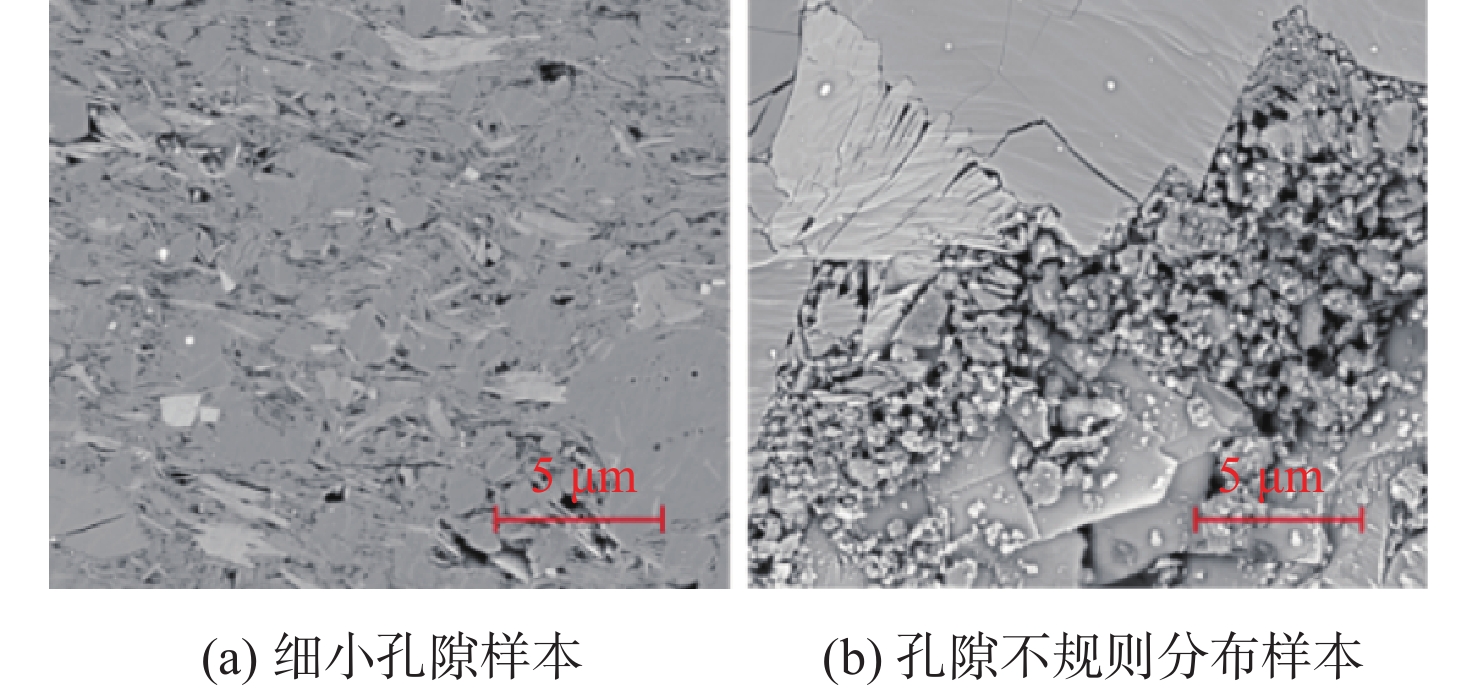

页岩孔隙类型和结构参数影响储层流体的富集和运移,是页岩储层评价的重要内容。现有评价方法存在主观性强、效率低及定量化程度低等问题,难以满足快速、精准分析页岩样品的迫切需求。基于此,提出一种基于语义分割的页岩孔隙结构智能表征方法。利用扫描电子显微镜(SEM)和多尺度采集与处理软件(MAPS)获取页岩二维灰度图像;由岩矿鉴定专家进行数据标注,分为有机孔、无机孔、裂缝和有机质;提出一种面向页岩孔隙结构分析任务的组合网络ShaleSeger及其训练范式,建立基于深度学习的页岩孔隙智能识别模型,形成基于多视域拼接的超大尺寸图像孔隙识别方案,从而实现基于MAPS图像的孔隙提取;利用图像处理技术实现孔隙结构特征智能表征。目前已实现古龙页岩孔隙结构定量分析,能够在孔隙边缘提取和类型识别的基础上自动计算各类型孔隙的面积占比,统计诸如孔径、视孔隙比表面、形状因子等孔隙结构参数。所提方法可以拓展应用于CO2复合压裂效果评估,对CO2复合压裂前后的微观结构特征改变进行定量比较。

Abstract: The types and structural parameters of shale pores control the enrichment and migration of reservoir fluids, making them key elements of shale reservoir evaluation. Existing evaluation methods are highly subjective, inefficient, and weakly quantitative, so they cannot meet the urgent need for fast, accurate analysis of shale samples. To address this, an intelligent characterization method for shale pore structure based on semantic segmentation is proposed. First, two-dimensional grayscale images of shale are acquired by scanning electron microscopy (SEM) and multi-scale acquisition and processing software (MAPS). Second, rock and mineral identification experts annotate the images into four classes: organic pores, inorganic pores, fractures, and organic matter. Third, a combined network, ShaleSeger, and its training paradigm are proposed for shale pore structure analysis. On this basis, a deep-learning model for intelligent pore recognition is built, together with a pore recognition scheme for very large images based on multi-view mosaicking, which allows pores to be extracted from MAPS images. Finally, image processing techniques are applied to characterize pore structure features automatically. The method has been applied to the quantitative analysis of Gulong shale pore structure. Building on pore edge extraction and type recognition, it automatically computes the area proportion of each pore type and derives statistics of pore structure parameters such as pore diameter, apparent pore specific surface, and shape factor. The method can also be extended to evaluate CO2 composite fracturing by quantitatively comparing microstructural characteristics before and after fracturing.
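The multi-view mosaic scheme for very large MAPS images is named here but not specified. One common way to segment an image too large for a single forward pass is overlapping sliding-window inference, with the per-tile outputs stitched back together. The sketch below assumes that approach. `predict_fn` is a hypothetical stand-in for a trained network such as ShaleSeger and returns per-class probabilities for one tile. The tile size and overlap are illustrative values, not parameters from the source.

```python
import numpy as np

def tile_starts(length, tile, stride):
    """Start offsets so that tiles of size `tile` cover [0, length)."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] != length - tile:
        starts.append(length - tile)  # shift the last tile so it ends at the border
    return starts

def segment_large_image(image, predict_fn, num_classes, tile=512, overlap=64):
    """Sliding-window segmentation of a 2-D grayscale image.

    predict_fn (hypothetical) maps an (h, w) tile to an (num_classes, h, w)
    array of class probabilities.
    """
    stride = tile - overlap
    if stride <= 0:
        raise ValueError("overlap must be smaller than tile")
    h, w = image.shape
    prob_sum = np.zeros((num_classes, h, w), dtype=np.float64)
    counts = np.zeros((h, w), dtype=np.float64)
    for y in tile_starts(h, tile, stride):
        for x in tile_starts(w, tile, stride):
            patch = image[y:y + tile, x:x + tile]
            prob_sum[:, y:y + tile, x:x + tile] += predict_fn(patch)
            counts[y:y + tile, x:x + tile] += 1.0
    # Average overlapping predictions, then pick the most likely class per pixel.
    return np.argmax(prob_sum / counts, axis=0)
```

Averaging probabilities rather than hard labels lets overlapping tiles vote on border pixels, where a single tile has the least context. The last tile in each row and column is shifted inward, so it ends exactly at the image edge and no padding is needed.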


Key words:

- tight shale oil
- micro-nano
- pore structure
- scanning electron microscopy
- Gulong shale


Table 1. Quantitative comparison of segmentation effects

| Model | Semi-supervised training | Pixel accuracy/% | Mean IoU/% | Mean accuracy/% | Mean F1 score/% | Mean precision/% | Mean recall/% |
|---|---|---|---|---|---|---|---|
| SegFormer[25] | No | 99.25 | 66.70 | 75.57 | 77.82 | 80.64 | 75.57 |
| OCRNet[19] | No | 98.99 | 61.59 | 71.94 | 73.48 | 75.47 | 71.94 |
| DeepLabv3[21] | No | 98.57 | 52.43 | 59.49 | 64.11 | 71.37 | 59.49 |
| PSPNet[22] | No | 98.54 | 53.95 | 62.67 | 65.94 | 70.04 | 62.67 |
| SegFormer + edge feature enhancement | No | 99.25 | 66.84 | 75.49 | 77.84 | 81.06 | 75.49 |
| ShaleSeger | Yes | 99.50 | 72.28 | 79.67 | 82.06 | 85.03 | 79.67 |
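Table 1 does not define its metrics. The sketch below assumes the usual confusion-matrix definitions. Under them, per-class accuracy is the same quantity as recall, which would explain why the mean accuracy and mean recall columns are identical for every model.

The sketch also suggests why pixel accuracy is near 99% for all models: most pixels belong to the background ("other" covers about 97% of each sample in Table 2), so pixel accuracy is dominated by that class. Class-averaged metrics such as mean IoU separate the models more clearly.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Pixel confusion matrix: rows are ground-truth classes, columns are predictions."""
    idx = num_classes * np.asarray(y_true).ravel() + np.asarray(y_pred).ravel()
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)

def segmentation_metrics(cm):
    """Pixel accuracy plus class-averaged metrics from a confusion matrix."""
    cm = cm.astype(np.float64)
    tp = np.diag(cm)
    gt = cm.sum(axis=1)    # ground-truth pixels per class
    pred = cm.sum(axis=0)  # predicted pixels per class
    with np.errstate(divide="ignore", invalid="ignore"):
        recall = tp / gt            # identical to per-class accuracy
        precision = tp / pred
        iou = tp / (gt + pred - tp)
        f1 = 2 * precision * recall / (precision + recall)
    # nanmean skips undefined (0/0) entries, e.g. a class absent from the labels.
    return {
        "pixel_accuracy": tp.sum() / cm.sum(),
        "mean_iou": np.nanmean(iou),
        "mean_accuracy": np.nanmean(recall),
        "mean_f1": np.nanmean(f1),
        "mean_precision": np.nanmean(precision),
        "mean_recall": np.nanmean(recall),
    }
```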

Table 2. Area proportion of various types of pores and organic matter

| Sample | Inorganic pores/% | Organic pores/% | Fractures/% | Organic matter/% | Other/% |
|---|---|---|---|---|---|
| Sample a | 0.400 | 0.014 | 1.997 | 0.213 | 97.377 |
| Sample b | 2.953 | 0 | 5.93×10⁻³ | 0 | 97.041 |
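Once every pixel carries a class label, area proportions like those in Table 2 reduce to pixel counts divided by the image size. The minimal sketch below assumes a hypothetical integer encoding of the five classes; the source does not specify one.

```python
import numpy as np

# Hypothetical label ids 0..4; the source does not define an encoding.
CLASS_NAMES = ("other", "inorganic_pore", "organic_pore", "fracture", "organic_matter")

def area_proportions(label_map, class_names=CLASS_NAMES):
    """Percentage of the image area occupied by each class in an integer label map."""
    counts = np.bincount(label_map.ravel(), minlength=len(class_names))
    return {name: 100.0 * counts[i] / label_map.size for i, name in enumerate(class_names)}
```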

Table 3. Characteristic parameters of each pore

| Area/nm² | Diameter/nm | Perimeter/nm | Apparent pore specific surface/nm⁻¹ | Shape factor |
|---|---|---|---|---|
| 214.577 | 16.529 | 246.510 | 1.463 | 0.044 |
| 643.728 | 28.629 | 153.593 | 0.304 | 0.343 |
| 5257.087 | 81.814 | 367.252 | 0.089 | 0.490 |
| 1823.911 | 48.190 | 208.632 | 0.146 | 0.527 |
| 858.308 | 33.058 | 146.484 | 0.217 | 0.503 |
| 751.023 | 30.923 | 150.039 | 0.254 | 0.419 |
| 2253.051 | 53.560 | 208.632 | 0.118 | 0.650 |
| ⋮ | ⋮ | ⋮ | ⋮ | ⋮ |
| 4184.242 | 72.990 | 403.657 | 0.123 | 0.323 |
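Table 3 gives no formulas, but its columns are consistent with standard definitions, with area in nm².

- The diameter matches the equivalent circular diameter √(4A/π).
- The shape factor matches the circularity 4πA/P², which is 1 for a circle and smaller for elongated or irregular pores.
- The apparent pore specific surface values also reproduce 4P/(πA) to the reported precision. That expression is inferred from the numbers, not stated in the source, so treat it as an assumption.

The sketch computes all three from one pore's area A and perimeter P.

```python
import math

def pore_parameters(area_nm2: float, perimeter_nm: float) -> dict:
    """Shape descriptors of one pore from its area (nm^2) and perimeter (nm)."""
    return {
        # Diameter of the circle with the same area as the pore.
        "diameter_nm": math.sqrt(4.0 * area_nm2 / math.pi),
        # Circularity: 1.0 for a perfect circle, lower for irregular pores.
        "shape_factor": 4.0 * math.pi * area_nm2 / perimeter_nm ** 2,
        # ASSUMPTION: inferred from Table 3's values, not a definition given by the source.
        "apparent_specific_surface_per_nm": 4.0 * perimeter_nm / (math.pi * area_nm2),
    }

# First row of Table 3: area 214.577 nm^2, perimeter 246.510 nm.
row = pore_parameters(214.577, 246.510)
print({k: round(v, 3) for k, v in row.items()})  # rounds to 16.529, 0.044 and 1.463, as in Table 3
```

In practice, area and perimeter would come from the connected components of the segmented pore mask, converted from pixels to nanometers with the image's pixel size.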

Table 4. Area proportion of various types of pores and organic matter based on MAPS images

| Inorganic pores/% | Organic pores/% | Fractures/% | Organic matter/% | Areal porosity/% |
|---|---|---|---|---|
| 0.529 | 0.016 | 0.305 | 0.353 | 0.849 |
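Table 4 does not state how areal porosity is defined. Its value is consistent, up to rounding of the components, with the sum of the three pore classes excluding organic matter: 0.529 + 0.016 + 0.305 = 0.850, versus 0.849 reported. The sketch below assumes that definition.

```python
# ASSUMPTION: areal porosity = summed area share of these pore classes (inferred from Table 4).
PORE_CLASSES = ("inorganic_pore", "organic_pore", "fracture")

def areal_porosity(proportions, pore_classes=PORE_CLASSES):
    """Areal (2-D) porosity in % as the summed area share of the pore classes."""
    return sum(proportions[c] for c in pore_classes)

# Values from Table 4 (%).
table4 = {"inorganic_pore": 0.529, "organic_pore": 0.016, "fracture": 0.305, "organic_matter": 0.353}
print(f"{areal_porosity(table4):.3f}")  # 0.850, vs. 0.849 reported
```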

Table 5. Changes in pore structure before and after CO2 composite fracturing

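| State | Inorganic pores/% | Organic pores/% | Fractures/% | Other/% |
|---|---|---|---|---|
| Before composite fracturing | 1.107 | 0.541 | 2.751 | 95.601 |
| After composite fracturing | 1.019 | 0.561 | 3.211 | 95.209 |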
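Expressed as changes, the Table 5 figures show the following:

- The fracture share rises by 0.460 percentage points, about 16.7% relative to its pre-fracturing value.
- The organic-pore share rises slightly.
- The inorganic-pore share falls by 0.088 points.

The small script below reproduces this arithmetic from the tabulated values. The class keys are illustrative names.

```python
# Values copied from Table 5 (area proportions, %).
BEFORE = {"inorganic_pore": 1.107, "organic_pore": 0.541, "fracture": 2.751, "other": 95.601}
AFTER = {"inorganic_pore": 1.019, "organic_pore": 0.561, "fracture": 3.211, "other": 95.209}

def compare(before, after):
    """Absolute change (percentage points) and relative change (%) for each class."""
    return {
        k: (after[k] - before[k], 100.0 * (after[k] - before[k]) / before[k])
        for k in before
    }

for name, (abs_pp, rel_pct) in compare(BEFORE, AFTER).items():
    print(f"{name:15s} {abs_pp:+.3f} pp  {rel_pct:+.1f} %")
```

Because both rows sum to 100%, the gain in total pore area (0.392 points) is exactly offset by the decrease in the "other" share.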

[1] ZHU L Q, ZHANG C, ZHOU X Q, et al. Nuclear magnetic resonance logging reservoir permeability prediction method based on deep belief network and kernel extreme learning machine algorithm[J]. Journal of Computer Applications, 2017, 37(10): 3034-3038 (in Chinese).

[2] ZHANG Y M, ZHANG F C, DING J C, et al. Sweet spot prediction in tight sand reservoirs by a hybrid deep-learning network[J]. Geophysical Prospecting for Petroleum, 2021, 60(6): 995-1002 (in Chinese).

[3] ZHOU S X, SHENG W, WEI X, et al. Fast image analysis on pore structure of concrete based on deep learning[J]. Journal of the Chinese Ceramic Society, 2019, 47(5): 653-663.

[4] ZHOU S X, SHENG W, WANG Z P, et al. Quick image analysis of concrete pore structure based on deep learning[J]. Construction and Building Materials, 2019, 208: 144-157.

[5] CARPENTER C. Machine-learning techniques characterize source-rock images at the pore scale[J]. Journal of Petroleum Technology, 2022, 74(1): 92-95.

[6] LIU M L, MUKERJI T. Multiscale fusion of digital rock images based on deep generative adversarial networks[J]. Geophysical Research Letters, 2022, 49(9): e2022GL098342.

[7] WANG H, GUO R, DALTON L E, et al. Comparative assessment of U-net-based deep learning models for segmenting microfractures and pore spaces in digital rocks[J]. SPE Journal, 2024, 29(11): 5779-5791.

[8] LIAO G Z, LI Y Z, XIAO L Z, et al. Prediction of microscopic pore structure of tight reservoirs using convolutional neural network model[J]. Petroleum Science Bulletin, 2020, 5(1): 26-38 (in Chinese).

[9] CHEN Y, LI Z C, CHENG C, et al. FLU-Net: a deep fully convolutional neural network for shale reservoir micro-pore characterization[J]. Marine Geology Frontiers, 2021, 37(8): 34-43 (in Chinese).

[10] YU Q Y, XIONG Z W, DU C, et al. Identification of rock pore structures and permeabilities using electron microscopy experiments and deep learning interpretations[J]. Fuel, 2020, 268: 117416.

[11] ZHANG H, ZHANG R, SUN D Q, et al. Analyzing the pore structure of pervious concrete based on the deep learning framework of Mask R-CNN[J]. Construction and Building Materials, 2022, 318: 125987.

[12] CHEN Z M, TANG X, LIANG G D, et al. Identification and comparison of organic matter pores in shale scanning electron microscopy images based on deep learning[J]. Earth Science Frontiers, 2023(3): 208-220 (in Chinese).

[13] BI F Y, XIAO Z S, ZHANG X Z, et al. Automated identification method of shale pore types based on deep learning[J]. Well Logging Technology, 2022, 46(4): 439-445 (in Chinese).

[14] CAI Y H, TENG Q Z, TU B Y. Automatic extraction of pores in thin slice images of rock castings based on deep learning[J]. Science Technology and Engineering, 2020, 20(28): 11685-11692 (in Chinese).

[15] WANG Q, ZENG Q H, ZHANG Y Y, et al. Automatic extraction of outcrop cavity based on multi-scale regional convolution neural network[J]. Geoscience, 2021, 35(4): 1147-1154 (in Chinese).

[16] LONG J, SHELHAMER E, DARRELL T. Fully convolutional networks for semantic segmentation[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2015: 3431-3440.

[17] BADRINARAYANAN V, KENDALL A, CIPOLLA R. SegNet: a deep convolutional encoder-decoder architecture for image segmentation[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2017, 39(12): 2481-2495.

[18] RONNEBERGER O, FISCHER P, BROX T. U-Net: convolutional networks for biomedical image segmentation[M]. Berlin: Springer, 2015: 234-241.

[19] YUAN Y H, CHEN X L, WANG J D. Object-contextual representations for semantic segmentation[C]//Proceedings of the Computer Vision-ECCV. Berlin: Springer, 2020: 173-190.

[20] CHEN L C, PAPANDREOU G, KOKKINOS I, et al. DeepLab: semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018, 40(4): 834-848.

[21] CHEN L C, PAPANDREOU G, SCHROFF F, et al. Rethinking atrous convolution for semantic image segmentation[EB/OL]. (2017-12-05)[2023-07-01]. https://arxiv.org/abs/1706.05587.

[22] ZHAO H S, SHI J P, QI X J, et al. Pyramid scene parsing network[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2017: 6230-6239.

[23] DOSOVITSKIY A, BEYER L, KOLESNIKOV A, et al. An image is worth 16×16 words: transformers for image recognition at scale[EB/OL]. (2021-06-03)[2023-07-01]. https://arxiv.org/abs/2010.11929.

[24] ZHENG S X, LU J C, ZHAO H S, et al. Rethinking semantic segmentation from a sequence-to-sequence perspective with transformers[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2021: 6877-6886.

[25] XIE E, WANG W, YU Z, et al. SegFormer: simple and efficient design for semantic segmentation with transformers[EB/OL]. (2021-10-28)[2023-07-01]. https://arxiv.org/abs/2105.15203.

[26] OUALI Y, HUDELOT C, TAMI M. Semi-supervised semantic segmentation with cross-consistency training[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 12671-12681.

[27] LIU Y Y, TIAN Y, CHEN Y H, et al. Perturbed and strict mean teachers for semi-supervised semantic segmentation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2022: 4248-4257.

[28] LAI X, TIAN Z T, JIANG L, et al. Semi-supervised semantic segmentation with directional context-aware consistency[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2021: 1205-1214.

[29] HUNG W C, TSAI Y H, LIOU Y T, et al. Adversarial learning for semi-supervised semantic segmentation[EB/OL]. (2018-07-24)[2023-07-01]. https://arxiv.org/abs/1802.07934.

[30] WANG Y C, WANG H C, SHEN Y J, et al. Semi-supervised semantic segmentation using unreliable pseudo-labels[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2022: 4238-4247.

[31] TAKIKAWA T, ACUNA D, JAMPANI V, et al. Gated-SCNN: gated shape CNNs for semantic segmentation[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2019: 5228-5237.

[32] YANG Y L, LIANG L, TENG S H. A two-way network model for semantic segmentation[J]. Journal of Guangdong University of Technology, 2022, 39(1): 63-70 (in Chinese).
