
Abstract:

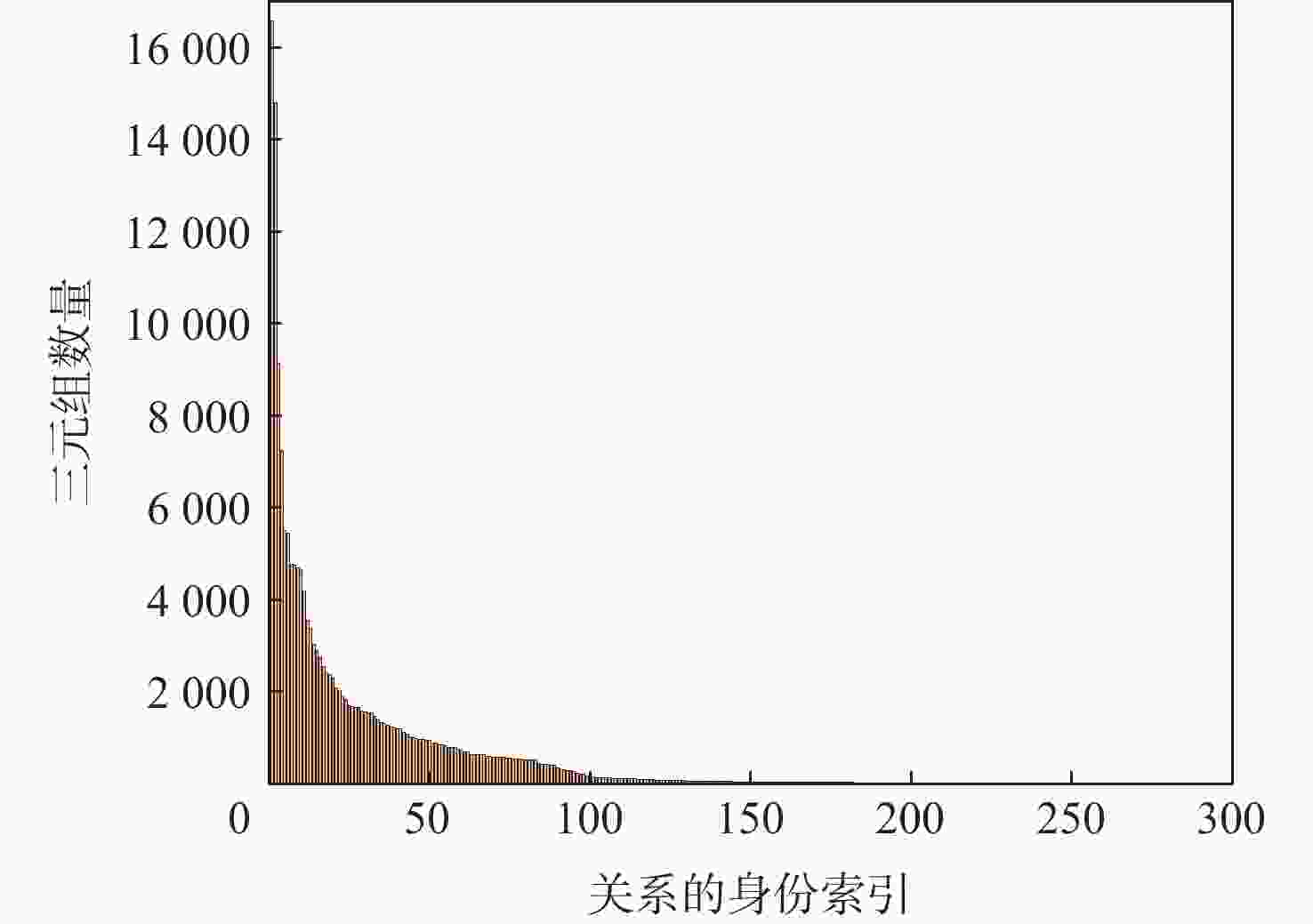

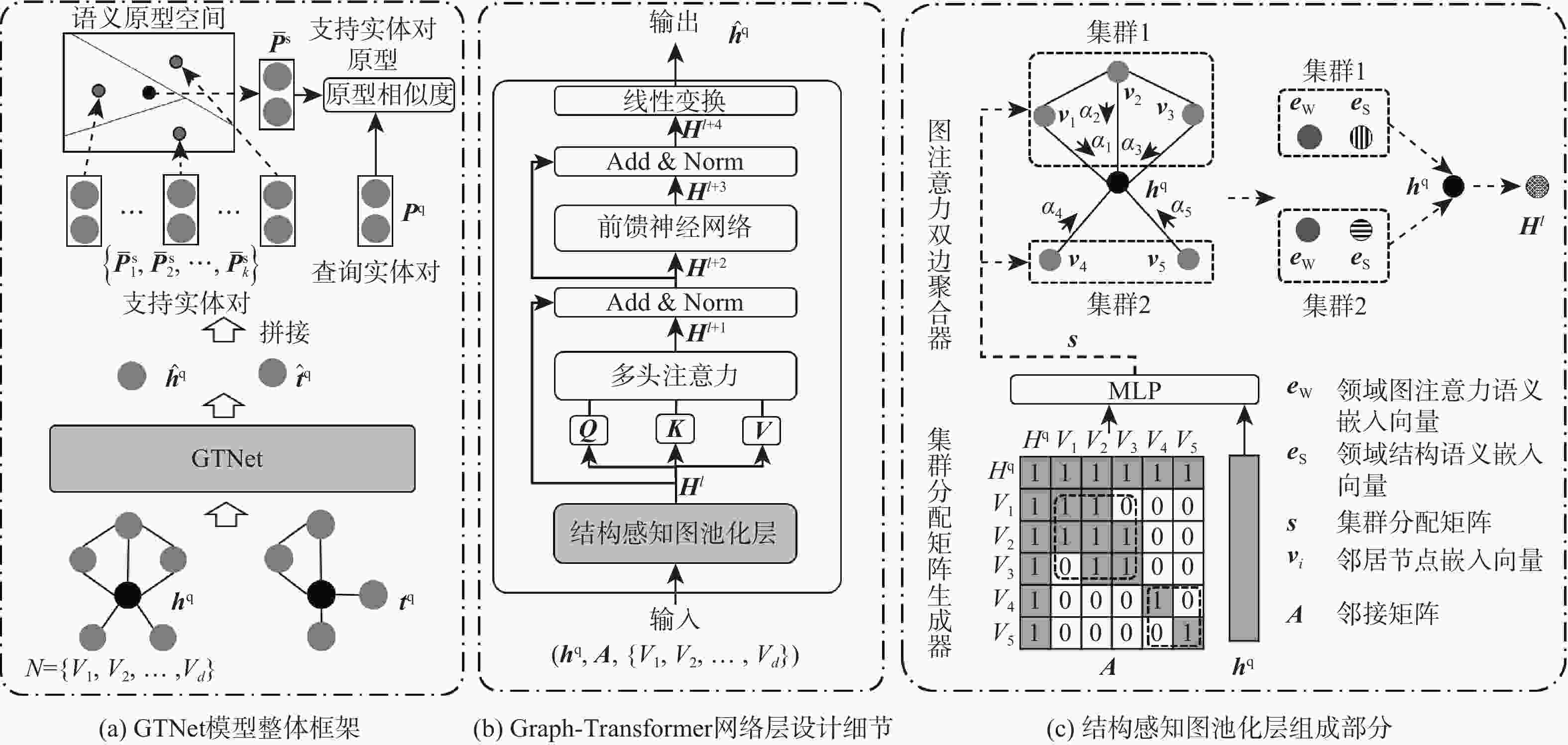

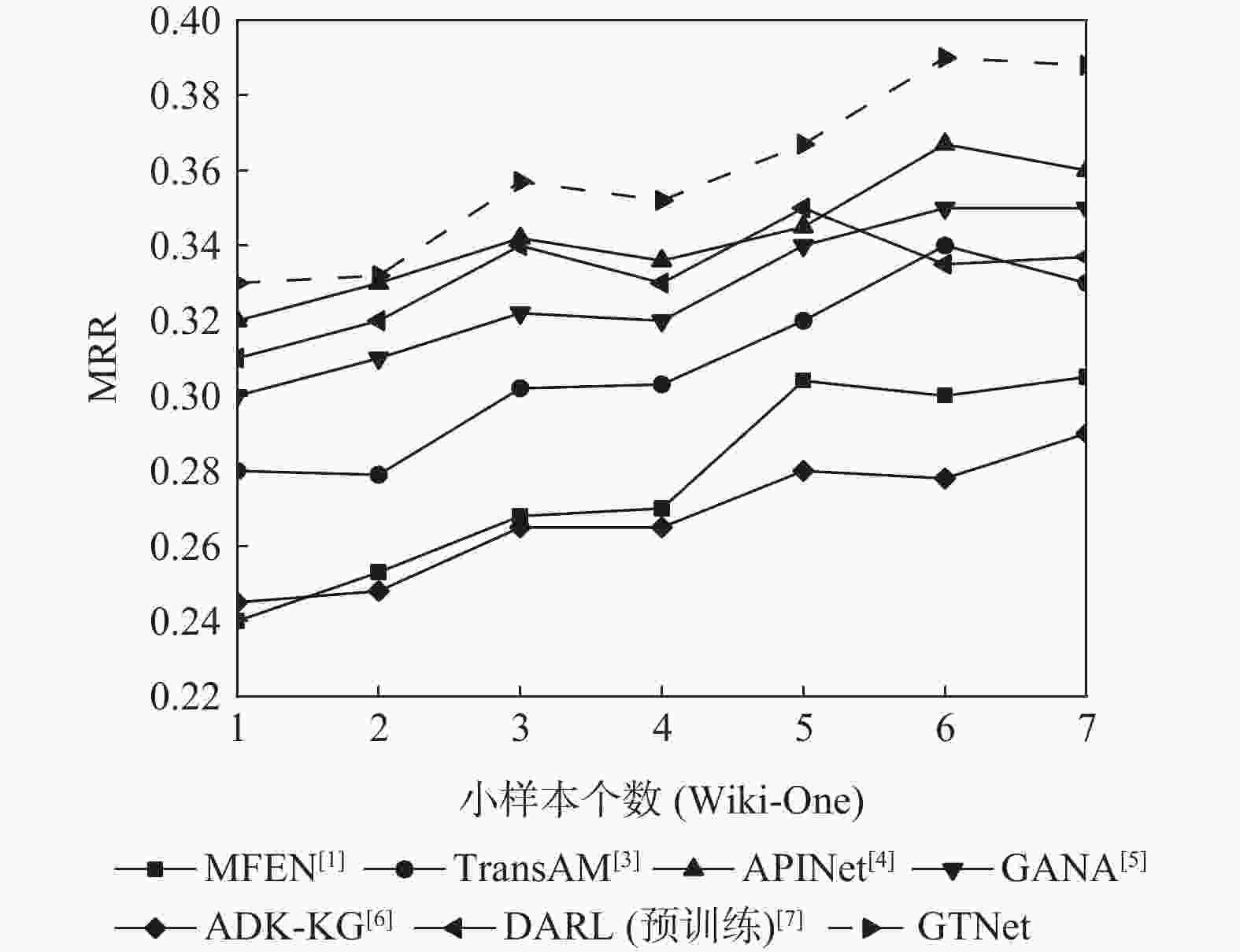

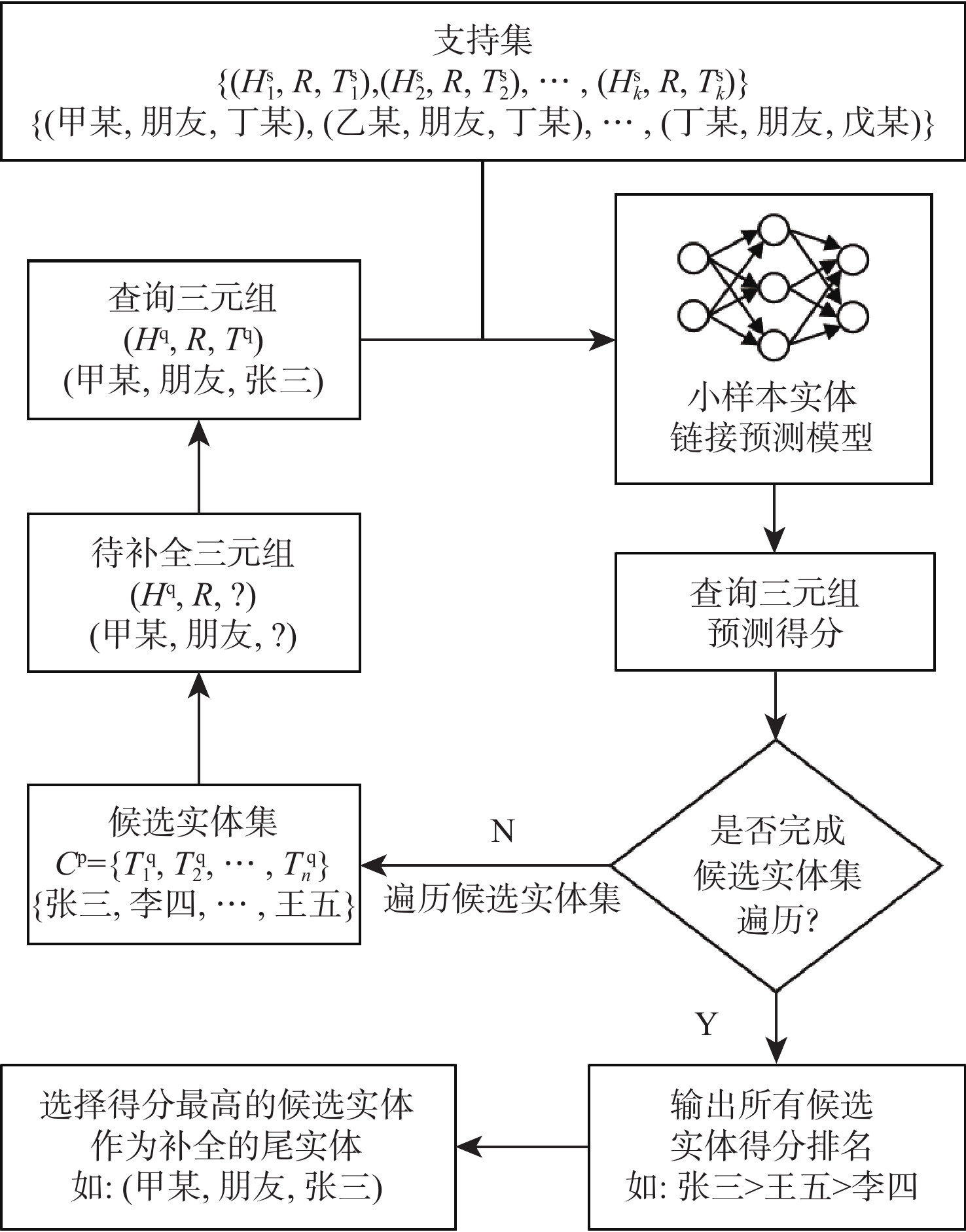

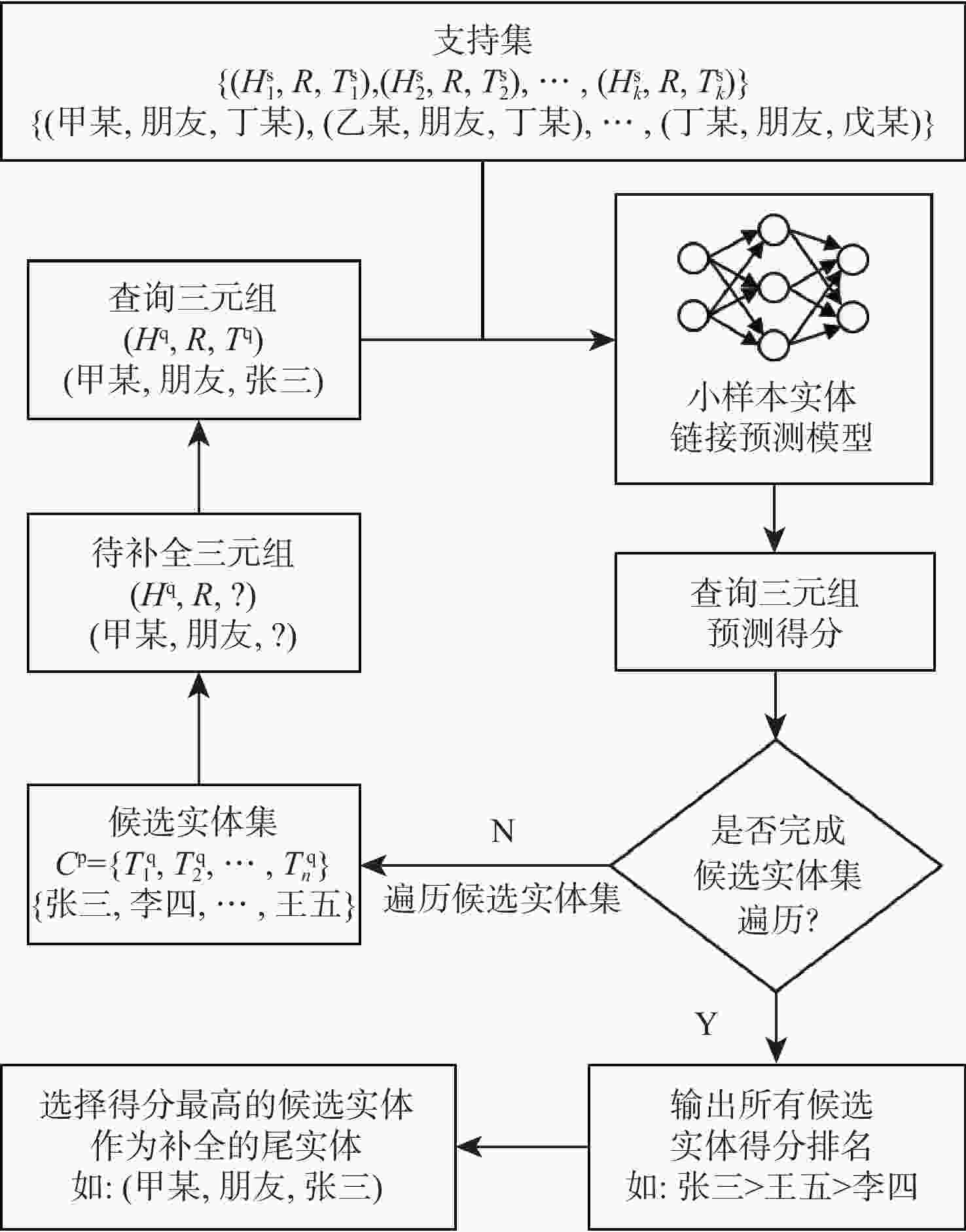

In few-shot scenarios, entity link prediction aims to infer the missing entity in a query triple from a small number of reference triples. Mainstream methods, however, ignore the graph structure surrounding each node during entity encoding. To address this problem, a Graph-Transformer network (GTNet) is proposed. To enhance entity representations, a structure-aware graph pooling layer is designed to learn and fuse the graph structure features of nodes. Head and tail entities are then concatenated to form entity pair embeddings, and the reference entity pairs are projected into a semantic prototype space to obtain their prototype embeddings. Finally, the similarity between the query entity pair embedding and the reference entity pair prototype embedding is used as the link prediction score. Experiments show that, compared with the best baseline on each metric, the proposed model improves mean reciprocal rank (MRR), Hits@10, Hits@5, and Hits@1 by 0.012, 0.015, 0.028, and 0.023 on NELL-One and by 0.012, 0.05, 0.033, and 0.031 on Wiki-One. These results indicate that mining the graph structure around each node strengthens entity representations, so the model predicts missing entities in triples more effectively and generalizes better.
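The scoring step described in the abstract (concatenate head and tail, build a prototype from the reference pairs, score a query pair by similarity) can be illustrated with a minimal sketch. This is not the GTNet implementation: the mean-pooled prototype and the cosine similarity are assumptions standing in for the learned projection and similarity function, which are not specified here, and all function names and embeddings are hypothetical.

```python
import math

def concat_pair(head, tail):
    """Entity pair embedding: head and tail embeddings concatenated."""
    return list(head) + list(tail)

def mean_prototype(pair_embeddings):
    """ASSUMPTION: element-wise mean as a stand-in for the learned
    projection into the semantic prototype space."""
    n = len(pair_embeddings)
    return [sum(column) / n for column in zip(*pair_embeddings)]

def cosine(u, v):
    """ASSUMPTION: cosine similarity as the similarity function."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def score_candidates(references, query_head, candidates):
    """Score each candidate tail entity against the reference prototype."""
    prototype = mean_prototype([concat_pair(h, t) for h, t in references])
    return {
        name: cosine(concat_pair(query_head, tail), prototype)
        for name, tail in candidates.items()
    }

# Hypothetical 2-dimensional embeddings for a 3-shot task.
references = [([1.0, 0.0], [0.0, 1.0]),
              ([1.0, 0.0], [0.0, 1.0]),
              ([0.9, 0.1], [0.1, 0.9])]
candidates = {"plausible_tail": [0.0, 1.0], "implausible_tail": [1.0, 0.0]}
scores = score_candidates(references, [1.0, 0.0], candidates)
best = max(scores, key=scores.get)
```

In this toy setup the candidate whose pair embedding points in the same direction as the reference prototype receives the higher score, which is the behaviour the similarity-based ranking relies on.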


| Dataset | Entities | Relations | Triples | Prediction task groups |
| --- | --- | --- | --- | --- |
| NELL-One | 68545 | 358 | 181109 | 67 |
| Wiki-One | 4838244 | 822 | 5859240 | 183 |

Table 2. 3-shot link prediction results of different models on the NELL-One and Wiki-One datasets

| Model | MRR (NELL-One) | MRR (Wiki-One) | Hits@10 (NELL-One) | Hits@10 (Wiki-One) | Hits@5 (NELL-One) | Hits@5 (Wiki-One) | Hits@1 (NELL-One) | Hits@1 (Wiki-One) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| MFEN[1] | 0.226 | 0.268 | 0.401 | 0.372 | 0.287 | 0.322 | 0.179 | 0.201 |
| TransAM[3] | 0.215 | 0.302 | 0.348 | 0.346 | 0.245 | 0.341 | 0.164 | 0.264 |
| APINet[4] | 0.305 | 0.342 | 0.496 | 0.473 | 0.405 | 0.419 | 0.208 | 0.283 |
| GANA[5] | 0.311 | 0.322 | 0.481 | 0.418 | 0.413 | 0.379 | 0.221 | 0.276 |
| ADK-KG[6] | 0.302 | 0.265 | 0.379 | 0.346 | 0.301 | 0.283 | 0.224 | 0.231 |
| DARL[7] | 0.213 | 0.345 | 0.374 | 0.446 | 0.326 | 0.400 | 0.138 | 0.290 |
| GTNet | 0.323 | 0.357 | 0.511 | 0.523 | 0.441 | 0.452 | 0.247 | 0.321 |

Table 3. Prediction scores of the proposed model on selected NELL-One test tasks under the 3-shot setting

| Prediction task | MRR | Hits@10 | Hits@5 | Hits@1 |
| --- | --- | --- | --- | --- |
| sports game sport | 0.977 | 0.986 | 0.986 | 0.971 |
| automobile maker dealers in country | 0.601 | 0.835 | 0.736 | 0.484 |
| sport school in country | 0.572 | 0.745 | 0.653 | 0.500 |
| produced by | 0.479 | 0.654 | 0.615 | 0.370 |
| athlete injured his body part | 0.444 | 0.703 | 0.641 | 0.312 |
| animal such as invertebrate | 0.381 | 0.622 | 0.510 | 0.263 |

Table 4. Effect of the structure-aware graph pooling layer on link prediction performance

| Structure-aware graph pooling layer | MRR | Hits@10 | Hits@5 | Hits@1 |
| --- | --- | --- | --- | --- |
| × | 0.301 | 0.477 | 0.403 | 0.206 |
| √ | 0.323 | 0.511 | 0.441 | 0.247 |
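The MRR and Hits@k values reported in the tables above are rank-based metrics. As a minimal sketch of how such values are usually computed, assume each query yields the 1-based rank of the correct entity among the candidates; the rank list below is hypothetical, and the paper's exact candidate-filtering protocol is not stated here.

```python
def mrr(ranks):
    """Mean reciprocal rank: average of 1/rank over all queries."""
    return sum(1.0 / r for r in ranks) / len(ranks)

def hits_at_k(ranks, k):
    """Fraction of queries whose correct entity is ranked within the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)

# Hypothetical 1-based ranks of the correct entity for five queries.
ranks = [1, 3, 12, 2, 7]

print(f"MRR     = {mrr(ranks):.3f}")
print(f"Hits@10 = {hits_at_k(ranks, 10):.3f}")
print(f"Hits@5  = {hits_at_k(ranks, 5):.3f}")
print(f"Hits@1  = {hits_at_k(ranks, 1):.3f}")
```

Both metrics lie in [0, 1] and higher is better; MRR rewards placing the correct entity near the top, while Hits@k only checks whether it falls inside the top k.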

[1] WU T, MA H Y, WANG C, et al. Heterogeneous representation learning and matching for few-shot relation prediction[J]. Pattern Recognition, 2022, 131: 108830.

[2] YUAN X, XU C C, LI P, et al. Relational learning with hierarchical attention encoder and recoding validator for few-shot knowledge graph completion[C]//Proceedings of the 37th ACM/SIGAPP Symposium on Applied Computing. New York: ACM, 2022: 786-794.

[3] LIANG Y, ZHAO S, CHENG B, et al. TransAM: Transformer appending matcher for few-shot knowledge graph completion[J]. Neurocomputing, 2023, 537: 61-72.

[4] LI Y L, YU K, ZHANG Y H, et al. Adaptive prototype interaction network for few-shot knowledge graph completion[J]. IEEE Transactions on Neural Networks and Learning Systems, 2024, 35(11): 15237-15250.

[5] NIU G L, LI Y, TANG C G, et al. Relational learning with gated and attentive neighbor aggregator for few-shot knowledge graph completion[C]//Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval. New York: ACM, 2021: 213-222.

[6] ZHANG Y, QIAN Y, YE Y, et al. Adapting distilled knowledge for few-shot relation reasoning over knowledge graphs[C]//Proceedings of the 2022 SIAM International Conference on Data Mining. Philadelphia: Society for Industrial and Applied Mathematics, 2022: 666-674.

[7] CAI L Q, WANG L J, YUAN R D, et al. Meta-learning based dynamic adaptive relation learning for few-shot knowledge graph completion[J]. Big Data Research, 2023, 33: 100394.

[8] DWIVEDI V P, BRESSON X. A generalization of Transformer networks to graphs[EB/OL]. (2021-01-24)[2024-01-05]. https://arxiv.org/abs/2012.09699.

[9] CHEN D X, O’BRAY L, BORGWARDT K M. Structure-aware Transformer for graph representation learning[C]//Proceedings of the International Conference on Machine Learning. Baltimore: PMLR, 2022: 3469-3489.

[10] YING C X, CAI T L, LUO S J, et al. Do Transformers really perform badly for graph representation?[C]//Proceedings of the Neural Information Processing Systems. Red Hook: Curran Associates, 2021: 28877-28888.

[11] VASWANI A, SHAZEER N, PARMAR N, et al. Attention is all you need[C]//Proceedings of the 31st International Conference on Neural Information Processing Systems. New York: ACM, 2017: 6000-6010.

[12] LUO Y, THOST V, SHI L. Transformers over directed acyclic graphs[C]//Proceedings of the Neural Information Processing Systems. Red Hook: Curran Associates, 2022: 47764-47782.

[13] SHENG J W, GUO S, CHEN Z Y, et al. Adaptive attentional network for few-shot knowledge graph completion[C]//Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing. Kerrville: Association for Computational Linguistics, 2020: 1681-1691.

[14] LIU D Y, FANG Q, ZHANG X W, et al. Knowledge graph completion based on graph contrastive attention network[J]. Journal of Beijing University of Aeronautics and Astronautics, 2022, 48(8): 1428-1435 (in Chinese).

[15] BIANCHI F M, GRATTAROLA D, ALIPPI C. Spectral clustering with graph neural networks for graph pooling[C]//Proceedings of the 37th International Conference on Machine Learning. New York: ACM, 2020: 874-883.
