Infrared small target detection based on dual-domain and global context feature extraction


摘要:

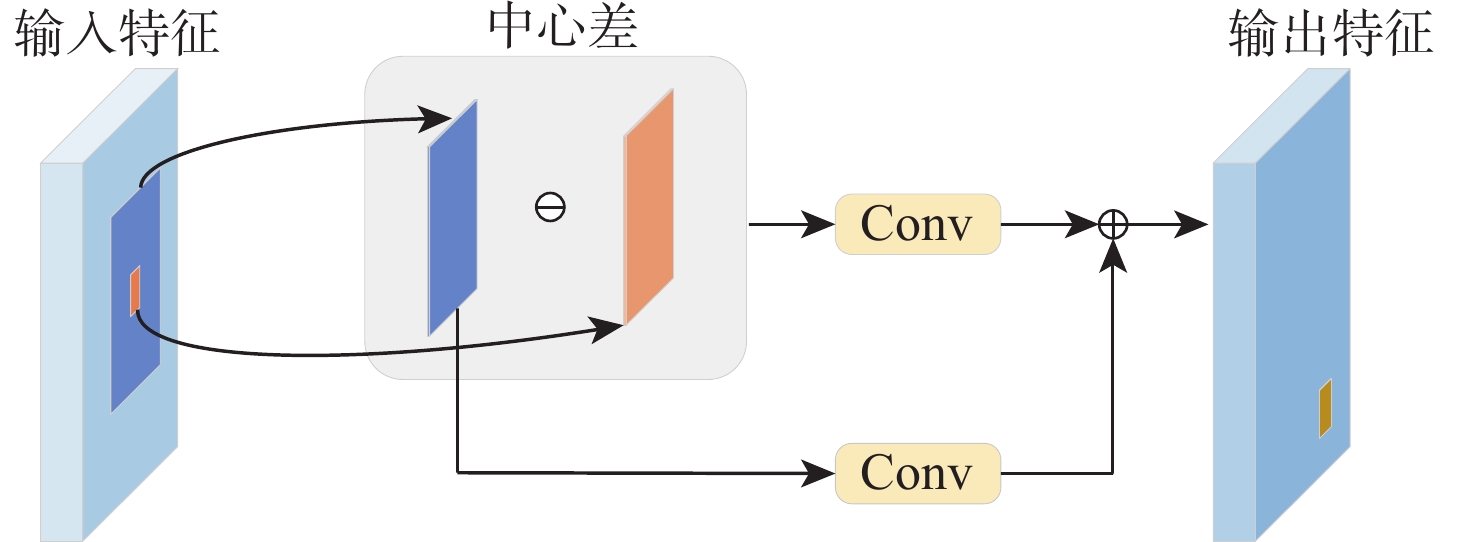

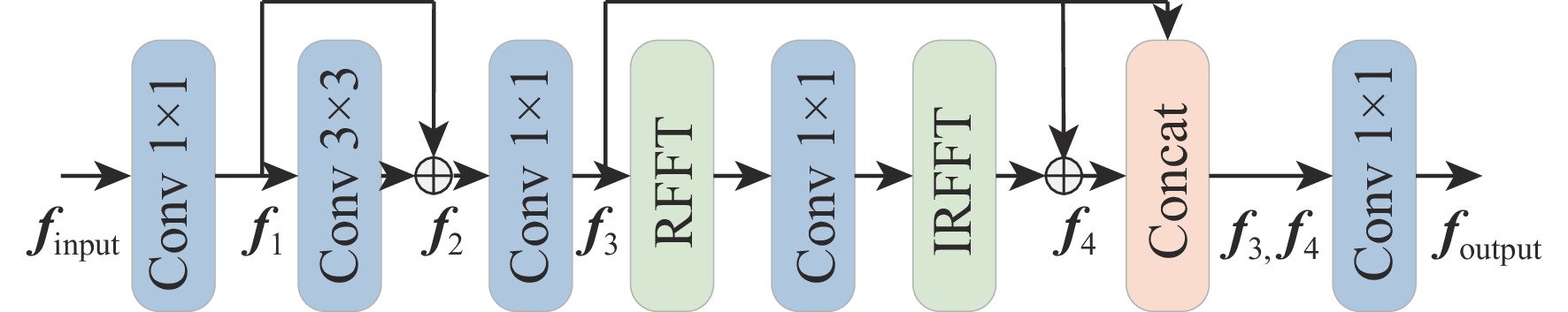

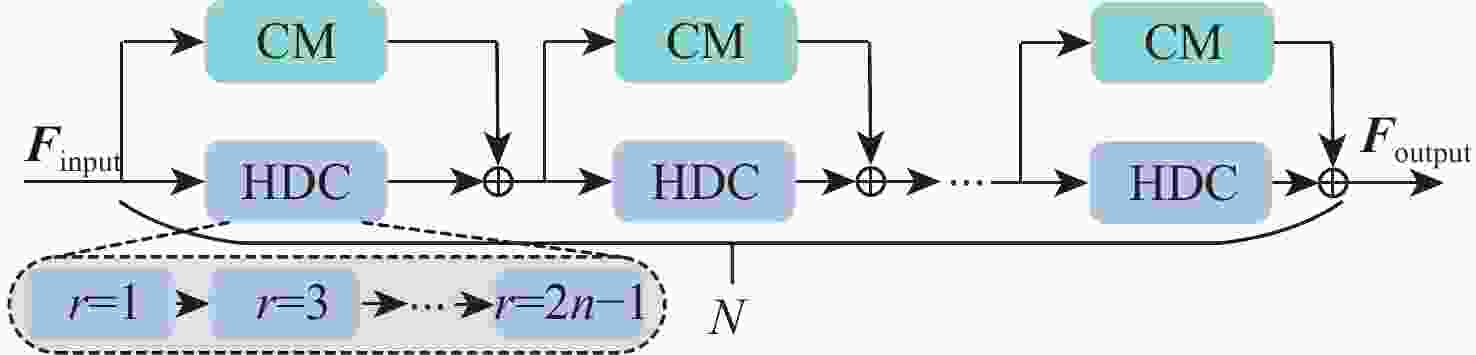

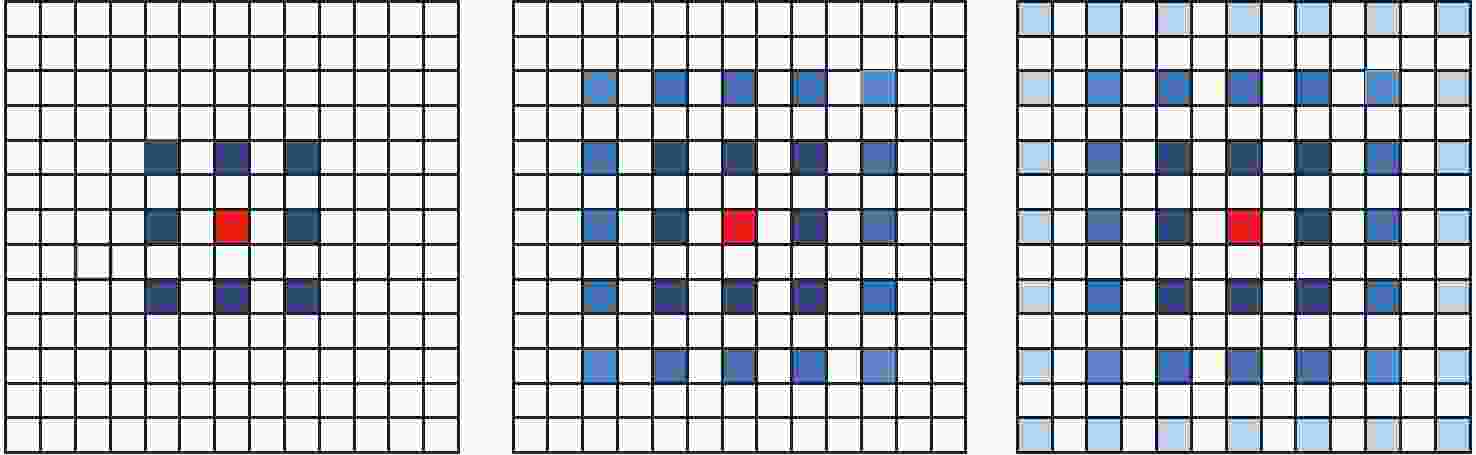

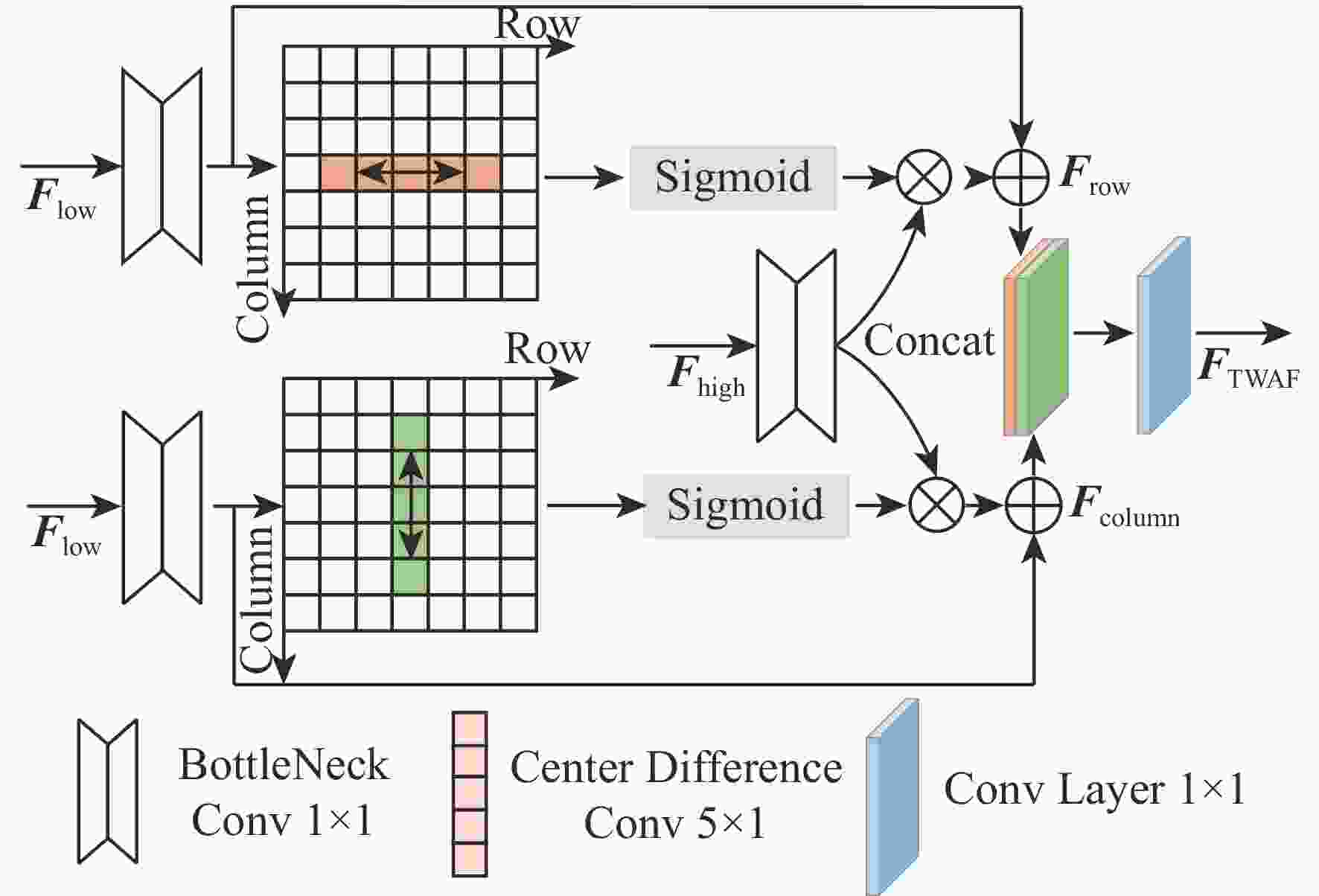

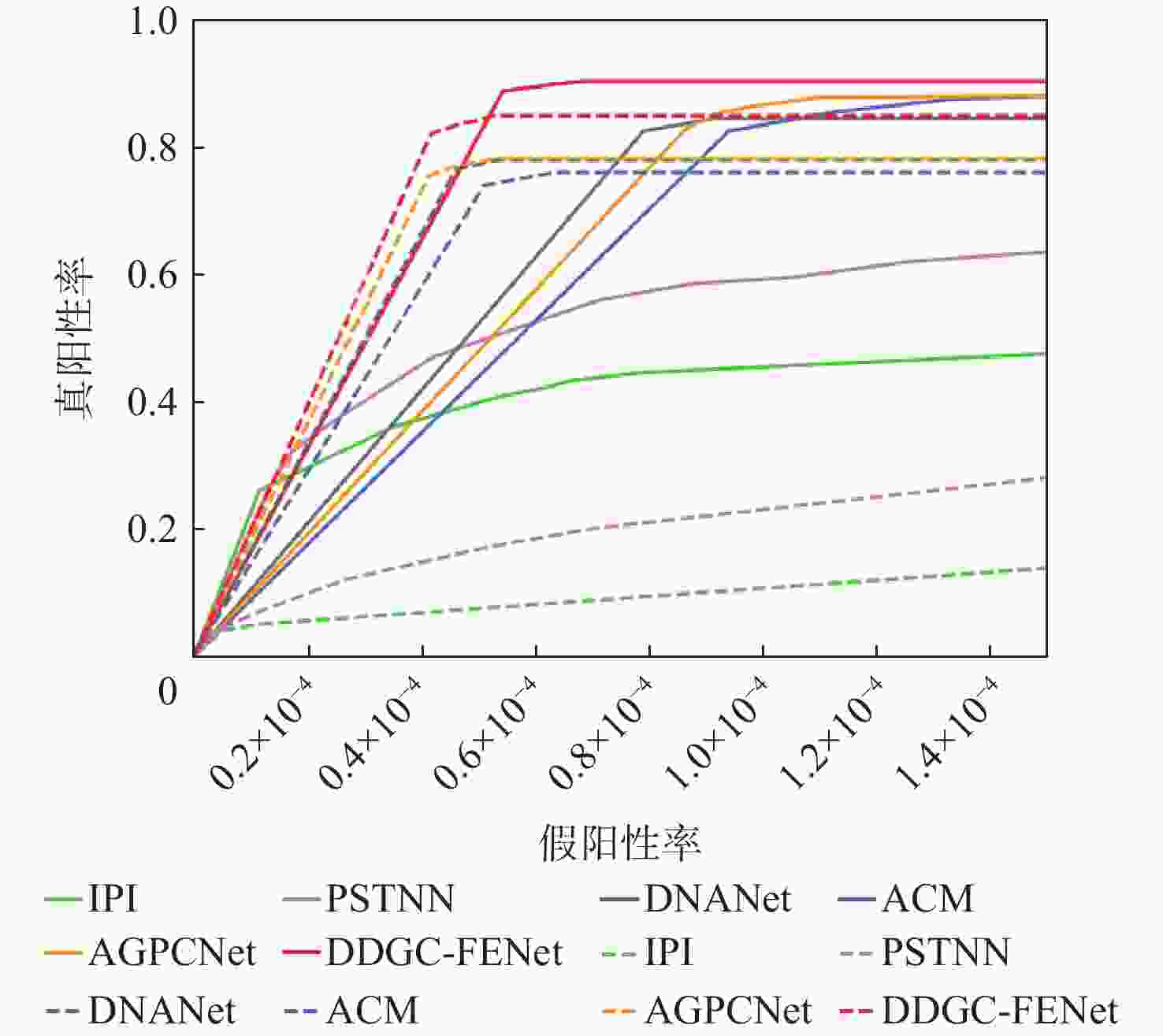

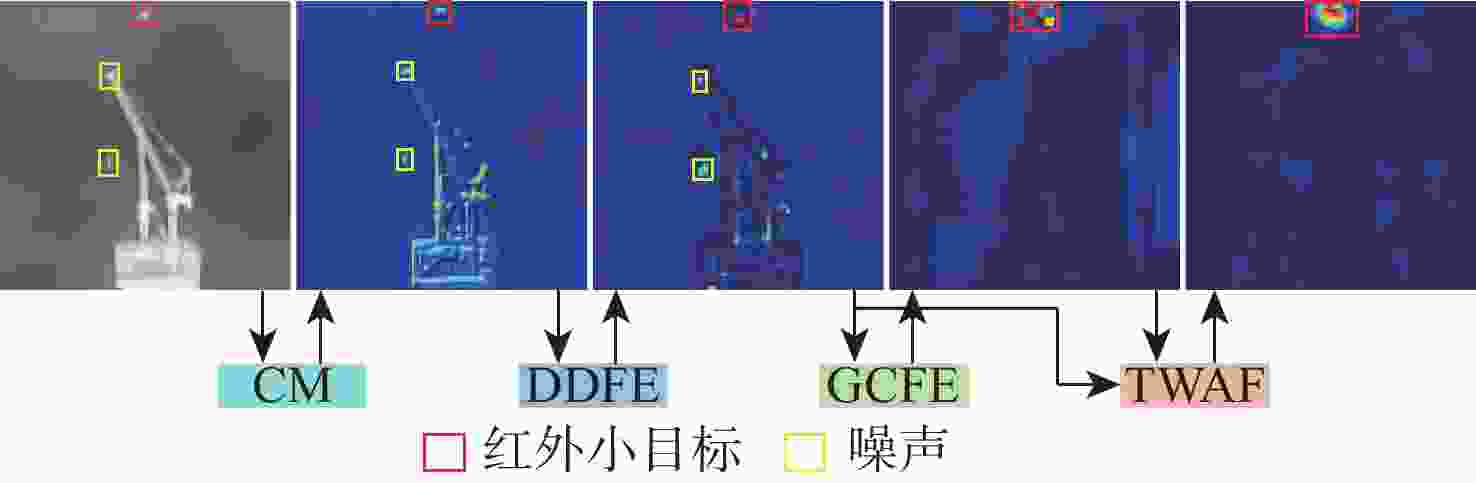

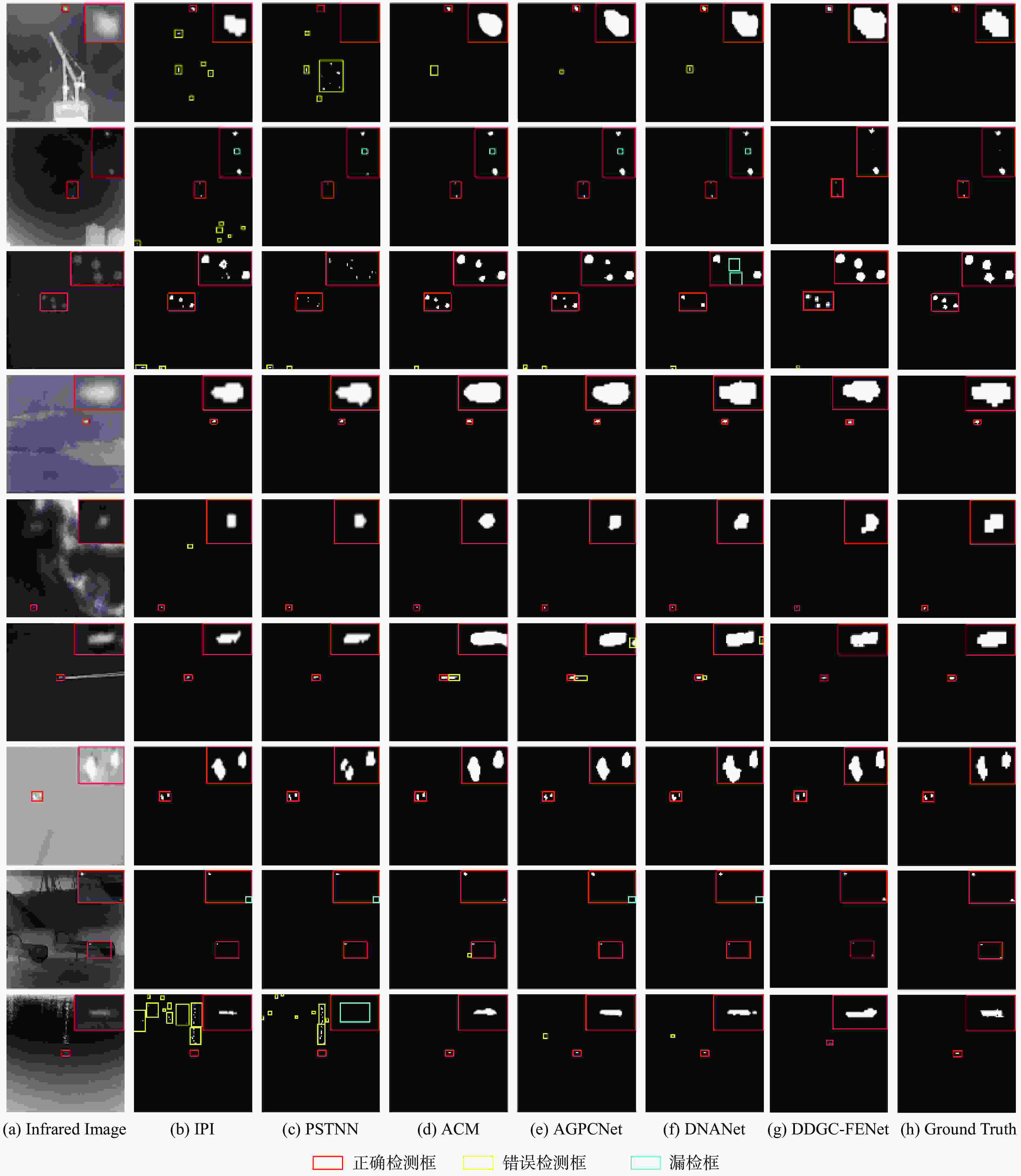

针对单帧红外小目标检测(ISTD)中存在的2个固有问题:小目标缺乏颜色、纹理和形状等局部信息;在检测模型通过连续下采样获取高级语义信息和全局感受野的过程中,小目标极易丢失,提出一种准确、快速的双域和全局上下文特征提取网络(DDGC-FENet)。该模型包括双域特征提取(DDFE)模块和全局上下文特征提取(GCFE)模块。DDFE模块同时在空间域和频域学习小目标与背景的局部对比信息,以此将目标与背景分离开来。GCFE模块可以对经多次下采样的特征图进行全局建模,以提取全局上下文,防止目标特征在网络深层丢失。此外,模型还使用双向注意力融合模块(TWAF)从行和列2个方向融合低级与高级特征。在多个公开数据集上进行实验,结果表明,所提方法在平均交并比、归一化交并比和

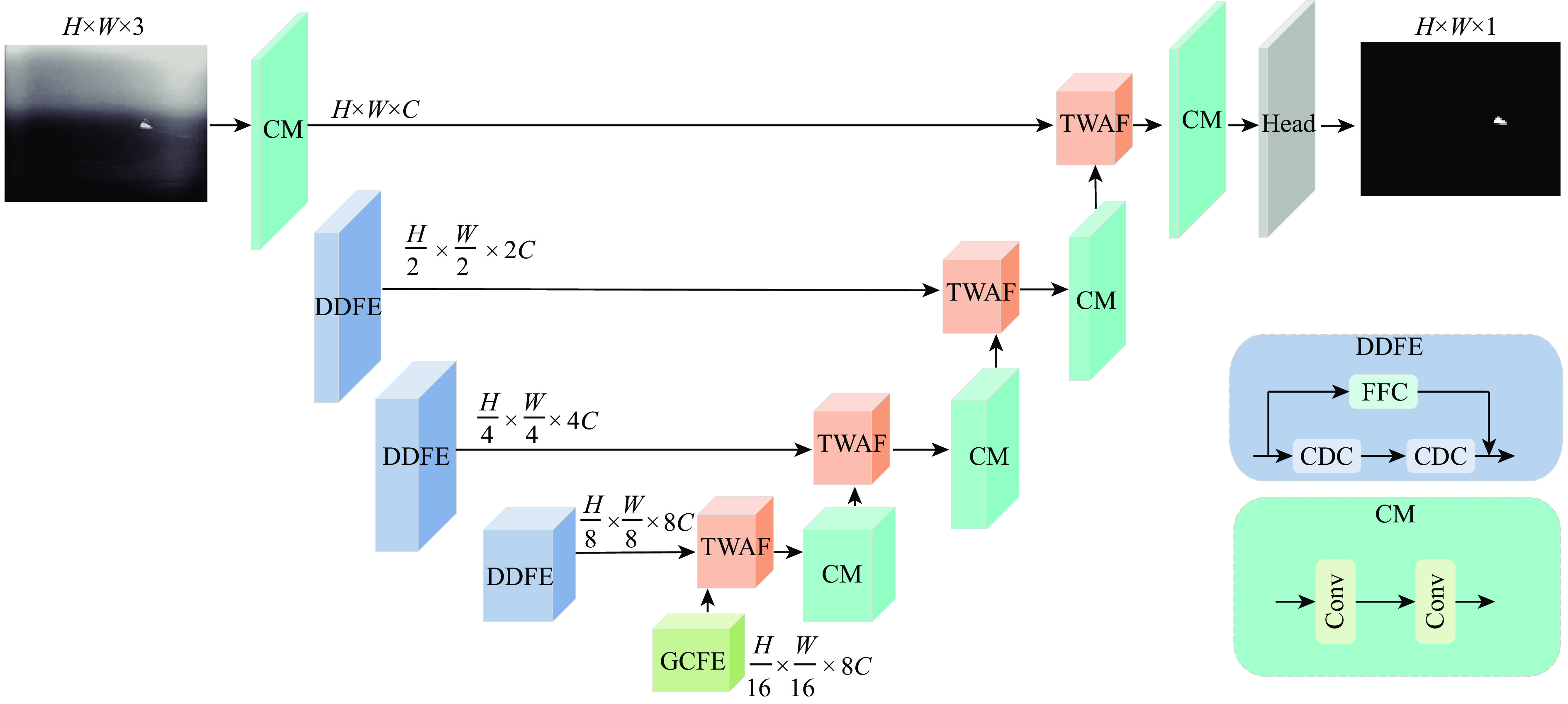

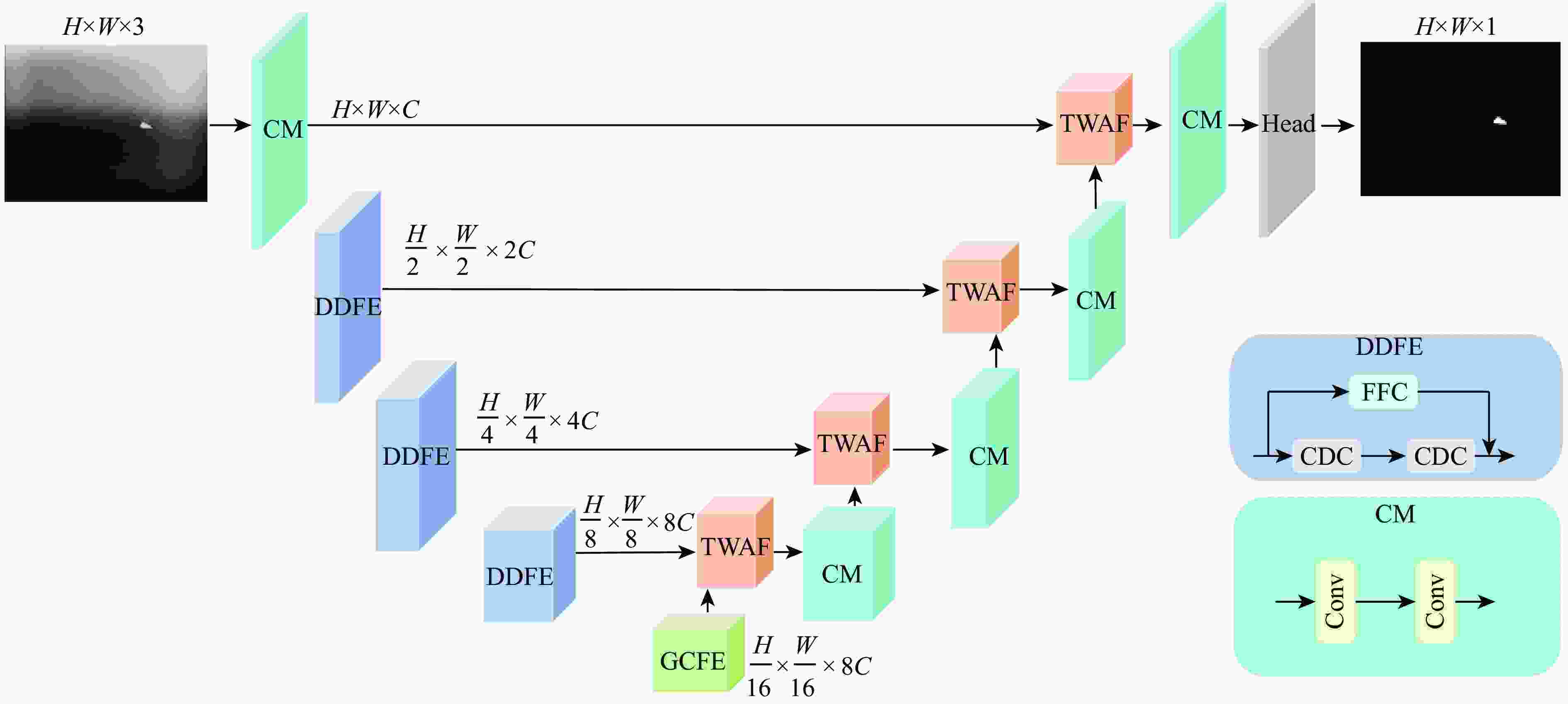

F 1指标上明显优于AGPCNet、DNANet、ISNet等目前较先进的方法。Abstract:Aiming at two inherent problems in single frame infrared small target detection (ISTD): The small target lacks local information such as color, texture and shape; The small targets are readily lost during the continuous down-sampling process that yields high-level semantic information and the global receptive field. A double-domain and global context feature extraction network (DDGC-FENet) that is both precise and quick is suggested. The model includes a dual-domain feature extraction (DDFE) module and a global context feature extraction (GCFE) module. The DDFE module simultaneously learns the local contrast information of the small target and the background in the spatial domain and the frequency domain, so as to separate the target from the background. The GCFE module can globally model the feature map after multiple down-sampling to extract the global context and prevent the loss of target features in the deep layer of the network. Furthermore, the model fuses low-level and high-level features from both row and column directions using a two-way attention fusion (TWAF) module. The suggested approach outperforms cutting-edge techniques like AGPCNet, DNANet, and ISNet in terms of mIoU, nIoU, and F1, according to experiments conducted on a number of public datasets.
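The abstract names mIoU, nIoU, and F1 without defining them. The sketch below shows one way these three metrics could be computed from binary masks, assuming the definitions commonly used in the ISTD literature: IoU accumulated over all pixels of the dataset, nIoU as the mean of per-image IoU, and pixel-level F1. The function names, the mask representation, and the handling of empty images are illustrative assumptions and are not taken from the paper.

```python
from typing import Dict, List, Sequence, Tuple

Mask = Sequence[Sequence[int]]  # binary mask given as rows of 0/1 values


def confusion_counts(pred: Mask, target: Mask) -> Tuple[int, int, int]:
    """Return (true positives, false positives, false negatives) for one image."""
    tp = fp = fn = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p and t:
                tp += 1
            elif p:
                fp += 1
            elif t:
                fn += 1
    return tp, fp, fn


def evaluate(preds: List[Mask], targets: List[Mask]) -> Dict[str, float]:
    """Compute dataset-level IoU, per-image normalized IoU, and pixel-level F1."""
    if not preds or len(preds) != len(targets):
        raise ValueError("preds and targets must be non-empty and equally long")

    total_tp = total_fp = total_fn = 0
    per_image_iou = []
    for pred, target in zip(preds, targets):
        tp, fp, fn = confusion_counts(pred, target)
        total_tp, total_fp, total_fn = total_tp + tp, total_fp + fp, total_fn + fn
        union = tp + fp + fn
        # Assumption: an image with no predicted and no labeled pixels scores 1.
        per_image_iou.append(tp / union if union else 1.0)

    total_union = total_tp + total_fp + total_fn
    miou = total_tp / total_union if total_union else 1.0
    niou = sum(per_image_iou) / len(per_image_iou)

    predicted = total_tp + total_fp
    labeled = total_tp + total_fn
    precision = total_tp / predicted if predicted else 0.0
    recall = total_tp / labeled if labeled else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"mIoU": miou, "nIoU": niou, "F1": f1}


# Toy example: two 2x2 images, one with a single false-positive pixel.
example_preds = [[[1, 1], [0, 0]], [[0, 1], [0, 0]]]
example_targets = [[[1, 0], [0, 0]], [[0, 1], [0, 0]]]
example_scores = evaluate(example_preds, example_targets)
print({name: round(value, 4) for name, value in example_scores.items()})
```

Because nIoU averages per image, a single badly segmented small target lowers it more than it lowers the pixel-accumulated IoU, which is why the two can rank methods differently.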


Table 1. Parameter settings of different datasets

Table 2. Detection results of different methods on the NUAA and IRSTD1k datasets (%)

| Method | mIoU, NUAA [17] | mIoU, IRSTD1k [20] | nIoU, NUAA [17] | nIoU, IRSTD1k [20] | F1, NUAA [17] | F1, IRSTD1k [20] |
| --- | --- | --- | --- | --- | --- | --- |
| IPI [11] | 57.62 | 14.95 | 63.75 | 34.53 | 73.11 | 26.02 |
| RIPT [13] | 28.36 | 11.37 | 35.87 | 17.51 | 44.24 | 20.40 |
| PSTNN [26] | 51.52 | 15.94 | 62.00 | 32.72 | 67.98 | 27.51 |
| ACM [17] | 72.88 | 63.39 | 72.20 | 60.81 | 84.35 | 77.59 |
| AGPCNet [18] | 77.12 | 68.81 | 75.13 | 66.18 | 87.05 | 81.52 |
| DNANet [19] | 74.91 | 68.87 | 75.10 | 67.53 | 85.65 | 81.57 |
| ISNet [20] | 80.02 | 68.77 | 78.12 | 64.84 | 88.90 | 81.47 |
| DDGC-FENet | **81.24** | **72.58** | **80.03** | **68.87** | **89.65** | **83.92** |

Note: bold values indicate the best result.

Table 3. Detection results of different methods on the SIRSTAUG and NUDT datasets (%)

| Method | mIoU, SIRSTAUG [18] | mIoU, NUDT [19] | nIoU, SIRSTAUG [18] | nIoU, NUDT [19] | F1, SIRSTAUG [18] | F1, NUDT [19] |
| --- | --- | --- | --- | --- | --- | --- |
| IPI [11] | 37.74 | 37.51 | 45.27 | 48.39 | 54.75 | 54.55 |
| RIPT [13] | 24.15 | 29.17 | 33.40 | 36.13 | 38.91 | 45.19 |
| PSTNN [26] | 19.03 | 27.72 | 27.08 | 39.80 | 32.11 | 43.42 |
| ACM [17] | 73.84 | 68.48 | 69.83 | 69.26 | 84.95 | 81.29 |
| AGPCNet [18] | 74.70 | 88.67 | 71.47 | 87.40 | 85.50 | 93.95 |
| DNANet [19] | 74.90 | 92.67 | 70.25 | 92.05 | 85.66 | 96.17 |
| ISNet [20] | 74.96 | 92.01 | 71.27 | 91.63 | 85.62 | 95.86 |
| DDGC-FENet | **76.53** | **93.28** | **71.96** | **93.34** | **86.71** | **96.52** |

Note: bold values indicate the best result.

Table 4. Performance comparison of different methods on the NUAA dataset

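Table 5. Ablation results of the proposed model (%)

| U-Net: DDFE | U-Net: GCFE | U-Net: TWAF | mIoU | nIoU | F1 |
| --- | --- | --- | --- | --- | --- |
| × | × | × | 76.45 | 77.24 | 86.67 |
| √ | × | × | 79.99 | 78.11 | 88.88 |
| × | √ | × | 80.05 | 78.20 | 88.92 |
| × | × | √ | 76.72 | 77.51 | 86.89 |
| × | √ | √ | 80.37 | 78.47 | 89.12 |
| √ | √ | √ | **81.24** | **80.03** | **89.65** |

Note: bold values indicate the best result.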
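The contribution of each module in Table 5 can be read off as the difference between a row and the all-× baseline row. The sketch below does that arithmetic with the table values copied as given; the dictionary layout and the function name `gains_over_baseline` are illustrative choices, not something the paper defines.

```python
from typing import Dict, Tuple

Config = Tuple[bool, bool, bool]      # (DDFE, GCFE, TWAF) enabled flags
Scores = Tuple[float, float, float]   # (mIoU, nIoU, F1) in percent

# Rows of Table 5, keyed by which modules are enabled.
ABLATION: Dict[Config, Scores] = {
    (False, False, False): (76.45, 77.24, 86.67),
    (True, False, False): (79.99, 78.11, 88.88),
    (False, True, False): (80.05, 78.20, 88.92),
    (False, False, True): (76.72, 77.51, 86.89),
    (False, True, True): (80.37, 78.47, 89.12),
    (True, True, True): (81.24, 80.03, 89.65),
}
BASELINE: Config = (False, False, False)


def gains_over_baseline(table: Dict[Config, Scores]) -> Dict[Config, Scores]:
    """Return each configuration's improvement over the baseline, in points."""
    base = table[BASELINE]
    return {
        config: tuple(round(value - ref, 2) for value, ref in zip(scores, base))
        for config, scores in table.items()
        if config != BASELINE
    }


for config, gain in gains_over_baseline(ABLATION).items():
    modules = [name for name, on in zip(("DDFE", "GCFE", "TWAF"), config) if on]
    print("+".join(modules), gain)
```

Read this way, DDFE alone and GCFE alone each add roughly 3.5 to 3.6 points of mIoU over the baseline, TWAF alone adds 0.27, and the full model adds 4.79.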

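Table 6. Ablation results for the number of iterations N and the number of convolutional layers n of the hybrid dilated convolution (%)

| Parameter | Value | mIoU | nIoU | F1 |
| --- | --- | --- | --- | --- |
| Number of iterations N | 2 | 79.87 | 78.08 | 88.81 |
| Number of iterations N | 4 | **80.05** | **78.20** | **88.92** |
| Number of iterations N | 6 | 79.74 | 77.68 | 88.73 |
| Number of convolutional layers n | 2 | 80.00 | 78.06 | 88.89 |
| Number of convolutional layers n | 4 | **80.05** | **78.20** | **88.92** |
| Number of convolutional layers n | 6 | 79.88 | 78.17 | 88.82 |

Note: bold values indicate the best result.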

Table 7. Ablation results for the input dimension C (%)

| C | mIoU | nIoU | F1 |
| --- | --- | --- | --- |
| 16 | 80.41 | 79.25 | 89.14 |
| 24 | 80.92 | **80.03** | 89.46 |
| 32 | **81.24** | **80.03** | **89.65** |
| 48 | 81.07 | 79.94 | 89.55 |

Note: bold values indicate the best result.

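Table 8. Ablation results for the hyperparameter θ (%)

| θ | mIoU | nIoU | F1 |
| --- | --- | --- | --- |
| 0.0 | 80.17 | 78.51 | 89.00 |
| 0.3 | 80.94 | 79.28 | 89.46 |
| 0.5 | **81.24** | **80.03** | **89.65** |
| 0.7 | 81.08 | 79.13 | 89.55 |
| 1.0 | 80.99 | 78.98 | 89.50 |

Note: bold values indicate the best result.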

[1] HUANG S Q, LIU Y H, HE Y M, et al. Structure-adaptive clutter suppression for infrared small target detection: chain-growth filtering[J]. Remote Sensing, 2020, 12(1): 47.
[2] TEUTSCH M, KRÜGER W. Classification of small boats in infrared images for maritime surveillance[C]//Proceedings of the 2010 International WaterSide Security Conference. Piscataway: IEEE Press, 2010: 1-7.
[3] RAWAT S S, VERMA S K, KUMAR Y. Review on recent development in infrared small target detection algorithms[J]. Procedia Computer Science, 2020, 167: 2496-2505.
[4] WANG X Y, PENG Z M, ZHANG P, et al. Infrared small target detection via nonnegativity-constrained variational mode decomposition[J]. IEEE Geoscience and Remote Sensing Letters, 2017, 14(10): 1700-1704.
[5] DESHPANDE S D, ER M H, VENKATESWARLU R, et al. Max-mean and max-median filters for detection of small-targets[C]//Proceedings of the Conference on Signal and Data Processing of Small Targets 1999. Bellingham: SPIE, 1999: 74-83.
[6] ANJU T S, NELWIN RAJ N R. Shearlet transform based image denoising using histogram thresholding[C]//Proceedings of the 2016 International Conference on Communication Systems and Networks. Piscataway: IEEE Press, 2016: 162-166.
[7] HAN J H, LIANG K, ZHOU B, et al. Infrared small target detection utilizing the multiscale relative local contrast measure[J]. IEEE Geoscience and Remote Sensing Letters, 2018, 15(4): 612-616.
[8] CHEN Y W, XIN Y H. An efficient infrared small target detection method based on visual contrast mechanism[J]. IEEE Geoscience and Remote Sensing Letters, 2016, 13(7): 962-966.
[9] DENG H, SUN X P, LIU M L, et al. Small infrared target detection based on weighted local difference measure[J]. IEEE Transactions on Geoscience and Remote Sensing, 2016, 54(7): 4204-4214.
[10] HAN J H, LIU S B, QIN G, et al. A local contrast method combined with adaptive background estimation for infrared small target detection[J]. IEEE Geoscience and Remote Sensing Letters, 2019, 16(9): 1442-1446.
[11] GAO C Q, MENG D Y, YANG Y, et al. Infrared patch-image model for small target detection in a single image[J]. IEEE Transactions on Image Processing, 2013, 22(12): 4996-5009.
[12] WANG X Y, PENG Z M, KONG D H, et al. Infrared dim and small target detection based on stable multisubspace learning in heterogeneous scene[J]. IEEE Transactions on Geoscience and Remote Sensing, 2017, 55(10): 5481-5493.
[13] DAI Y M, WU Y Q. Reweighted infrared patch-tensor model with both nonlocal and local priors for single-frame small target detection[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2017, 10(8): 3752-3767.
[14] ZHU H, LIU S M, DENG L Z, et al. Infrared small target detection via low-rank tensor completion with Top-Hat regularization[J]. IEEE Transactions on Geoscience and Remote Sensing, 2020, 58(2): 1004-1016.
[15] LECUN Y, BENGIO Y, HINTON G. Deep learning[J]. Nature, 2015, 521(7553): 436-444.
[16] WANG H, ZHOU L P, WANG L. Miss detection vs. false alarm: adversarial learning for small object segmentation in infrared images[C]//Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2019: 8508-8517.
[17] DAI Y M, WU Y Q, ZHOU F, et al. Asymmetric contextual modulation for infrared small target detection[C]//Proceedings of the 2021 IEEE Winter Conference on Applications of Computer Vision. Piscataway: IEEE Press, 2021: 949-958.
[18] ZHANG T F, LI L, CAO S Y, et al. Attention-guided pyramid context networks for detecting infrared small target under complex background[J]. IEEE Transactions on Aerospace and Electronic Systems, 2023, 59(4): 4250-4261.
[19] LI B Y, XIAO C, WANG L G, et al. Dense nested attention network for infrared small target detection[J]. IEEE Transactions on Image Processing, 2022, 32: 1745-1758.
[20] ZHANG M J, ZHANG R, YANG Y X, et al. ISNet: shape matters for infrared small target detection[C]//Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2022: 867-876.
[21] RONNEBERGER O, FISCHER P, BROX T. U-Net: convolutional networks for biomedical image segmentation[C]//Proceedings of the Medical Image Computing and Computer-Assisted Intervention 2015. Berlin: Springer, 2015: 234-241.
[22] YU Z T, ZHAO C X, WANG Z Z, et al. Searching central difference convolutional networks for face anti-spoofing[C]//Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 5294-5304.
[23] WANG P Q, CHEN P F, YUAN Y, et al. Understanding convolution for semantic segmentation[C]//Proceedings of the 2018 IEEE Winter Conference on Applications of Computer Vision. Piscataway: IEEE Press, 2018: 1451-1460.
[24] HE K M, ZHANG X Y, REN S Q, et al. Deep residual learning for image recognition[C]//Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2016: 770-778.
[25] RAHMAN M A, WANG Y. Optimizing intersection-over-union in deep neural networks for image segmentation[C]//Proceedings of the International Symposium on Visual Computing. Berlin: Springer, 2016: 234-244.
[26] ZHANG L D, PENG Z M. Infrared small target detection based on partial sum of the tensor nuclear norm[J]. Remote Sensing, 2019, 11(4): 382.
