
Abstract:

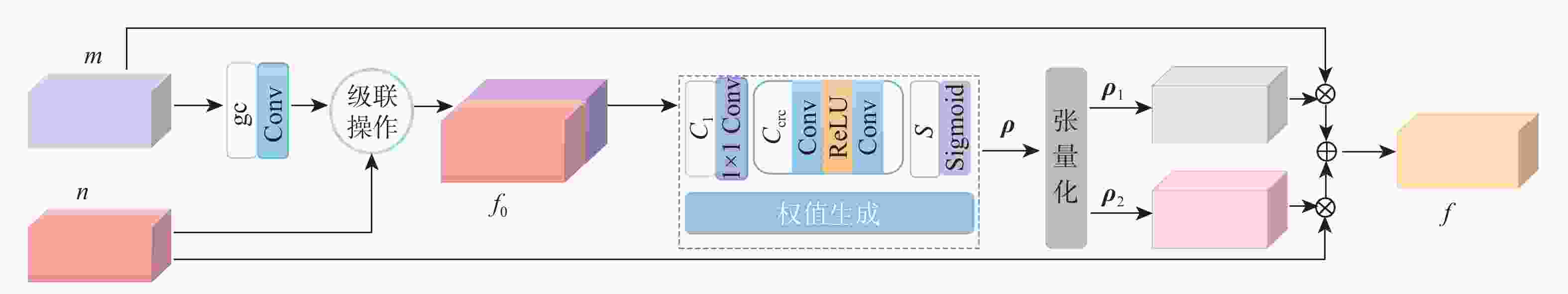

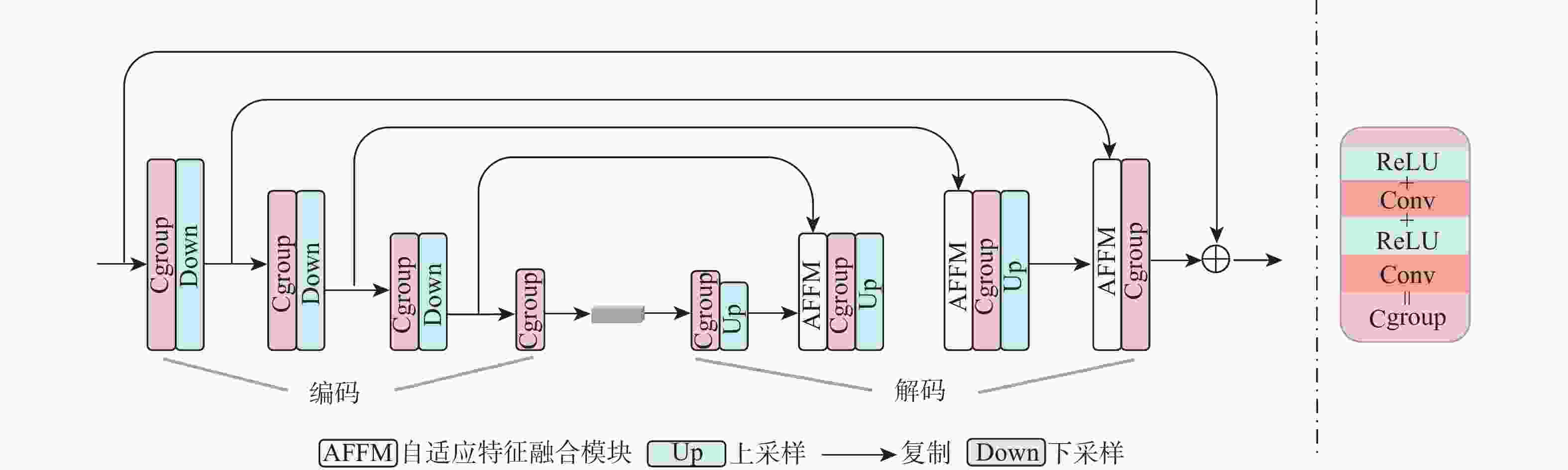

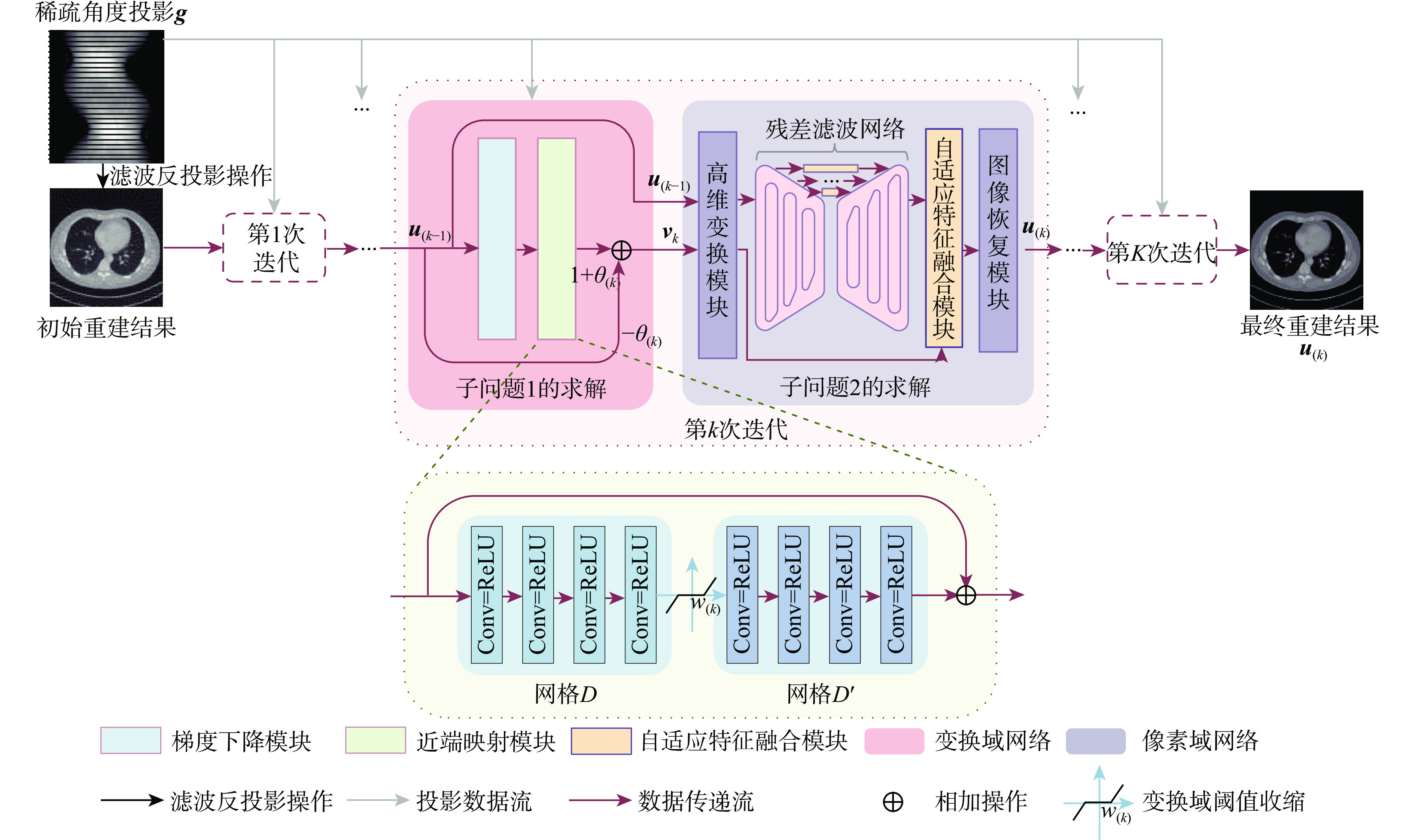

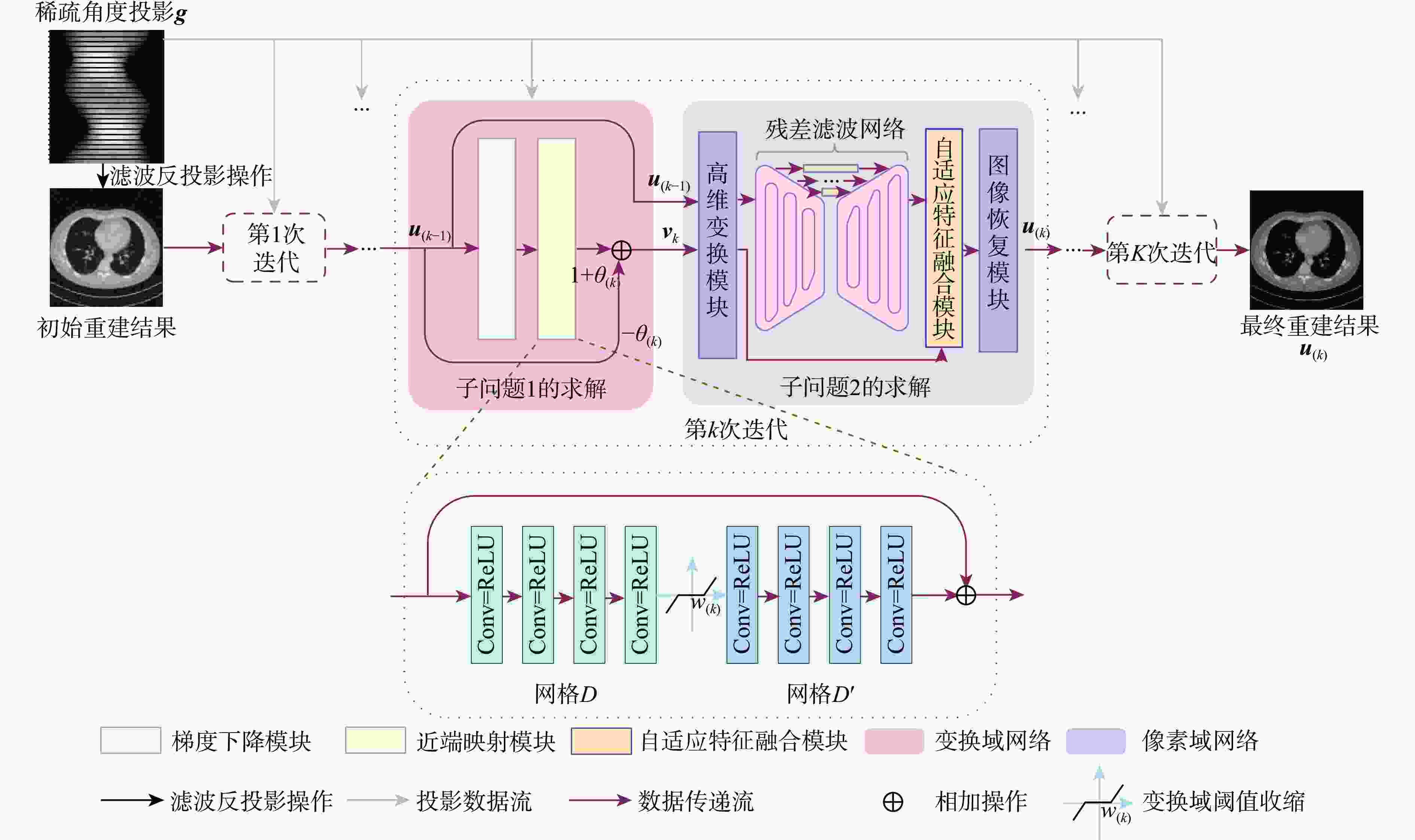

Deep unfolding networks have attracted wide attention because of their high interpretability and strong learning capability. The regularization terms in most existing CT image reconstruction algorithms focus on information from a single domain, so the reconstructed images often lose information, for example through blurred edges. To address this, a deep unfolding network based on a sparse transform prior constraint is proposed for sparse-view CT reconstruction. Because both pixel-domain and transform-domain information are important for image reconstruction, two complementary regularization terms are constructed, a transform-domain sparsity term and a pixel-domain consistency term, and the objective function for sparse-view CT reconstruction is redesigned accordingly. The objective function is solved by iterative optimization, and the resulting series of constraint relationships is mapped into a new deep unfolding network for iterative reconstruction of low-dose CT images. Experimental results show that, compared with the classical FISTA algorithm, the proposed algorithm substantially improves the average peak signal-to-noise ratio (PSNR) and visual information fidelity (VIF).
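The abstract names the classical FISTA algorithm as the comparison baseline. As a rough illustration only, the following is a minimal NumPy sketch of FISTA applied to an L1-regularized least-squares problem. The random matrix `A`, the toy sparse signal `x_true`, and the values of `lam` and `n_iter` are assumptions made for this example; it is not the proposed network and does not use a CT projection operator.

```python
import numpy as np

def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (element-wise shrinkage)."""
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)

def objective(A, y, x, lam):
    """0.5 * ||A x - y||^2 + lam * ||x||_1."""
    return 0.5 * np.sum((A @ x - y) ** 2) + lam * np.sum(np.abs(x))

def fista(A, y, lam, n_iter=200):
    """FISTA for min_x 0.5 * ||A x - y||^2 + lam * ||x||_1."""
    # Lipschitz constant of the data-fidelity gradient: largest singular value squared.
    L = np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    z = x.copy()   # extrapolated (momentum) point
    t = 1.0
    for _ in range(n_iter):
        grad = A.T @ (A @ z - y)                       # gradient step on data fidelity
        x_next = soft_threshold(z - grad / L, lam / L)  # shrinkage step
        t_next = (1.0 + np.sqrt(1.0 + 4.0 * t * t)) / 2.0
        z = x_next + ((t - 1.0) / t_next) * (x_next - x)
        x, t = x_next, t_next
    return x

# Toy problem (hypothetical data): recover a 3-sparse vector from 40 measurements.
rng = np.random.default_rng(0)
A = rng.standard_normal((40, 100)) / np.sqrt(40)
x_true = np.zeros(100)
x_true[[5, 37, 80]] = [1.0, -2.0, 1.5]
y = A @ x_true
lam = 0.01

x_hat = fista(A, y, lam)
start_obj = objective(A, y, np.zeros(100), lam)
final_obj = objective(A, y, x_hat, lam)
```

A deep unfolding network of the kind described in the abstract typically replaces a fixed number of such iterations with network layers whose step sizes, thresholds, and transforms are learned; the sketch above only shows the hand-tuned iteration that such networks start from.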


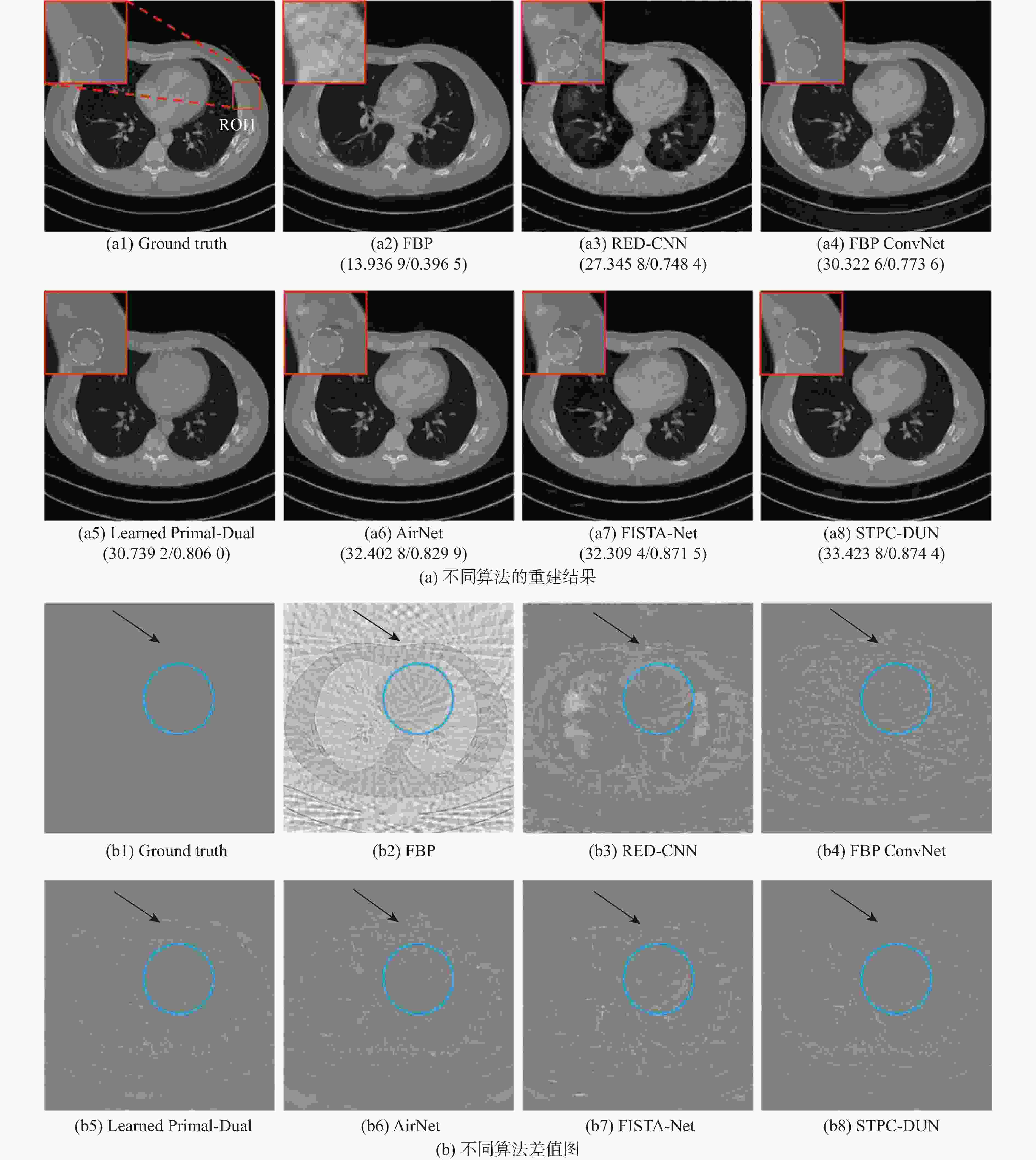

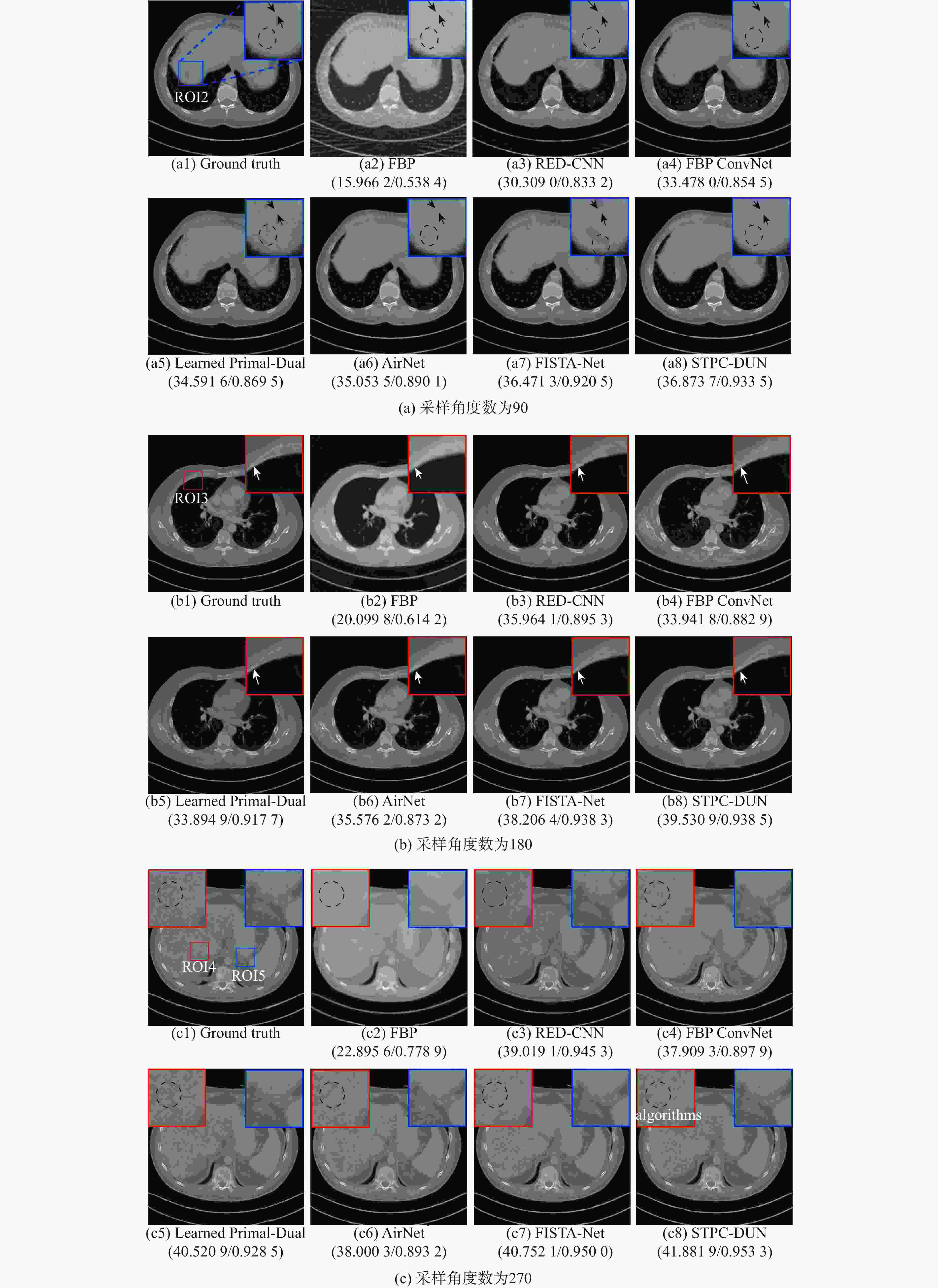

Table 1. Quantitative results of the different reconstruction algorithms at four numbers of sampling views

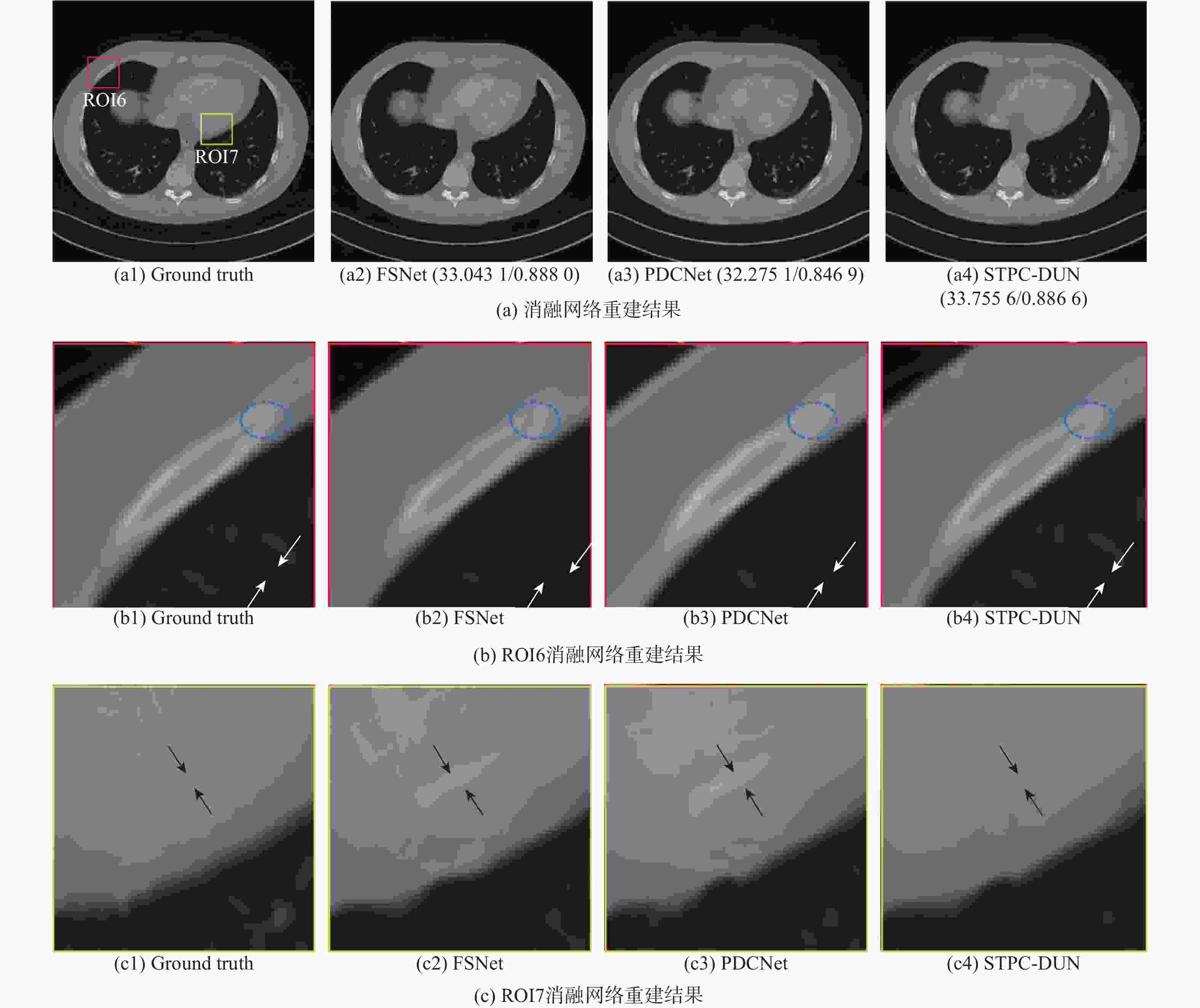

| Number of views | Reconstruction algorithm | SSIM (mean ± SD) ↑ | PSNR (mean ± SD)/dB ↑ | VIF (mean ± SD) ↑ | MSE (mean ± SD) ↓ |
| --- | --- | --- | --- | --- | --- |
| 60 | FBP | 0.4557 ± 0.0512 | 14.5076 ± 1.1519 | 0.2234 ± 0.0174 | 2386.2 ± 646.8316 |
| 60 | RED-CNN[44] | 0.7775 ± 0.0355 | 27.9191 ± 2.2010 | 0.2792 ± 0.0161 | 121.3903 ± 79.5000 |
| 60 | FBP ConvNet[45] | 0.8078 ± 0.0294 | 30.8149 ± 1.6828 | 0.3346 ± 0.0197 | 58.1162 ± 23.9885 |
| 60 | Learned Primal-Dual[23] | 0.8475 ± 0.0144 | 31.3078 ± 1.3920 | 0.3445 ± 0.0186 | 50.8037 ± 18.5659 |
| 60 | AirNet[46] | 0.8434 ± 0.0229 | 32.0765 ± 1.7189 | 0.3744 ± 0.0214 | 44.1415 ± 23.2134 |
| 60 | FISTA-Net[39] | 0.8865 ± 0.0171 | 31.8418 ± 2.0896 | 0.3413 ± 0.0177 | 48.3184 ± 27.7186 |
| 60 | STPC-DUN | **0.8927 ± 0.0155** | **33.0876 ± 1.8421** | **0.5152 ± 0.0398** | **35.4286 ± 19.8697** |
| 90 | FBP | 0.5181 ± 0.0455 | 16.9089 ± 1.1465 | 0.2861 ± 0.0193 | 1373.2 ± 381.6216 |
| 90 | RED-CNN[44] | 0.8348 ± 0.0273 | 31.5023 ± 1.8976 | 0.3667 ± 0.0170 | 50.8892 ± 25.9904 |
| 90 | FBP ConvNet[45] | 0.8443 ± 0.0243 | 32.6084 ± 1.8659 | 0.3932 ± 0.0224 | 39.4103 ± 19.6849 |
| 90 | Learned Primal-Dual[23] | 0.8456 ± 0.0245 | 32.7864 ± 1.9438 | 0.5123 ± 0.0261 | 39.4386 ± 33.4385 |
| 90 | AirNet[46] | 0.8880 ± 0.0201 | 35.1691 ± 1.7574 | 0.4933 ± 0.0199 | 21.7726 ± 11.9778 |
| 90 | FISTA-Net[39] | 0.9073 ± 0.0128 | 34.5148 ± 1.8938 | 0.3957 ± 0.0192 | 25.6160 ± 14.1523 |
| 90 | STPC-DUN | **0.9125 ± 0.0169** | **35.7043 ± 1.6713** | **0.5859 ± 0.0360** | **18.9724 ± 8.8451** |
| 180 | FBP | 0.6452 ± 0.0423 | 20.9855 ± 2.0037 | 0.3567 ± 0.0251 | 576.4639 ± 280.3785 |
| 180 | RED-CNN[44] | 0.9012 ± 0.0159 | 35.2431 ± 1.6113 | 0.5601 ± 0.0188 | 20.9099 ± 8.9502 |
| 180 | FBP ConvNet[45] | 0.9016 ± 0.0215 | 36.5055 ± 2.0669 | 0.6022 ± 0.0289 | 16.3913 ± 9.1723 |
| 180 | Learned Primal-Dual[23] | 0.9413 ± 0.0174 | 36.7753 ± 2.6183 | 0.5958 ± 0.0259 | 16.9274 ± 13.9621 |
| 180 | AirNet[46] | 0.9223 ± 0.0215 | 38.0494 ± 2.0437 | 0.6384 ± 0.0253 | 11.6899 ± 8.4169 |
| 180 | FISTA-Net[39] | 0.9408 ± 0.0090 | 37.1486 ± 2.1449 | 0.5500 ± 0.0252 | 14.3909 ± 8.8337 |
| 180 | STPC-DUN | **0.9460 ± 0.0082** | **39.1352 ± 1.5893** | **0.7603 ± 0.0380** | **8.5092 ± 3.4297** |
| 270 | FBP | 0.6627 ± 0.0308 | 21.9470 ± 1.6608 | 0.4109 ± 0.0210 | 447.6719 ± 181.3476 |
| 270 | RED-CNN[44] | 0.9443 ± 0.0089 | 38.6660 ± 1.4345 | 0.7015 ± 0.0189 | 9.3455 ± 3.3080 |
| 270 | FBP ConvNet[45] | 0.9339 ± 0.0228 | 38.9396 ± 2.5118 | 0.7026 ± 0.0294 | 10.1832 ± 8.7321 |
| 270 | Learned Primal-Dual[23] | 0.9449 ± 0.0207 | 39.5926 ± 1.9604 | 0.7279 ± 0.0232 | 7.9585 ± 4.3854 |
| 270 | AirNet[46] | 0.9441 ± 0.0203 | 40.2075 ± 2.2539 | 0.7585 ± 0.0218 | 7.2035 ± 4.7297 |
| 270 | FISTA-Net[39] | 0.9583 ± 0.0071 | 40.1174 ± 1.7459 | 0.6765 ± 0.0222 | 6.9175 ± 3.3517 |
| 270 | STPC-DUN | **0.9637 ± 0.0076** | **40.9253 ± 1.6207** | **0.8256 ± 0.0384** | **5.6504 ± 2.3047** |

Note: "↑" indicates that a larger value means the image is closer to the ground truth; "↓" indicates that a smaller value means the image is closer to the ground truth; bold values are the best results.

Table 2. Average quantitative values for reconstruction results by different ablation networks

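| Number of views | FSNet regularization | PDCNet regularization | PSNR/dB ↑ | SSIM ↑ | VIF ↑ | MSE ↓ |
| --- | --- | --- | --- | --- | --- | --- |
| 90 | √ | × | 34.7607 | 0.8946 | 0.5360 | 24.7216 |
| 90 | × | √ | 35.1753 | 0.9056 | 0.5662 | 21.1300 |
| 90 | √ | √ | 35.7043 | 0.9125 | 0.5859 | 18.9724 |
| 180 | √ | × | 37.7035 | 0.9419 | 0.6871 | 13.2066 |
| 180 | × | √ | 37.6831 | 0.9242 | 0.7152 | 11.9676 |
| 180 | √ | √ | 39.1352 | 0.9460 | 0.7603 | 8.5092 |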
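The tables report PSNR and MSE as averages over test images. As a hedged aid to reading those columns, the sketch below shows the standard relation PSNR = 10·log10(peak² / MSE) and why the mean of per-image PSNR values is generally not the PSNR of the mean MSE. The peak value of 255 and the two per-image MSE values are assumptions for illustration only; the source does not state the intensity range or the per-image results.

```python
import math

def mse(reference, estimate):
    """Mean squared error between two equally sized sequences of pixel values."""
    if len(reference) != len(estimate):
        raise ValueError("inputs must have the same length")
    return sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)

def psnr_from_mse(mse_value, peak=255.0):
    """PSNR in dB for a given MSE; `peak` (255, i.e. 8-bit) is an assumed range."""
    return 10.0 * math.log10(peak ** 2 / mse_value)

# Hypothetical per-image MSE values for two test images.
per_image_mse = [20.0, 60.0]

# Average of the per-image PSNR values.
mean_of_psnr = sum(psnr_from_mse(m) for m in per_image_mse) / len(per_image_mse)

# PSNR computed from the averaged MSE.
psnr_of_mean_mse = psnr_from_mse(sum(per_image_mse) / len(per_image_mse))

# Because -log10 is convex, the first quantity is never smaller than the second.
gap_db = mean_of_psnr - psnr_of_mean_mse
```

Under these assumptions, a small positive gap between a reported mean PSNR and the PSNR implied by the reported mean MSE is expected rather than a sign of inconsistency.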

[1] JI Q, HONG Y. Medical imaging physics[M]. 3rd ed. Beijing: People’s Medical Publishing House, 2010: 8-15 (in Chinese).

[2] YAN B, LI L. CT image reconstruction algorithm[M]. Beijing: Science Press, 2014: 12-13 (in Chinese).

[3] LUO L M, HU Y N, CHEN Y. Research status and prospect for low-dose CT imaging[J]. Journal of Data Acquisition and Processing, 2015, 30(1): 24-34 (in Chinese).

[4] SHANGGUAN H. Study on statistical iterative reconstruction methods for low-dose X-ray CT[D]. Taiyuan: North University of China, 2016: 6-19 (in Chinese).

[5] ZHANG H, WANG J, MA J H, et al. Statistical models and regularization strategies in statistical image reconstruction of low-dose X-ray CT: a survey[EB/OL]. (2015-05-14)[2024-01-06]. https://arxiv.org/abs/1412.1732.

[6] SIDKY E Y, KAO C M, PAN X C. Accurate image reconstruction from few-views and limited-angle data in divergent-beam CT[J]. Journal of X-Ray Science and Technology: Clinical Applications of Diagnosis and Therapeutics, 2006, 14(2): 119-139.

[7] DO S, KARL W C, KALRA M K, et al. Clinical low dose CT image reconstruction using high-order total variation techniques[J]. Medical Imaging 2010: Physics of Medical Imaging, 2010, 7622: 76225D.

[8] LIU Y, LIANG Z R, MA J H, et al. Total variation-Stokes strategy for sparse-view X-ray CT image reconstruction[J]. IEEE Transactions on Medical Imaging, 2014, 33(3): 749-763.

[9] GARDUÑO E, HERMAN G T, DAVIDI R. Reconstruction from a few projections by ℓ1-minimization of the Haar transform[J]. Inverse Problems, 2011, 27(5): 055006.

[10] JIA X, DONG B, LOU Y F, et al. GPU-based iterative cone-beam CT reconstruction using tight frame regularization[J]. Physics in Medicine and Biology, 2011, 56(13): 3787-3807.

[11] VANDEGHINSTE B, GOOSSENS B, VAN HOLEN R, et al. Iterative CT reconstruction using shearlet-based regularization[J]. IEEE Transactions on Nuclear Science, 2013, 60(5): 3305-3317.

[12] WU W W, LIU F L, ZHANG Y B, et al. Non-local low-rank cube-based tensor factorization for spectral CT reconstruction[J]. IEEE Transactions on Medical Imaging, 2019, 38(4): 1079-1093.

[13] XU Q, YU H Y, MOU X Q, et al. Low-dose X-ray CT reconstruction via dictionary learning[J]. IEEE Transactions on Medical Imaging, 2012, 31(9): 1682-1697.

[14] ZHU B, LIU J Z, CAULEY S F, et al. Image reconstruction by domain-transform manifold learning[J]. Nature, 2018, 555(7697): 487-492.

[15] HE J, WANG Y B, MA J H. Radon inversion via deep learning[J]. IEEE Transactions on Medical Imaging, 2020, 39(6): 2076-2087.

[16] HE J, CHEN S L, ZHANG H, et al. Downsampled imaging geometric modeling for accurate CT reconstruction via deep learning[J]. IEEE Transactions on Medical Imaging, 2021, 40(11): 2976-2985.

[17] LI Y S, LI K, ZHANG C Z, et al. Learning to reconstruct computed tomography images directly from sinogram data under a variety of data acquisition conditions[J]. IEEE Transactions on Medical Imaging, 2019, 38(10): 2469-2481.

[18] WANG B, LIU H F. FBP-Net for direct reconstruction of dynamic PET images[J]. Physics in Medicine and Biology, 2020, 65(23): 235008.

[19] ZHOU B, ZHOU S K, DUNCAN J S, et al. Limited view tomographic reconstruction using a cascaded residual dense spatial-channel attention network with projection data fidelity layer[J]. IEEE Transactions on Medical Imaging, 2021, 40(7): 1792-1804.

[20] LIN W A, LIAO H F, PENG C, et al. DuDoNet: dual domain network for CT metal artifact reduction[C]//Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2019: 10504-10513.

[21] ZHOU B, CHEN X C, ZHOU S K, et al. DuDoDR-Net: dual-domain data consistent recurrent network for simultaneous sparse view and metal artifact reduction in computed tomography[J]. Medical Image Analysis, 2022, 75: 102289.

[22] WANG T, LU Z X, YANG Z Y, et al. IDOL-Net: an interactive dual-domain parallel network for CT metal artifact reduction[J]. IEEE Transactions on Radiation and Plasma Medical Sciences, 2022, 6(8): 874-885.

[23] ADLER J, ÖKTEM O. Learned primal-dual reconstruction[J]. IEEE Transactions on Medical Imaging, 2018, 37(6): 1322-1332.

[24] ZHANG K, VAN GOOL L, TIMOFTE R. Deep unfolding network for image super-resolution[C]//Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 3214-3223.

[25] ZHAO L J, WANG X L, ZHANG J J, et al. Boundary-constrained interpretable image reconstruction network for deep compressive sensing[J]. Knowledge-Based Systems, 2023, 275: 110681.

[26] MOU C, WANG Q, ZHANG J. Deep generalized unfolding networks for image restoration[C]//Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2022: 17378-17389.

[27] YANG Y, SUN J, LI H B, et al. ADMM-CSNet: a deep learning approach for image compressive sensing[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2020, 42(3): 521-538.

[28] GENG M F, ZHANG H, LI Z, et al. A reconstruction algorithm for Cherenkov-excited luminescence scanning imaging based on unrolled iterative optimization[J]. Chinese Journal of Lasers, 2023, 50(15): 1507106 (in Chinese).

[29] SONG J C, CHEN B, ZHANG J. Memory-augmented deep unfolding network for compressive sensing[C]//Proceedings of the 29th ACM International Conference on Multimedia. New York: ACM, 2021: 4249-4258.

[30] CHEN H, ZHANG Y, CHEN Y J, et al. LEARN: learned experts’ assessment-based reconstruction network for sparse-data CT[J]. IEEE Transactions on Medical Imaging, 2018, 37(6): 1333-1347.

[31] WU D F, KIM K, EL FAKHRI G, et al. Iterative low-dose CT reconstruction with priors trained by artificial neural network[J]. IEEE Transactions on Medical Imaging, 2017, 36(12): 2479-2486.

[32] KANG E, CHANG W, YOO J, et al. Deep convolutional framelet denosing for low-dose CT via wavelet residual network[J]. IEEE Transactions on Medical Imaging, 2018, 37(6): 1358-1369.

[33] GAO Y F, LIANG Z R, MOORE W, et al. A feasibility study of extracting tissue textures from a previous full-dose CT database as prior knowledge for Bayesian reconstruction of current low-dose CT images[J]. IEEE Transactions on Medical Imaging, 2019, 38(8): 1981-1992.

[34] GUPTA H, JIN K H, NGUYEN H Q, et al. CNN-based projected gradient descent for consistent CT image reconstruction[J]. IEEE Transactions on Medical Imaging, 2018, 37(6): 1440-1453.

[35] CHUN I Y, ZHENG X H, LONG Y, et al. BCD-Net for low-dose CT reconstruction: acceleration, convergence, and generalization[C]//Proceedings of the Medical Image Computing and Computer Assisted Intervention. Berlin: Springer, 2019: 31-40.

[36] SHEN C Y, GONZALEZ Y, CHEN L Y, et al. Intelligent parameter tuning in optimization-based iterative CT reconstruction via deep reinforcement learning[J]. IEEE Transactions on Medical Imaging, 2018, 37(6): 1430-1439.

[37] HAN Z F, SHANGGUAN H, ZHANG X, et al. Advances in research on low-dose CT imaging algorithm based on deep learning[J]. Computerized Tomography Theory and Applications, 2022, 31(1): 117-134 (in Chinese).

[38] GEMAN D, YANG C D. Nonlinear image recovery with half-quadratic regularization[J]. IEEE Transactions on Image Processing, 1995, 4(7): 932-946.

[39] XIANG J X, DONG Y G, YANG Y J. FISTA-Net: learning a fast iterative shrinkage thresholding network for inverse problems in imaging[J]. IEEE Transactions on Medical Imaging, 2021, 40(5): 1329-1339.

[40] BAUER E, KOHAVI R. An empirical comparison of voting classification algorithms: bagging, boosting, and variants[J]. Machine Learning, 1999, 36(1): 105-139.

[41] GOODFELLOW I, BENGIO Y, COURVILLE A. Deep learning[M]. Cambridge: MIT Press, 2016.

[42] KYUNGSANG K, LI Z Q, MIN J H, et al. Low dose CT grand challenge[EB/OL]. (2016-06-15)[2024-01-06]. http://www.aapm.org/GrandChallenge/LowDoseCT/.

[43] ADLER J, KOHR H, ÖKTEM O. Operator discretization library (ODL)[EB/OL]. (2017-09-05)[2024-01-06]. https://github.com/odlgroup/odl.

[44] CHEN H, ZHANG Y, KALRA M K, et al. Low-dose CT with a residual encoder-decoder convolutional neural network[J]. IEEE Transactions on Medical Imaging, 2017, 36(12): 2524-2535.

[45] JIN K H, MCCANN M T, FROUSTEY E, et al. Deep convolutional neural network for inverse problems in imaging[J]. IEEE Transactions on Image Processing, 2017, 26(9): 4509-4522.

[46] CHEN G Y, HONG X, DING Q Q, et al. AirNet: fused analytical and iterative reconstruction with deep neural network regularization for sparse-data CT[J]. Medical Physics, 2020, 47(7): 2916-2930.

[47] KINGMA D P, BA J. Adam: a method for stochastic optimization[EB/OL]. (2017-01-30)[2024-01-06]. https://arxiv.org/abs/1412.6980.

[48] WANG Z, BOVIK A C, SHEIKH H R, et al. Image quality assessment: from error visibility to structural similarity[J]. IEEE Transactions on Image Processing, 2004, 13(4): 600-612.

[49] YAO S S, LIN W S, ONG E, et al. Contrast signal-to-noise ratio for image quality assessment[C]//Proceedings of the IEEE International Conference on Image Processing 2005. Piscataway: IEEE Press, 2005: I-397.

[50] HAN Y, CAI Y Z, CAO Y, et al. A new image fusion performance metric based on visual information fidelity[J]. Information Fusion, 2013, 14(2): 127-135.
