Lightweight intelligent rig pipe column inspection method based on improved YOLOv5s


Abstract (Chinese version):

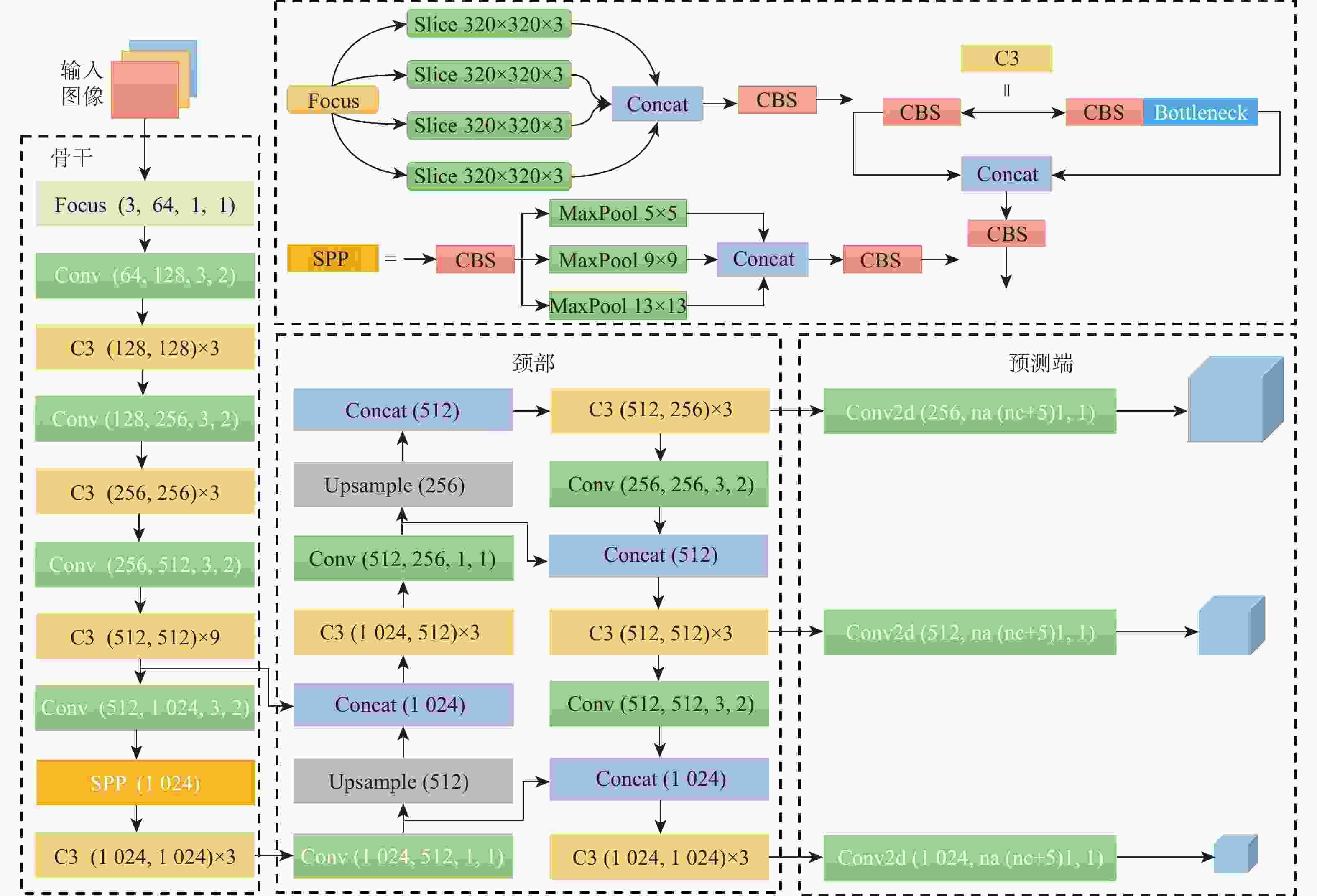

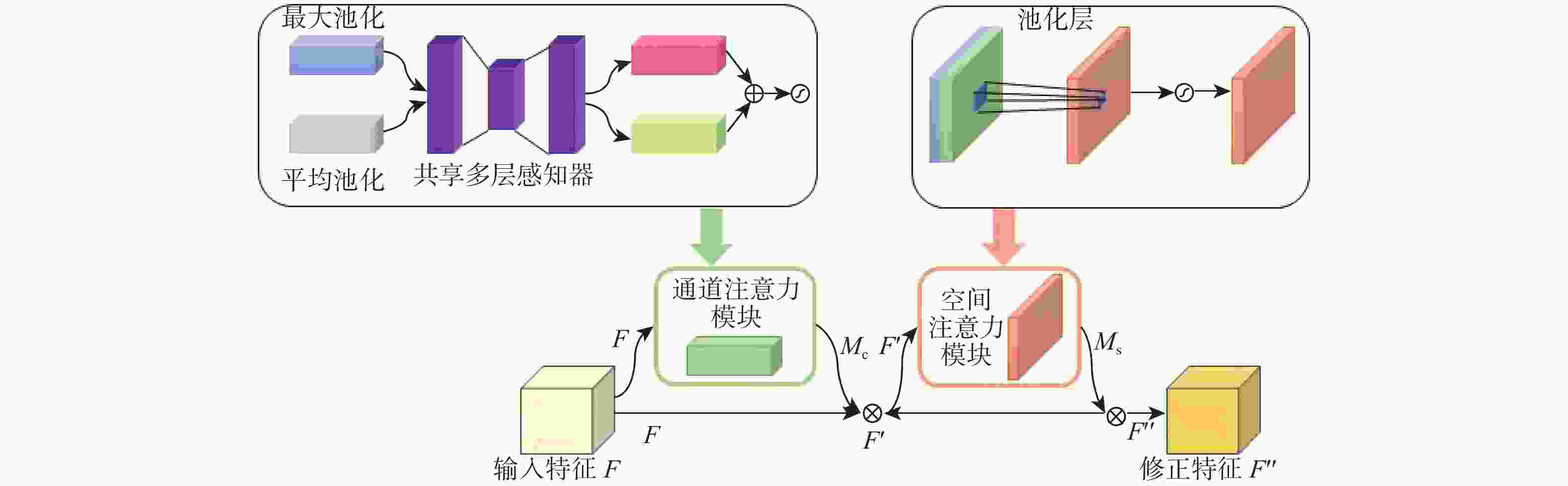

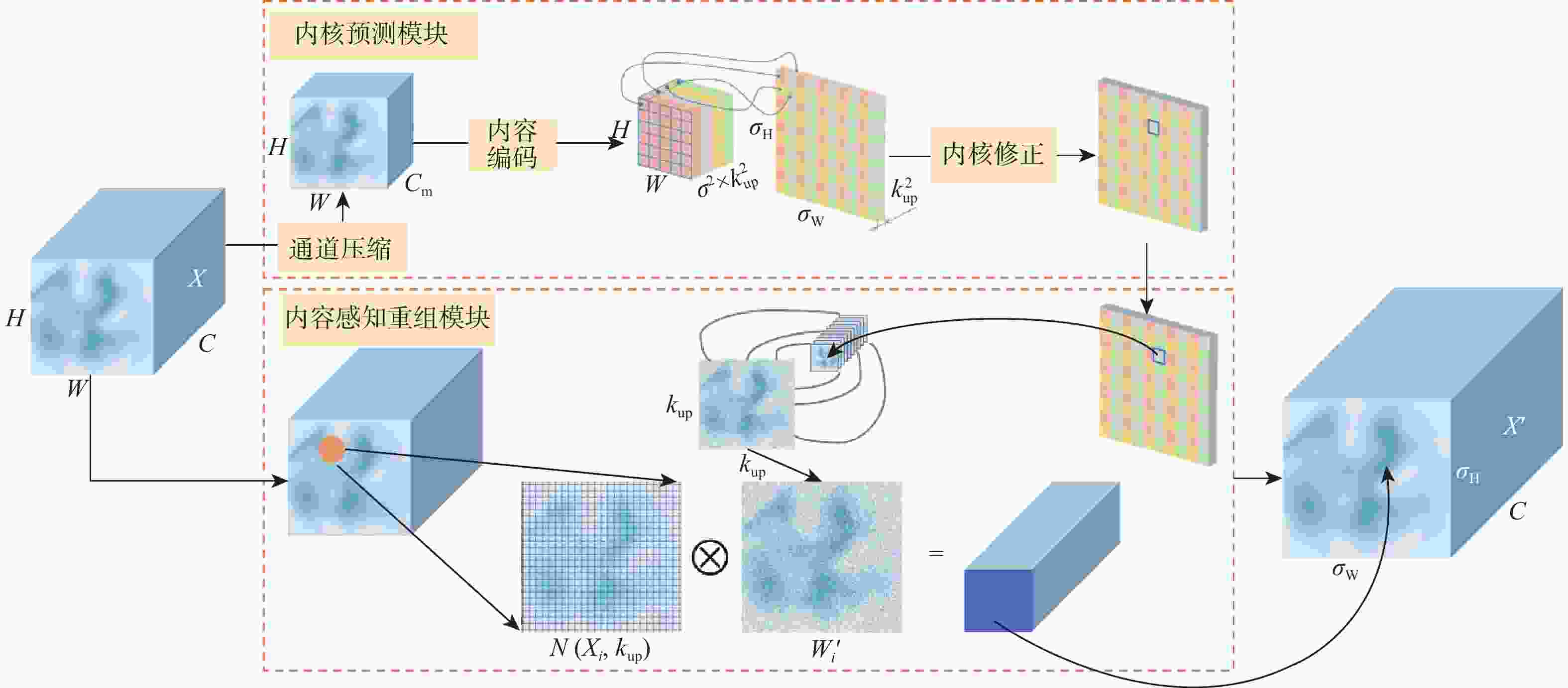

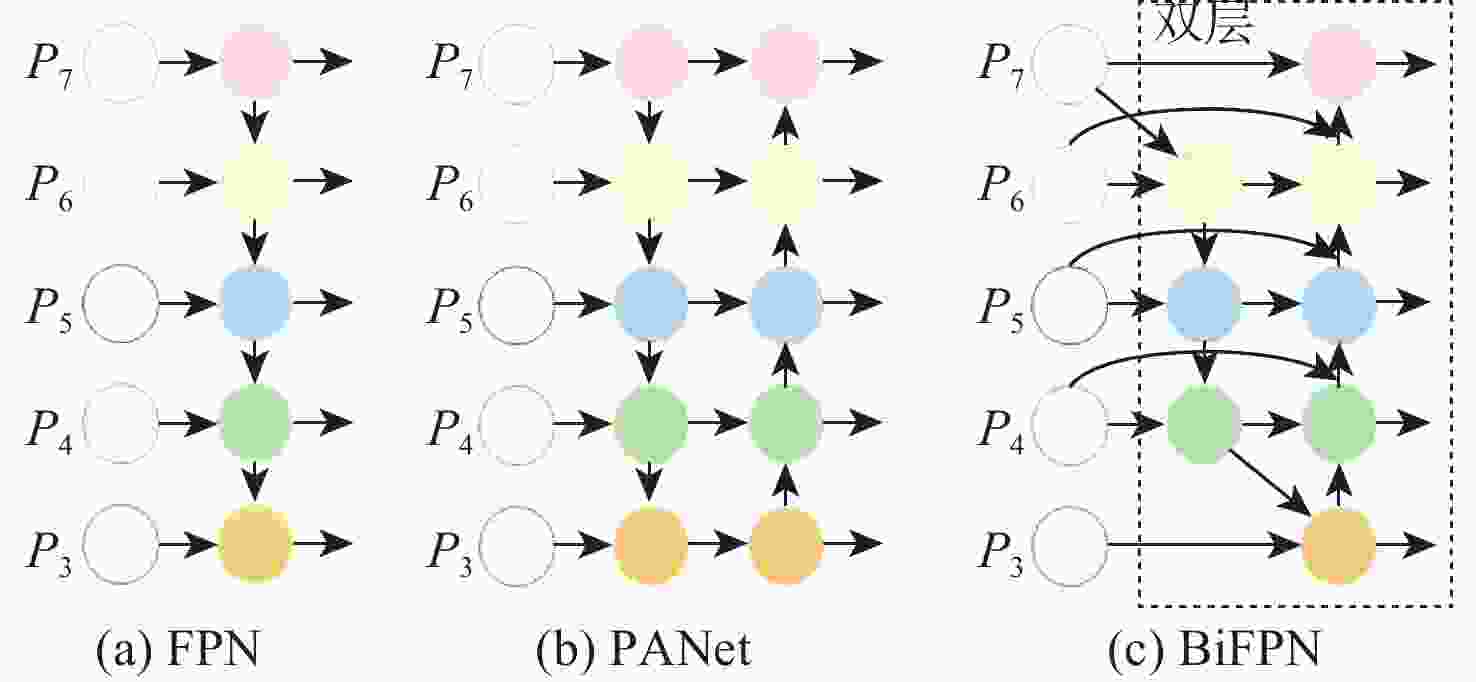

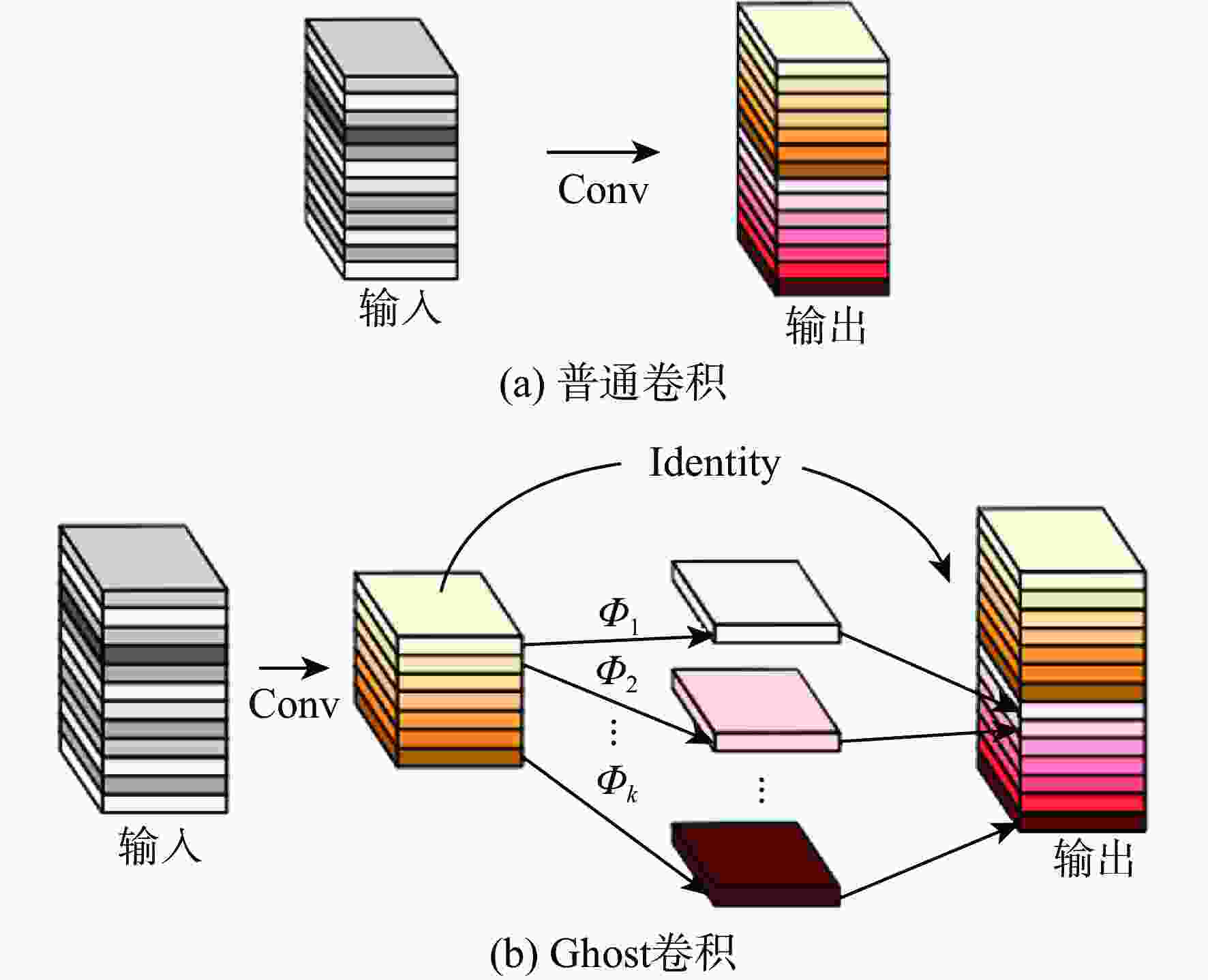

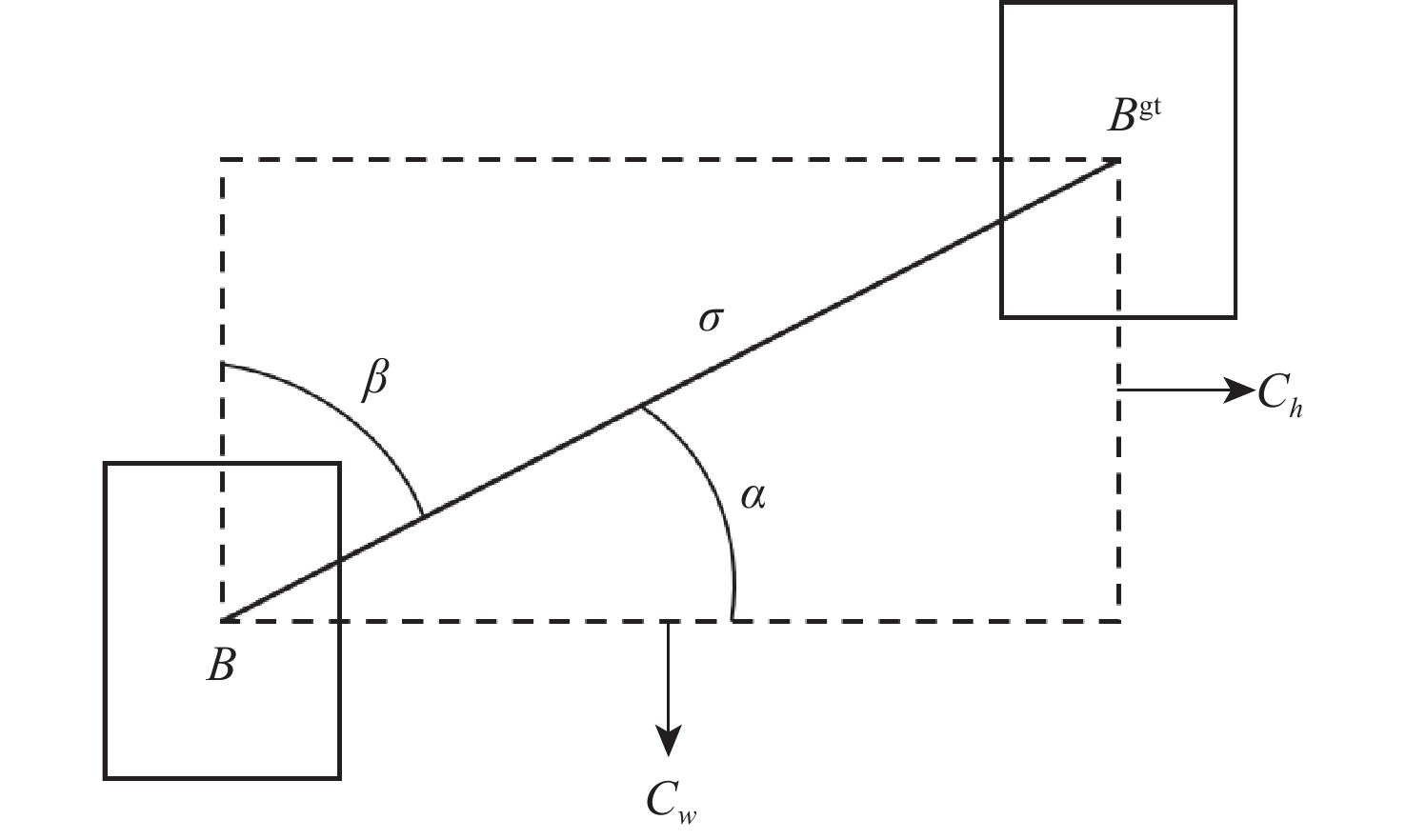

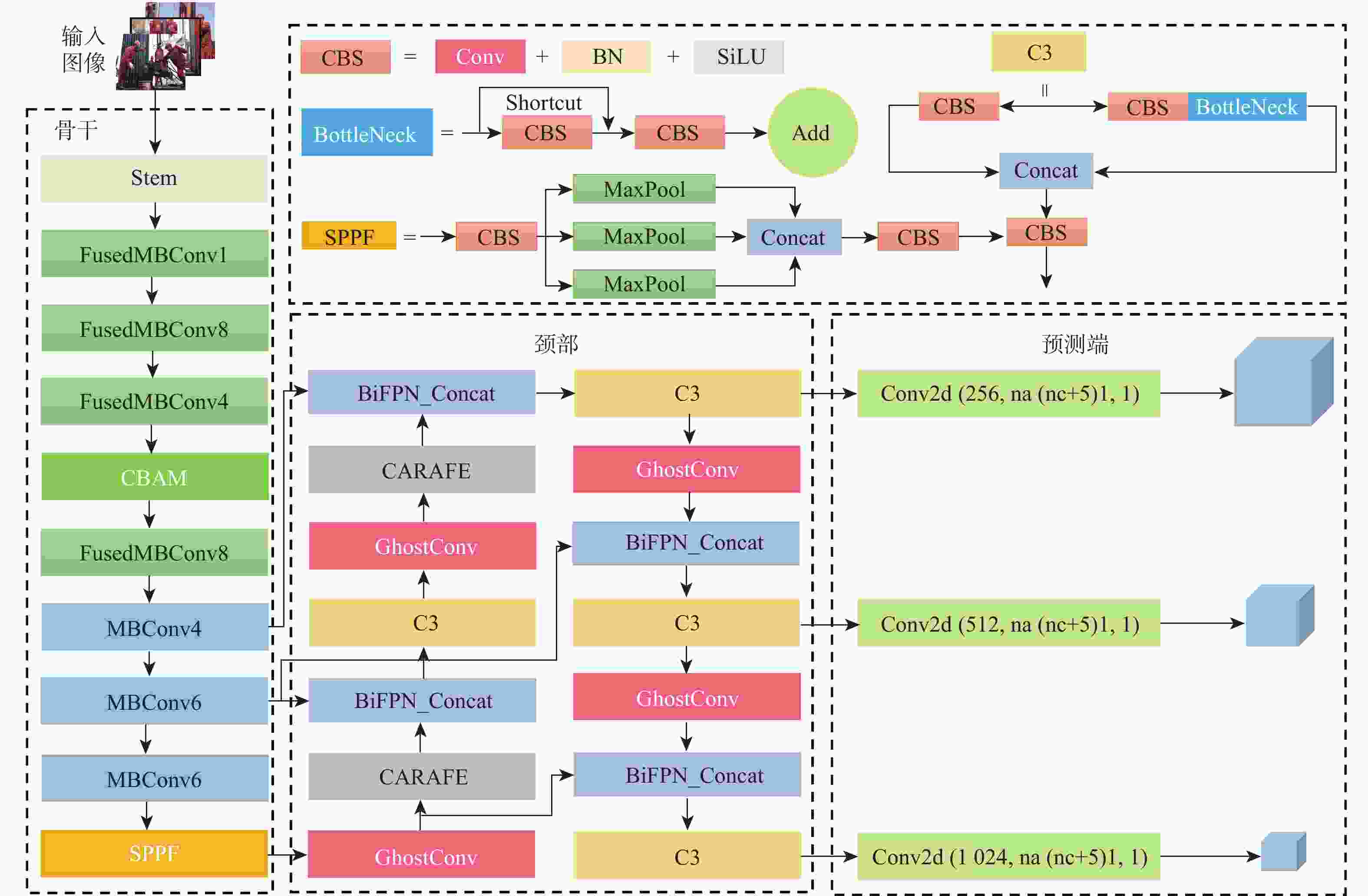

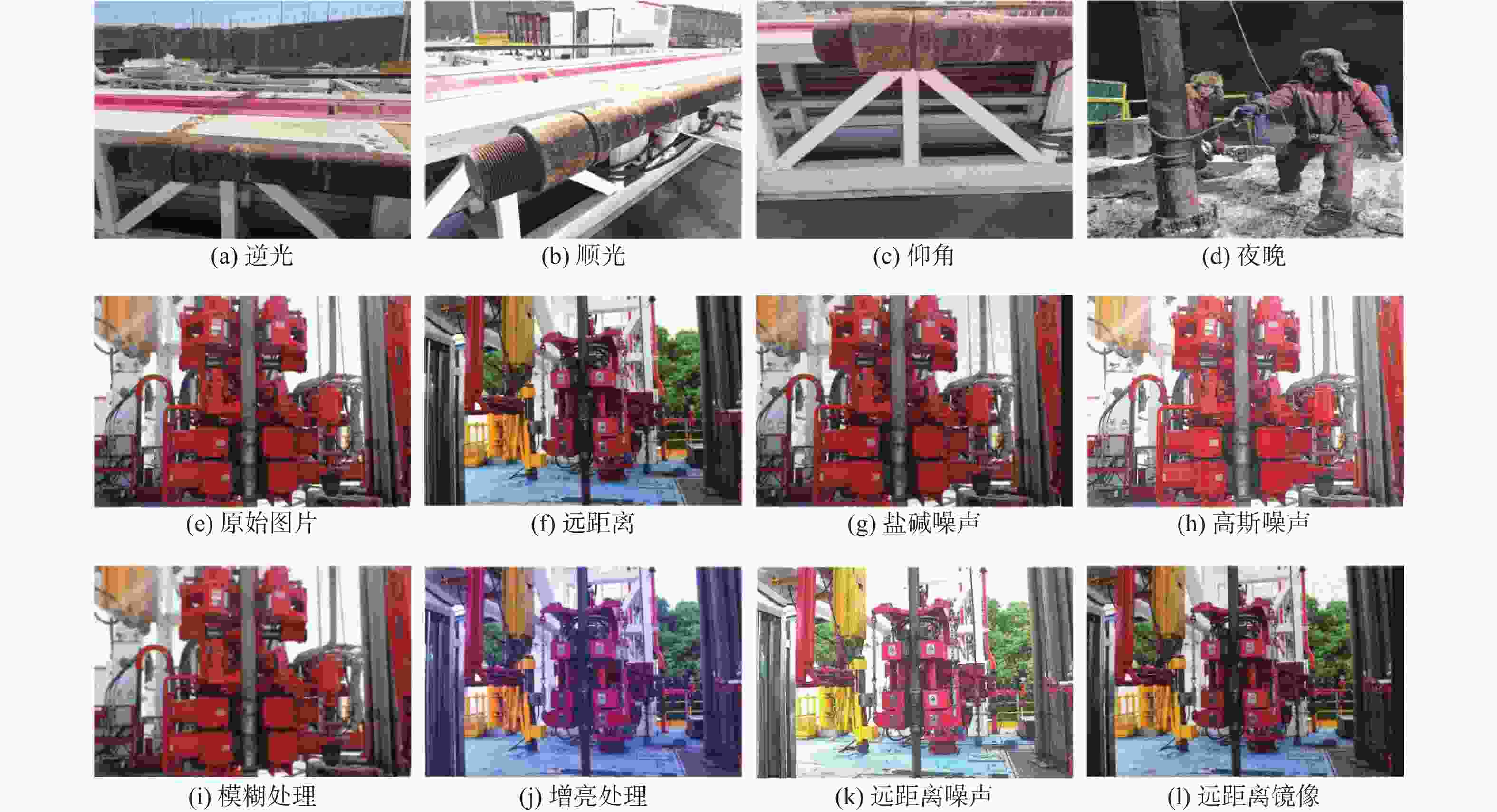

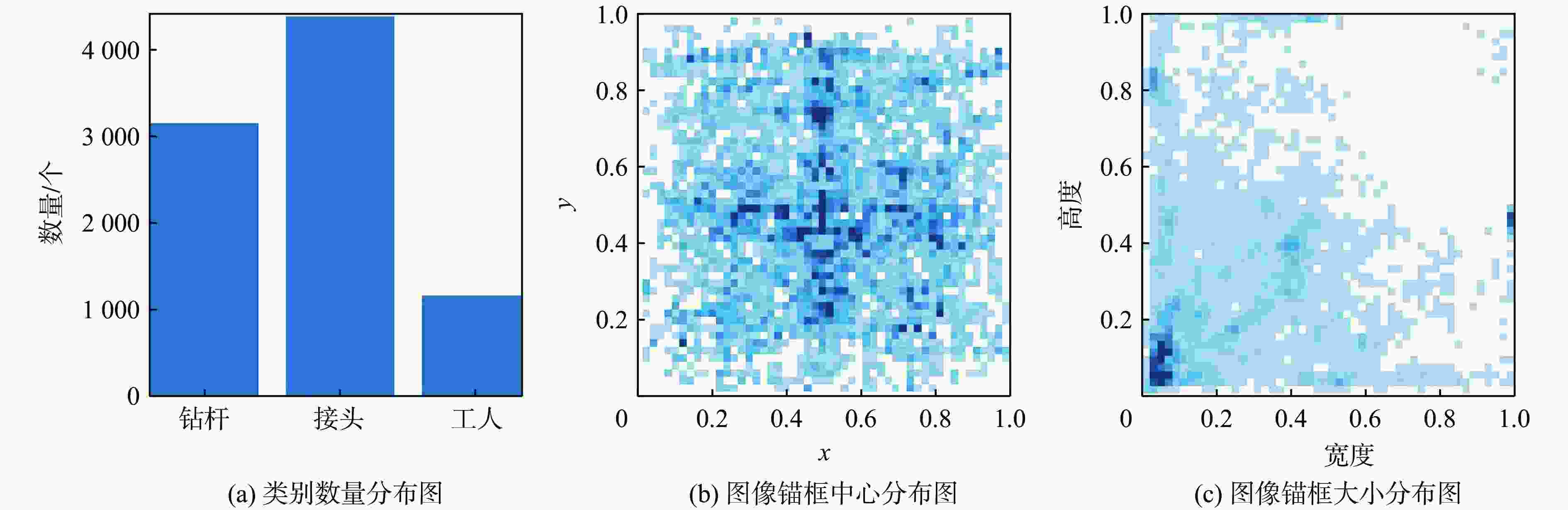

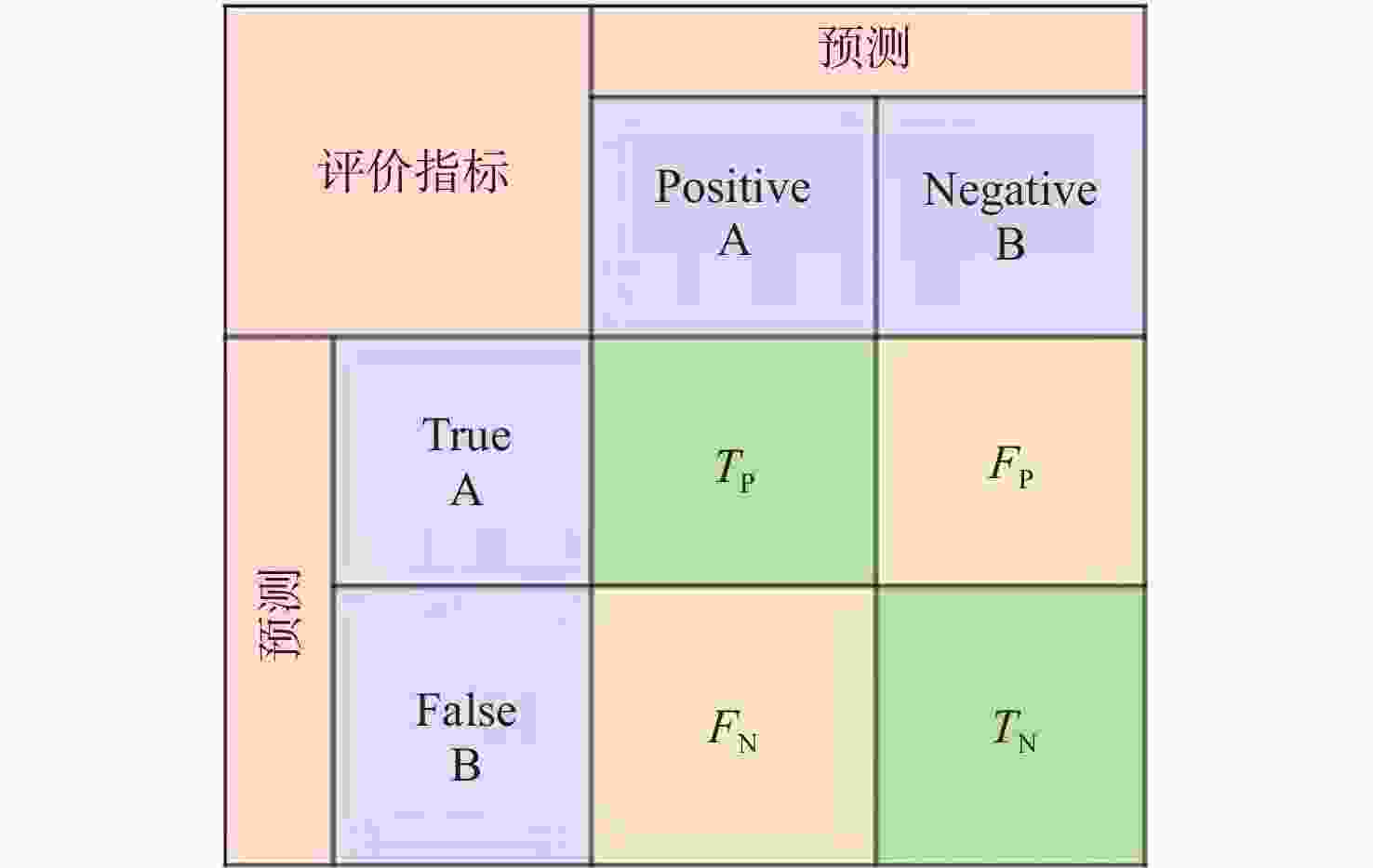

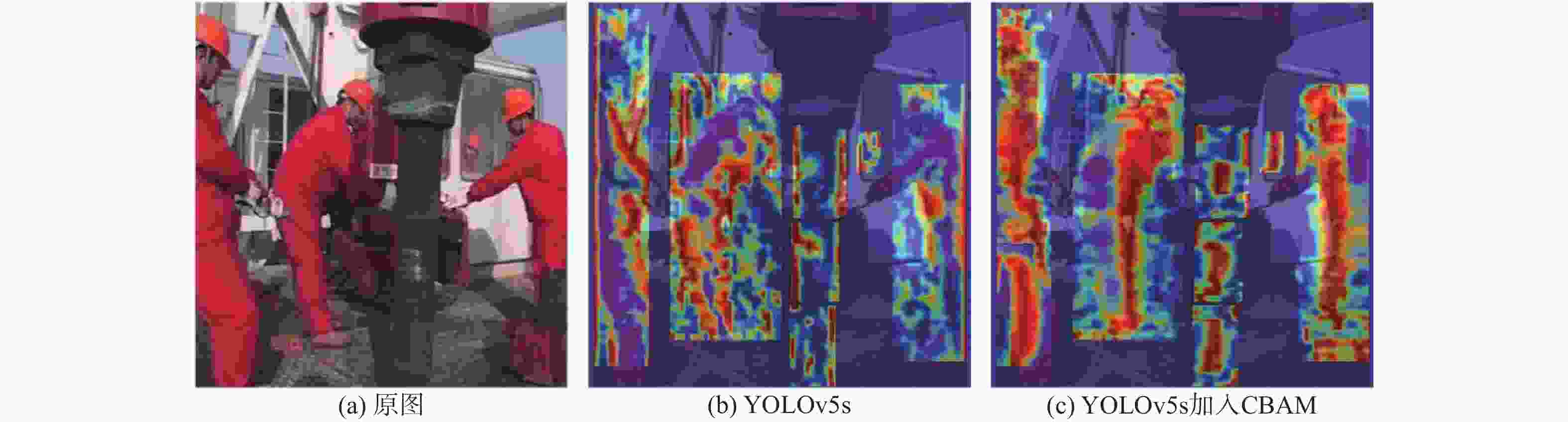

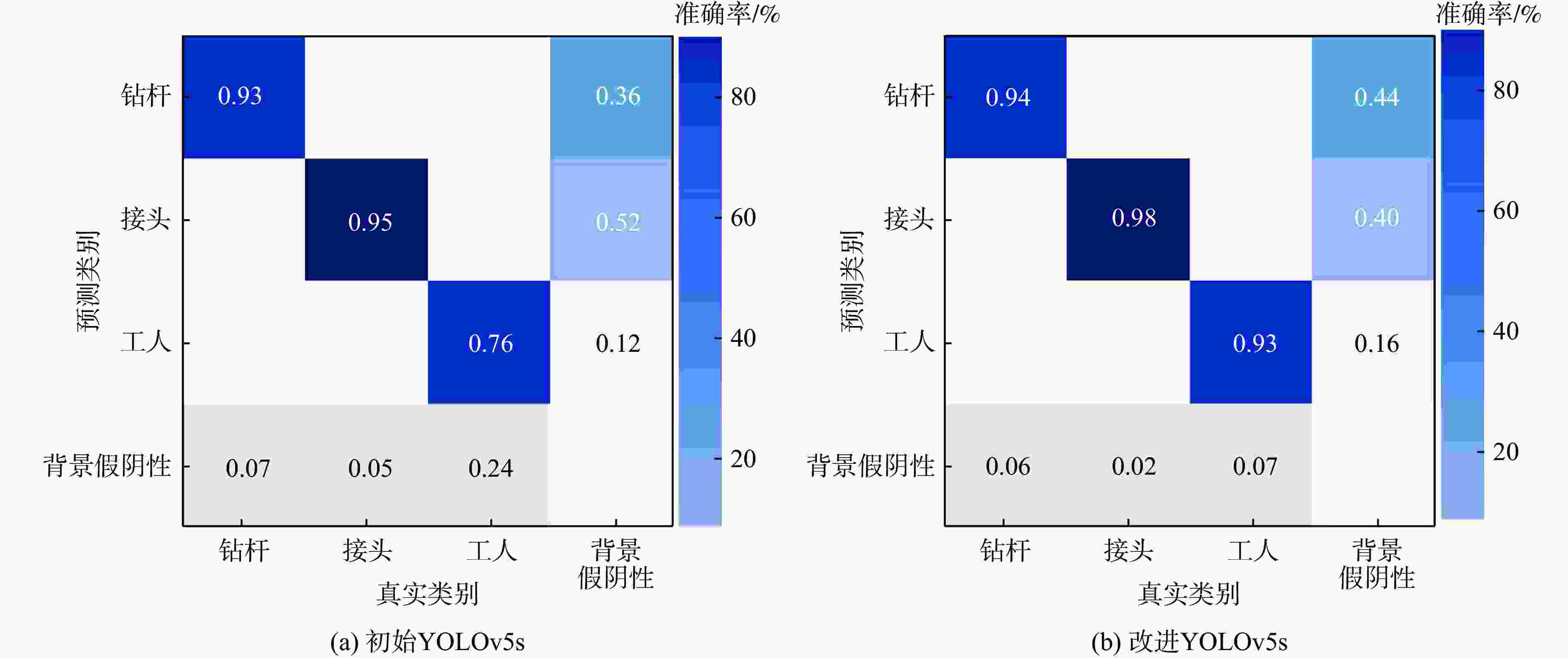

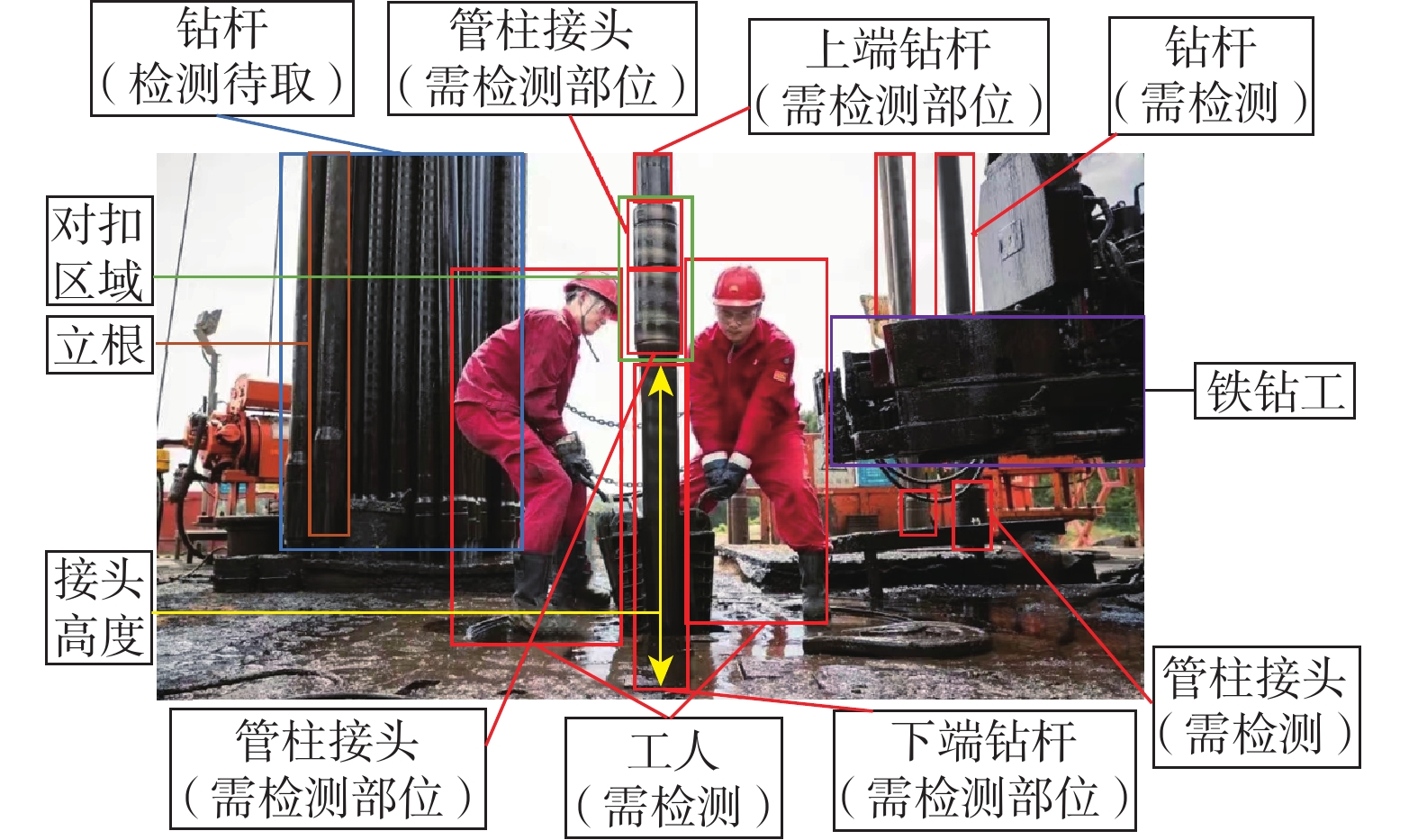

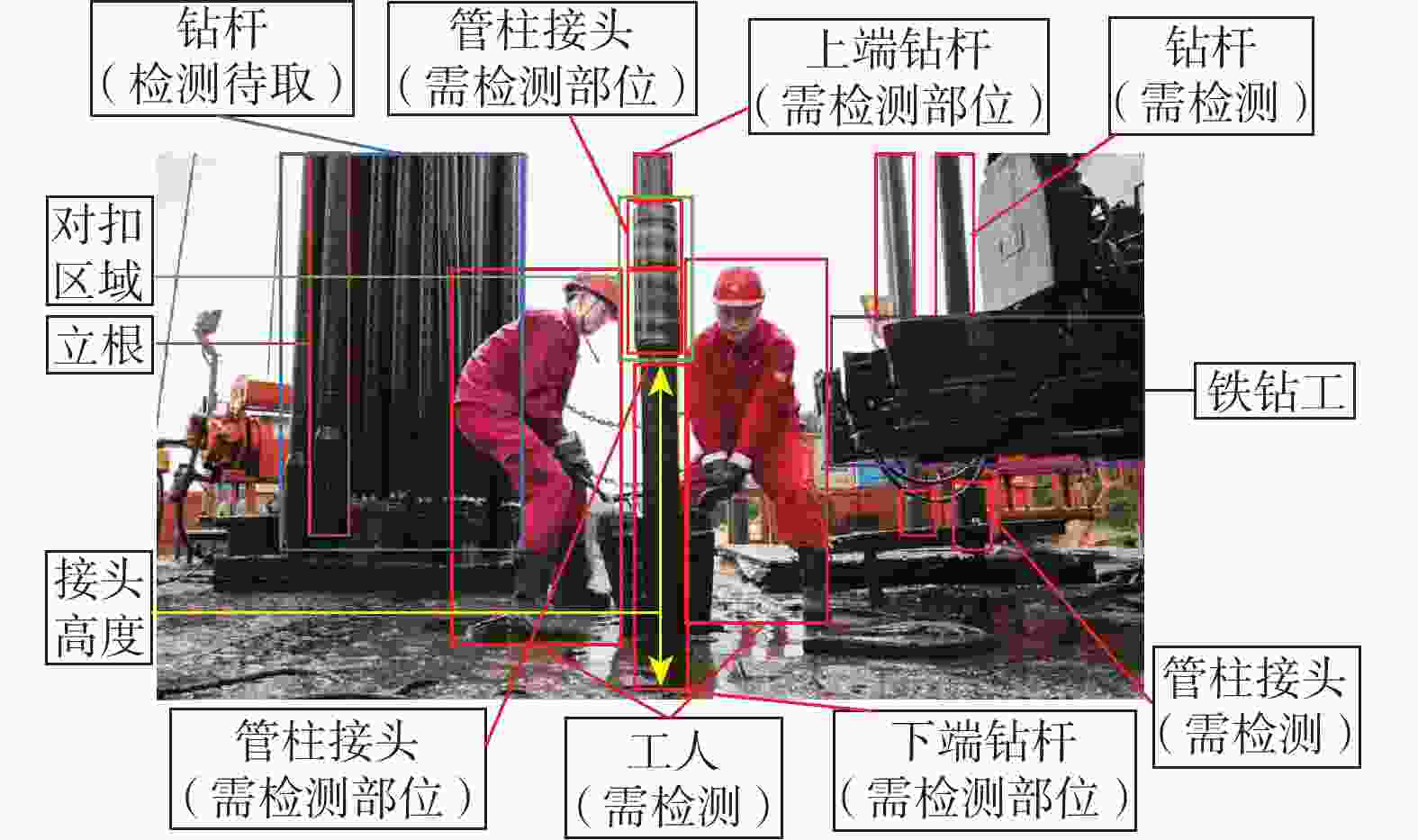

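To address the low level of automation in pipe-string transfer and make-up/break-out operations on drilling platforms, an improved multi-object detection algorithm is proposed to accurately identify drill pipes and pipe-string joints. The method uses a lightweight EfficientNetV2 as the feature extraction network, introduces an SPPF module to reduce the parameter count, and fuses the CBAM attention mechanism into the Backbone to suppress background interference. BiFPN replaces PANet to strengthen multi-scale feature fusion, and the CARAFE operator is introduced to enhance feature expression during upsampling. In the Head, GhostConv reduces computational complexity, the SIoU loss function improves bounding-box regression accuracy, and the AdamW optimizer improves model convergence and generalization. Experimental results on a self-built dataset show that the improved model recognizes pipe strings in different poses well under complex working conditions: detection accuracy reaches 90.6% and mean average precision reaches 94.6%, increases of 3.9% and 4.5% over the original model, which verifies the effectiveness and robustness of the proposed method.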

Abstract: To address the low level of automation in drill string handling and make-up/break-out operations on drilling platforms, an improved multi-object detection algorithm is proposed for accurate identification of drill pipes and tool joints. The model employs a lightweight EfficientNetV2 as the backbone, with an SPPF module to reduce the parameter count. A CBAM attention module is integrated into the backbone to suppress background interference. BiFPN replaces PANet to enhance multi-scale feature fusion, while CARAFE is adopted to improve upsampling quality and feature representation. In the detection head, GhostConv is used to reduce computational cost, and the SIoU loss is introduced to improve bounding box regression. The AdamW optimizer is applied to improve convergence and generalization. Experiments on a self-built dataset show that the proposed method performs robustly under complex working conditions and accurately detects drill strings in varying poses. The detection accuracy reaches 90.6% and mAP reaches 94.6%, improvements of 3.9 and 4.5 percentage points over the baseline, respectively, confirming the effectiveness and robustness of the method.
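To make the GhostConv cost argument concrete, the following is a minimal sketch that counts convolution weights only. It assumes the layout commonly used for GhostConv in YOLOv5-style code: a primary convolution producing half of the output channels, followed by a cheap 5×5 depthwise convolution producing the other half. The channel sizes (128 in, 256 out) and the helper names are hypothetical and are not taken from the paper; batch-normalization parameters are ignored.

```python
def conv_params(c_in: int, c_out: int, k: int, groups: int = 1) -> int:
    """Weight count of a bias-free 2-D convolution with a k x k kernel."""
    return (c_in // groups) * c_out * k * k


def ghost_conv_params(c_in: int, c_out: int, k: int, cheap_k: int = 5) -> int:
    """Weight count of a Ghost convolution (assumed half/half channel split)."""
    hidden = c_out // 2
    # Primary convolution: produces the first half of the output channels.
    primary = conv_params(c_in, hidden, k)
    # Cheap operation: depthwise convolution that generates the second half.
    cheap = conv_params(hidden, hidden, cheap_k, groups=hidden)
    return primary + cheap


# Hypothetical layer size, chosen only for illustration.
standard = conv_params(128, 256, 3)      # 294912 weights
ghost = ghost_conv_params(128, 256, 3)   # 150656 weights
ratio = ghost / standard                 # roughly half the weights
```

Under these assumptions the Ghost variant needs about half the weights of the standard 3×3 convolution, which is the kind of saving the abstract attributes to GhostConv in the detection head.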


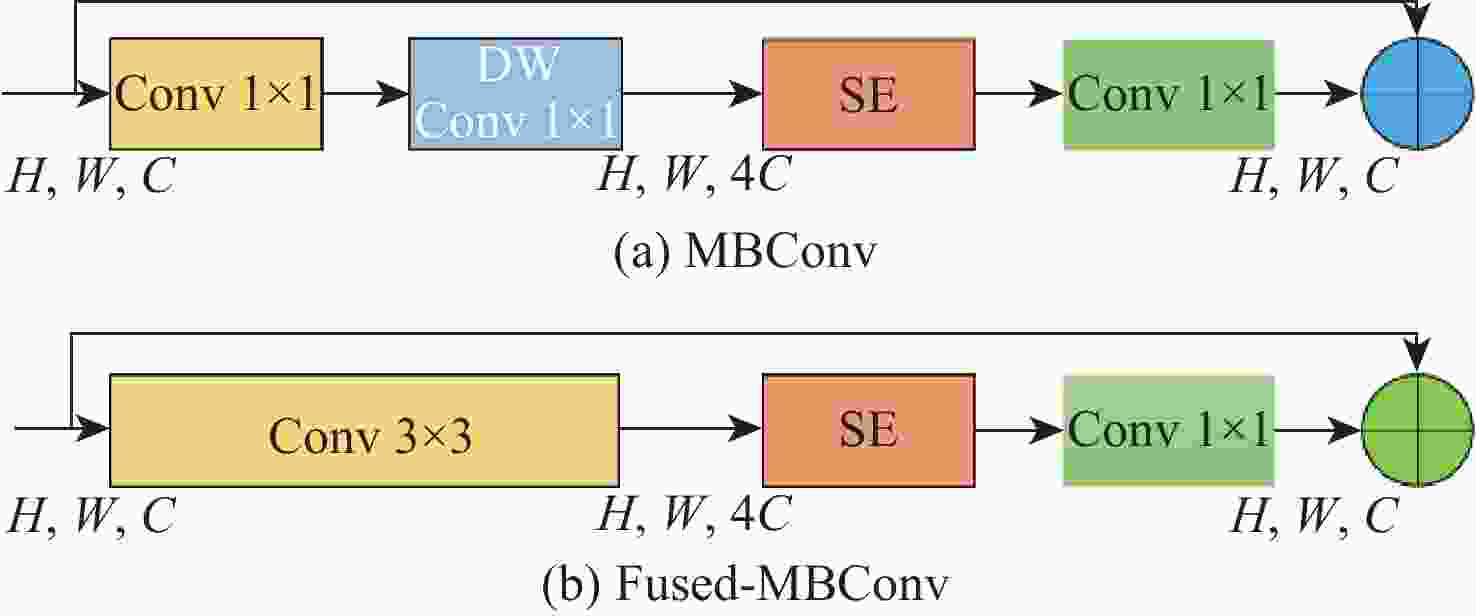

Table 1. EfficientNetv2-B0 structure

| Stage | Operator | Stride | Channels | Layers |
|---|---|---|---|---|
| 1 | Stem 3×3 | 2 | 32 | 1 |
| 2 | Fused-MBConv1, k 3×3 | 1 | 16 | 1 |
| 3 | Fused-MBConv8, k 3×3 | 2 | 32 | 2 |
| 4 | Fused-MBConv4, k 3×3 | 2 | 48 | 2 |
| 5 | MBConv8, k 3×3, SE0.25; MBConv6, k 3×3, SE0.25 | 2 | 96 | 3 |
| 6 | MBConv8, k 3×3, SE0.25; MBConv6, k 3×3, SE0.25 | 1 | 112 | 5 |
| 7 | MBConv8, k 3×3, SE0.25; MBConv6, k 3×3, SE0.25 | 2 | 192 | 8 |
| 8 | Conv1×1 & Pooling & FC | - | 1280 | 1 |
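The stage layout in Table 1 can be read as a small configuration from which the spatial resolution of each stage follows. The sketch below encodes stages 1 to 7 (stride, channels, layers as listed) and accumulates the strides for a 640×640 input, the image size given in Table 2. The `Stage` class and helper function are hypothetical names introduced for illustration, and leaving out stage 8 (the classification head) is an assumption about how the network would be used as a detection backbone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    stride: int
    channels: int
    layers: int


# Stages 1-7 of Table 1; stage 8 (Conv1x1 & Pooling & FC) is omitted here.
STAGES = [
    Stage("Stem 3x3", 2, 32, 1),
    Stage("Fused-MBConv", 1, 16, 1),
    Stage("Fused-MBConv", 2, 32, 2),
    Stage("Fused-MBConv", 2, 48, 2),
    Stage("MBConv", 2, 96, 3),
    Stage("MBConv", 1, 112, 5),
    Stage("MBConv", 2, 192, 8),
]


def cumulative_strides(stages):
    """Return the total downsampling factor after each stage."""
    total, result = 1, []
    for stage in stages:
        total *= stage.stride
        result.append(total)
    return result


strides = cumulative_strides(STAGES)                # [2, 2, 4, 8, 16, 16, 32]
feature_sizes = [640 // s for s in strides]         # [320, 320, 160, 80, 40, 40, 20]
total_layers = sum(stage.layers for stage in STAGES)  # 22
```

With these strides the last stage outputs a 20×20 map for a 640×640 image, and stages 4, 6 and 7 sit at the 8×, 16× and 32× downsampling levels that YOLOv5-style necks typically consume.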

Table 2. Model training parameters

| Training parameter | Value |
|---|---|
| Epochs | 200 |
| Batch size [21] / B | 16 |
| Initial learning rate | 0.01 |
| Momentum | 0.937 |
| Image size / (pixels × pixels) | 640×640 |
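As an illustration of how the learning rate and momentum in Table 2 could enter the AdamW update mentioned in the abstract, here is a minimal single-parameter sketch of one AdamW step with decoupled weight decay. Reusing the momentum value 0.937 as the first-moment coefficient `beta1` is an assumption (it mirrors common YOLOv5-style training scripts); `beta2`, `eps` and `weight_decay` are likewise assumed values that do not appear in the table.

```python
import math


def adamw_step(w, grad, m, v, t, lr=0.01, beta1=0.937, beta2=0.999,
               eps=1e-8, weight_decay=5e-4):
    """One AdamW update for a scalar parameter; t is the 1-based step index."""
    # Decoupled weight decay: shrink the weight directly, not via the gradient.
    w = w * (1.0 - lr * weight_decay)
    # Exponential moving averages of the gradient and the squared gradient.
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad * grad
    # Bias correction for the zero-initialized moments.
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    # Adam step scaled by the corrected second moment.
    w = w - lr * m_hat / (math.sqrt(v_hat) + eps)
    return w, m, v


# Hypothetical starting weight and gradient, for illustration only.
w, m, v = adamw_step(w=1.0, grad=0.5, m=0.0, v=0.0, t=1)
```

On the first step the bias-corrected ratio is close to the sign of the gradient, so the weight moves by roughly `lr` regardless of gradient scale, while the decay term acts on the weight separately.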

Table 3. Comparison of the performance of different versions of YOLOv5

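| Model | Floating-point operation rate / (10⁹ s⁻¹) | Parameters | GmAP/% | Detection speed / (frames·s⁻¹) | Weight size / MB |
|---|---|---|---|---|---|
| YOLOv5s | 16.3 | 7.06×10⁶ | 91.6 | 73.167 | 14.4 |
| YOLOv5m | 47.9 | 21.6×10⁶ | 91.2 | 72.4 | 42.1 |
| YOLOv5l | 107.7 | 46.1×10⁶ | 91.7 | 86.7 | 92.8 |
| YOLOv5x | 204.7 | 86.2×10⁶ | 90.4 | 91.6 | 86.4 |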

Table 4. Attention mechanism performance comparison

| Model | Parameters | GmAP/% | Detection speed / (frames·s⁻¹) | Weight size / MB |
|---|---|---|---|---|
| YOLOv5s | 7.06×10⁶ | 91.6 | 73.167 | 14.4 |
| YOLOv5s+CBAM | 6.41×10⁶ | 92.0 | 101.919 | 13.2 |
| YOLOv5s+SE | 7.23×10⁶ | 89.8 | 97.313 | 14.8 |
| YOLOv5s+CA | 6.42×10⁶ | 91.6 | 101.542 | 13.2 |
| YOLOv5s+ECA | 7.20×10⁶ | 90.4 | 103.465 | 14.7 |

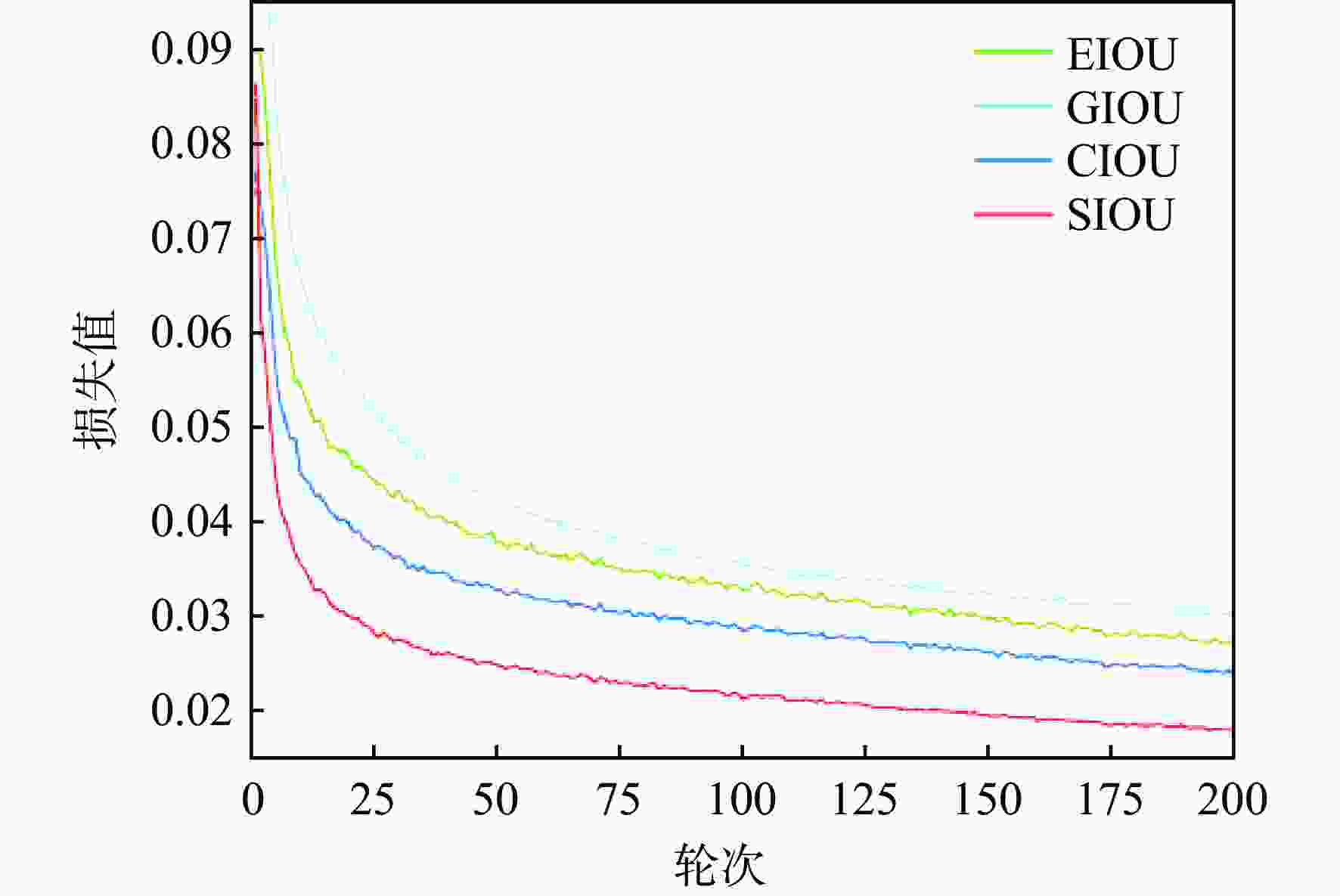

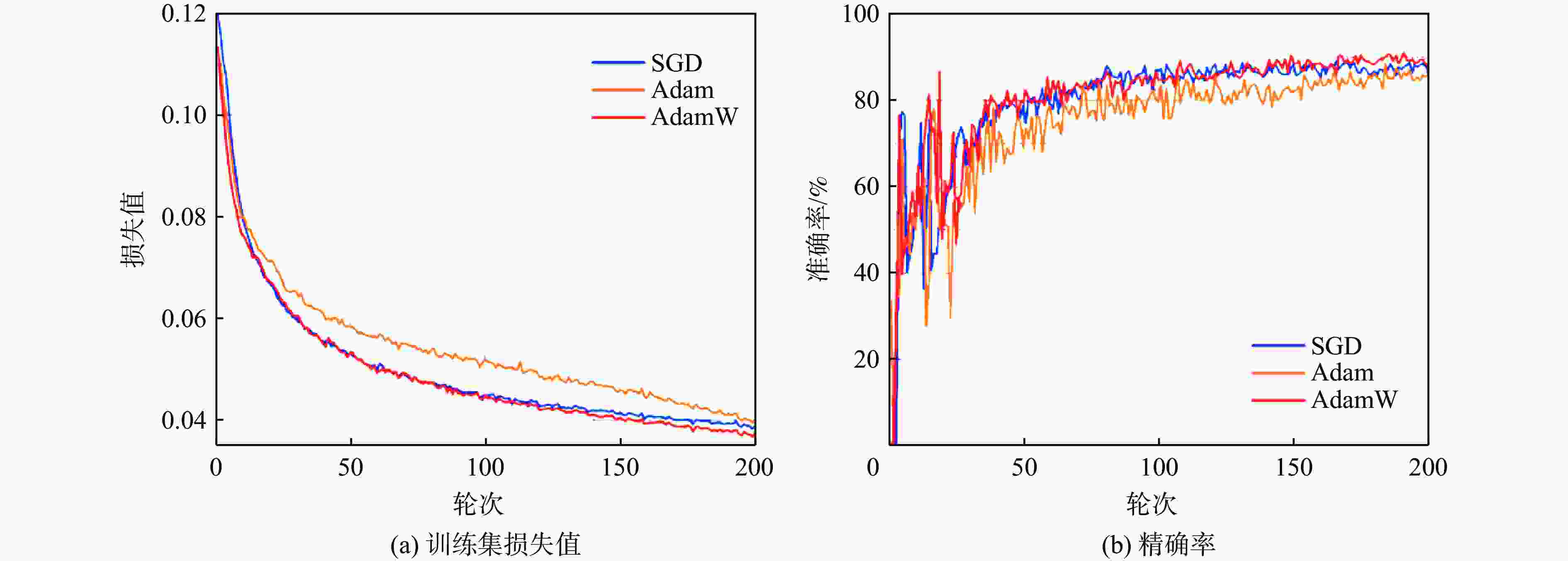

Table 5. Detection performance of different loss functions

| Loss function | GmAP/% | Precision/% | Recall/% | Training time/h |
|---|---|---|---|---|
| CIoU | 86.2 | 86.6 | 82.6 | 8.724 |
| GIoU | 85.8 | 85.3 | 88.4 | 26.561 |
| EIoU | 83.9 | 88.0 | 77.3 | 8.355 |
| SIoU | 89.4 | 88.5 | 89.6 | 8.207 |
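All four losses compared in Table 5 build on the intersection over union (IoU) of the predicted and ground-truth boxes. As a point of reference, the sketch below computes plain IoU and the GIoU variant for axis-aligned boxes; CIoU, EIoU and SIoU add further penalty terms (center distance, aspect ratio or shape, angle) that are not reproduced here. The function name and the example boxes are hypothetical.

```python
def iou_and_giou(box_a, box_b):
    """Return (IoU, GIoU) for boxes given as (x1, y1, x2, y2), x1 < x2, y1 < y2."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Overlap rectangle; width or height is clamped to zero when boxes are disjoint.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    iou = inter / union

    # Smallest axis-aligned box enclosing both inputs.
    hull = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))
    # GIoU subtracts the fraction of the hull not covered by the union.
    giou = iou - (hull - union) / hull
    return iou, giou


iou, giou = iou_and_giou((0, 0, 2, 2), (1, 1, 3, 3))
giou_loss = 1.0 - giou  # the regression loss is one minus the similarity
```

For the two example boxes the overlap is 1, the union 7 and the enclosing box 9, so IoU is 1/7 and GIoU is 1/7 − 2/9; the GIoU term stays informative even when boxes do not overlap, which plain IoU does not.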

Table 6. Results of ablation experiments

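| Experiment | Accuracy/% | Recall/% | GmAP/% | Parameters | Floating-point operation rate / (10⁹ s⁻¹) | Inference time / (frames·s⁻¹) |
|---|---|---|---|---|---|---|
| 1 | 86.7 | 91.7 | 90.1 | 7.06×10⁶ | 15.8 | 10.4 |
| 2 | 88.1 | 90.4 | 89.8 | 5.42×10⁶ | 6.9 | 6.2 |
| 3 | 86.6 | 92.6 | 92 | 6.41×10⁶ | 16 | 11.7 |
| 4 | 86.4 | 91.2 | 89.6 | 7.17×10⁶ | 16.4 | 10.8 |
| 5 | 87.8 | 91.3 | 91.2 | 6.82×10⁶ | 15.9 | 11.2 |
| 6 | 88.5 | 89.6 | 90.4 | 7.06×10⁶ | 15.8 | 10.2 |
| 7 | 88.8 | 89.1 | 90.7 | 5.57×10⁶ | 12.1 | 8.2 |
| 8 | 87.4 | 88.6 | 89.8 | 5.61×10⁶ | 13.6 | 8.5 |
| 9 | 89.5 | 88.4 | 93.7 | 5.75×10⁶ | 13.8 | 8.9 |
| 10 | 90.3 | 91.4 | 94.2 | 5.75×10⁶ | 14.2 | 9.5 |
| 11 | 90.6 | 91.2 | 94.6 | 5.24×10⁶ | 13.4 | 8.4 |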
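The gains quoted in the abstract can be checked against the first and last rows of Table 6. The short sketch below does that arithmetic, assuming experiment 1 is the unmodified baseline and experiment 11 the full improved model; the table itself does not label the rows, so this mapping is inferred from the matching numbers. The dictionary keys are hypothetical names.

```python
# Rows 1 and 11 of Table 6 (assumed to be baseline and final model).
baseline = {"accuracy": 86.7, "recall": 91.7, "map": 90.1, "params": 7.06e6}
improved = {"accuracy": 90.6, "recall": 91.2, "map": 94.6, "params": 5.24e6}

# Absolute differences, in percentage points.
accuracy_gain = round(improved["accuracy"] - baseline["accuracy"], 1)
map_gain = round(improved["map"] - baseline["map"], 1)
recall_change = round(improved["recall"] - baseline["recall"], 1)

# Relative reduction in parameter count, in percent.
param_reduction = round(100 * (1 - improved["params"] / baseline["params"]), 1)
```

Under that assumption the differences come out at 3.9 and 4.5 percentage points for accuracy and mAP, matching the abstract, with recall 0.5 points lower and roughly a quarter fewer parameters.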

Table 7. Comparative experiments of mainstream models in the YOLO series

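| Model | AP/% (drill pipe) | AP/% (joint) | AP/% (worker) | GmAP@0.5 / % | Parameters | Weight size / MB | Floating-point operation rate / (10⁹ s⁻¹) | Detection speed / (frames·s⁻¹) |
|---|---|---|---|---|---|---|---|---|
| YOLOv3[28] | 81.3 | 87.7 | 84.9 | 88 | 8.67×10⁶ | 17.4 | 12.9 | 39.6 |
| YOLOv5s | 84.8 | 90.2 | 85.3 | 90.1 | 7.06×10⁶ | 14.4 | 16.3 | 42.6 |
| YOLOv6[29] | 82.4 | 86.5 | 81.6 | 90 | 18.5×10⁶ | 38.7 | 45.2 | 104 |
| YOLOv7-tiny[30] | 85.5 | 89.9 | 85.5 | 91.4 | 6.01×10⁶ | 11.6 | 13.0 | 84 |
| YOLOv8[31] | 84.8 | 91.3 | 85.7 | 92 | 11.1×10⁶ | 22.5 | 28.4 | 108 |
| YOLOX[32] | 88.6 | 85.4 | 86.2 | 90.4 | 8.94×10⁶ | 12.4 | 26.8 | 86 |
| This work | 93.9 | 92.9 | 87.6 | 94.6 | 5.24×10⁶ | 10.9 | 13.4 | 86.4 |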

[1] BAI S Y, YANG X L, HE S B, et al. Research status and development trend of pipe handling system for automated drilling rig[J]. Mechanical Research & Application, 2020, 33(5): 203-207 (in Chinese).

[2] YANG C S, ZHANG H L, XIAO L. Key technical progress and development trend of automated drilling[J]. China Petroleum Machinery, 2017, 45(5): 10-17 (in Chinese).

[3] KANG L, LI Y W, LI S M, et al. Research present situation and development trend analysis of pipeline handling system for drill rig[J]. Hydraulics Pneumatics & Seals, 2019, 39(5): 1-5 (in Chinese).

[4] JOCHER G, STOKEN A, BOROVEC J, et al. Ultralytics/yolov5: v3.0[EB/OL]. (2020-08-13)[2023-12-20]. https://doi.org/10.5281/zenodo.3983579.

[5] LIU S, QI L, QIN H F, et al. Path aggregation network for instance segmentation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 8759-8768.

[6] WOO S, PARK J, LEE J Y, et al. CBAM: convolutional block attention module[C]//Proceedings of the Computer Vision-ECCV 2018. Cham: Springer, 2018: 3-19.

[7] WANG D C, CHEN X N, JIANG M Y, et al. ADS-Net: an attention-based deeply supervised network for remote sensing image change detection[J]. International Journal of Applied Earth Observation and Geoinformation, 2021, 101: 102348.

[8] TAN M X, LE Q V. EfficientNetV2: smaller models and faster training[C]//Proceedings of the International Conference on Machine Learning. New York: PMLR, 2021: 10096-10106.

[9] WANG J Q, CHEN K, XU R, et al. CARAFE: content-aware ReAssembly of FEatures[C]//Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2019: 3007-3016.

[10] LIN T Y, DOLLÁR P, GIRSHICK R, et al. Feature pyramid networks for object detection[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2017: 936-944.

[11] TAN M X, PANG R M, LE Q V. EfficientDet: scalable and efficient object detection[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 10778-10787.

[12] ZHU L L, GENG X, LI Z, et al. Improving YOLOv5 with attention mechanism for detecting boulders from planetary images[J]. Remote Sensing, 2021, 13(18): 3776.

[13] HAN K, WANG Y H, TIAN Q, et al. GhostNet: more features from cheap operations[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 1577-1586.

[14] ZHOU D F, FANG J, SONG X B, et al. IoU loss for 2D/3D object detection[C]//Proceedings of the International Conference on 3D Vision. Piscataway: IEEE Press, 2019: 85-94.

[15] ZHENG Z H, WANG P, LIU W, et al. Distance-IoU loss: faster and better learning for bounding box regression[J]. Proceedings of the AAAI Conference on Artificial Intelligence, 2020, 34(7): 12993-13000.

[16] GEVORGYAN Z. SIoU loss: more powerful learning for bounding box regression[EB/OL]. (2022-05-25)[2023-12-20]. https://arxiv.org/abs/2205.12740.

[17] WANG J Y, JOSHI G. Cooperative SGD: a unified framework for the design and analysis of local-update SGD algorithms[J]. Journal of Machine Learning Research, 2021, 22(1): 9709-9758.

[18] LOSHCHILOV I, HUTTER F. Decoupled weight decay regularization[EB/OL]. (2017-11-14)[2023-12-20]. https://doi.org/10.48550/arxiv.1711.05101.

[19] ZHOU P, FENG J, MA C, et al. Towards theoretically understanding why SGD generalizes better than Adam in deep learning[J]. Advances in Neural Information Processing Systems, 2020, 33: 21285-21296.

[20] YANG B, HE J P, ZHANG L N. Identification and detection of rice leaf diseases by YOLOv5 neural network based on improved SPP-x[J]. Journal of Chinese Agricultural Mechanization, 2023, 44(9): 190-197 (in Chinese).

[21] XUE B, HE Y, JING F, et al. Robot target recognition using deep federated learning[J]. International Journal of Intelligent Systems, 2021, 36(12): 7754-7769.

[22] HU J, SHEN L, SUN G. Squeeze-and-excitation networks[C]//Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 7132-7141.

[23] QIN Z Q, ZHANG P Y, WU F, et al. FcaNet: frequency channel attention networks[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2021: 763-772.

[24] WANG Q L, WU B G, ZHU P F, et al. ECA-net: efficient channel attention for deep convolutional neural networks[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 11531-11539.

[25] SELVARAJU R R, COGSWELL M, DAS A, et al. Grad-CAM: visual explanations from deep networks via gradient-based localization[C]//Proceedings of the IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2017: 618-626.

[26] WANG Y B, WANG H F, PENG Z H. Rice diseases detection and classification using attention based neural network and Bayesian optimization[J]. Expert Systems with Applications, 2021, 178: 114770.

[27] PENG S D, JIANG W, PI H J, et al. Deep snake for real-time instance segmentation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 8530-8539.

[28] REDMON J, FARHADI A. YOLOv3: an incremental improvement[EB/OL]. (2021-10-18)[2023-12-20]. https://arxiv.org/abs/1804.02767.

[29] DANG F Y, CHEN D, LU Y Z, et al. YOLOWeeds: a novel benchmark of YOLO object detectors for multi-class weed detection in cotton production systems[J]. Computers and Electronics in Agriculture, 2023, 205: 107655.

[30] WANG C Y, BOCHKOVSKIY A, LIAO H M. YOLOv7: trainable bag-of-freebies sets new state-of-the-art for real-time object detectors[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2023: 7464-7475.

[31] REIS D, KUPEC J, HONG J, et al. Real-time flying object detection with YOLOv8[EB/OL]. (2023-05-17)[2023-12-20]. https://arxiv.org/abs/2305.09972.

[32] GE Z, LIU S T, WANG F, et al. YOLOX: exceeding YOLO series in 2021[EB/OL]. (2021-08-06)[2023-12-20]. https://arxiv.org/abs/2107.08430.
