
摘要:

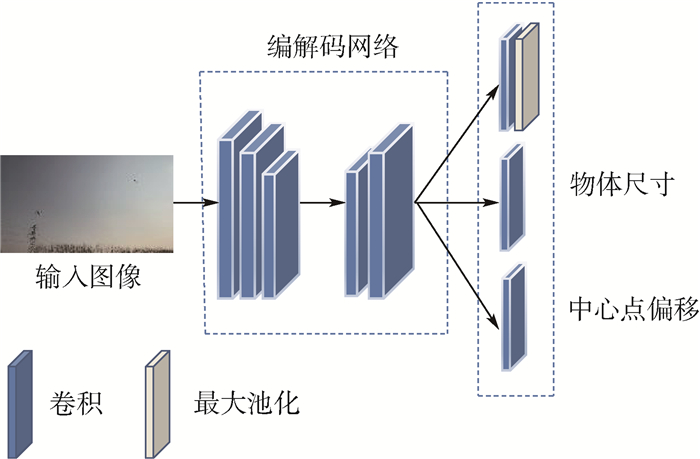

为实现对“低慢小”无人机(UAV)的有效探测, 提升检测精度和定位质量, 提出一种基于联合注意力和CenterNet的低空无人机检测方法。针对通用目标检测算法小目标漏检率高的问题, 引入解耦的非局部算子, 捕捉光学图像目标区域的关联性。利用无人机群个体间的相似性, 将离散的无人机特征相互关联, 降低漏检率。为获得更加精准的检测框, 对CenterNet的标签编码策略和边界框回归方式进行优化, 引入定位质量损失, 提升检测框定位质量。实验结果表明:优化后的S-CenterNet算法相比原始CenterNet算法平均准确率提升了8.9%, 检测框定位质量有明显改善。

Abstract:To achieve effective detection of "low, slow and small" unmanned aerial vehicle(UAV)and improve detection accuracy and positioning quality, we propose a low-altitude UAV detection method based on joint attention and CenterNet. Aiming at the problem of high miss-detection rate of small targets in general target detection algorithms, a decoupled non-local operator is introduced to capture the relevance of target regions in optical images. Utilizing the similarity between individuals of the UAV group, the discrete UAV features are correlated to each other to reduce the missed detection rate. Moreover, to obtain more accurate detection boxes, we optimized the label coding strategy and bounding box regression method of CenterNet, and the positioning quality loss is introduced to improve the positioning quality of the detection boxes. Experimental results show that the optimized S-CenterNet algorithm has an average accuracy increase of 8.9% compared with the original CenterNet, and the detection boxer positioning quality has been significantly improved.
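The idea of relating features at distant positions can be illustrated with a minimal NumPy sketch of a pairwise non-local (attention) step. It is not the S-CenterNet implementation: the function name `pairwise_nonlocal`, the random projection matrices, and the feature sizes are all hypothetical, and the mean subtraction only loosely follows the whitened pairwise term described for disentangled non-local networks.

```python
import numpy as np

def pairwise_nonlocal(x, w_q, w_k, w_v):
    """Aggregate features across positions with whitened pairwise attention.

    x:   (N, C) features at N spatial positions (flattened H*W).
    w_*: (C, D) projection matrices (learned in a real network).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Whitening: remove the mean over positions so the attention reflects
    # relative similarity between positions rather than global saliency.
    q = q - q.mean(axis=0, keepdims=True)
    k = k - k.mean(axis=0, keepdims=True)
    logits = q @ k.T                                   # (N, N) similarities
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    attn = np.exp(logits)
    attn = attn / attn.sum(axis=1, keepdims=True)      # softmax over positions
    return attn @ v, attn

# Illustrative input: 16 positions with 8 channels, projected to 4 channels.
rng = np.random.default_rng(0)
x = rng.normal(size=(16, 8))
w_q, w_k, w_v = (rng.normal(size=(8, 4)) for _ in range(3))
out, attn = pairwise_nonlocal(x, w_q, w_k, w_v)
```

Each output row is a weighted mix of the value features at all positions, which is how two similar but spatially separated targets can reinforce each other.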


Key words:

- object detection

- deep learning

- joint attention

- CenterNet

- unmanned aerial vehicle


Table 1. Network structure adjustment

| Network | mAP/% | AP50/% | AP75/% | Inference time/ms |
| --- | --- | --- | --- | --- |
| DLA34 | 41.9 | 89.8 | 31.8 | 11 |
| DLA28 | 42.1 | 90.0 | 32.1 | 10 |

Table 2. PNL performance comparison

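| PNL | mAP/% | AP50/% | AP75/% | Inference time/ms |
| --- | --- | --- | --- | --- |
| × | 42.1 | 90.0 | 32.1 | 10 |
| √ | 44.8 | 93.2 | 34.7 | 14 |

Note: "√" means the improvement strategy is applied; "×" means it is not applied.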

Table 3. CNL performance comparison

| Stage | mAP/% | AP50/% | AP75/% | Inference time/ms |
| --- | --- | --- | --- | --- |
| L2 | 42.3 | 90.5 | 32.0 | 11 |
| L3 | 42.6 | 91.1 | 32.2 | 11 |
| L4 | 42.5 | 90.8 | 32.3 | 11 |
| L2+L3+L4 | 43.3 | 92.0 | 33.1 | 13 |

Table 4. Label encoding method

| Gaussian kernel | mAP/% | AP50/% | AP75/% | Inference time/ms |
| --- | --- | --- | --- | --- |
| Circular | 42.1 | 90.0 | 32.1 | 10 |
| Elliptical | 42.5 | 90.1 | 32.8 | 10 |
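The difference between the circular and elliptical kernels compared in Table 4 can be sketched as follows. This is a minimal illustration, not the paper's encoding: the helper `gaussian_heatmap`, the box size, and the rule of setting each standard deviation to one sixth of the box dimension are assumptions chosen only to make the contrast visible.

```python
import numpy as np

def gaussian_heatmap(shape, center, sigma_x, sigma_y):
    """Return an (H, W) heatmap with a Gaussian peak of value 1 at `center`.

    shape:  (H, W) of the output map.
    center: (cx, cy) integer pixel coordinates of the object center.
    """
    h, w = shape
    cx, cy = center
    ys, xs = np.mgrid[0:h, 0:w]
    return np.exp(-((xs - cx) ** 2 / (2 * sigma_x ** 2)
                    + (ys - cy) ** 2 / (2 * sigma_y ** 2)))

# Hypothetical wide, flat target: 24 px wide and 8 px tall.
box_w, box_h = 24, 8
center = (32, 16)

# Circular kernel: one radius for both axes, limited by the shorter side.
r = min(box_w, box_h) / 6
circular = gaussian_heatmap((32, 64), center, sigma_x=r, sigma_y=r)

# Elliptical kernel: each axis follows the corresponding box dimension.
elliptical = gaussian_heatmap((32, 64), center,
                              sigma_x=box_w / 6, sigma_y=box_h / 6)
```

For an elongated box, the elliptical kernel keeps positive-sample weight along the long axis that the circular kernel discards, while both agree along the short axis.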

Table 5. Positioning quality loss and scale factor

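| Loss function | mAP/% | AP50/% | AP75/% | Inference time/ms |
| --- | --- | --- | --- | --- |
| Focal Loss | 42.1 | 90.0 | 32.1 | 10 |
| FL+MIOU | 46.1 | 92.5 | 40.2 | 10 |
| FL+λ | 42.8 | 90.7 | 33.4 | 10 |
| FL+MIOU+λ | 46.6 | 92.7 | 41.1 | 10 |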

Table 6. Results of ablation experiments

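| Network structure | Elliptical Gaussian kernel | Loss function | mAP(0.5:0.95)/% | AP50/% | AP75/% | mAR(0.9:0.95)/% | Inference time/ms | Parameters/MB |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| × | × | × | 41.9 | 89.8 | 31.8 | 51.8 | 11 | 74.0 |
| √ | × | × | 45.6 | 92.2 | 38.2 | 55.3 | 17 | 30.0 |
| × | √ | × | 42.5 | 90.1 | 32.8 | 52.4 | 11 | 74.0 |
| × | × | √ | 46.6 | 92.7 | 41.1 | 54.9 | 11 | 74.0 |
| √ | √ | × | 46.3 | 93.7 | 39.0 | 55.7 | 17 | 30.0 |
| × | √ | √ | 47.3 | 93.4 | 43.7 | 57.1 | 11 | 74.0 |
| √ | × | √ | 50.2 | 94.9 | 47.2 | 59.1 | 17 | 30.0 |
| √ | √ | √ | 50.5 | 95.5 | 47.8 | 59.5 | 17 | 30.0 |

Note: "√" means the improvement strategy is applied; "×" means it is not applied.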

Table 7. Algorithm accuracy comparison

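| Framework | Backbone | mAP/% | AP50/% | AP75/% |
| --- | --- | --- | --- | --- |
| CenterNet | ResNet-18 | 29.5 | 77.8 | 13.3 |
| CenterNet | ResNet-101 | 34.9 | 85.7 | 18.2 |
| CenterNet | DLA34 | 41.9 | 89.8 | 31.8 |
| CenterNet | DLA34-DCN | 43.7 | 90.2 | 34.2 |
| CenterNet | Hourglass | 47.2 | 93.5 | 42.3 |
| S-CenterNet | DLAS28 | 48.3 | 94.1 | 44.0 |
| S-CenterNet | DLAX28 | 50.5 | 95.5 | 47.8 |
| S-CenterNet | DLAX28-DCN | 51.6 | 95.3 | 50.1 |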

Table 8. Algorithm speed and model complexity comparison

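| Framework | Backbone | Inference time/ms | Parameters/MB |
| --- | --- | --- | --- |
| CenterNet | ResNet-18 | 8 | 63.3 |
| CenterNet | ResNet-101 | 19 | 214.3 |
| CenterNet | DLA34 | 11 | 74.0 |
| CenterNet | DLA34-DCN | 16 | 78.8 |
| CenterNet | Hourglass | 49 | 765.7 |
| S-CenterNet | DLAS28 | 12 | 8.3 |
| S-CenterNet | DLAX28 | 17 | 30.0 |
| S-CenterNet | DLAX28-DCN | 21 | 32.1 |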

