Borehole image detection of aero-engine based on self-attention semantic segmentation model

-

摘要:

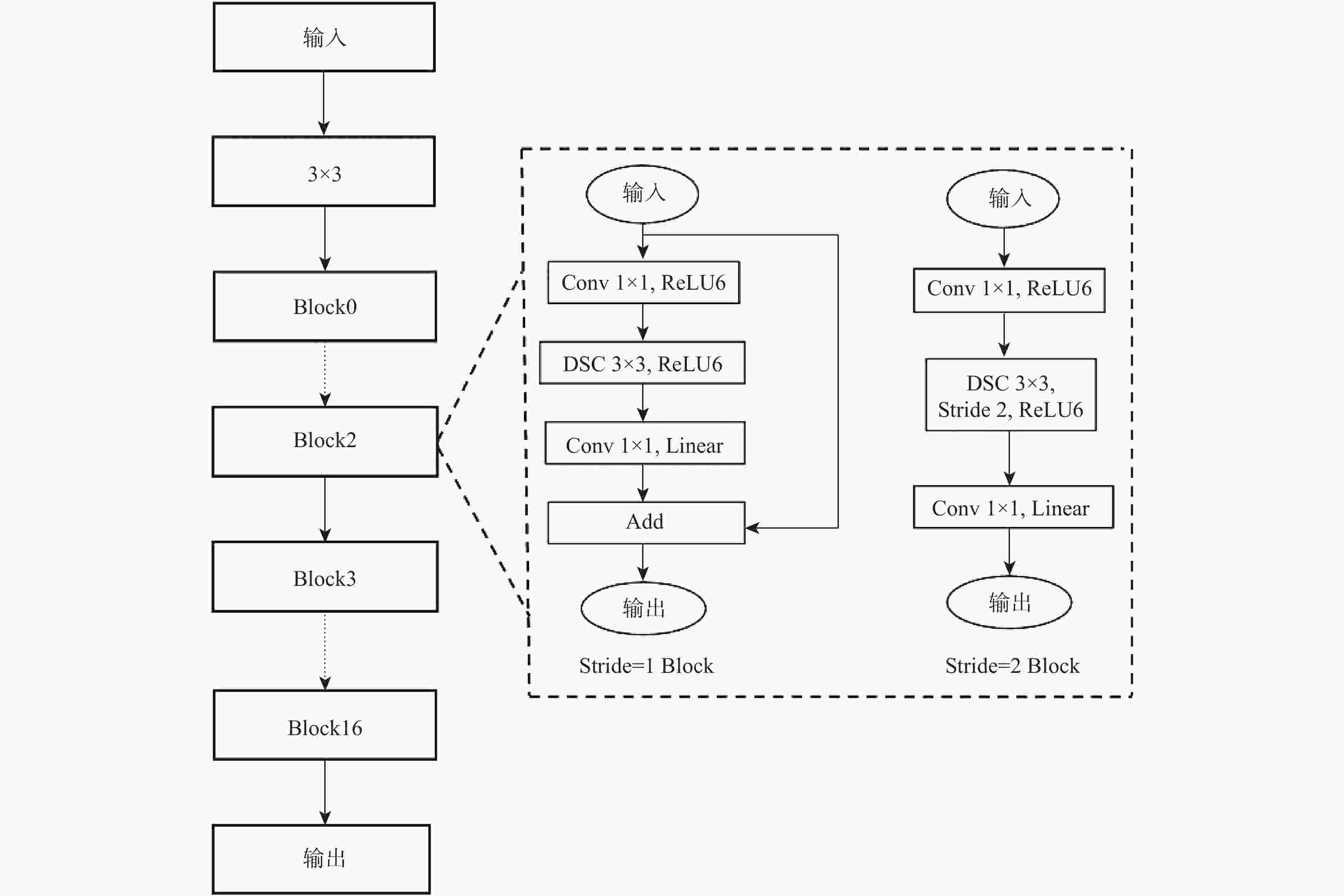

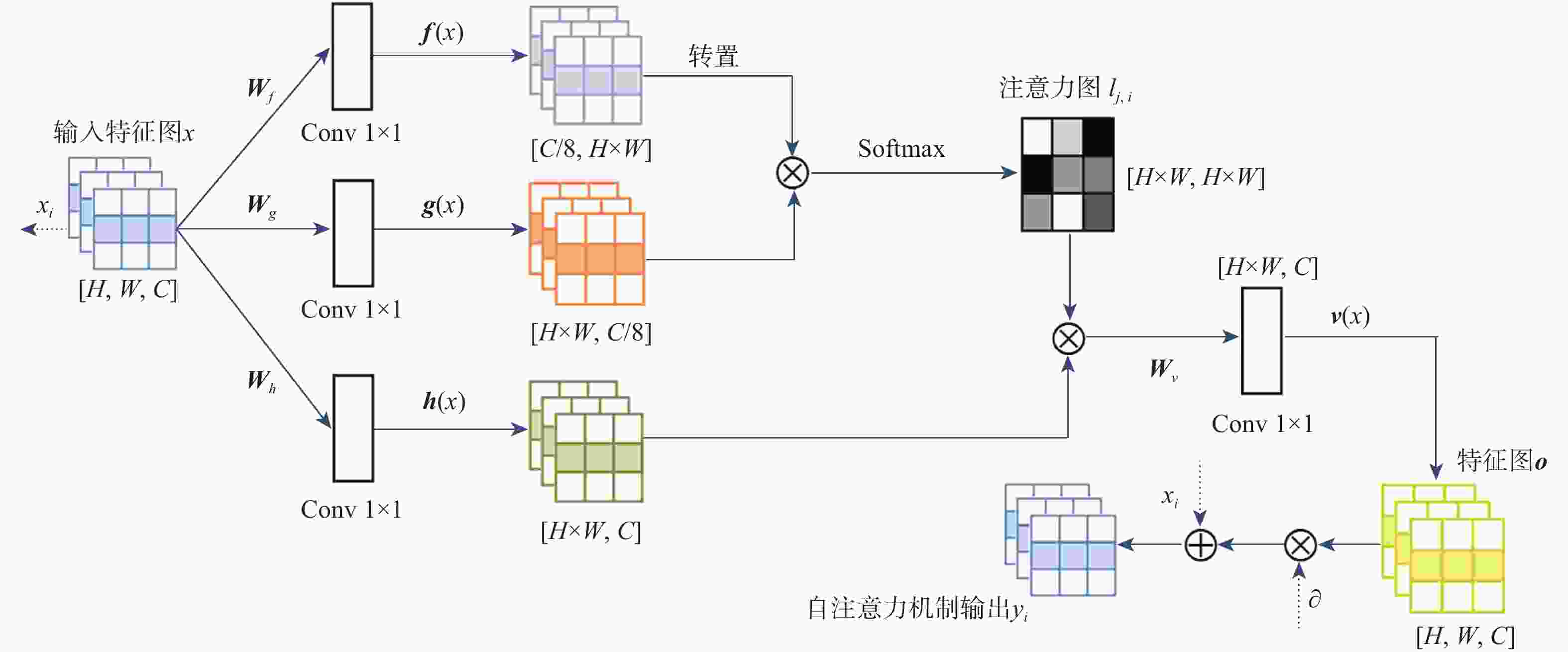

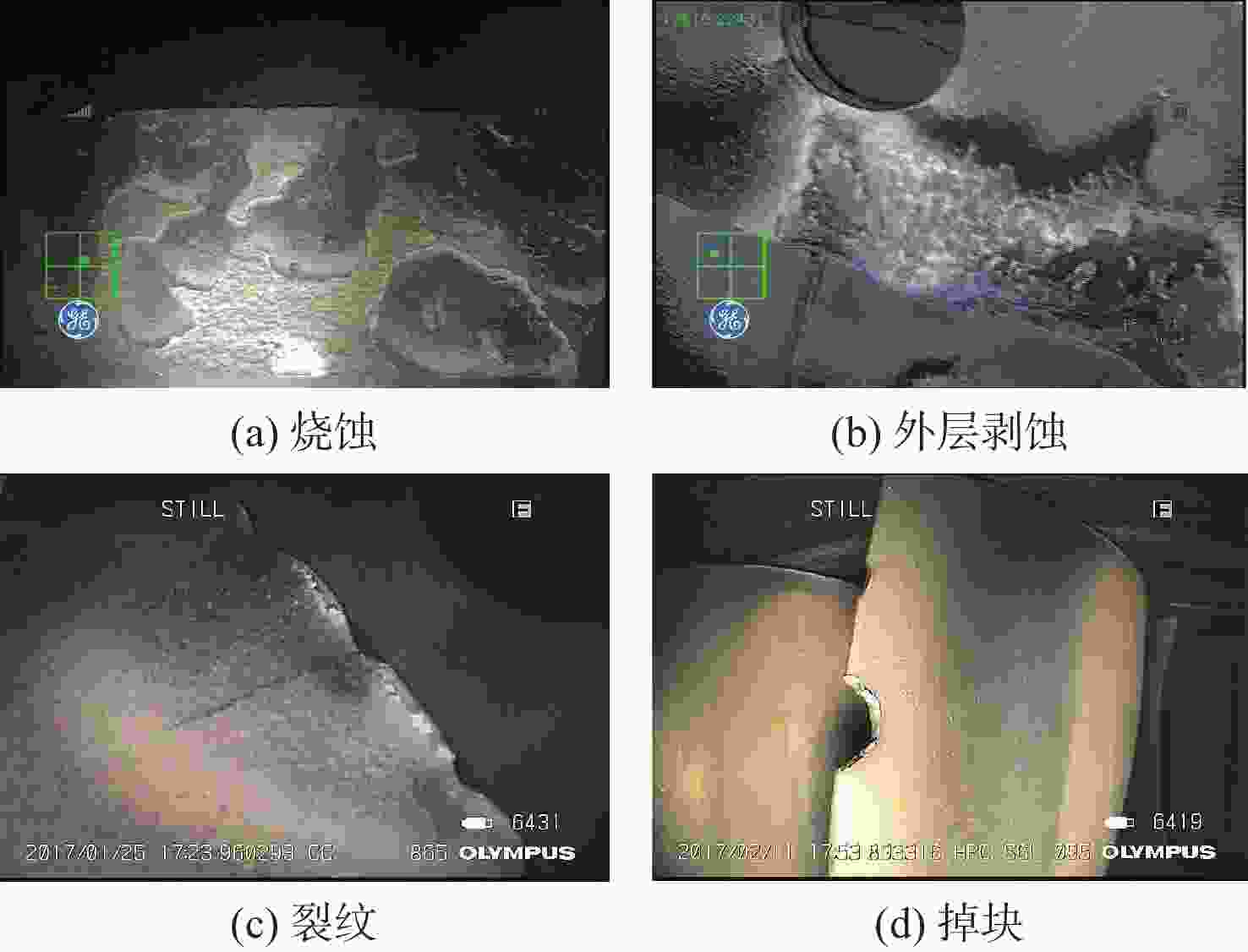

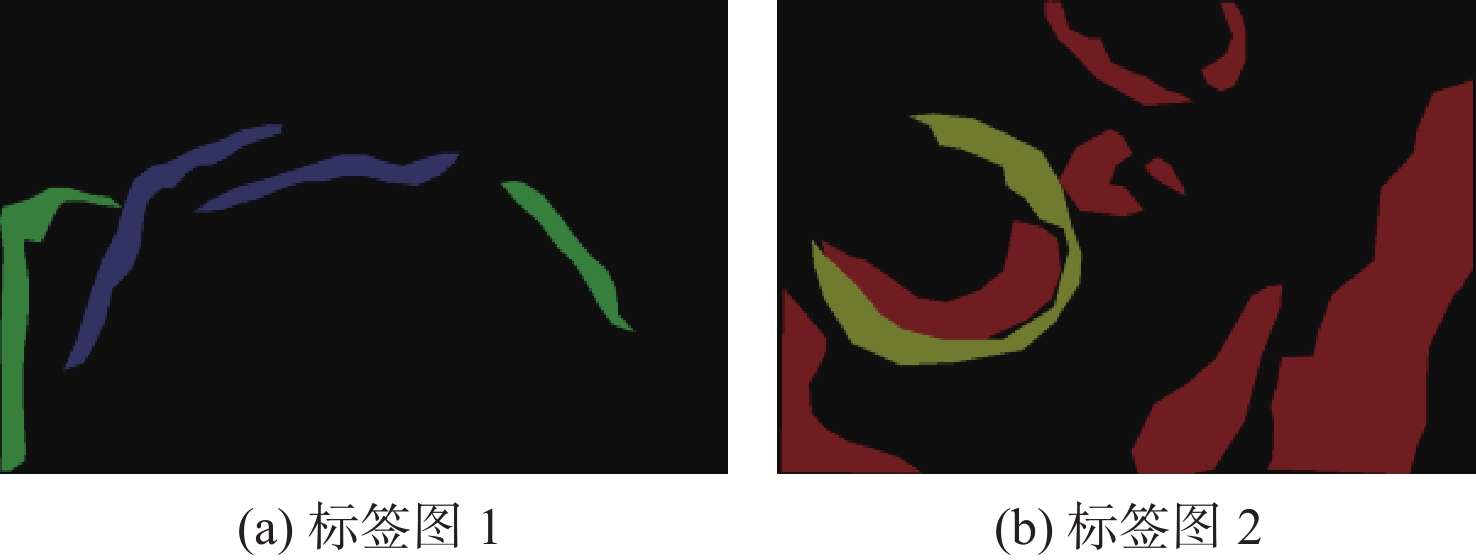

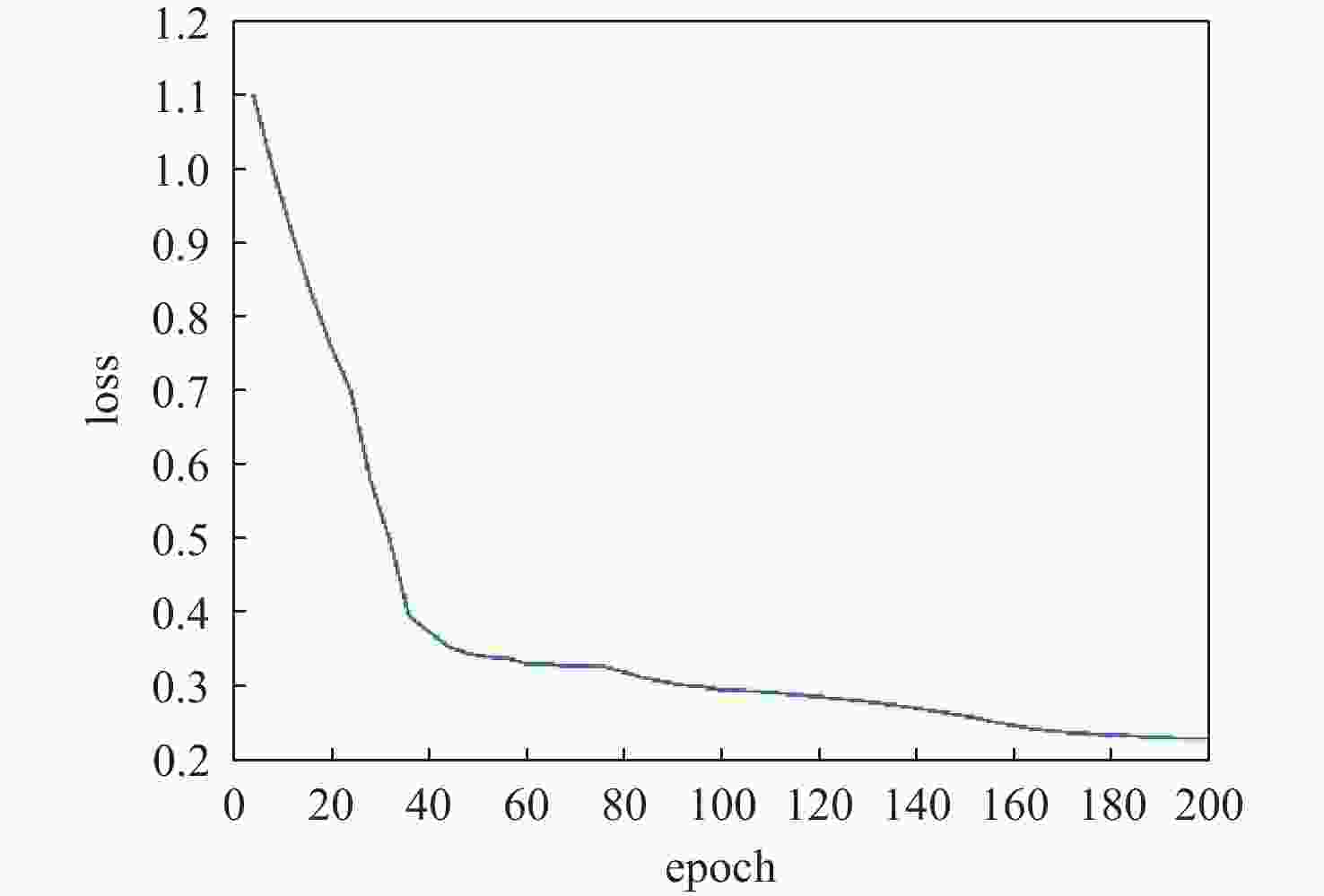

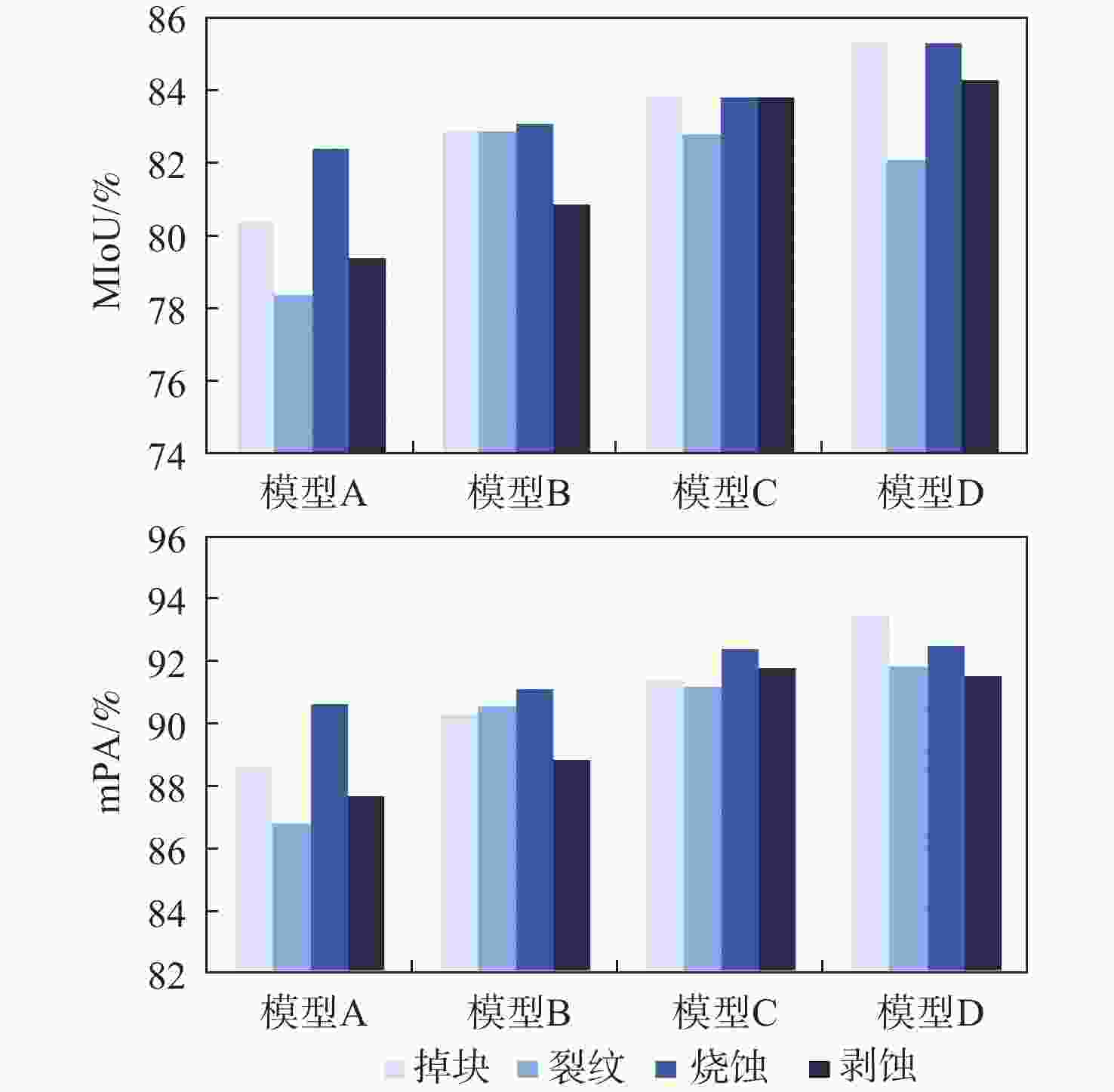

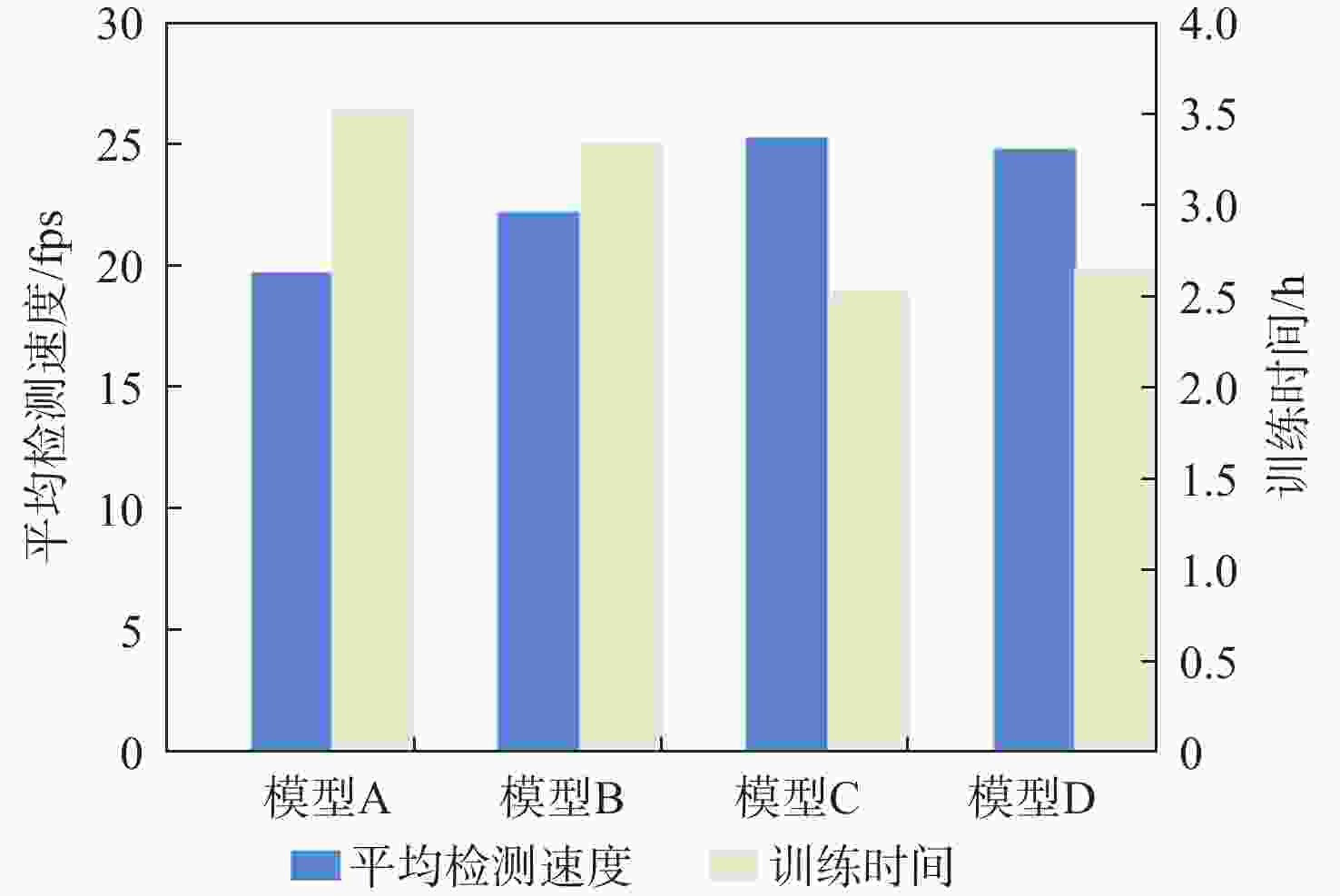

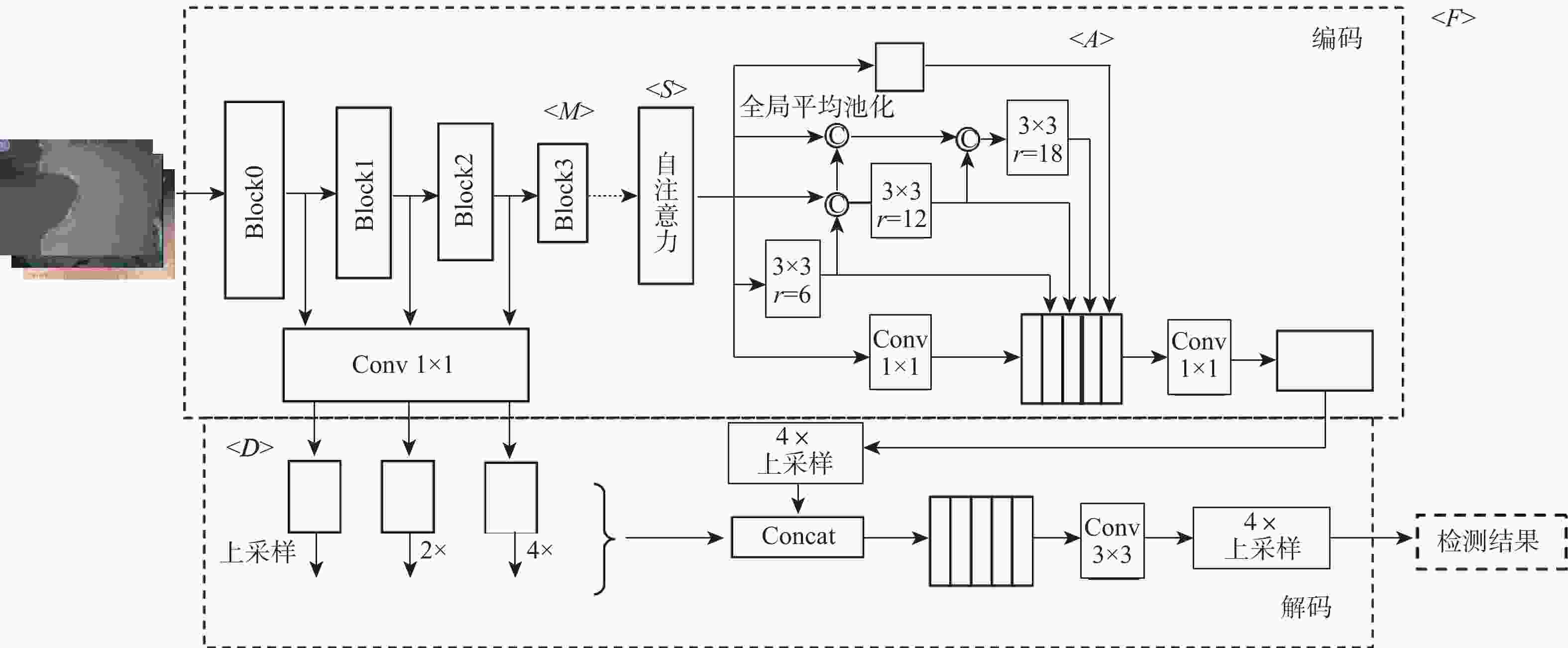

针对传统语义分割模型对于航空发动机孔探图像内损伤的检测存在小尺度或高相似度损伤易被漏检误判的问题,提出了一种基于自注意力语义分割(SA-SS)模型的航空发动机孔探图像检测方法。基于语义分割模型DeepLabv3+的总体架构,采用轻量级MobileNetV2替代原始的Xception作为主干特征提取网络,利用扩张—提取—压缩的结构进行特征提取,以减少模型计算量。基于多层级联结构,改进原始DeepLabv3+的空洞空间金字塔池化结构,使特征图保有更丰富的特征信息。在模型内融合一种自注意力机制,建立全局像素的内部相关性,加强对细节特征的注意力。改进原始DeepLabv3+的解码层,将多尺度空间融合方法引入低层特征提取,融合多个跃层特征。实验结果表明:与传统DeepLabv3+、SegNet-ResNet等方法相比,SA-SS模型的平均交并比和平均像素精确度最大分别提升了4.10%和3.92%,训练时间和平均检测速度最大分别改善了24.43%和5.11 帧/s。
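The expansion-extraction-compression structure mentioned in the abstract can be illustrated with a rough cost estimate. The sketch below is not taken from the paper: it assumes the usual MobileNetV2 inverted residual layout (a 1×1 expansion, a 3×3 depthwise convolution, and a 1×1 linear projection, all at stride 1), and the function names and the 14×14, 64-channel example are hypothetical.

```python
def inverted_residual_madds(height, width, c_in, c_out, t, kernel=3):
    """Multiply-adds of one stride-1 expand -> depthwise -> project block."""
    hidden = t * c_in                                   # expanded channel count
    expand = height * width * c_in * hidden             # 1x1 expansion
    depthwise = height * width * hidden * kernel ** 2   # kxk depthwise extraction
    project = height * width * hidden * c_out           # 1x1 linear compression
    return expand + depthwise + project


def standard_conv_madds(height, width, c_in, c_out, kernel=3):
    """Multiply-adds of an ordinary kxk convolution at stride 1."""
    return height * width * c_in * c_out * kernel ** 2


# Hypothetical example: 14x14 feature map, 64 -> 64 channels, expansion factor 6.
block_cost = inverted_residual_madds(14, 14, 64, 64, t=6)
# For comparison, a standard 3x3 convolution applied at the expanded width (384 channels).
dense_cost = standard_conv_madds(14, 14, 6 * 64, 6 * 64)
```

Under these assumptions the block costs far fewer multiply-adds than a dense 3×3 convolution at the same expanded width, which is the kind of saving the lightweight backbone is meant to provide.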

Keywords (translated from the Chinese):

- borescope images
- fault diagnosis
- self-attention
- semantic segmentation
- DeepLabv3+ model

Abstract: Traditional semantic segmentation models tend to miss or misjudge small-scale or highly similar damage when detecting damage in borescope images of aero-engines. To address this problem, a detection method based on a self-attention semantic segmentation (SA-SS) model is proposed. Based on the overall architecture of the classical semantic segmentation model DeepLabv3+, a lightweight MobileNetV2 is adopted as the backbone feature extraction network instead of Xception, reducing computation through an expansion-extraction-compression strategy. Based on the idea of a multi-layer cascade, the original atrous spatial pyramid pooling structure of DeepLabv3+ is improved to keep more feature information in the feature maps. A self-attention mechanism is fused into the model to establish the internal correlation of global pixels and strengthen attention to details. The decoding layer of the original DeepLabv3+ is also improved: a multi-scale spatial fusion method is introduced into low-level feature extraction to fuse multiple skip-layer features. Experimental results show that, compared with the original DeepLabv3+, SegNet-ResNet, and other methods, the mean intersection over union (MIoU) and mean pixel accuracy (mPA) of SA-SS increase by up to 4.10% and 3.92%, respectively, while training time and average detection speed improve by up to 24.43% and 5.11 frames/s, respectively.
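To make the idea of "internal correlation of global pixels" concrete, the following is a minimal sketch of generic scaled dot-product self-attention over a flattened feature map. It is an illustration only, not the SA-SS attention module: the abstract does not specify the design, so the sketch assumes identity projections for queries, keys, and values, and the toy feature values are made up.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(pixels):
    """Scaled dot-product self-attention over a list of pixel feature vectors.

    Assumption: queries, keys and values are the features themselves
    (identity projections); a trained model would learn these projections.
    """
    dim = len(pixels[0])
    scale = math.sqrt(dim)
    outputs, weights_all = [], []
    for query in pixels:
        # Similarity of this pixel to every pixel in the map (global context).
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in pixels]
        weights = softmax(scores)
        weights_all.append(weights)
        # Each output is a weighted mix of all pixel features.
        outputs.append(
            [sum(w * value[d] for w, value in zip(weights, pixels)) for d in range(dim)]
        )
    return outputs, weights_all


# A 2x2 feature map flattened to four pixels with two channels each (made-up values).
feature_map = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
attended, attention = self_attention(feature_map)
```

Each row of `attention` sums to 1, and a pixel attends more strongly to pixels with similar features, regardless of how far apart they are in the image.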

Key words:

- borescope images
- fault diagnosis
- self-attention
- semantic segmentation
- DeepLabv3+ model

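Table 1. Parameters of MobileNetV2

| Input | Operation | t | p | q | s |
|---|---|---|---|---|---|
| 224² × 3 | conv2d | - | 32 | 1 | 2 |
| 112² × 32 | Block | 1 | 16 | 1 | 1 |
| 112² × 16 | Block | 6 | 24 | 2 | 2 |
| 56² × 24 | Block | 6 | 32 | 3 | 2 |
| 28² × 32 | Block | 6 | 64 | 4 | 2 |
| 14² × 64 | Block | 6 | 96 | 3 | 1 |
| 14² × 96 | Block | 6 | 160 | 3 | 2 |
| 7² × 160 | Block | 6 | 320 | 1 | 1 |
| 7² × 320 | conv2d | - | 1280 | 1 | 1 |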
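The rows of Table 1 can be checked against one another with a short script. The column meanings are not defined on this page, so the sketch assumes they follow the usual MobileNetV2 convention: t is the expansion factor, p the output channel count, q the number of repeats, and s the stride of the first repeat. The names `TABLE_1`, `trace_shapes`, and `is_consistent` are illustrative only.

```python
# Rows of Table 1: (input size, input channels, operation, t, p, q, s).
# t is None for the plain conv2d layers, which list no expansion factor.
TABLE_1 = [
    (224, 3, "conv2d", None, 32, 1, 2),
    (112, 32, "Block", 1, 16, 1, 1),
    (112, 16, "Block", 6, 24, 2, 2),
    (56, 24, "Block", 6, 32, 3, 2),
    (28, 32, "Block", 6, 64, 4, 2),
    (14, 64, "Block", 6, 96, 3, 1),
    (14, 96, "Block", 6, 160, 3, 2),
    (7, 160, "Block", 6, 320, 1, 1),
    (7, 320, "conv2d", None, 1280, 1, 1),
]


def trace_shapes(rows):
    """Return (size, channels) leaving each stage, assuming the stride s
    divides the spatial size once per stage and p is the output channel count."""
    return [(size // s, p) for size, _channels, _op, _t, p, _q, s in rows]


def is_consistent(rows):
    """Check that every stage's output matches the next stage's listed input."""
    outputs = trace_shapes(rows)
    return all(out == (nxt[0], nxt[1]) for out, nxt in zip(outputs, rows[1:]))


final_shape = trace_shapes(TABLE_1)[-1]
table_ok = is_consistent(TABLE_1)
```

Under this reading every listed input matches the previous stage's output, and the backbone reduces a 224 × 224 input to a 7 × 7 map with 1280 channels.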

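Table 2. Dataset information

| Label | Type | Number of images | Size (pixels × pixels) | Mask color |
|---|---|---|---|---|
| background | Background | | | Black |
| burn | Ablation (burn) | 631 | 513 × 513 | Red |
| coat | Coating spalling | 656 | 513 × 513 | Green |
| crack | Crack | 639 | 513 × 513 | Blue |
| material | Material loss | 574 | 513 × 513 | Yellow |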
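For training, color masks like those described in Table 2 are typically converted to integer class indices. The sketch below shows one way to do this. Table 2 names only the mask colors, so the exact RGB values (pure black, red, green, blue, and yellow) and the index order are assumptions, as are the names `PALETTE` and `mask_to_indices`.

```python
# Assumed RGB values: Table 2 names only the colors, not their exact values.
PALETTE = {
    "background": (0, 0, 0),    # black
    "burn": (255, 0, 0),        # red
    "coat": (0, 255, 0),        # green
    "crack": (0, 0, 255),       # blue
    "material": (255, 255, 0),  # yellow
}
LABELS = list(PALETTE)  # index order follows the table rows
COLOR_TO_INDEX = {rgb: i for i, rgb in enumerate(PALETTE.values())}


def mask_to_indices(mask):
    """Convert a 2-D grid of RGB tuples into a grid of class indices."""
    return [[COLOR_TO_INDEX[pixel] for pixel in row] for row in mask]


# Toy 2x2 mask: background, burn / crack, material.
toy_mask = [
    [(0, 0, 0), (255, 0, 0)],
    [(0, 0, 255), (255, 255, 0)],
]
indices = mask_to_indices(toy_mask)
```

A pixel whose color is not in the palette raises a `KeyError` here, which is a deliberate choice in this sketch so that annotation errors surface early rather than being silently mapped to background.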

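Table 3. Average performance of four models (%)

| Model | Test set MIoU | Test set mPA | Validation set MIoU | Validation set mPA |
|---|---|---|---|---|
| A | 80.15 | 88.47 | 79.70 | 89.54 |
| B | 82.45 | 90.25 | 82.11 | 92.76 |
| C | 83.55 | 91.76 | 83.72 | 92.59 |
| D | 84.25 | 92.39 | 85.14 | 94.69 |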
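The MIoU and mPA figures in Table 3 are standard segmentation metrics. As a minimal sketch, assuming the usual definitions (per-class IoU = TP / (TP + FP + FN), per-class pixel accuracy = TP / (TP + FN), each averaged over classes), they can be computed from a pixel-level confusion matrix as follows. The paper's own evaluation code is not shown here, and the toy labels are made up.

```python
def confusion_matrix(truth, predicted, num_classes):
    """Count pixels: rows are ground-truth classes, columns are predictions."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(truth, predicted):
        matrix[t][p] += 1
    return matrix


def mean_iou(matrix):
    """Mean over classes of TP / (TP + FP + FN); empty classes are skipped."""
    n = len(matrix)
    ious = []
    for c in range(n):
        tp = matrix[c][c]
        fn = sum(matrix[c]) - tp
        fp = sum(matrix[r][c] for r in range(n)) - tp
        denom = tp + fp + fn
        if denom:
            ious.append(tp / denom)
    return sum(ious) / len(ious)


def mean_pixel_accuracy(matrix):
    """Mean over classes of TP / (TP + FN); classes with no pixels are skipped."""
    accs = [row[c] / sum(row) for c, row in enumerate(matrix) if sum(row)]
    return sum(accs) / len(accs)


# Toy example: eight pixels, three classes (made-up labels).
truth = [0, 0, 0, 0, 1, 1, 2, 2]
predicted = [0, 0, 0, 1, 1, 1, 2, 0]
cm = confusion_matrix(truth, predicted, 3)
miou = mean_iou(cm)
mpa = mean_pixel_accuracy(cm)
```

In Table 3, the test-set gaps between models D and A are 84.25 − 80.15 = 4.10 for MIoU and 92.39 − 88.47 = 3.92 for mPA, which match the maximum improvements quoted in the abstract.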

