Cross-domain person re-identification based on progressive attention and block occlusion

摘要:

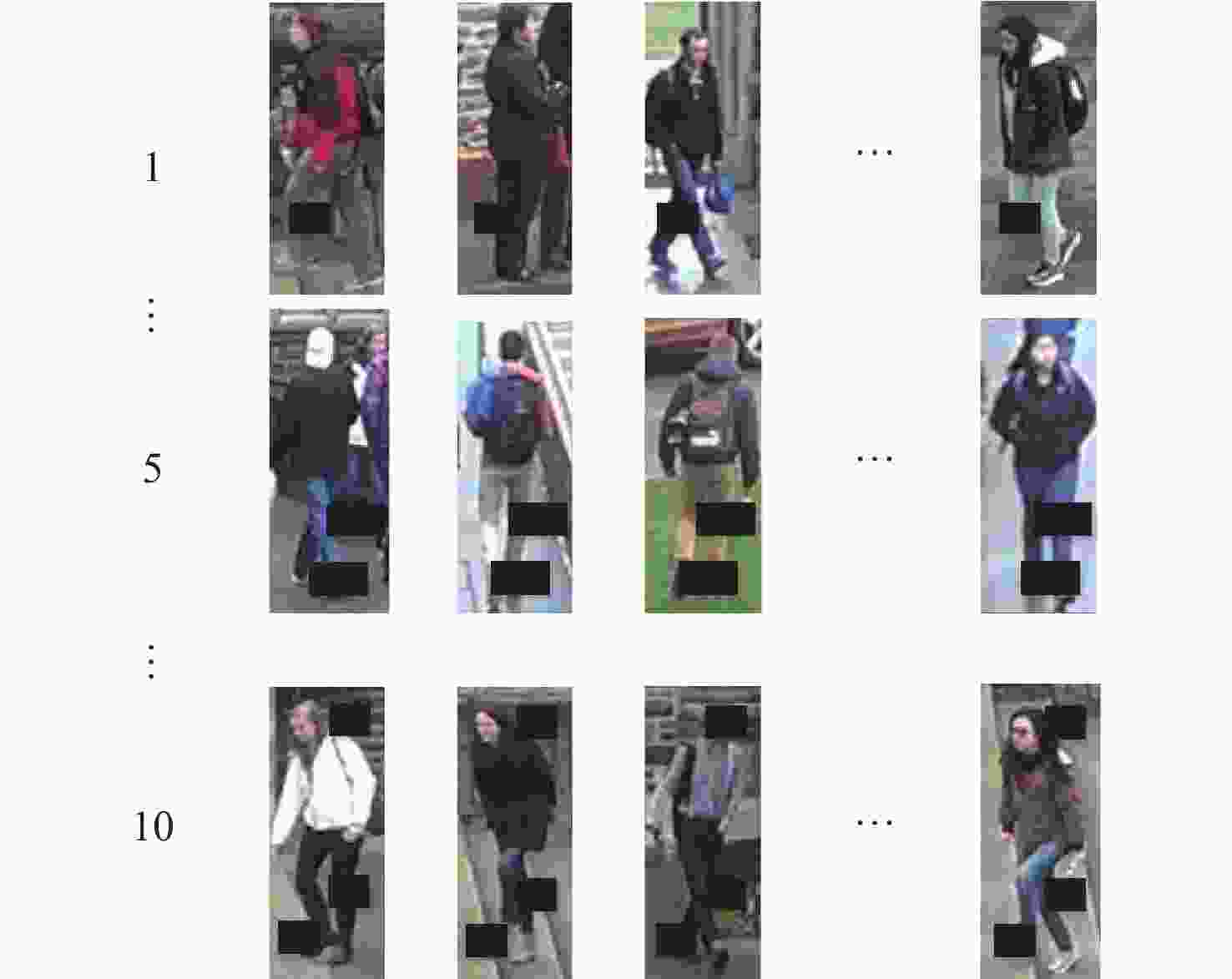

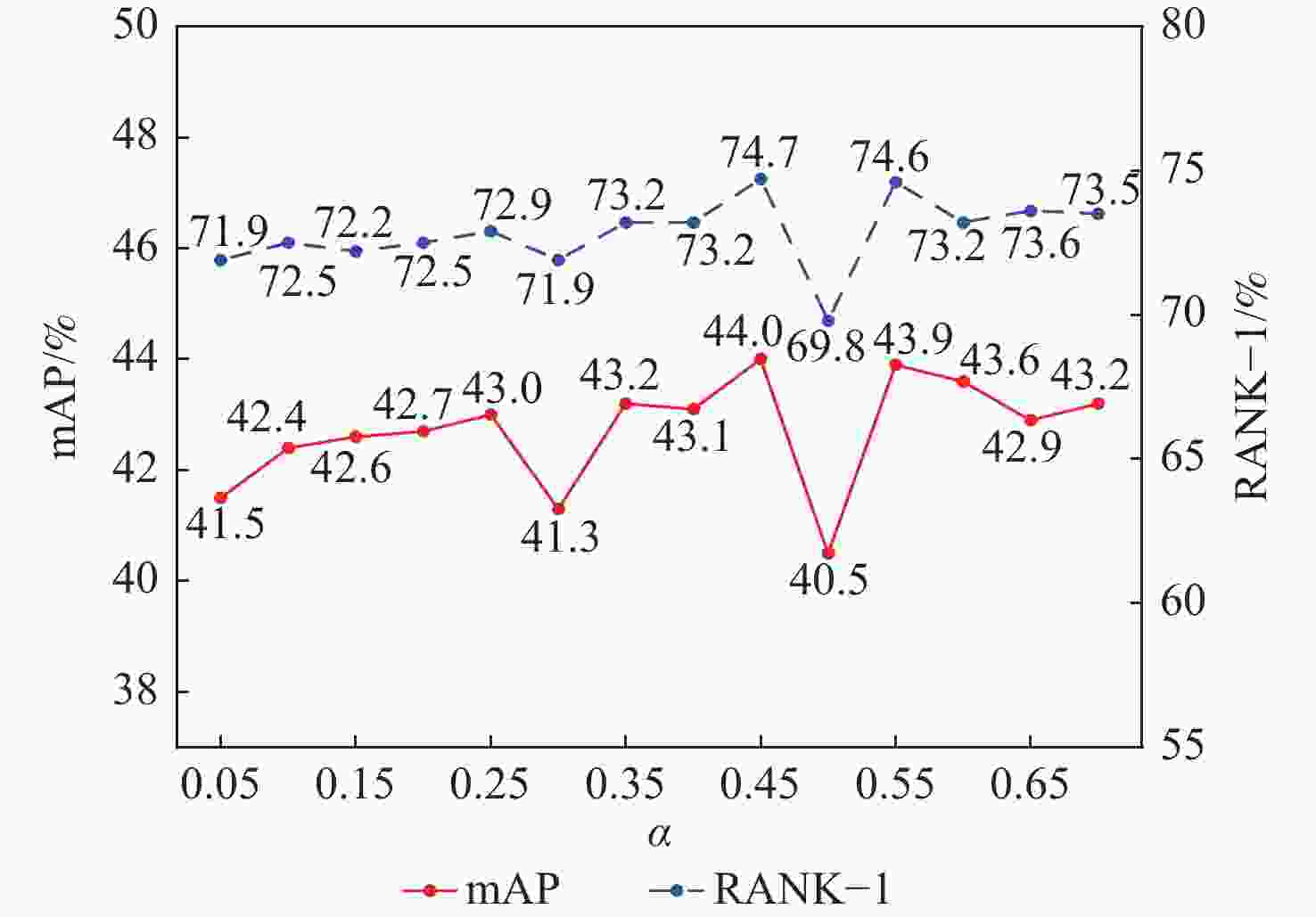

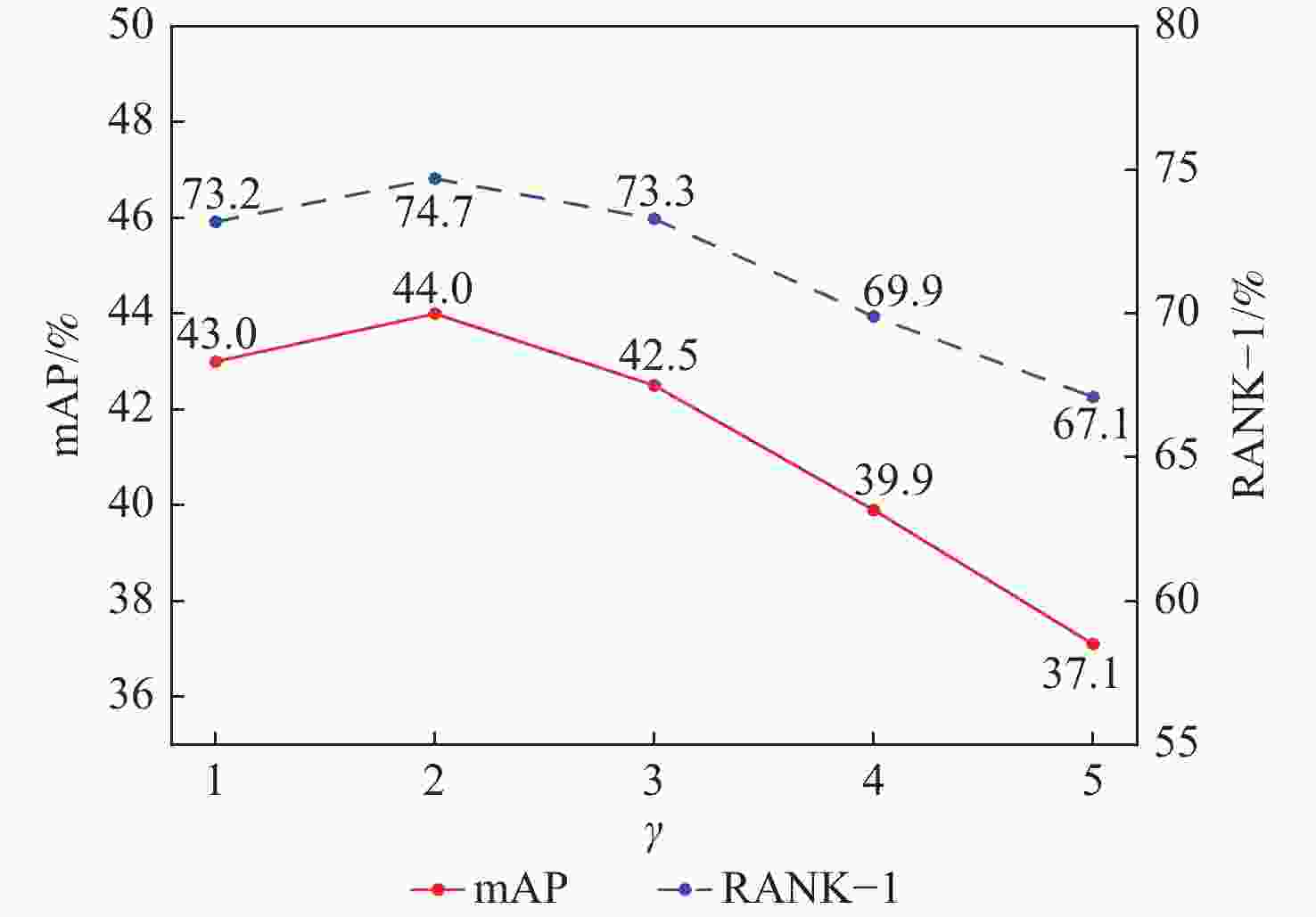

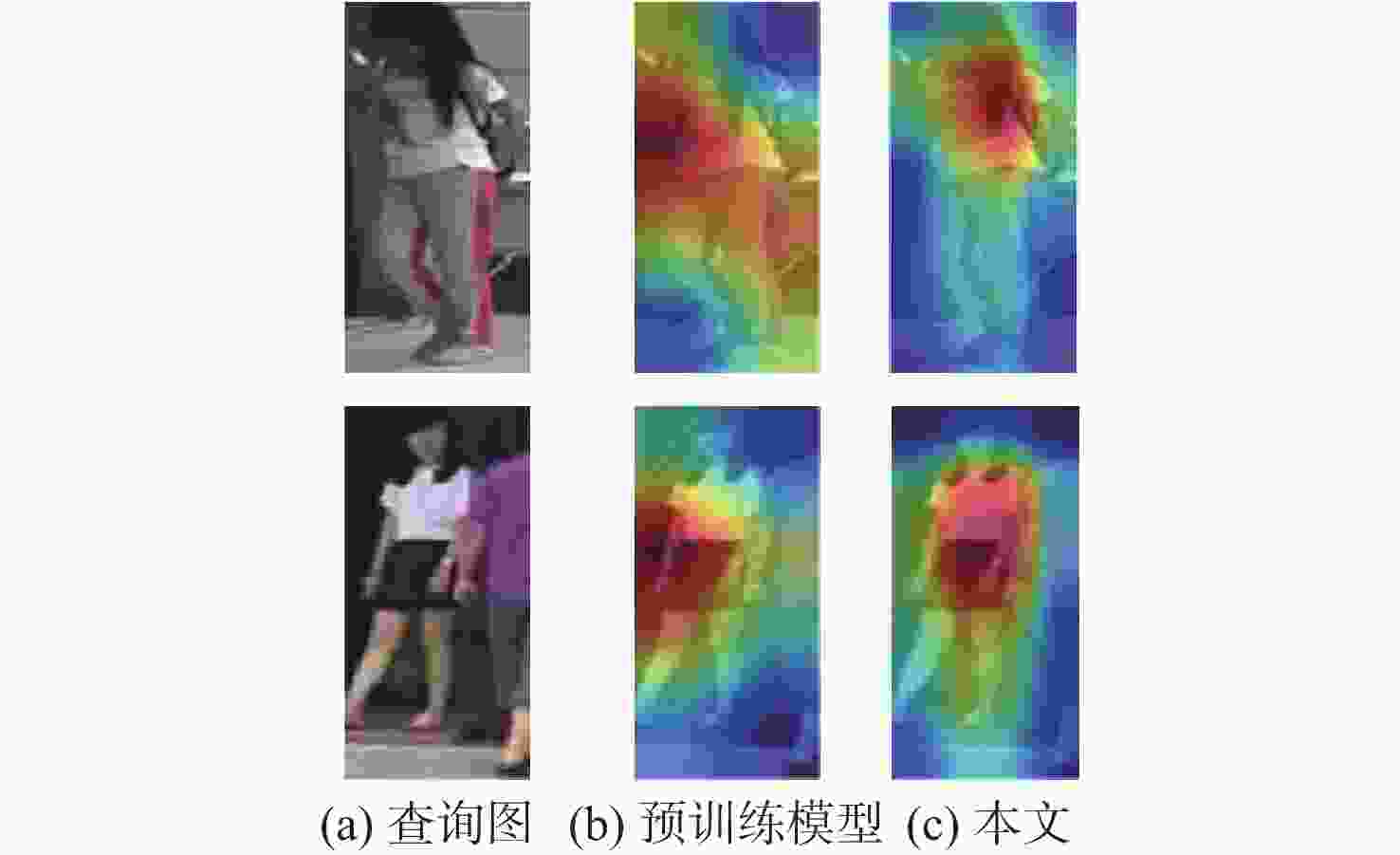

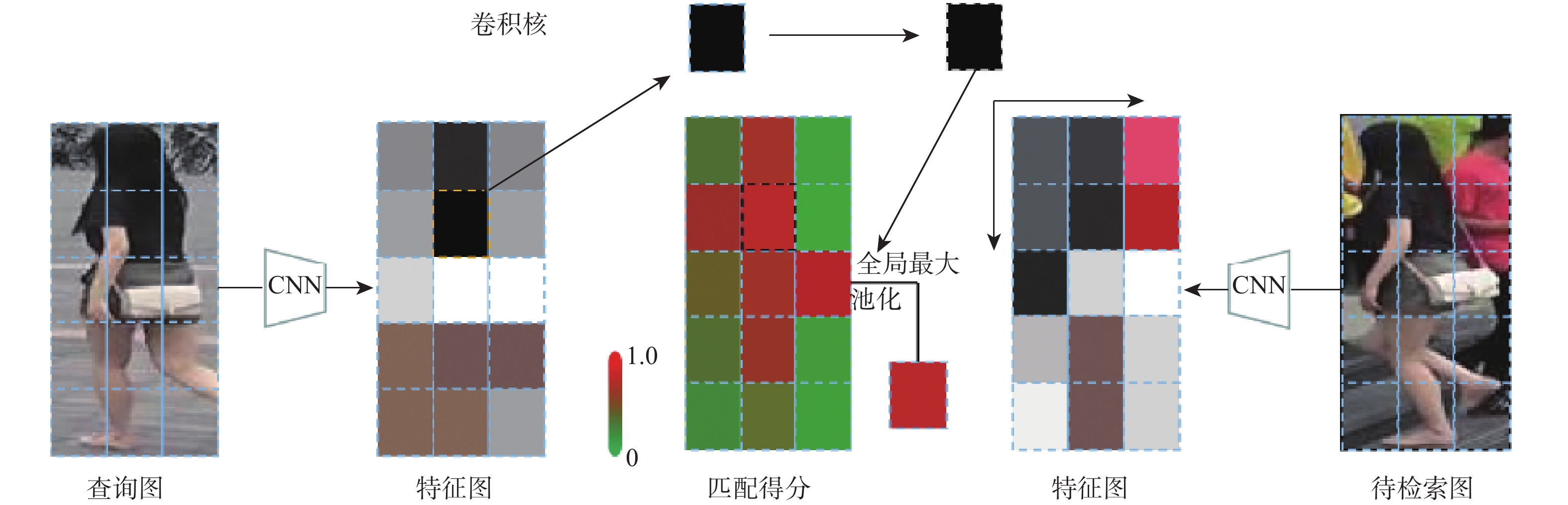

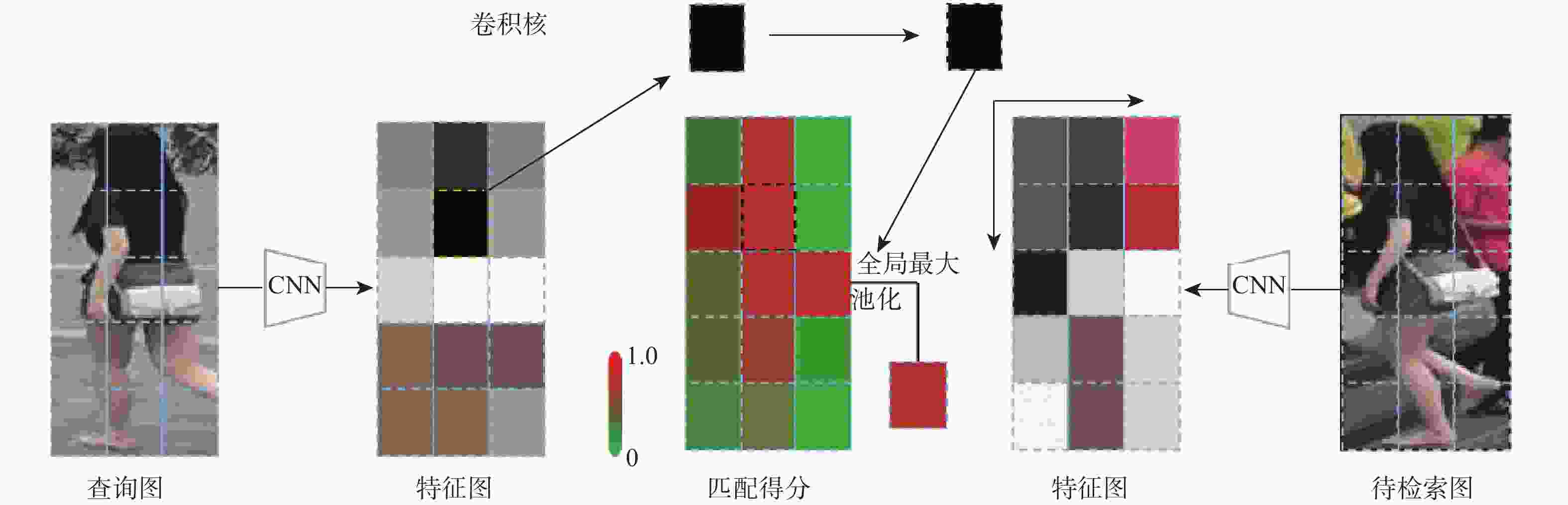

针对跨域行人重识别中遮挡造成特征匹配缺失及细粒度辨识性特征被忽略的问题,提出了基于渐进式注意力和分块遮挡的跨域行人重识别方法。该方法通过学习行人未遮挡区域的多粒度辨别性特征,实现空间不对齐下的特征匹配。渐进式注意力模块将特征逐步分割为多个局部块,依次学习每块的辨别性特征,由粗到细地感知前景信息,从而解决目前网络不能提取多层次辨识性特征的问题,增强了特征的匹配能力;渐进式分块遮挡模块很好地适应模型逐步变强的学习能力特性,通过由易到难地生成遮挡数据,有效提取了未遮挡区域的辨识性特征,进而解决模型错误识别遮挡样本的问题,使得所提模型在遮挡情况下的鲁棒性得到有效提高。实验结果表明:所提方法在首位命中率和平均精确度2个指标上与当前主流方法相比具有显著的优越性;与2020年CVPR会议中QAConv行人重识别方法相比,在DukeMTMC-reID数据集(MSMT17→DukeMTMC-reID)上的2个指标分别高出2.3%和6.2%,能够更加有效地实现跨域行人重识别,在Occluded-Duke数据集(DukeMTMC-reID→Occluded-Duke)上的2个指标分别达到49.5%和39.0%,在遮挡数据集上有着很好的识别效果。

Abstract:A cross-domain person re-identification method based on progressive attention and block occlusion is proposed in order to address the issue of missing feature matching caused by occlusion and neglect of fine-grained discriminative features in cross-domain person re-identification. This method realizes feature matching under spatial misalignment by learning multi-granularity discriminative features of regions where people are not blocked. The progressive attention module gradually divides the features into multiple local blocks, learns the discriminative features of each block in turn, and perceives the foreground information from coarse to fine, which solves the problem that the current network cannot extract multi-level distinguishing features and improves the feature matching ability of the model. In addition, the progressive block occlusion module is well adapted to the gradually stronger learning ability of the model. The robustness of the model proposed in this paper is finally effectively improved in the case of occlusion by effectively generating occlusion data from easy to difficult, effectively extracting the identifying features of non-occlusion areas, and then solving the problem of the model misidentifying occluded samples. The experimental results show that the algorithm has significant advantages compared with the current mainstream algorithms in the two indicators of first hit rate and mean average accuracy. Especially when compared with the QAConv person re-identification algorithm published in CVPR in 2020, the two indicators of this algorithm on the DukeMTMC-reID dataset (MSMT17→DukeMTMC-reID) are 2.3% and 6.2% higher, respectively, and the algorithm in this paper can realize cross-domain person re-identification more effectively. Additionally, the DukeMTMC-reID→Occluded-Duke dataset shows good recognition results for the system in this article, with the two indicators reaching 49.5% and 39.0%, respectively.
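The easy-to-hard occlusion idea described in the abstract can be sketched as follows. This is a minimal illustration, not the authors' implementation: the grid size, the linear schedule, the zero fill value, and the names `occlusion_ratio` and `occlude_blocks` are all assumptions made for this example.

```python
import numpy as np


def occlusion_ratio(epoch, total_epochs, max_ratio=0.5):
    """Hypothetical linear schedule: fraction of blocks occluded at this epoch."""
    if total_epochs <= 1:
        return max_ratio
    progress = min(max(epoch / (total_epochs - 1), 0.0), 1.0)
    return max_ratio * progress


def occlude_blocks(image, ratio, grid=(4, 2), rng=None):
    """Zero out a random subset of grid blocks and return a modified copy."""
    rng = np.random.default_rng() if rng is None else rng
    rows, cols = grid
    height, width = image.shape[:2]
    n_blocks = rows * cols
    n_occluded = int(round(ratio * n_blocks))
    out = image.copy()
    # Pick distinct block indices, then map each index to its pixel rectangle.
    chosen = rng.choice(n_blocks, size=n_occluded, replace=False)
    for idx in chosen:
        r, c = divmod(int(idx), cols)
        top, bottom = r * height // rows, (r + 1) * height // rows
        left, right = c * width // cols, (c + 1) * width // cols
        out[top:bottom, left:right] = 0  # assumed fill value
    return out


if __name__ == "__main__":
    image = np.ones((256, 128, 3), dtype=np.float32)
    for epoch in (0, 5, 9):
        ratio = occlusion_ratio(epoch, total_epochs=10)
        occluded = occlude_blocks(image, ratio, rng=np.random.default_rng(epoch))
        print(epoch, round(ratio, 3), float((occluded == 0).mean()))
```

Early epochs leave the image almost intact, while later epochs hide up to half of the blocks, so the training samples get harder as the model gets stronger. Any monotonically increasing schedule would serve the same purpose in this sketch.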

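Table 1. Ablation studies of different attention modules

| Method | Rank-1 (%), Dataset 1 | Rank-1 (%), Dataset 2 | mAP (%), Dataset 1 | mAP (%), Dataset 2 |
| --- | --- | --- | --- | --- |
| QAConv[20] | 54.4 | 62.8 | 33.6 | 31.6 |
| PABO-QAConv (SE) | 60.4 | 74.9 | 38.6 | 44.1 |
| PABO-QAConv (SA) | 57.1 | 71.9 | 36.7 | 41.4 |
| PABO-QAConv (CBAM) | 58.0 | 55.1 | 34.7 | 23.3 |

Note: Dataset 1 is trained on Market1501 and tested on DukeMTMC-reID; Dataset 2 is trained on DukeMTMC-reID and tested on Market1501.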
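The two metrics reported in these tables, Rank-1 and mAP, can be computed from a query-by-gallery distance matrix roughly as sketched below. This is a simplified illustration under stated assumptions: the function name `rank1_and_map` is hypothetical, and the usual re-identification protocol step of discarding gallery images taken by the same camera as the query is omitted.

```python
import numpy as np


def rank1_and_map(dist, query_ids, gallery_ids):
    """Return (Rank-1, mAP) as fractions for a query-by-gallery distance matrix."""
    dist = np.asarray(dist, dtype=float)
    query_ids = np.asarray(query_ids)
    gallery_ids = np.asarray(gallery_ids)
    rank1_hits, average_precisions = [], []
    for q in range(dist.shape[0]):
        # Sort the gallery from nearest to farthest for this query.
        order = np.argsort(dist[q], kind="stable")
        matches = gallery_ids[order] == query_ids[q]
        if not matches.any():
            continue  # query identity absent from the gallery: skipped
        rank1_hits.append(float(matches[0]))
        # Precision at each rank where a correct match appears.
        hit_ranks = np.flatnonzero(matches) + 1
        precisions = np.arange(1, hit_ranks.size + 1) / hit_ranks
        average_precisions.append(precisions.mean())
    if not rank1_hits:
        raise ValueError("no query has a matching gallery identity")
    return float(np.mean(rank1_hits)), float(np.mean(average_precisions))


if __name__ == "__main__":
    dist = [[0.1, 0.5, 0.3, 0.9],
            [0.2, 0.4, 0.6, 0.8]]
    rank1, mean_ap = rank1_and_map(dist, [1, 2], [1, 2, 1, 3])
    print(rank1, mean_ap)
```

In the toy example, the first query retrieves both of its matches at ranks 1 and 2, while the second query finds its only match at rank 2, so half of the queries score a Rank-1 hit.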

Table 2. Ablation studies of different modules

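| Method | Rank-1 (%), Dataset 1 | Rank-1 (%), Dataset 2 | mAP (%), Dataset 1 | mAP (%), Dataset 2 |
| --- | --- | --- | --- | --- |
| QAConv[20] | 54.4 | 62.8 | 33.6 | 31.6 |
| QAConv+PBO | 56.9 | 73.2 | 37.1 | 42.7 |
| QAConv+PA | 58.2 | 72.4 | 37.3 | 42.5 |
| PABO-QAConv | 60.4 | 74.9 | 38.6 | 44.1 |

Note: Dataset 1 is trained on Market1501 and tested on DukeMTMC-reID; Dataset 2 is trained on DukeMTMC-reID and tested on Market1501. PA denotes the progressive attention module and PBO the progressive block occlusion module.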

Table 3. Ablation studies on the occluded dataset

| Method | Rank-1 (%) | mAP (%) |
| --- | --- | --- |
| QAConv | 37.2 | 27.8 |
| QAConv+Rerank+Tlift | 53.7 | 54.4 |
| QAConv+PA | 43.7 | 35.4 |
| QAConv+PA+Rerank+Tlift | 60.6 | 62.6 |
| QAConv+PBO | 48.5 | 38.0 |
| QAConv+PBO+Rerank+Tlift | 64.8 | 65.8 |
| PABO-QAConv | 49.5 | 39.0 |
| PABO-QAConv+Rerank+Tlift | 64.7 | 65.7 |

Table 4. PABO-QAConv experimental validation results

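| Method | Rank-1 (%), Dataset 1 | Rank-1 (%), Dataset 2 | Rank-1 (%), Dataset 3 | Rank-1 (%), Dataset 4 | mAP (%), Dataset 1 | mAP (%), Dataset 2 | mAP (%), Dataset 3 | mAP (%), Dataset 4 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| QAConv[20] | 54.4 | 62.8 | 72.2 | 73.9 | 33.6 | 31.6 | 53.4 | 46.6 |
| PABO-QAConv | 60.4 | 74.9 | 74.5 | 82.7 | 38.6 | 44.1 | 59.6 | 58.7 |

Note: Dataset 1 is trained on Market1501 and tested on DukeMTMC-reID; Dataset 2 is trained on DukeMTMC-reID and tested on Market1501; Dataset 3 is trained on MSMT17 and tested on DukeMTMC-reID; Dataset 4 is trained on MSMT17 and tested on Market1501.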

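Table 5. Comparison of results between the proposed method and other cross-domain person re-identification methods

| Method | Source | Target | Duke Rank-1 (%) | Duke mAP (%) | Source | Target | Market Rank-1 (%) | Market mAP (%) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| CSGAN[38] | Market | Duke | 47.8 | 26.3 | Duke | Market | 61.9 | 29.7 |
| CASC[36] | Market | Duke | 51.5 | 30.5 | Duke | Market | 64.7 | 35.6 |
| ECN[33] | Market | Duke | 63.3 | 40.4 | Duke | Market | 75.1 | 43.0 |
| PAUL[34] | Market | Duke | 56.1 | 35.7 | Duke | Market | 66.7 | 36.8 |
| CBN[19] | Market | Duke | 58.7 | 38.2 | Duke | Market | 72.7 | 43.0 |
| UCDA[37] | Market | Duke | 55.4 | 36.7 | Duke | Market | 64.3 | 34.5 |
| CDS[6] | Market | Duke | 67.2 | 42.7 | Duke | Market | 71.6 | 39.9 |
| PN-GAN[39] | Market | - | 29.9 | 15.8 | Duke | - | - | - |
| SSL[14] | - | Duke | 52.5 | 28.6 | - | Market | 71.7 | 37.8 |
| PABO-QAConv | Market | - | 60.4 | 38.6 | Duke | - | 74.9 | 44.1 |
| PABO-QAConv+Rerank+Tlift | Market | - | 73.5 | 64.8 | Duke | - | 88.3 | 73.1 |
| CASC[36] | MSMT | Duke | 59.3 | 37.8 | MSMT | Market | 65.4 | 35.5 |
| MAR[35] | MSMT | Duke | 43.1 | 48.0 | MSMT | Market | 67.7 | 40.0 |
| CBN[19] | MSMT | Duke | 66.2 | 46.7 | MSMT | Market | 72.8 | 42.9 |
| PAUL[34] | MSMT | Duke | 72.0 | 53.2 | MSMT | Market | 68.5 | 40.1 |
| MAR baseline[35] | MSMT | - | 43.1 | 28.8 | MSMT | - | 46.2 | 24.6 |
| PAUL baseline[34] | MSMT | - | 65.7 | 45.6 | MSMT | - | 59.3 | 31.0 |
| PABO-QAConv | MSMT | - | 74.5 | 59.6 | MSMT | - | 82.7 | 58.7 |
| PABO-QAConv+Rerank+Tlift | MSMT | - | 82.6 | 81.2 | MSMT | - | 93.4 | 84.6 |

Note: Source and Target are the source and target domains used for training. The Duke columns report testing on DukeMTMC-reID and the Market columns testing on Market1501; MSMT denotes MSMT17. A dash marks an empty cell.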
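The improvements over QAConv quoted in the abstract can be reproduced from the Table 4 figures with a few lines of arithmetic. The snippet below is only a worked check; the dictionary layout and the name `gains` are choices made for this example, and the numbers are copied from Table 4.

```python
# (Rank-1 %, mAP %) per train->test setting, copied from Table 4.
qaconv = {
    "Market1501->DukeMTMC-reID": (54.4, 33.6),
    "DukeMTMC-reID->Market1501": (62.8, 31.6),
    "MSMT17->DukeMTMC-reID": (72.2, 53.4),
    "MSMT17->Market1501": (73.9, 46.6),
}
pabo_qaconv = {
    "Market1501->DukeMTMC-reID": (60.4, 38.6),
    "DukeMTMC-reID->Market1501": (74.9, 44.1),
    "MSMT17->DukeMTMC-reID": (74.5, 59.6),
    "MSMT17->Market1501": (82.7, 58.7),
}

# Gain in percentage points, rounded to one decimal to absorb float noise.
gains = {
    setting: tuple(round(new - old, 1)
                   for new, old in zip(pabo_qaconv[setting], qaconv[setting]))
    for setting in qaconv
}

for setting, (rank1_gain, map_gain) in gains.items():
    print(f"{setting}: Rank-1 +{rank1_gain}, mAP +{map_gain}")
```

The MSMT17→DukeMTMC-reID row gives +2.3 Rank-1 and +6.2 mAP, matching the figures stated in the abstract.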

[1] WANG G S, YUAN Y F, CHEN X, et al. Learning discriminative features with multiple granularities for person re-identification[C]//Proceedings of the 26th ACM International Conference on Multimedia. New York: ACM, 2018: 274-282.

[2] ZHAO C R, LV X B, DOU S G, et al. Incremental generative occlusion adversarial suppression network for person ReID[J]. IEEE Transactions on Image Processing, 2021, 30: 4212-4224. doi: 10.1109/TIP.2021.3070182

[3] SUN Y F, ZHENG L, YANG Y, et al. Beyond part models: Person retrieval with refined part pooling (and a strong convolutional baseline)[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2018: 501-518.

[4] LAVI B, ULLAH I, FATAN M, et al. Survey on reliable deep learning-based person re-identification models: Are we there yet?[EB/OL]. (2020-04-30)[2022-01-01]. https://arxiv.org/abs/2005.0035501.

[5] FU Y, WEI Y C, WANG G S, et al. Self-similarity grouping: A simple unsupervised cross domain adaptation approach for person re-identification[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 6111-6120.

[6] WU J L, LIAO S C, LEI Z, et al. Clustering and dynamic sampling based unsupervised domain adaptation for person re-identification[C]//Proceedings of the IEEE International Conference on Multimedia and Expo. Piscataway: IEEE Press, 2019: 886-891.

[7] LI J H, CHENG D Q, LIU R H, et al. Unsupervised person re-identification based on measurement axis[J]. IEEE Signal Processing Letters, 2021, 28: 379-383. doi: 10.1109/LSP.2021.3055116

[8] MEKHAZNI D, BHUIYAN A, EKLADIOUS G, et al. Unsupervised domain adaptation in the dissimilarity space for person re-identification[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2020: 159-174.

[9] WANG D K, ZHANG S L. Unsupervised person re-identification via multi-label classification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 10978-10987.

[10] CHEN G Y, LU Y H, LU J W, et al. Deep credible metric learning for unsupervised domain adaptation person re-identification[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2020: 643-659.

[11] ZHANG X W, LYU M Q, LI H. Cross-domain person re-identification based on partial semantic feature invariance[J]. Journal of Beijing University of Aeronautics and Astronautics, 2020, 46(9): 1682-1690 (in Chinese).

[12] ZHONG Z, ZHENG L, ZHENG Z D, et al. CamStyle: A novel data augmentation method for person re-identification[J]. IEEE Transactions on Image Processing, 2019, 28(3): 1176-1190. doi: 10.1109/TIP.2018.2874313

[13] ZENG K W, NING M N, WANG Y H, et al. Hierarchical clustering with hard-batch triplet loss for person re-identification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 13654-13662.

[14] LIN Y T, XIE L X, WU Y, et al. Unsupervised person re-identification via softened similarity learning[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 3387-3396.

[15] LIN Y T, DONG X Y, ZHENG L A, et al. A bottom-up clustering approach to unsupervised person re-identification[C]//Proceedings of the AAAI Conference on Artificial Intelligence. Palo Alto: AAAI, 2019, 33(1): 8738-8745.

[16] YE M, SHEN J B, LIN G J, et al. Deep learning for person re-identification: A survey and outlook[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2022, 44(6): 2872-2893. doi: 10.1109/TPAMI.2021.3054775

[17] ZHANG X Y, CAO J W, SHEN C H, et al. Self-training with progressive augmentation for unsupervised cross-domain person re-identification[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 8221-8230.

[18] ZHONG Z, ZHENG L, CAO D L, et al. Re-ranking person re-identification with k-reciprocal encoding[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2017: 3652-3661.

[19] ZHUANG Z J, WEI L H, XIE L X, et al. Rethinking the distribution gap of person re-identification with camera-based batch normalization[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2020: 140-157.

[20] LIAO S C, SHAO L. Interpretable and generalizable person re-identification with query-adaptive convolution and temporal lifting[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2020: 456-474.

[21] LIAO H N, XU X. Cross-resolution person re-identification based on attention mechanism[J]. Journal of Beijing University of Aeronautics and Astronautics, 2021, 47(3): 605-612 (in Chinese).

[22] ZHANG Z Z, LAN C L, ZENG W J, et al. Relation-aware global attention for person re-identification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 3183-3192.

[23] WOO S, PARK J, LEE J Y, et al. CBAM: Convolutional block attention module[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2018: 3-19.

[24] HE K M, ZHANG X Y, REN S Q, et al. Deep residual learning for image recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2016: 770-778.

[25] LIN T Y, GOYAL P, GIRSHICK R, et al. Focal loss for dense object detection[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2020, 42(2): 318-327. doi: 10.1109/TPAMI.2018.2858826

[26] HU J, SHEN L, SUN G. Squeeze-and-excitation networks[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 7132-7141.

[27] ZHENG L, SHEN L Y, TIAN L, et al. Scalable person re-identification: A benchmark[C]//Proceedings of the IEEE International Conference on Computer Vision. Piscataway: IEEE Press, 2016: 1116-1124.

[28] RISTANI E, SOLERA F, ZOU R, et al. Performance measures and a data set for multi-target, multi-camera tracking[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2016: 17-35.

[29] WEI L H, ZHANG S L, GAO W, et al. Person transfer GAN to bridge domain gap for person re-identification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2018: 79-88.

[30] MIAO J X, WU Y, LIU P, et al. Pose-guided feature alignment for occluded person re-identification[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 542-551.

[31] ZHAO R, OUYANG W, WANG X. Person re-identification by salience matching[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2013: 2528-2535.

[32] ZHU X Z, CHENG D Z, ZHANG Z, et al. An empirical study of spatial attention mechanisms in deep networks[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 6687-6696.

[33] ZHONG Z, ZHENG L, LUO Z M, et al. Invariance matters: Exemplar memory for domain adaptive person re-identification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 598-607.

[34] YANG Q Z, YU H X, WU A C, et al. Patch-based discriminative feature learning for unsupervised person re-identification[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 3628-3637.

[35] YU H X, ZHENG W S, WU A C, et al. Unsupervised person re-identification by soft multilabel learning[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway: IEEE Press, 2020: 2143-2152.

[36] WU A C, ZHENG W S, LAI J H. Unsupervised person re-identification by camera-aware similarity consistency learning[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 6921-6930.

[37] QI L, WANG L, HUO J, et al. A novel unsupervised camera-aware domain adaptation framework for person re-identification[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. Piscataway: IEEE Press, 2020: 8079-8088.

[38] ZHANG W Y, ZHU L, LU L. Improving the style adaptation for unsupervised cross-domain person re-identification[C]//Proceedings of the International Joint Conference on Neural Networks. Piscataway: IEEE Press, 2020: 1-8.

[39] QIAN X L, FU Y W, XIANG T, et al. Pose-normalized image generation for person re-identification[C]//Proceedings of the European Conference on Computer Vision. Berlin: Springer, 2018: 661-678.
