Semi-supervised locality preserving dense graph convolution for hyperspectral image classification


摘要:

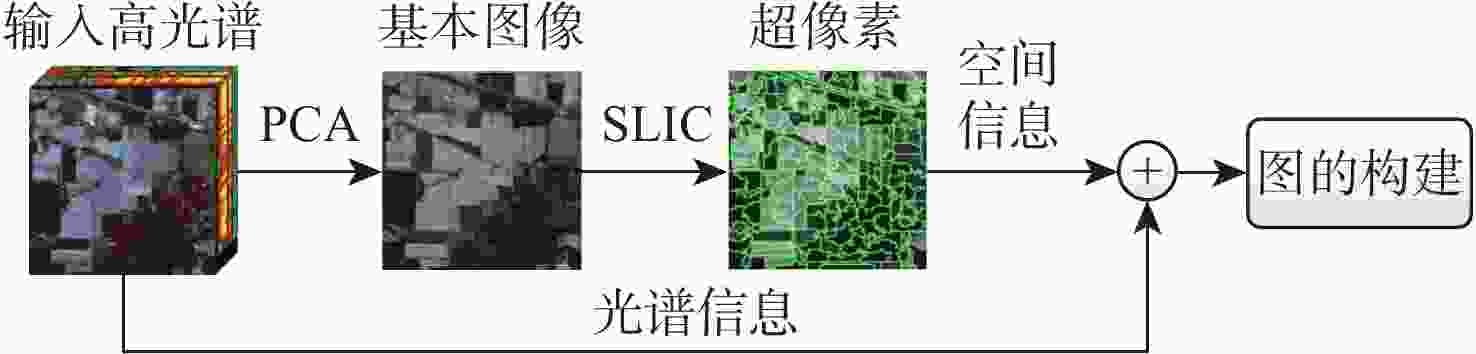

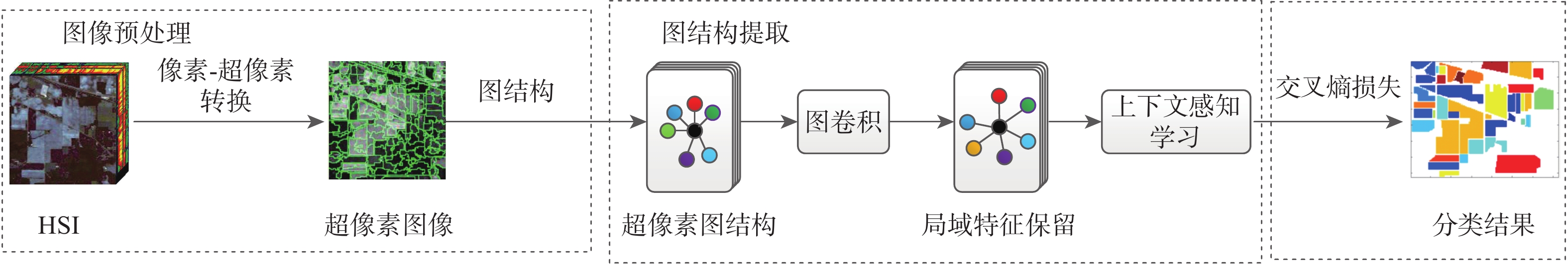

图卷积网络(GCN)应用于高光谱图像(HSI)分类是现在研究的热点和前沿。但是现有的图卷积网络算法依然面临着计算量大、过度平滑和特征自适应选择的问题。面对这些问题提出超像素分割算法减少网络节点数量,在保留节点光谱特征的同时降低运算量;采用DenseNet结构保留卷积过程特征,解决图卷积过度平滑问题;最后提出一种半监督局部特征保留稠密连接上下文感知的图卷积网络算法,利用层注意力机制针对分类目标进行特征自适应选择。所提算法实现了端到端的半监督分类,在3个真实数据集上,与最新的分类算法对比实验结果表明:所提算法在各项指标上都有较好的表现,提高了HSI的分类正确率。

Abstract:The application of graph convolutional network (GCN) to hyperspectral image (HSI) classification is the hotspot and frontier of current research. Nevertheless, the over-smoothing, feature adaptive selection, and calculation complexity issues still exist for the graph convolution network approaches that are now accessible. To circumvent these problems, a superpixel segmentation method to reduce the spatial dimension of the HSI is proposal, which reduces the amount of calculation while preserving the spectral characteristics of the nodes. In addition, the dense structure is adopted to retain the features of the convolution in process, and the problem of excessive smoothing of the graph convolution is settled. Finally, a mechanism for extracting the practical local knowledge produced by each layer of the dense GCN is created using a layer-wise context-aware learning approach. The network realizes end-to-end semi-supervised classification. The experimental results on three real datasets show that the proposed algorithm outperforms the compared state-of-the-art methods on all indices and improves the classification accuracy of HSI.
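The dense connection and layer-wise selection described in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the symmetric adjacency normalization, the ReLU placement, and the softmax form of `layer_attention` (including its `query` vector) are assumptions chosen only to show how each layer can reuse all earlier features and how the per-layer outputs can then be weighted.

```python
import numpy as np

def normalize_adjacency(adj):
    """Symmetric normalization D^{-1/2} (A + I) D^{-1/2} (assumed, Kipf-Welling style)."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def dense_gcn_forward(adj, x, weights):
    """Graph convolutions with dense (concatenative) skip connections.

    Every layer receives the input features concatenated with the outputs of
    all earlier layers, so early, less-smoothed features stay available.
    Returns the list of per-layer outputs.
    """
    a_norm = normalize_adjacency(adj)
    feats = [x]
    outputs = []
    for w in weights:
        h_in = np.concatenate(feats, axis=1)
        h = np.maximum(a_norm @ h_in @ w, 0.0)  # ReLU
        feats.append(h)
        outputs.append(h)
    return outputs

def layer_attention(outputs, query):
    """Softmax-weighted sum of layer outputs (hypothetical attention form)."""
    scores = np.array([np.mean(h @ query) for h in outputs])
    alpha = np.exp(scores - scores.max())
    alpha /= alpha.sum()
    fused = sum(a * h for a, h in zip(alpha, outputs))
    return fused, alpha

# Toy example: 4 superpixel nodes, 5 input features, two layers of width 3.
rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
x = rng.normal(size=(4, 5))
weights = [rng.normal(size=(5, 3)), rng.normal(size=(5 + 3, 3))]
layer_outputs = dense_gcn_forward(adj, x, weights)
fused, alpha = layer_attention(layer_outputs, rng.normal(size=3))
```

Note that the second weight matrix has `5 + 3` input rows because the second layer sees the raw input concatenated with the first layer's output; this growth of the input width is the defining property of the dense structure.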


Table 1. Numbers of training and testing pixels in the PU dataset

| No. | Class | Training | Testing |
|---|---|---|---|
| 1 | Asphalt | 30 | 6601 |
| 2 | Meadows | 30 | 18619 |
| 3 | Gravel | 30 | 2069 |
| 4 | Trees | 30 | 3034 |
| 5 | Painted metal sheets | 30 | 1315 |
| 6 | Bare soil | 30 | 4999 |
| 7 | Bitumen | 30 | 1300 |
| 8 | Self-Blocking Bricks | 30 | 3652 |
| 9 | Shadows | 30 | 917 |

Table 2. Numbers of training and testing pixels in the KSC dataset

| No. | Class | Training | Testing |
|---|---|---|---|
| 1 | Scrub | 30 | 731 |
| 2 | Willow swamp | 30 | 213 |
| 3 | CP hammock | 30 | 226 |
| 4 | Slash pine | 30 | 222 |
| 5 | Oak/Broadleaf | 30 | 131 |
| 6 | Hardwood | 30 | 199 |
| 7 | Swamp | 30 | 75 |
| 8 | Graminoid | 30 | 401 |
| 9 | Spartina marsh | 30 | 490 |
| 10 | Cattail marsh | 30 | 374 |
| 11 | Salt marsh | 30 | 389 |
| 12 | Mud flats | 30 | 473 |
| 13 | Water | 30 | 897 |

Table 3. Numbers of training and testing pixels in the Salinas dataset

| No. | Class | Training | Testing |
|---|---|---|---|
| 1 | Broccoli green weed 1 | 30 | 1979 |
| 2 | Broccoli green weed 2 | 30 | 3696 |
| 3 | Fallow | 30 | 1946 |
| 4 | Fallow rough plow | 30 | 1364 |
| 5 | Fallow smooth | 30 | 2648 |
| 6 | Stubble | 30 | 3929 |
| 7 | Celery | 30 | 3549 |
| 8 | Grapes untrained | 30 | 11241 |
| 9 | Soil vineyard develop | 30 | 6137 |
| 10 | Corn Senesced green weeds | 30 | 3248 |
| 11 | Lettuce romaine-4wk | 30 | 1038 |
| 12 | Lettuce romaine-5wk | 30 | 1897 |
| 13 | Lettuce romaine-6wk | 30 | 886 |
| 14 | Lettuce romaine-7wk | 30 | 1040 |
| 15 | Vineyard untrained | 30 | 7238 |
| 16 | Vineyard vertical trellis | 30 | 1777 |
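The split used in the pixel-count tables (30 training pixels for every class) can be sketched as below. This is only an illustration: the function name `split_per_class`, the convention that label 0 means "unlabeled", the random selection, and the assumption that all remaining labeled pixels form the test set are not stated in the source.

```python
import random
from collections import defaultdict

def split_per_class(labels, n_train=30, seed=0):
    """Pick n_train labeled samples per class for training, the rest for testing.

    labels: sequence of integer class ids; 0 is treated as "unlabeled"
            (an assumed convention, common for HSI ground-truth maps).
    Returns (train_indices, test_indices), both sorted.
    """
    by_class = defaultdict(list)
    for idx, c in enumerate(labels):
        if c != 0:
            by_class[c].append(idx)

    rng = random.Random(seed)
    train, test = [], []
    for c in sorted(by_class):
        idxs = by_class[c][:]
        rng.shuffle(idxs)
        train.extend(idxs[:n_train])
        test.extend(idxs[n_train:])
    return sorted(train), sorted(test)

# Toy ground truth: 50 pixels of class 1, 40 of class 2, 10 unlabeled.
toy_labels = [1] * 50 + [2] * 40 + [0] * 10
train_idx, test_idx = split_per_class(toy_labels, n_train=30)
```

In a semi-supervised setting the unlabeled and test pixels still take part in graph propagation; only the loss is restricted to the training indices.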

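Table 4. Architecture details of the proposed network

| Structure | Composition |
|---|---|
| Pixel-to-region processing | Spectral (PCA) features of superpixels (input) (SLIC) |
| Graph construction | Compute the adjacency matrix A of the graph |
| Graph processing | DGCN (spectral dimension of input nodes → 32); BN; ReLU |
| Context-aware learning | GAT (32); BN |
| Output | Cross-entropy function (classification target) |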
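The pixel-to-region and graph-construction stages of the architecture can be sketched in NumPy as follows. The sketch assumes a superpixel label map is already available (for example from SLIC) and that the spectra have already been reduced (for example by PCA); the function name `superpixel_graph`, the mean-spectrum node feature, and the binary "touching superpixels are neighbors" adjacency are illustrative assumptions, since the source does not specify how A is weighted.

```python
import numpy as np

def superpixel_graph(cube, segments):
    """Build node features and a binary adjacency matrix from a superpixel map.

    cube:     (H, W, B) array of spectra (e.g. after PCA).
    segments: (H, W) integer array with labels 0..K-1 (e.g. from SLIC).
    Returns (features of shape (K, B), adjacency of shape (K, K)).
    """
    k = int(segments.max()) + 1
    flat_seg = segments.ravel()
    flat_cube = cube.reshape(-1, cube.shape[-1])

    # Node feature = mean spectrum of the pixels inside each superpixel.
    counts = np.bincount(flat_seg, minlength=k).astype(float)
    feats = np.zeros((k, cube.shape[-1]))
    np.add.at(feats, flat_seg, flat_cube)
    feats /= np.maximum(counts, 1.0)[:, None]

    # Superpixels that touch horizontally or vertically become neighbors.
    adj = np.zeros((k, k))
    for a, b in ((segments[:, :-1], segments[:, 1:]),
                 (segments[:-1, :], segments[1:, :])):
        mask = a != b
        adj[a[mask], b[mask]] = 1.0
        adj[b[mask], a[mask]] = 1.0
    return feats, adj

# Toy 2x3 image with 3 superpixels and 2 bands.
toy_segments = np.array([[0, 0, 1],
                         [2, 2, 1]])
toy_cube = np.stack([toy_segments.astype(float),
                     np.ones_like(toy_segments, dtype=float)], axis=-1)
node_feats, node_adj = superpixel_graph(toy_cube, toy_segments)
```

Working on K superpixels instead of H×W pixels is what shrinks the adjacency matrix from (H·W)² entries to K², which is the source of the reduced computation mentioned in the abstract.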

Table 5. Accuracy comparisons for the PU scene

Columns 1 to 9 are per-class accuracies (%).

| Method | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | OA/% | AA/% | κ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| DR-CNN | 92.10 | 96.39 | 84.23 | 95.26 | 97.77 | 90.44 | 89.05 | 78.49 | 96.34 | 92.62 | 91.12 | 0.90 |
| RBF-SVM | 83.14 | 66.75 | 69.65 | 88.24 | 92.18 | 93.54 | 91.84 | 90.67 | 95.38 | 77.65 | 85.71 | 0.77 |
| JSDF | 82.40 | 90.76 | 86.71 | 92.88 | 100.00 | 94.30 | 96.62 | 94.69 | 99.56 | 90.82 | 93.10 | 0.88 |
| S2GCN | 92.87 | 87.06 | 87.97 | 90.85 | 100.00 | 88.69 | 98.88 | 89.97 | 98.89 | 89.74 | 92.80 | 0.87 |
| S2GAT | 87.31 | 87.94 | 77.28 | 96.57 | 96.74 | 95.11 | 87.45 | 95.86 | 94.31 | 90.56 | 90.95 | 0.90 |
| DGCN-CAL | 91.42 | 97.13 | 98.31 | 87.11 | 93.21 | 98.82 | 94.27 | 93.68 | 94.82 | 95.12 | 94.30 | 0.95 |
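The three summary indices reported in the accuracy tables, overall accuracy (OA), average accuracy (AA), and the kappa coefficient (κ), follow their standard definitions and can be computed from a confusion matrix as in the sketch below. The function name `classification_scores` and the toy confusion matrix are illustrative and are not taken from the paper; the tables report OA and AA as percentages, whereas this sketch returns fractions.

```python
def classification_scores(confusion):
    """Return (OA, AA, kappa) from a square confusion matrix.

    confusion[i][j] = number of test pixels of true class i predicted as class j.
    Every class is assumed to have at least one test pixel.
    """
    n = len(confusion)
    row_sums = [sum(row) for row in confusion]
    col_sums = [sum(confusion[i][j] for i in range(n)) for j in range(n)]
    total = sum(row_sums)

    # OA: fraction of all test pixels that are classified correctly.
    oa = sum(confusion[i][i] for i in range(n)) / total
    # AA: mean of the per-class accuracies (each class weighted equally).
    aa = sum(confusion[i][i] / row_sums[i] for i in range(n)) / n
    # Kappa: agreement corrected for the agreement expected by chance.
    expected = sum(row_sums[i] * col_sums[i] for i in range(n)) / total ** 2
    kappa = (oa - expected) / (1.0 - expected)
    return oa, aa, kappa

# Toy two-class example.
oa, aa, kappa = classification_scores([[45, 5],
                                       [10, 40]])
print(f"OA={oa:.2f} AA={aa:.2f} kappa={kappa:.2f}")  # OA=0.85 AA=0.85 kappa=0.70
```

Because AA weights every class equally while OA weights every pixel equally, a method can lead on OA and still trail on AA when it is weaker on small classes.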

Table 6. Accuracy comparisons for the KSC scene

Columns 1 to 13 are per-class accuracies (%).

| Method | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | OA/% | AA/% | κ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| DR-CNN | 98.72 | 97.97 | 97.49 | 62.46 | 94.66 | 97.65 | 100.00 | 97.42 | 99.93 | 98.84 | 100.00 | 98.94 | 100.00 | 97.21 | 95.70 | 0.97 |
| RBF-SVM | 93.27 | 92.14 | 90.27 | 91.74 | 85.10 | 86.23 | 72.98 | 91.33 | 89.17 | 90.62 | 88.35 | 92.46 | 90.13 | 88.46 | 88.75 | 0.86 |
| JSDF | 100.00 | 92.07 | 95.13 | 59.01 | 85.34 | 86.48 | 98.93 | 94.76 | 100.00 | 100.00 | 100.00 | 95.52 | 100.00 | 97.21 | 94.38 | 0.95 |
| S2GCN | 95.12 | 95.15 | 96.17 | 71.17 | 97.71 | 89.95 | 98.22 | 89.10 | 99.59 | 98.04 | 99.23 | 95.63 | 100.00 | 95.44 | 94.24 | 0.95 |
| S2GAT | 99.16 | 96.27 | 98.30 | 84.62 | 96.23 | 93.11 | 97.18 | 95.67 | 96.89 | 100.00 | 100.00 | 97.96 | 100.00 | 96.31 | 96.56 | 0.97 |
| DGCN-CAL | 100.00 | 98.13 | 98.61 | 93.14 | 92.38 | 97.22 | 100.00 | 100.00 | 95.63 | 100.00 | 100.00 | 95.17 | 100.00 | 97.84 | 97.71 | 0.98 |

Table 7. Accuracy comparisons for the Salinas scene

Columns 1 to 16 are per-class accuracies (%).

| Method | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | OA/% | AA/% | κ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| DR-CNN | 99.40 | 99.46 | 98.58 | 99.70 | 98.90 | 99.57 | 99.50 | 75.59 | 99.75 | 94.29 | 97.57 | 99.99 | 99.95 | 98.57 | 72.18 | 98.45 | 90.35 | 95.72 | 0.89 |
| RBF-SVM | 97.47 | 92.65 | 96.71 | 92.27 | 96.47 | 89.58 | 93.73 | 77.36 | 92.31 | 90.89 | 73.64 | 93.61 | 89.22 | 92.61 | 71.38 | 81.34 | 86.75 | 88.83 | 0.86 |
| JSDF | 100.00 | 100.00 | 100.00 | 99.93 | 99.77 | 100.00 | 99.99 | 87.79 | 99.67 | 96.53 | 99.76 | 100.00 | 100.00 | 98.71 | 81.86 | 98.99 | 94.67 | 97.69 | 0.94 |
| S2GCN | 99.01 | 99.18 | 97.15 | 99.11 | 97.55 | 99.32 | 90.06 | 70.68 | 98.32 | 90.97 | 98.00 | 99.56 | 97.83 | 95.75 | 70.36 | 96.90 | 88.39 | 94.30 | 0.87 |
| S2GAT | 99.62 | 99.37 | 96.51 | 99.60 | 95.21 | 98.64 | 99.73 | 77.67 | 95.32 | 93.76 | 94.33 | 99.61 | 92.40 | 92.72 | 77.31 | 95.66 | 93.67 | 94.21 | 0.93 |
| DGCN-CAL | 100.00 | 98.51 | 99.62 | 99.20 | 88.64 | 95.35 | 97.39 | 90.63 | 99.81 | 96.16 | 94.83 | 99.44 | 98.62 | 92.17 | 98.27 | 95.44 | 95.79 | 96.51 | 0.96 |

Table 8. Ablation experiment results of different algorithms on the PU dataset

| Method | OA/% | AA/% | κ |
|---|---|---|---|
| GCN-CAL | 91.68 | 92.87 | 0.92 |
| DGCN | 92.59 | 91.97 | 0.92 |
| DGCN-CAL | 95.12 | 94.30 | 0.95 |

Table 9. Ablation experiment results of different algorithms on the KSC dataset

| Method | OA/% | AA/% | κ |
|---|---|---|---|
| GCN-CAL | 95.18 | 95.39 | 0.95 |
| DGCN | 96.24 | 95.81 | 0.96 |
| DGCN-CAL | 97.84 | 97.71 | 0.98 |

Table 10. Ablation experiment results of different algorithms on the Salinas dataset

| Method | OA/% | AA/% | κ |
|---|---|---|---|
| GCN-CAL | 93.03 | 96.30 | 0.94 |
| DGCN | 92.11 | 94.17 | 0.92 |
| DGCN-CAL | 95.79 | 96.51 | 0.96 |

