

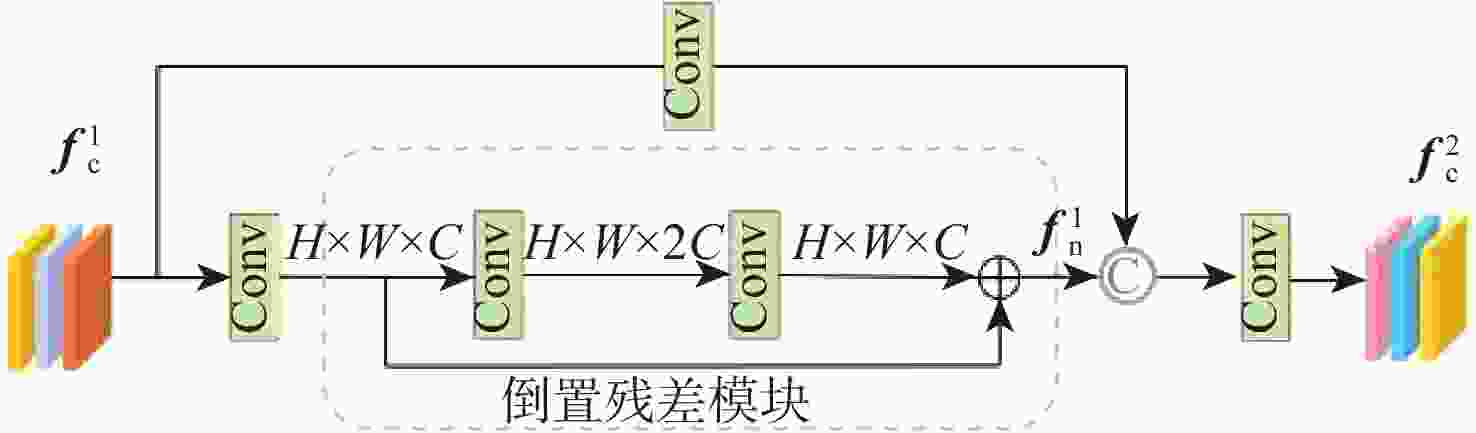

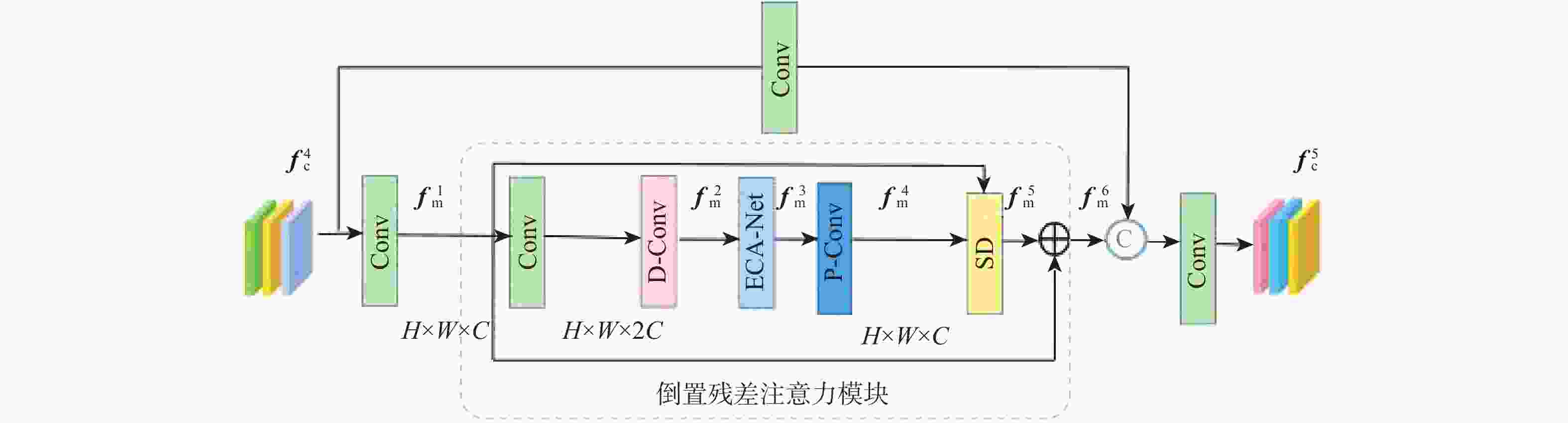

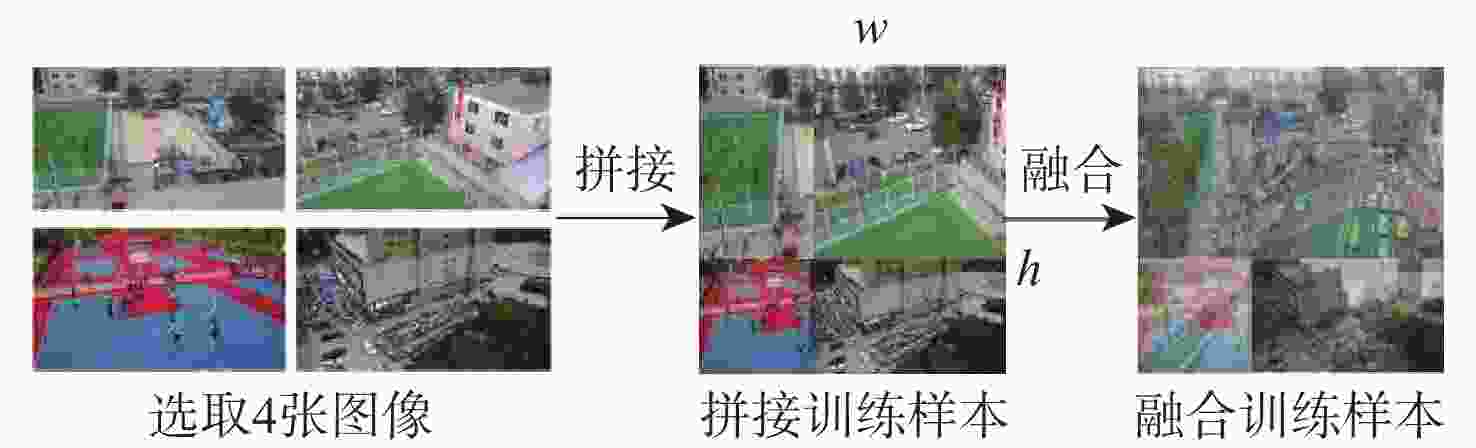

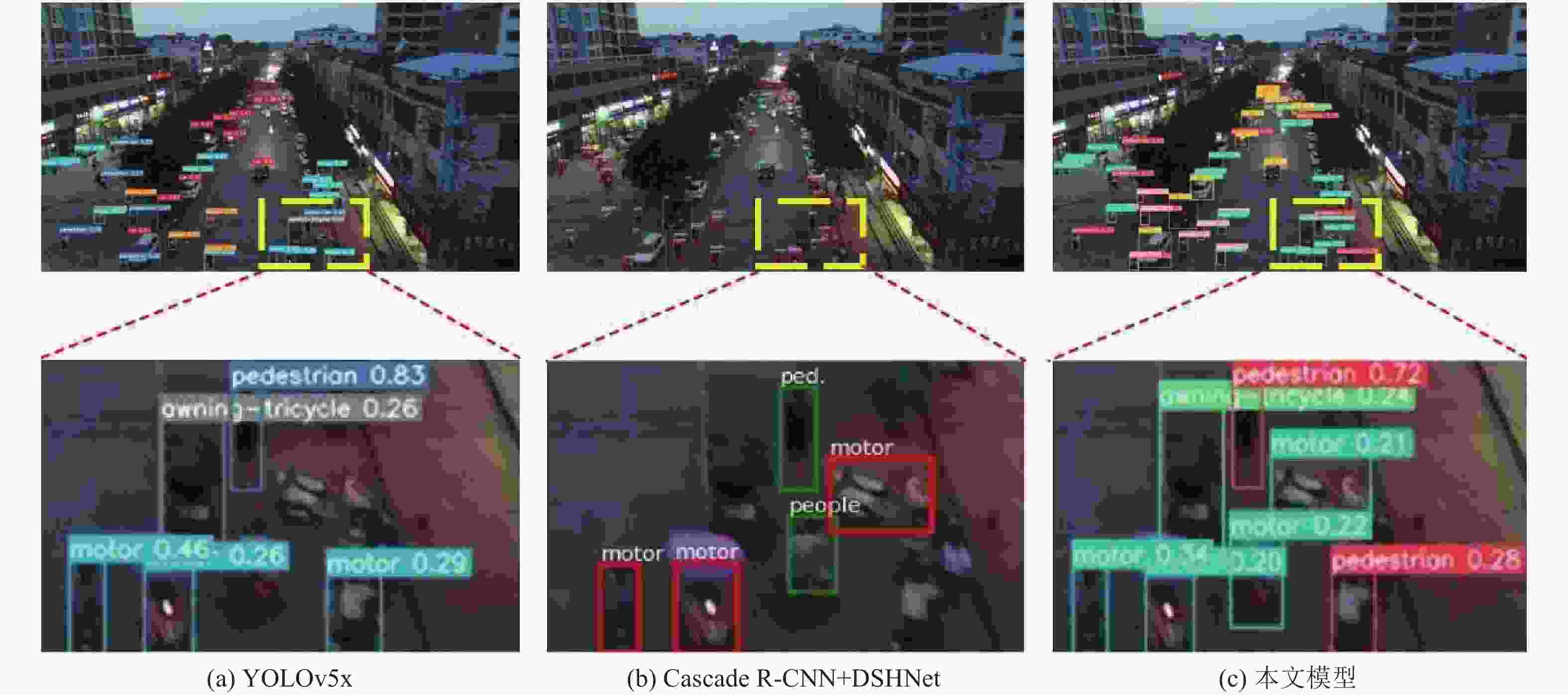

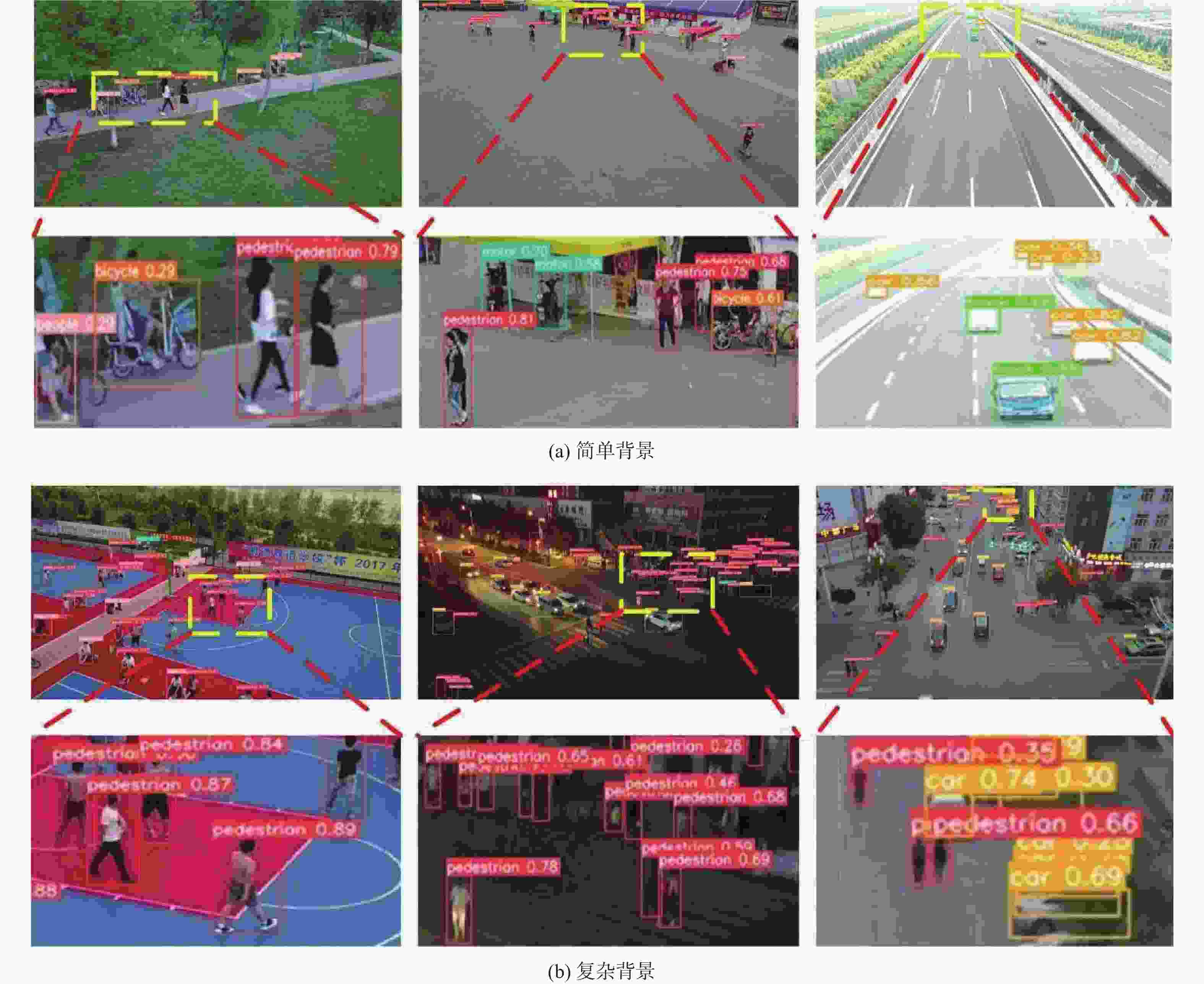

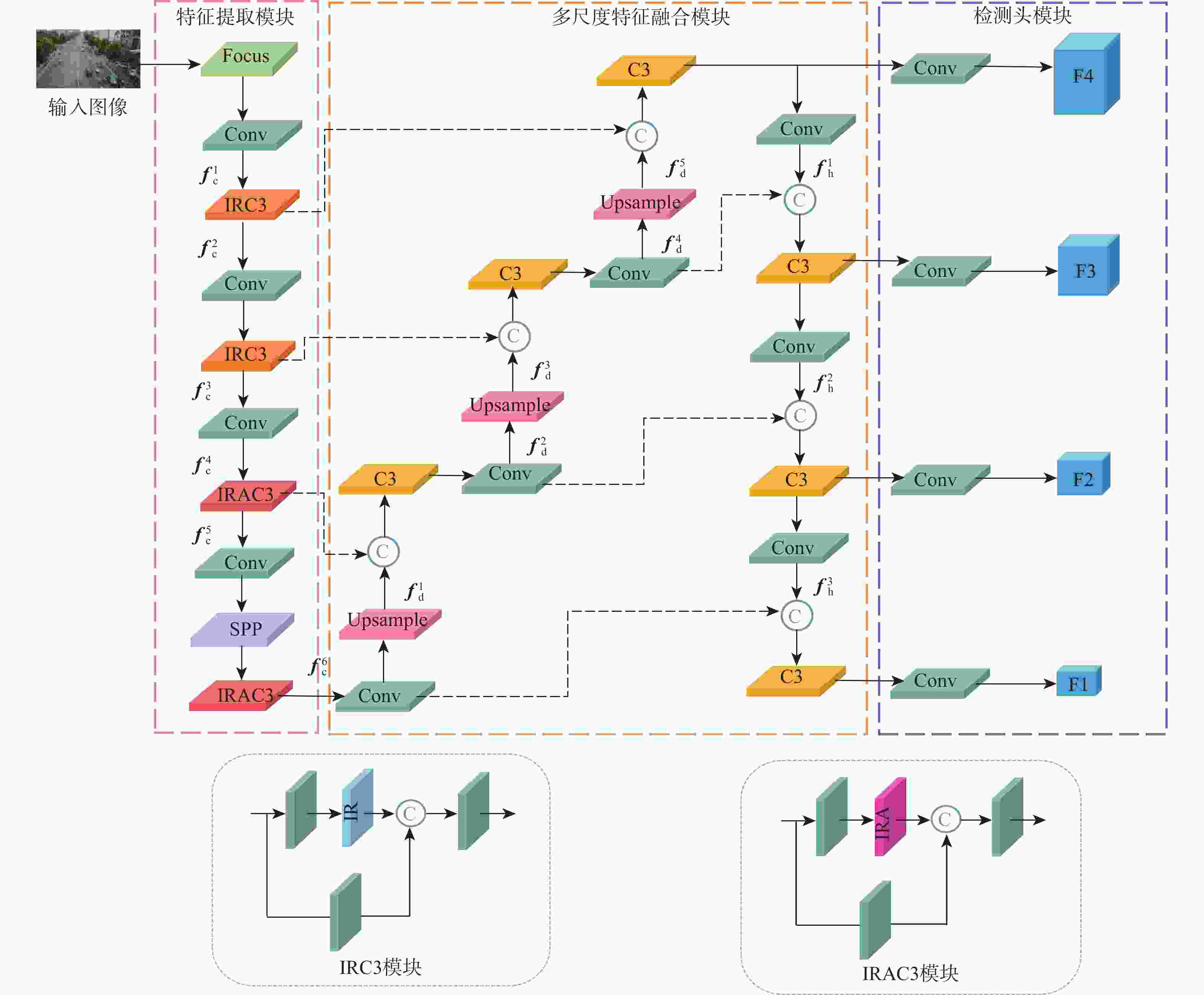


Abstract: UAV aerial images often have complex backgrounds and contain many small targets. To address these problems, a small target detection algorithm based on inverted residual attention is proposed. First, an inverted residual module and an inverted residual attention module are embedded in the backbone network. By mapping feature information from a low-dimensional to a high-dimensional space, they capture rich spatial information and deep semantic information about small targets and improve small-target detection accuracy. Second, a multi-scale feature fusion module is designed for the feature fusion stage. It fuses shallow spatial information with deep semantic information and generates four detection heads with different receptive fields, which improves the recognition of small targets and reduces missed detections. Finally, a mosaic-mixup data augmentation method is designed that establishes linear relationships between samples and increases the complexity of image backgrounds, improving the robustness of the algorithm. Experimental results on the VisDrone dataset show that the mean average precision (mAP) of the proposed model is 1.2 percentage points higher than that of DSHNet (27.4% versus 26.2% for Cascade R-CNN+DSHNet). This indicates that the method effectively reduces missed and false detections of small targets in UAV aerial images.
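The inverted residual idea in the abstract (expand a low-dimensional input to a high-dimensional space, filter it there, then project it back) can be made concrete with shape and weight-count arithmetic. The sketch below assumes a MobileNetV2-style layout (1×1 expansion, 3×3 depthwise convolution, 1×1 linear projection). For the attention variant, it assumes an ECA-style channel attention whose 1-D kernel size follows the ECA-Net rule. The class name `InvertedResidualSpec`, the expansion factor of 6 and the placement of the attention are hypothetical choices, not the paper's published configuration.

```python
import math
from dataclasses import dataclass
from typing import Tuple


def eca_kernel_size(channels: int, gamma: int = 2, b: int = 1) -> int:
    """ECA-Net adaptive kernel size: |(log2 C + b) / gamma|, bumped up to the next odd number."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 else t + 1


@dataclass
class InvertedResidualSpec:
    """Hypothetical inverted residual block: 1x1 expand -> kxk depthwise -> 1x1 project."""
    in_ch: int                # low-dimensional input channels
    out_ch: int               # output channels after the linear projection
    expand: int = 6           # expansion factor t (assumed value)
    stride: int = 1           # stride of the depthwise convolution
    kernel: int = 3           # odd depthwise kernel size with "same" padding
    attention: bool = False   # add an ECA-style channel attention (assumption)

    @property
    def hidden_ch(self) -> int:
        # Low -> high dimensional mapping: t * in_ch channels
        return self.in_ch * self.expand

    @property
    def uses_shortcut(self) -> bool:
        # The residual add only works when the output shape equals the input shape
        return self.stride == 1 and self.in_ch == self.out_ch

    def output_shape(self, h: int, w: int) -> Tuple[int, int, int]:
        # With padding kernel // 2, only the stride changes the spatial size (ceil division)
        return (self.out_ch, -(-h // self.stride), -(-w // self.stride))

    def weight_count(self) -> int:
        """Convolution weights only (no biases; BatchNorm parameters ignored)."""
        hid = self.hidden_ch
        total = self.in_ch * hid                   # 1x1 expansion
        total += hid * self.kernel * self.kernel   # depthwise: one kxk filter per channel
        total += hid * self.out_ch                 # 1x1 linear projection back down
        if self.attention:
            total += eca_kernel_size(hid)          # ECA: one shared 1-D conv kernel, no bias
        return total


if __name__ == "__main__":
    block = InvertedResidualSpec(in_ch=32, out_ch=32, attention=True)
    print(block.hidden_ch, block.uses_shortcut, block.output_shape(80, 80), block.weight_count())
```

Running the file prints `192 True (32, 80, 80) 14021`. The 32-channel input is expanded six-fold to 192 channels before being projected back, so the shortcut can be used, and the ECA-style attention adds only five weights on top of the three convolutions.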

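The abstract describes the mosaic-mixup augmentation only at a high level. One plausible reading is to tile four training images into a mosaic and then blend two mosaics as in mixup, where the blended image is `lam * x_a + (1 - lam) * x_b` with `lam` drawn from a Beta(alpha, alpha) distribution. This linear interpolation between samples is a likely meaning of "establishing linear relationships between samples". The sketch rests on several hypothetical simplifications, not the paper's implementation: the function names, the fixed 2×2 layout without random cropping, grayscale list-of-lists images, and alpha = 32.

```python
import random
from typing import List, Optional, Tuple

Image = List[List[float]]                     # grayscale H x W, values in [0, 1]
Box = Tuple[int, float, float, float, float]  # (class_id, x1, y1, x2, y2) in pixels


def mosaic4(images: List[Image], boxes: List[List[Box]]) -> Tuple[Image, List[Box]]:
    """Tile four s x s images into one 2s x 2s canvas (simplified: fixed centre, no crop)."""
    if len(images) != 4 or len(boxes) != 4:
        raise ValueError("mosaic4 needs exactly four images and four box lists")
    s = len(images[0])
    canvas = [[0.0] * (2 * s) for _ in range(2 * s)]
    out_boxes: List[Box] = []
    offsets = [(0, 0), (s, 0), (0, s), (s, s)]  # (dx, dy): TL, TR, BL, BR quadrants
    for img, bxs, (dx, dy) in zip(images, boxes, offsets):
        for y in range(s):
            for x in range(s):
                canvas[y + dy][x + dx] = img[y][x]
        # Shift every box by its quadrant offset so it still frames the same object
        out_boxes += [(c, x1 + dx, y1 + dy, x2 + dx, y2 + dy) for c, x1, y1, x2, y2 in bxs]
    return canvas, out_boxes


def mixup(img_a: Image, boxes_a: List[Box], img_b: Image, boxes_b: List[Box],
          alpha: float = 32.0,
          rng: Optional[random.Random] = None) -> Tuple[Image, List[Box], float]:
    """Blend two same-sized images linearly and keep both box sets."""
    rng = rng if rng is not None else random.Random()
    lam = rng.betavariate(alpha, alpha)  # a large alpha keeps the ratio close to 0.5
    mixed = [[lam * a + (1.0 - lam) * b for a, b in zip(row_a, row_b)]
             for row_a, row_b in zip(img_a, img_b)]
    return mixed, boxes_a + boxes_b, lam


def mosaic_mixup(group_a, group_b, rng: Optional[random.Random] = None):
    """Hypothetical mosaic-mixup: build two 4-image mosaics, then mix them."""
    img_a, boxes_a = mosaic4(*group_a)
    img_b, boxes_b = mosaic4(*group_b)
    return mixup(img_a, boxes_a, img_b, boxes_b, rng=rng)
```

In the original mixup, the labels are also interpolated with `lam`. For detection, this sketch concatenates the two box sets instead, a common simplification (YOLOv5's mixup does the same). Whether the paper does so is an assumption.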

Key words:

- object detection

- UAV images

- inverted residual

- attention

- multi-scale feature fusion
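The sketch below illustrates why a fourth, high-resolution detection head helps small targets. It computes each head's grid size and number of raw predictions, assuming a YOLOv5-style detector (suggested by the YOLOv5x baseline in Table 1) with a 640×640 input, three anchors per cell, and strides 4, 8, 16 and 32. These values are assumptions, because the abstract states only that four heads with different receptive fields are generated. The function names are hypothetical.

```python
from typing import Dict, Tuple


def head_grids(input_size: int = 640, strides=(4, 8, 16, 32),
               anchors_per_cell: int = 3) -> Dict[int, Tuple[int, int]]:
    """Map each head stride to (grid side length, number of raw box predictions)."""
    grids = {}
    for s in strides:
        side = input_size // s  # the feature map is input_size / stride cells wide
        grids[s] = (side, side * side * anchors_per_cell)
    return grids


def cells_spanned(object_px: float, stride: int) -> float:
    """Width of an object measured in grid cells of a head with the given stride."""
    return object_px / stride


if __name__ == "__main__":
    four = head_grids()
    three = head_grids(strides=(8, 16, 32))  # standard YOLOv5 three-head layout, for comparison
    print(four)
    print(sum(n for _, n in four.values()), sum(n for _, n in three.values()))
    for s in (4, 32):
        print(f"10-px object at stride {s}: {cells_spanned(10, s):.2f} cells")
```

Under these assumptions, the stride-4 head raises the number of raw predictions from 25,200 to 102,000. A 10-pixel object spans 2.5 cells on the stride-4 grid but less than a third of a cell on the stride-32 grid, which is the intuition behind adding a shallow, small-receptive-field head.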


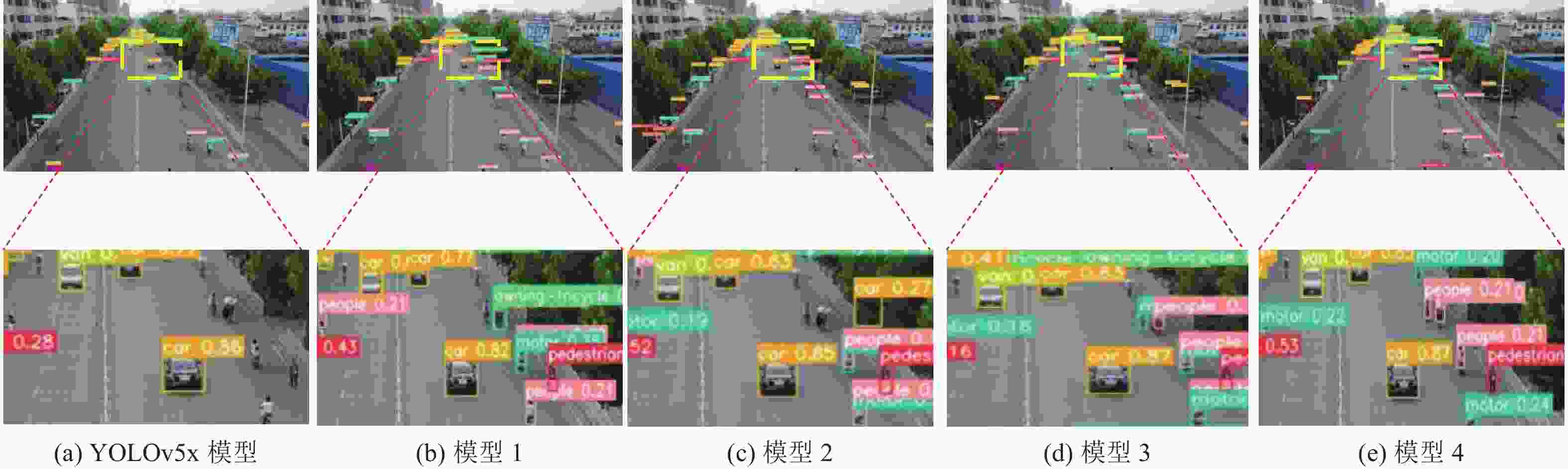

Table 1. Comparison of objective metrics of different models

| Model | mAP/% | mAP@0.5/% | mAP@0.75/% | Parameters/10⁶ | Detection speed/FPS |
|---|---|---|---|---|---|
| YOLOv5x | 23.4 | 35.7 | 25.1 | 83.2 | 41.3 |
| Model 1 | 24.6 | 38.6 | 26.2 | 86.7 | 28.7 |
| Model 2 | 25.4 | 39.7 | 27.1 | 85.5 | 28.7 |
| Model 3 | 26.8 | 41.4 | 28.8 | 69.3 | 25.6 |
| Model 4 | 27.4 | 42.4 | 29.0 | 72.5 | 23.4 |
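Table 1 is easier to read as differences from the YOLOv5x baseline, with detection speed converted to per-image latency (1000 / FPS milliseconds). The sketch below only re-derives numbers already in the table. The dictionary layout and the function name `versus_baseline` are illustrative.

```python
# Rows of Table 1: (mAP %, mAP@0.5 %, mAP@0.75 %, parameters in millions, FPS)
TABLE1 = {
    "YOLOv5x": (23.4, 35.7, 25.1, 83.2, 41.3),
    "Model 1": (24.6, 38.6, 26.2, 86.7, 28.7),
    "Model 2": (25.4, 39.7, 27.1, 85.5, 28.7),
    "Model 3": (26.8, 41.4, 28.8, 69.3, 25.6),
    "Model 4": (27.4, 42.4, 29.0, 72.5, 23.4),
}


def versus_baseline(table, baseline="YOLOv5x"):
    """Return name -> (mAP gain in points, parameter change in millions, latency in ms)."""
    base_map, _, _, base_params, _ = table[baseline]
    result = {}
    for name, (m, _, _, params, fps) in table.items():
        result[name] = (round(m - base_map, 1),
                        round(params - base_params, 1),
                        round(1000.0 / fps, 1))
    return result


if __name__ == "__main__":
    for name, (gain, dparams, latency) in versus_baseline(TABLE1).items():
        print(f"{name:8s} mAP {gain:+.1f} pts  params {dparams:+.1f} M  latency {latency:.1f} ms")
```

For Model 4, this gives +4.0 mAP points and 10.7 M fewer parameters than YOLOv5x, at about 42.7 ms per image versus 24.2 ms.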

Table 2. Comparison of detection results of different algorithms

Per-class columns report AP/%.

| Algorithm | Backbone | mAP/% | Pedestrian | Person | Bicycle | Car | Van | Truck | Tricycle | Awning-tricycle | Bus | Motor |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| RetinaNet | R50 | 13.9 | 13.0 | 7.9 | 1.4 | 45.5 | 19.9 | 11.5 | 6.3 | 4.2 | 17.8 | 11.8 |
| Faster R-CNN | X101 | 22.4 | 21.3 | 15.5 | 7.9 | 52.0 | 29.5 | 20.5 | 14.7 | 8.9 | 32.1 | 21.6 |
| Cascade R-CNN | R50 | 23.2 | 22.2 | 14.8 | 7.6 | 54.6 | 31.5 | 21.6 | 14.8 | 8.6 | 34.9 | 21.4 |
| Faster R-CNN+MMF | R50 | 22.6 | 21.6 | 15.3 | 9.6 | 51.5 | 28.5 | 20.4 | 15.9 | 7.5 | 33.7 | 21.6 |
| Faster R-CNN+SimCal | R50 | 20.0 | 18.7 | 13.8 | 5.7 | 51.0 | 28.4 | 16.4 | 13.6 | 5.9 | 27.0 | 19.4 |
| Faster R-CNN+BGS | R50 | 23.0 | 21.8 | 16.0 | 8.1 | 51.8 | 31.1 | 19.8 | 15.0 | 8.4 | 36.1 | 21.5 |
| RetinaNet+DSHNet | R50 | 16.1 | 14.1 | 8.9 | 1.3 | 48.2 | 24.8 | 14.2 | 8.8 | 6.0 | 21.6 | 13.1 |
| Faster R-CNN+DSHNet | R50 | 24.6 | 22.5 | 16.5 | 10.1 | 52.8 | 32.6 | 22.1 | 17.5 | 8.8 | 39.5 | 23.7 |
| Faster R-CNN+DSHNet | X101 | 25.8 | 23.3 | 16.7 | 11.4 | 53.7 | 33.1 | 23.8 | 19.5 | 11.1 | 40.0 | 25.5 |
| Cascade R-CNN+DSHNet | R50 | 26.2 | 23.2 | 16.1 | 11.2 | 55.5 | 33.5 | 25.2 | 19.1 | 10.0 | 43.0 | 25.1 |
| Proposed model | CSPDarknet53 | 27.4 | 28.9 | 6.0 | 9.5 | 60.1 | 36.2 | 34.5 | 16.7 | 17.2 | 47.2 | 18.0 |
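In Table 2, each row's reported mAP matches, to one decimal place, the unweighted mean of its ten per-class AP values. For example, the proposed model's class APs sum to 274.3, giving 27.43. The sketch below checks this for a few rows copied from the table. The names `ROWS` and `mean_ap` are illustrative.

```python
from statistics import fmean

CLASSES = ["Pedestrian", "Person", "Bicycle", "Car", "Van", "Truck",
           "Tricycle", "Awning-tricycle", "Bus", "Motor"]

# (reported mAP %, per-class AP % in CLASSES order), copied from Table 2
ROWS = {
    "RetinaNet (R50)": (13.9, [13.0, 7.9, 1.4, 45.5, 19.9, 11.5, 6.3, 4.2, 17.8, 11.8]),
    "Cascade R-CNN+DSHNet (R50)": (26.2, [23.2, 16.1, 11.2, 55.5, 33.5, 25.2, 19.1, 10.0, 43.0, 25.1]),
    "Proposed model (CSPDarknet53)": (27.4, [28.9, 6.0, 9.5, 60.1, 36.2, 34.5, 16.7, 17.2, 47.2, 18.0]),
}


def mean_ap(class_aps):
    """mAP as the unweighted (macro) mean of per-class AP: every class counts equally."""
    if len(class_aps) != len(CLASSES):
        raise ValueError("expected one AP value per class")
    return fmean(class_aps)


if __name__ == "__main__":
    for name, (reported, aps) in ROWS.items():
        print(f"{name}: mean of class APs = {mean_ap(aps):.2f}, reported mAP = {reported}")
```

Because the mean is unweighted, each class contributes equally regardless of how many instances it has. The proposed model's low Person AP (6.0) therefore lowers its mAP as much as an equally low AP on any other class would.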

Table 3. Comparison of mean average precision and detection speed of different algorithms

| Algorithm | Backbone | mAP/% | Detection speed/FPS |
|---|---|---|---|
| RetinaNet+DSHNet | R50 | 16.1 | 19 |
| Faster R-CNN+DSHNet | R50 | 24.6 | 22.5 |
| Cascade R-CNN+DSHNet | R50 | 26.2 | 15 |
| Proposed model | CSPDarknet53 | 27.4 | 23.4 |
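Table 3 supports the headline claim in the abstract. The strongest DSHNet variant (Cascade R-CNN+DSHNet, 26.2%) is 1.2 percentage points below the proposed model (27.4%), and the proposed model also has the highest detection speed of the four rows. The sketch below re-derives these comparisons from the table values only. The names `BASELINES`, `PROPOSED` and `compare_to_strongest` are illustrative.

```python
# Rows of Table 3: name -> (mAP %, detection speed in FPS)
BASELINES = {
    "RetinaNet+DSHNet": (16.1, 19.0),
    "Faster R-CNN+DSHNet": (24.6, 22.5),
    "Cascade R-CNN+DSHNet": (26.2, 15.0),
}
PROPOSED = (27.4, 23.4)


def compare_to_strongest(proposed, baselines):
    """Pick the baseline with the highest mAP; report the mAP gap (points) and FPS ratio."""
    name = max(baselines, key=lambda k: baselines[k][0])
    base_map, base_fps = baselines[name]
    return name, round(proposed[0] - base_map, 1), round(proposed[1] / base_fps, 2)


if __name__ == "__main__":
    name, gain, speedup = compare_to_strongest(PROPOSED, BASELINES)
    print(f"Strongest baseline: {name}; mAP +{gain} pts; {speedup}x its FPS")
    print("Fastest of all rows:", all(PROPOSED[1] > fps for _, fps in BASELINES.values()))
```

The mAP gap is stated in percentage points because both values are already percentages. A relative improvement (1.2 / 26.2, roughly 4.6%) would be a different quantity.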

