
Abstract:

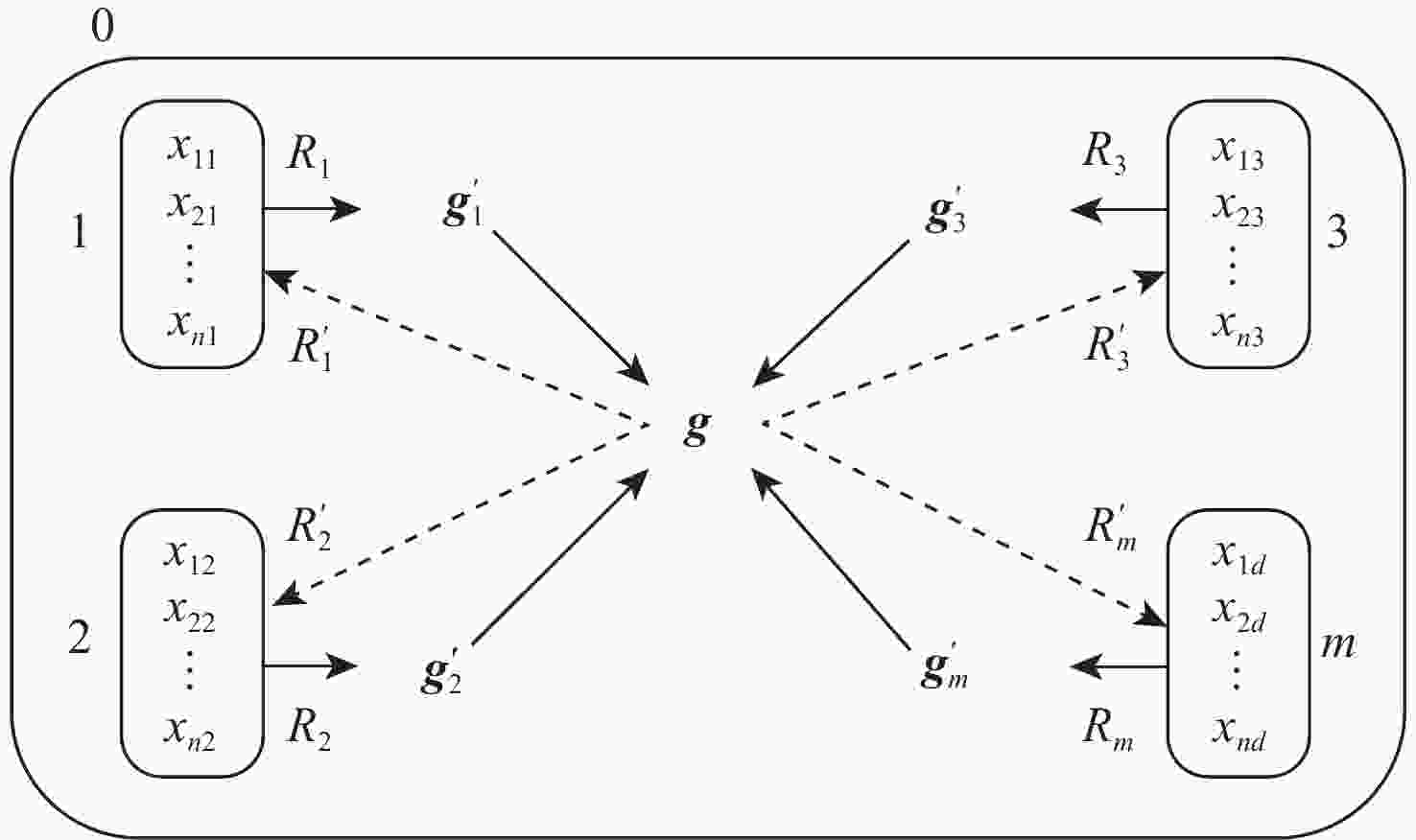

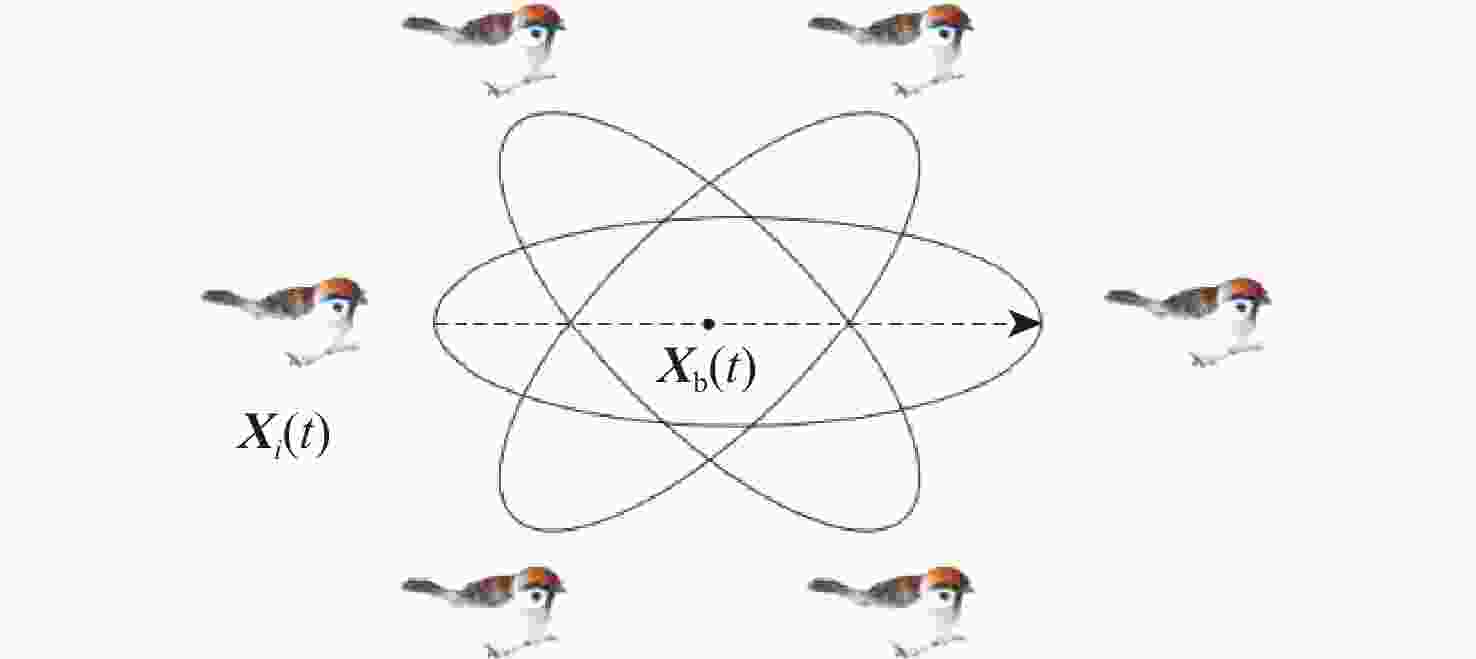

The original sparrow search algorithm (SSA) suffers from low optimization accuracy and tends to fall into local extrema in the later stages of iteration. By combining an improved sparrow search algorithm, which has efficient optimization performance, with membrane computing, which offers parallel computing capability, an intra-membrane sparrow optimization algorithm (IMSSA) is proposed. Experimental results on 10 CEC2017 test functions show that IMSSA achieves higher optimization accuracy. To further verify its performance, IMSSA is used to optimize the parameters of the extreme learning machine (ELM), yielding an intra-membrane sparrow optimized ELM algorithm (IMSSA-ELM), which is applied to software defect prediction. Experimental results on 15 public software defect datasets show that IMSSA-ELM clearly outperforms four other state-of-the-art comparison algorithms under the two evaluation metrics G-mean and MCC, indicating better prediction accuracy and stability. These results are statistically significant under the Friedman ranking and Holm’s post-hoc nonparametric tests.


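Table 1. Characteristics of CEC2017 benchmark functions

| Function | No. | Dimension | Range | Optimum |
| --- | --- | --- | --- | --- |
| Shifted and rotated Rastrigin function | C01 | 10 | [−100, 100] | 500 |
| Shifted and rotated Lunacek Bi_Rastrigin function | C02 | 10 | [−100, 100] | 700 |
| Shifted and rotated non-continuous Rastrigin function | C03 | 10 | [−100, 100] | 800 |
| Hybrid function 4 (N=4) | C04 | 10 | [−100, 100] | 1400 |
| Hybrid function 6 (N=4) | C05 | 10 | [−100, 100] | 1600 |
| Hybrid function 6 (N=5) | C06 | 10 | [−100, 100] | 1700 |
| Hybrid function 6 (N=6) | C07 | 10 | [−100, 100] | 2000 |
| Composition function 1 (N=3) | C08 | 10 | [−100, 100] | 2100 |
| Composition function 1 (N=4) | C09 | 10 | [−100, 100] | 2300 |
| Composition function 4 (N=4) | C10 | 10 | [−100, 100] | 2400 |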

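Table 2. Optimization results of each algorithm

| Algorithm | C01 best | C01 worst | C01 mean | C01 std | C02 best | C02 worst | C02 mean | C02 std |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IMSSA | 33.914 | 117.73 | 78.579 | 19.647 | 96.636 | 158.07 | 133.03 | 16.493 |
| ASSA | 39.103 | 158.02 | 126.51 | 31.707 | 102.40 | 172.78 | 133.79 | 13.606 |
| SSA | 326.94 | 470.74 | 412.07 | 33.216 | 642.36 | 773.56 | 727.59 | 28.529 |
| IMODE | 215.01 | 356.19 | 266.06 | 44.849 | 621.82 | 1252.6 | 861.21 | 250.12 |
| AGSK | 76.287 | 103.06 | 88.230 | 8.2978 | 110.59 | 136.81 | 124.19 | 8.6883 |

| Algorithm | C03 best | C03 worst | C03 mean | C03 std | C04 best | C04 worst | C04 mean | C04 std |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IMSSA | 31.211 | 77.441 | 62.421 | 9.1380 | 96.697 | 1124.3 | 285.88 | 227.83 |
| ASSA | 32.685 | 99.041 | 58.822 | 14.050 | 109.51 | 27414 | 3631.9 | 6926.8 |
| SSA | 275.84 | 382.17 | 331.99 | 31.277 | 97.980 | 19223 | 4102.0 | 4774.3 |
| IMODE | 176.10 | 300.47 | 237.55 | 38.684 | 103.57 | 260.38 | 167.53 | 53.626 |
| AGSK | 71.912 | 112.95 | 98.216 | 11.242 | 42.865 | 56.807 | 47.673 | 4.8499 |

| Algorithm | C05 best | C05 worst | C05 mean | C05 std | C06 best | C06 worst | C06 mean | C06 std |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IMSSA | 90.223 | 682.57 | 428.26 | 138.95 | 78.836 | 195.85 | 133.41 | 35.781 |
| ASSA | 158.57 | 914.91 | 580.22 | 186.11 | 71.840 | 532.16 | 250.55 | 126.47 |
| SSA | 2415.5 | 7295.7 | 4168.8 | 1127.5 | 938.01 | 9739.8 | 3140.3 | 2490.5 |
| IMODE | 538.56 | 2204.6 | 1498.1 | 544.34 | 587.23 | 1456.8 | 967.73 | 255.26 |
| AGSK | 550.84 | 907.42 | 738.77 | 104.88 | 99.017 | 243.21 | 146.12 | 45.266 |

| Algorithm | C07 best | C07 worst | C07 mean | C07 std | C08 best | C08 worst | C08 mean | C08 std |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IMSSA | 71.457 | 352.03 | 201.48 | 73.075 | 160.58 | 317.37 | 268.29 | 32.983 |
| ASSA | 75.892 | 510.55 | 292.15 | 129.06 | 190.55 | 323.88 | 279.25 | 40.194 |
| SSA | 732.58 | 1486.9 | 1090.4 | 187.73 | 438.64 | 735.10 | 633.05 | 61.259 |
| IMODE | 488.72 | 979.70 | 766.05 | 155.53 | 384.49 | 450.60 | 417.83 | 22.045 |
| AGSK | 212.50 | 412.20 | 281.83 | 60.994 | 177.14 | 307.28 | 264.04 | 43.527 |

| Algorithm | C09 best | C09 worst | C09 mean | C09 std | C10 best | C10 worst | C10 mean | C10 std |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IMSSA | 355.26 | 441.35 | 394.00 | 19.205 | 286.69 | 537.23 | 450.48 | 65.565 |
| ASSA | 442.70 | 647.13 | 506.88 | 37.550 | 527.92 | 779.56 | 681.47 | 59.241 |
| SSA | 829.88 | 1979.9 | 1286.9 | 213.85 | 1138.1 | 1798.2 | 1457.1 | 138.83 |
| IMODE | 671.16 | 888.74 | 776.26 | 83.061 | 802.47 | 1112.9 | 955.99 | 97.061 |
| AGSK | 41629 | 45327 | 43461 | 11806 | 474.97 | 525.60 | 501.22 | 13.510 |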

Table 3. Friedman average rankings and APVs of the best values of each algorithm

| Algorithm | Friedman ranking | p-value |
| --- | --- | --- |
| IMSSA | 1.2000 | |
| ASSA | 2.3000 | 0.0500 |
| AGSK | 2.6000 | 0.0250 |
| IMODE | 4.0000 | 0.0167 |
| SSA | 4.9000 | 0.0125 |
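The values in the p-value column of Table 3 coincide numerically with the Holm step-down thresholds α/(m − i + 1) for α = 0.05 and m = 4 comparisons against the control. Whether the column reports thresholds or adjusted p-values is not stated here, so the following is only a minimal sketch of how such thresholds arise. The function names and the p-values in the usage line are hypothetical.

```python
def holm_thresholds(alpha, m):
    """Holm step-down thresholds: alpha/m, alpha/(m-1), ..., alpha/1."""
    return [alpha / (m - i) for i in range(m)]


def holm_reject(p_values, alpha=0.05):
    """Return rejection decisions (in input order) under Holm's procedure."""
    m = len(p_values)
    # Visit hypotheses from the smallest p-value to the largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    thresholds = holm_thresholds(alpha, m)
    reject = [False] * m
    for step, idx in enumerate(order):
        if p_values[idx] <= thresholds[step]:
            reject[idx] = True
        else:
            break  # every remaining (larger) p-value is retained as well
    return reject


# Thresholds for alpha = 0.05 and four comparisons with the control.
thresholds = [round(t, 4) for t in holm_thresholds(0.05, 4)]
print(thresholds)  # [0.0125, 0.0167, 0.025, 0.05]

# Hypothetical unadjusted p-values, for illustration only.
print(holm_reject([0.001, 0.04, 0.03, 0.2]))
```

The smallest threshold, 0.0125, is applied to the comparison with the smallest p-value, which is typically the algorithm ranked furthest from the control.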

Table 4. Friedman average rankings and APVs of the standard deviations of each algorithm

| Algorithm | Friedman ranking | p-value |
| --- | --- | --- |
| AGSK | 1.5000 | |
| IMSSA | 2.1000 | 0.0500 |
| ASSA | 3.0000 | 0.0250 |
| IMODE | 3.8000 | 0.0167 |
| SSA | 4.6000 | 0.0125 |

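Table 5. Basic information of the datasets

| Group | Features | Dataset | Samples | Attributes | Defects | Defect rate | Principal components |
| --- | --- | --- | --- | --- | --- | --- | --- |
| NASA | 37 | PC1 | 735 | 2 | 61 | 0.0830 | 11 |
| NASA | 37 | CM1 | 344 | 2 | 42 | 0.1221 | 10 |
| NASA | 39 | MC2 | 125 | 2 | 44 | 0.3520 | 10 |
| NASA | 37 | MW1 | 263 | 2 | 27 | 0.1027 | 10 |
| SOFTLAB | 29 | ar6 | 101 | 2 | 15 | 0.1485 | 7 |
| AEEEM | 61 | EQ | 324 | 2 | 129 | 0.3981 | 20 |
| AEEEM | 61 | JDT | 997 | 2 | 206 | 0.2066 | 23 |
| AEEEM | 61 | PDE | 1497 | 2 | 209 | 0.1403 | 22 |
| AEEEM | 61 | ML | 1862 | 2 | 245 | 0.1321 | 26 |
| MORPH | 20 | arc | 234 | 2 | 27 | 0.1154 | 9 |
| MORPH | 20 | camel-1.0 | 339 | 2 | 13 | 0.0383 | 11 |
| MORPH | 20 | poi-1.5 | 237 | 2 | 136 | 0.5738 | 11 |
| MORPH | 20 | redktor | 176 | 2 | 27 | 0.1534 | 10 |
| MORPH | 20 | velocity-1.4 | 196 | 2 | 146 | 0.7449 | 11 |
| JIRA | 65 | Hive-0.9.0 | 1416 | 2 | 283 | 0.1998 | 21 |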

Table 6. G-mean values predicted by each algorithm

| Dataset | ELM | KPWE | PSO-ELM | ASSA-ELM | IMSSA-ELM |
| --- | --- | --- | --- | --- | --- |
| PC1 | 0.7103 | 0.5828 | 0.9019 | 0.8316 | 0.9134 |
| CM1 | 0.4933 | 0.5248 | 0.7480 | 0.7894 | 0.8122 |
| MC2 | 0.3742 | 0.5432 | 0.8675 | 0.9181 | 0.9289 |
| MW1 | 0.4390 | 0.6605 | 0.8090 | 0.6854 | 0.8505 |
| ar6 | 0.5941 | 0.4529 | 0.9360 | 0.9080 | 0.9841 |
| EQ | 0.6707 | 0.6552 | 0.8795 | 0.8484 | 0.8511 |
| JDT | 0.6958 | 0.6227 | 0.8667 | 0.7988 | 0.8248 |
| PDE | 0.5616 | 0.5689 | 0.7332 | 0.6803 | 0.7437 |
| ML | 0.7029 | 0.4808 | 0.7694 | 0.7751 | 0.7717 |
| arc | 0.5738 | 0.5828 | 0.8385 | 0.7818 | 0.8898 |
| camel-1.0 | 0.5581 | 0.4493 | 0.6274 | 0.7034 | 0.9177 |
| poi-1.5 | 0.5742 | 0.6816 | 0.7732 | 0.7900 | 0.8576 |
| redktor | 0.6782 | 0.6453 | 0.8023 | 0.8643 | 0.8832 |
| velocity-1.4 | 0.6804 | 0.7165 | 0.8660 | 0.8929 | 0.9328 |
| hive-0.9.0 | 0.7079 | 0.7120 | 0.7721 | 0.7887 | 0.8101 |

Table 7. MCC values predicted by each algorithm

| Dataset | ELM | KPWE | PSO-ELM | ASSA-ELM | IMSSA-ELM |
| --- | --- | --- | --- | --- | --- |
| PC1 | 0.3312 | 0.2173 | 0.6262 | 0.4830 | 0.6377 |
| CM1 | 0.0401 | 0.1746 | 0.4754 | 0.4811 | 0.5500 |
| MC2 | 0.0891 | 0.3057 | 0.8162 | 0.8458 | 0.8535 |
| MW1 | 0.0132 | 0.3349 | 0.5764 | 0.4148 | 0.5682 |
| ar6 | 0.1715 | 0.2048 | 0.9044 | 0.8079 | 0.9305 |
| EQ | 0.3564 | 0.4168 | 0.7735 | 0.7097 | 0.6978 |
| JDT | 0.3798 | 0.4448 | 0.6946 | 0.5885 | 0.6160 |
| PDE | 0.1266 | 0.2905 | 0.4243 | 0.3381 | 0.4169 |
| ML | 0.2964 | 0.2237 | 0.4518 | 0.4298 | 0.4354 |
| arc | 0.1101 | 0.2537 | 0.5960 | 0.4780 | 0.7345 |
| camel-1.0 | 0.2678 | 0.1200 | 0.4882 | 0.3781 | 0.7725 |
| poi-1.5 | 0.1475 | 0.3776 | 0.5475 | 0.5804 | 0.7102 |
| redktor | 0.2840 | 0.3497 | 0.6808 | 0.6550 | 0.7828 |
| velocity-1.4 | 0.3752 | 0.4004 | 0.8393 | 0.7866 | 0.9137 |
| hive-0.9.0 | 0.3955 | 0.4752 | 0.4931 | 0.5239 | 0.5806 |
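For readers unfamiliar with the two evaluation metrics, the following minimal sketch computes G-mean and MCC from a binary confusion matrix. It assumes the standard definitions (G-mean as the geometric mean of the recall on the defective and non-defective classes, MCC as the Matthews correlation coefficient); the exact formulas used in the experiments are not shown in this section, and the confusion-matrix counts below are hypothetical.

```python
import math


def g_mean(tp, fp, tn, fn):
    """Geometric mean of the recall on the positive and negative classes."""
    recall_pos = tp / (tp + fn) if (tp + fn) else 0.0
    recall_neg = tn / (tn + fp) if (tn + fp) else 0.0
    return math.sqrt(recall_pos * recall_neg)


def mcc(tp, fp, tn, fn):
    """Matthews correlation coefficient; returns 0.0 when it is undefined."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical counts: 45 defective and 55 non-defective modules.
tp, fp, tn, fn = 40, 10, 45, 5
print(f"G-mean = {g_mean(tp, fp, tn, fn):.4f}")
print(f"MCC    = {mcc(tp, fp, tn, fn):.4f}")
```

Both metrics use all four cells of the confusion matrix, which is why they are commonly preferred over plain accuracy on class-imbalanced defect data.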

Table 8. Friedman ranking and APVs of G-mean

| Algorithm | Friedman ranking | p-value |
| --- | --- | --- |
| IMSSA-ELM | 1.2000 | |
| ASSA-ELM | 1.4000 | 0.0753 |
| PSO-ELM | 1.4000 | 0.0753 |
| KPWE | 4.4667 | 0.0000 |
| ELM | 4.5333 | 0.0000 |
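As an illustration of how Friedman average rankings are obtained, the sketch below ranks the five algorithms on each dataset using the G-mean values of Table 6 (rank 1 for the highest value) and averages the ranks over the 15 datasets. The helper name `average_ranks` is hypothetical, and sharing the mean rank among ties is an assumption, since the tie-handling rule is not stated here.

```python
ALGORITHMS = ["ELM", "KPWE", "PSO-ELM", "ASSA-ELM", "IMSSA-ELM"]

# G-mean values from Table 6, one row per dataset, columns as in ALGORITHMS.
G_MEAN = [
    [0.7103, 0.5828, 0.9019, 0.8316, 0.9134],  # PC1
    [0.4933, 0.5248, 0.7480, 0.7894, 0.8122],  # CM1
    [0.3742, 0.5432, 0.8675, 0.9181, 0.9289],  # MC2
    [0.4390, 0.6605, 0.8090, 0.6854, 0.8505],  # MW1
    [0.5941, 0.4529, 0.9360, 0.9080, 0.9841],  # ar6
    [0.6707, 0.6552, 0.8795, 0.8484, 0.8511],  # EQ
    [0.6958, 0.6227, 0.8667, 0.7988, 0.8248],  # JDT
    [0.5616, 0.5689, 0.7332, 0.6803, 0.7437],  # PDE
    [0.7029, 0.4808, 0.7694, 0.7751, 0.7717],  # ML
    [0.5738, 0.5828, 0.8385, 0.7818, 0.8898],  # arc
    [0.5581, 0.4493, 0.6274, 0.7034, 0.9177],  # camel-1.0
    [0.5742, 0.6816, 0.7732, 0.7900, 0.8576],  # poi-1.5
    [0.6782, 0.6453, 0.8023, 0.8643, 0.8832],  # redktor
    [0.6804, 0.7165, 0.8660, 0.8929, 0.9328],  # velocity-1.4
    [0.7079, 0.7120, 0.7721, 0.7887, 0.8101],  # hive-0.9.0
]


def average_ranks(scores, higher_is_better=True):
    """Average rank of each column over all rows; ties share the mean rank."""
    n_cols = len(scores[0])
    totals = [0.0] * n_cols
    for row in scores:
        order = sorted(range(n_cols), key=lambda j: row[j],
                       reverse=higher_is_better)
        i = 0
        while i < n_cols:
            # Extend j over a run of tied values starting at position i.
            j = i
            while j + 1 < n_cols and row[order[j + 1]] == row[order[i]]:
                j += 1
            mean_rank = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
            for k in range(i, j + 1):
                totals[order[k]] += mean_rank
            i = j + 1
    return [t / len(scores) for t in totals]


ranks = average_ranks(G_MEAN)
for name, rank in zip(ALGORITHMS, ranks):
    print(f"{name:10s} {rank:.4f}")
```

On the Table 6 values this gives 1.2000 for IMSSA-ELM, 4.4667 for KPWE and 4.5333 for ELM, in agreement with Table 8. ASSA-ELM and PSO-ELM both come out at 2.4000 rather than the 1.4000 listed in Table 8; with 2.4000 the five averages sum to 15, as they must for five algorithms ranked without ties.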

Table 9. Friedman ranking and APVs of MCC

| Algorithm | Friedman ranking | p-value |
| --- | --- | --- |
| IMSSA-ELM | 1.4000 | |
| ASSA-ELM | 2.6667 | 0.0565 |
| PSO-ELM | 1.9333 | 0.3556 |
| KPWE | 4.2000 | 0.0000 |
| ELM | 4.8000 | 0.0000 |

