
Abstract:

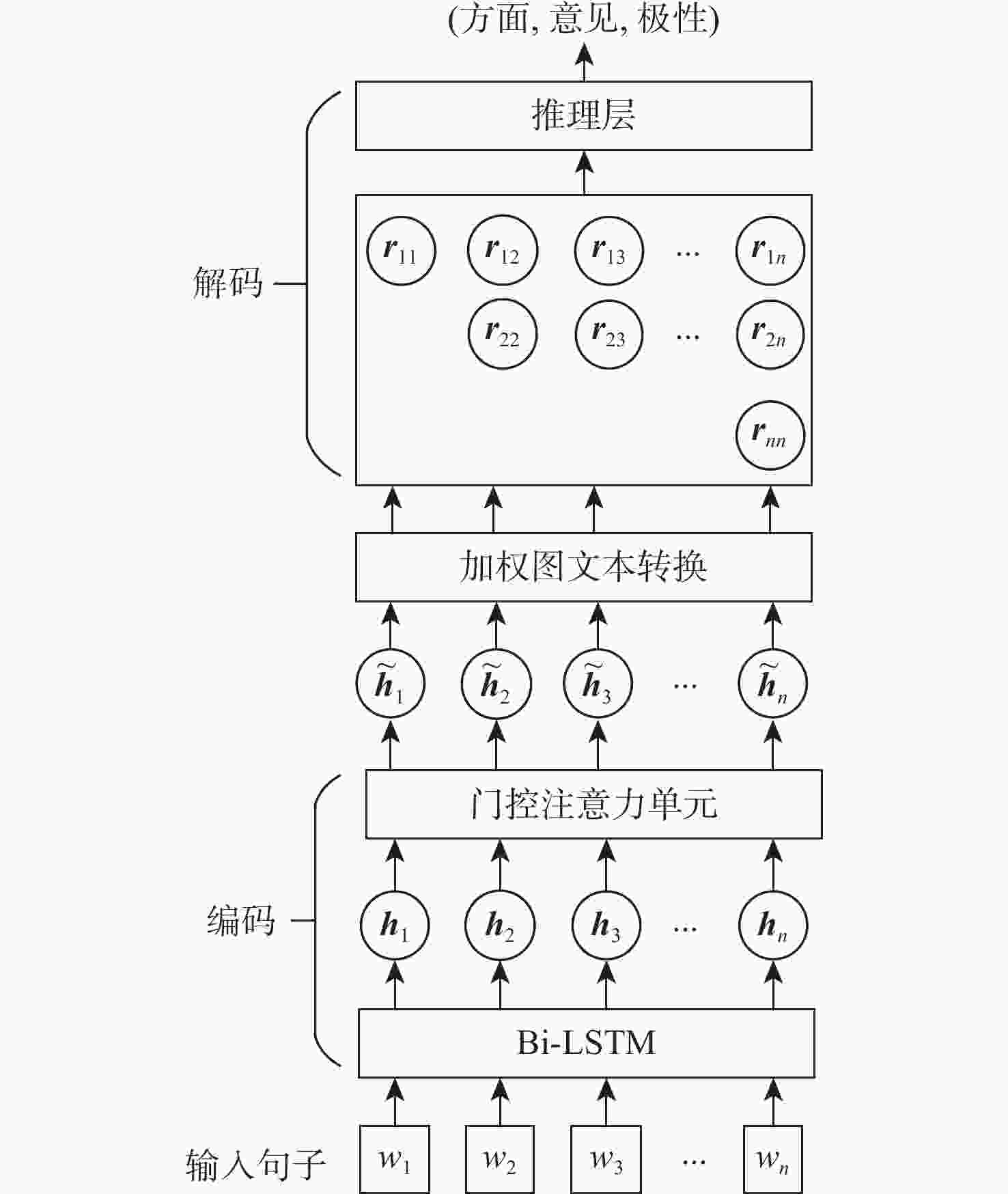

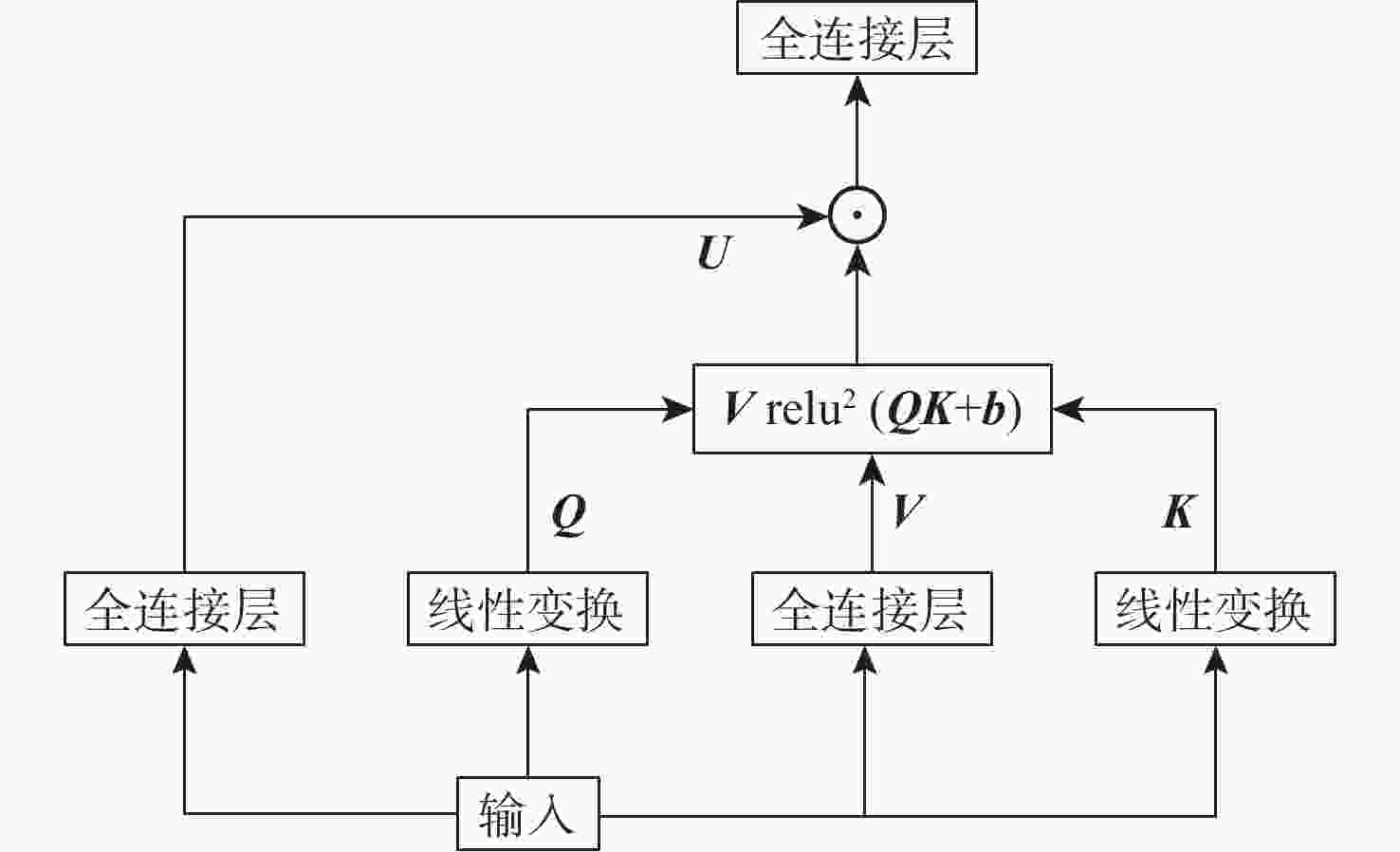

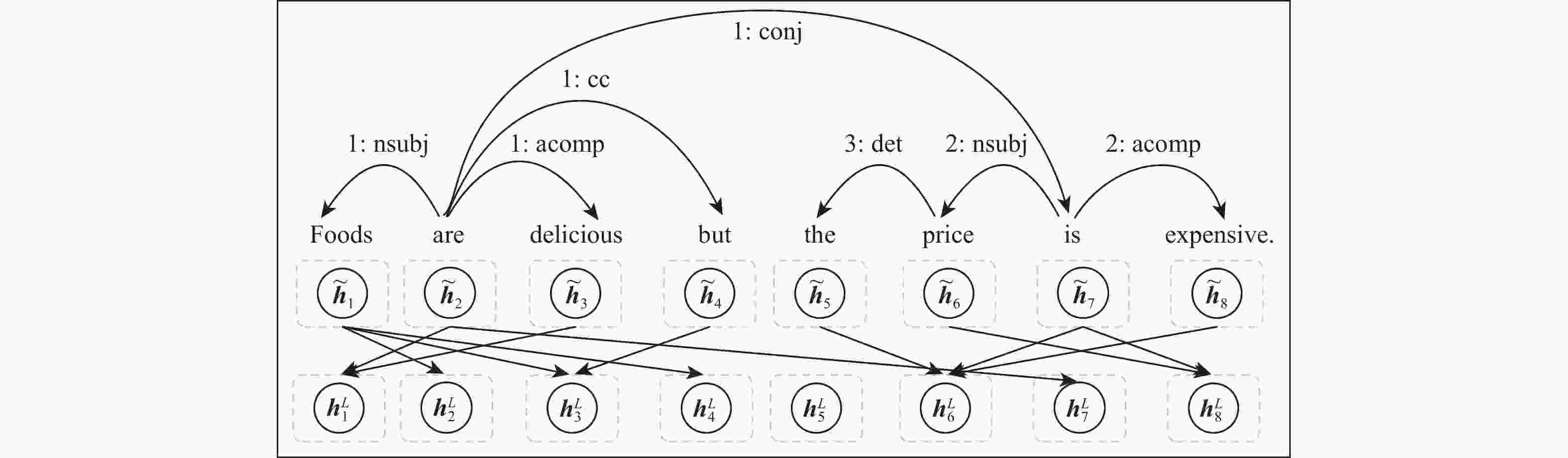

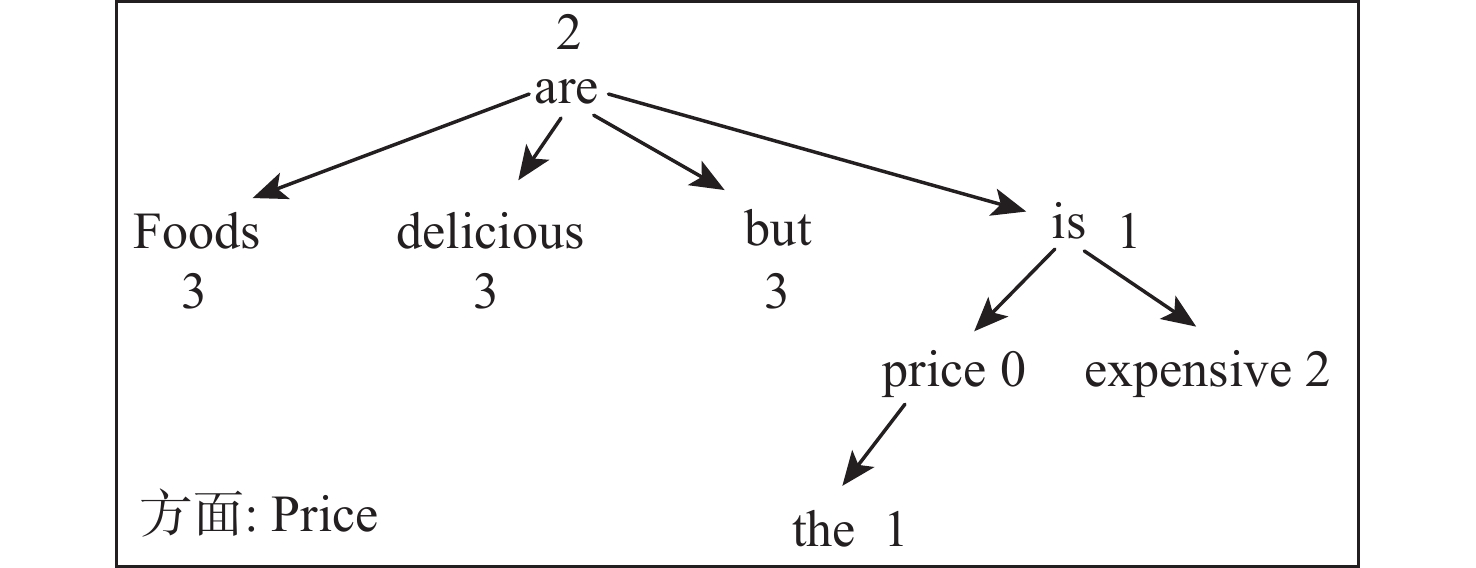

Aspect sentiment triplet extraction comprises three subtasks: aspect term extraction, opinion term extraction, and aspect sentiment classification. Methods that solve the task as a pipeline cannot exploit the interaction among these elements, and they also suffer from error propagation and redundant training. To address these problems, an aspect sentiment triplet extraction method based on gated attention and weighted graph text is proposed (the GA-DWGT model in the tables that follow), which uses the semantic and syntactic relations among triplet elements to strengthen their interaction. First, a bidirectional long short-term memory network learns a sequential feature representation of the sentence. Second, a gated attention unit learns linear connections between words. Third, a syntactic-distance-weighted graph convolutional network enhances the interaction among triplet elements. Finally, a grid tagging inference strategy predicts the triplets. Experiments on four public datasets (lap14, res14, res15, and res16) show that the proposed method effectively enhances the interaction among triplet elements and improves the accuracy of triplet extraction. Its F1 scores on the four datasets are 57.94%, 70.54%, 61.95%, and 67.66%, respectively, each an improvement over the baseline model.
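The abstract names a syntactic-distance-weighted graph convolution but does not give its formula on this page. As a rough illustration only, the sketch below assumes that two words at dependency-tree distance d receive the weight 1/(1+d), that weights beyond a cutoff are set to zero, and that each row is normalized. The function names, the cutoff `max_dist`, the toy features, and the hand-written dependency edges are all hypothetical and are not taken from the paper.

```python
import math
from collections import deque


def tree_distances(n, edges):
    """All-pairs hop distances in an undirected dependency tree, via BFS."""
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    dist = [[math.inf] * n for _ in range(n)]
    for start in range(n):
        dist[start][start] = 0
        queue = deque([start])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if dist[start][v] == math.inf:
                    dist[start][v] = dist[start][u] + 1
                    queue.append(v)
    return dist


def distance_weights(dist, max_dist=2):
    """Assumed weighting: 1 / (1 + d) within max_dist hops, else 0; rows sum to 1."""
    weights = []
    for row in dist:
        raw = [1.0 / (1 + d) if d <= max_dist else 0.0 for d in row]
        total = sum(raw)
        weights.append([w / total for w in raw])
    return weights


def gcn_layer(weights, features, kernel):
    """One graph convolution, ReLU(A_hat * H * W), written with plain lists."""
    n, d_in, d_out = len(features), len(kernel), len(kernel[0])
    agg = [[sum(weights[i][j] * features[j][k] for j in range(n))
            for k in range(d_in)] for i in range(n)]
    return [[max(0.0, sum(agg[i][k] * kernel[k][o] for k in range(d_in)))
             for o in range(d_out)] for i in range(n)]


# Tokens: 0 The, 1 bread, 2 is, 3 top, 4 notch.
# Hand-written, illustrative dependency edges (not produced by a parser).
edges = [(1, 0), (4, 1), (4, 2), (4, 3)]
dist = tree_distances(5, edges)
weights = distance_weights(dist, max_dist=2)

# Toy 2-dimensional word features and a toy 2x2 kernel.
features = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [1.0, 1.0], [0.0, 0.0]]
kernel = [[1.0, -1.0], [0.5, 1.0]]
hidden = gcn_layer(weights, features, kernel)
```

Under this assumed weighting, "bread" attends more strongly to "notch" (one hop away) than to "top" (two hops away), which is the kind of aspect-opinion interaction the weighted graph is meant to emphasize.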

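Table 1. Dataset statistics

| Dataset | Split | Sentences | Triplets | Positive triplets | Neutral triplets | Negative triplets |
|---|---|---|---|---|---|---|
| lap14 | Training | 899 | 1452 | 808 | 111 | 533 |
| lap14 | Validation | 225 | 383 | 199 | 48 | 136 |
| lap14 | Test | 332 | 547 | 364 | 67 | 116 |
| res14 | Training | 1259 | 2356 | 1693 | 172 | 491 |
| res14 | Validation | 315 | 580 | 427 | 46 | 107 |
| res14 | Test | 493 | 1008 | 784 | 68 | 156 |
| res15 | Training | 603 | 1038 | 799 | 29 | 210 |
| res15 | Validation | 151 | 239 | 181 | 9 | 49 |
| res15 | Test | 325 | 493 | 324 | 25 | 144 |
| res16 | Training | 863 | 1421 | 1036 | 55 | 330 |
| res16 | Validation | 216 | 348 | 263 | 8 | 77 |
| res16 | Test | 328 | 525 | 416 | 30 | 79 |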

Table 2. Comparison of the GA-DWGT model with baseline models (%)

| Model | P lap14 | P res14 | P res15 | P res16 | R lap14 | R res14 | R res15 | R res16 | F1 lap14 | F1 res14 | F1 res15 | F1 res16 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Li-unified-R+PD | 42.25 | 41.44 | 43.34 | 38.19 | 42.78 | 68.79 | 50.73 | 53.47 | 42.47 | 51.68 | 46.69 | 44.51 |
| Peng-unified-R+PD | 40.40 | 44.18 | 40.97 | 46.76 | 47.24 | 62.99 | 54.68 | 62.97 | 43.50 | 51.89 | 46.79 | 53.62 |
| Peng-unified-R+IOG | 48.62 | 58.89 | 51.70 | 59.25 | 45.52 | 60.41 | 46.04 | 58.09 | 47.02 | 59.64 | 48.71 | 58.67 |
| IMN+IOG | 49.21 | 59.57 | 55.24 | - | 46.23 | 63.88 | 52.33 | - | 47.68 | 61.65 | 53.75 | - |
| PASTE | 52.10 | 63.40 | 54.80 | 62.30 | 48.10 | 61.90 | 52.60 | 63.60 | 50.00 | 62.60 | 53.70 | 62.90 |
| GTS-CNN | 55.93 | 70.79 | 60.09 | 62.63 | 47.52 | 61.71 | 53.57 | 66.98 | 51.38 | 65.94 | 56.64 | 64.73 |
| GTS-LSTM | 59.42 | 67.28 | 63.26 | 66.07 | 45.13 | 61.91 | 50.71 | 65.05 | 51.30 | 64.49 | 56.29 | 65.56 |
| GA-DWGT | 59.09 | 70.67 | 65.87 | 67.80 | 47.34 | 62.42 | 50.92 | 65.39 | 52.60 | 66.21 | 57.44 | 66.29 |
| PASTE-BERT | 59.70 | 68.70 | 63.60 | 68.00 | 55.30 | 63.80 | 59.80 | 67.70 | 57.40 | 66.10 | 61.60 | 67.80 |
| GTS-BERT | 57.52 | 70.92 | 59.29 | 68.58 | 51.92 | 69.49 | 58.07 | 66.60 | 54.58 | 70.20 | 58.67 | 67.58 |
| GA-DWGT-BERT | 63.80 | 70.74 | 64.11 | 65.41 | 51.74 | 68.38 | 59.92 | 70.08 | 57.94 | 70.54 | 61.95 | 67.66 |

Table 3. Number of trainable parameters of the compared models

| Model | Trainable parameters (×10^6) |
|---|---|
| Li-unified-R+PD | 0.31 |
| Peng-unified-R+PD | 0.21 |
| Peng-unified-R+IOG | 0.36 |
| IMN+IOG | 0.45 |
| PASTE | 0.71 |
| GTS-CNN | 0.47 |
| GTS-LSTM | 0.33 |
| GA-DWGT | 0.33 |

Table 4. Ablation study results (%)

| Model | P lap14 | P res14 | P res15 | P res16 | R lap14 | R res14 | R res15 | R res16 | F1 lap14 | F1 res14 | F1 res15 | F1 res16 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| GA-DWGT-GAU-WG | 52.29 | 67.75 | 58.79 | 66.46 | 43.85 | 59.79 | 49.89 | 61.19 | 47.71 | 63.18 | 53.98 | 63.72 |
| GA-DWGT-WG | 57.07 | 67.78 | 59.65 | 67.68 | 42.20 | 61.01 | 55.01 | 64.29 | 48.52 | 64.22 | 57.23 | 65.94 |
| GA-DWGT-GAU | 55.71 | 69.57 | 62.89 | 65.59 | 43.85 | 59.59 | 49.89 | 62.55 | 49.08 | 64.20 | 55.65 | 64.03 |
| GA-DWGT | 59.09 | 70.67 | 65.87 | 67.80 | 47.34 | 62.42 | 50.92 | 65.39 | 52.60 | 66.21 | 57.44 | 66.29 |
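To read the ablation results in Table 4 more directly, the short sketch below computes how far each variant's F1 falls below the full GA-DWGT model. The F1 values are copied from Table 4. Reading the suffixes `-GAU` and `-WG` as "gated attention unit removed" and "weighted graph removed" is an assumption, and the helper name `f1_drop` is hypothetical.

```python
# F1 scores (%) copied from Table 4.
ablation_f1 = {
    "GA-DWGT-GAU-WG": {"lap14": 47.71, "res14": 63.18, "res15": 53.98, "res16": 63.72},
    "GA-DWGT-WG": {"lap14": 48.52, "res14": 64.22, "res15": 57.23, "res16": 65.94},
    "GA-DWGT-GAU": {"lap14": 49.08, "res14": 64.20, "res15": 55.65, "res16": 64.03},
    "GA-DWGT": {"lap14": 52.60, "res14": 66.21, "res15": 57.44, "res16": 66.29},
}


def f1_drop(variant, full="GA-DWGT"):
    """F1 points lost by `variant` relative to the full model, per dataset."""
    return {
        dataset: round(ablation_f1[full][dataset] - score, 2)
        for dataset, score in ablation_f1[variant].items()
    }


drops = {name: f1_drop(name) for name in ablation_f1 if name != "GA-DWGT"}
for name, per_dataset in drops.items():
    print(name, per_dataset)
```

On these numbers every variant scores below the full model on all four datasets, with the largest gaps on lap14.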

Table 5. Case study

| Example sentence | Gold triplets | GTS-CNN prediction | GTS-LSTM prediction | GA-DWGT prediction |
|---|---|---|---|---|
| Made interneting difficult to maintain | (interneting, difficult, NEG) | (maintain, difficult, POS) | (maintain, difficult, POS) | (interneting, difficult, NEG) |
| Made interneting difficult to maintain and the screen is very sharp | (speed, much more, POS); (screen, sharp, POS) | (screen, sharp, POS) | (screen, sharp, POS) | (speed, much more, POS); (screen, sharp, POS) |
| The bread is top notch as well | (bread, top notch, POS) | (bread, top notch, POS) | (bread, top notch, POS) | (bread, top notch, POS) |
| The staff should be a bit more friendly | (staff, friendly, NEG) | (staff, more friendly, POS) | (staff, more friendly, POS); (staff, should be, POS) | (staff, friendly, NEG); (staff, should, NEG) |
| The food was extremely tasty, creatively presented and the wine excellent | (food, tasty, POS); (food, creatively presented, POS); (wine, excellent, POS) | (food, tasty, POS); (food, creatively presented, POS); (wine, excellent, POS) | (food, tasty, POS); (food, creatively presented, POS); (wine, excellent, POS); (food, excellent, POS) | (food, tasty, POS); (food, creatively presented, POS); (wine, excellent, POS) |
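The case study can be connected to the precision, recall, and F1 columns of the result tables with a small worked example. The sketch below assumes exact-match scoring, where a predicted triplet counts as correct only if its aspect, opinion, and polarity all equal a gold triplet; the page does not state the paper's scoring rule, so treat this as an assumption. The function name `triplet_prf` is hypothetical, and the triplets are those of the "staff" sentence in Table 5.

```python
def triplet_prf(gold, pred):
    """Exact-match precision, recall, and F1 over (aspect, opinion, polarity) triplets."""
    gold_set, pred_set = set(gold), set(pred)
    correct = len(gold_set & pred_set)
    precision = correct / len(pred_set) if pred_set else 0.0
    recall = correct / len(gold_set) if gold_set else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


# "The staff should be a bit more friendly" (Table 5).
gold = [("staff", "friendly", "NEG")]
pred_gts_lstm = [("staff", "more friendly", "POS"), ("staff", "should be", "POS")]
pred_ga_dwgt = [("staff", "friendly", "NEG"), ("staff", "should", "NEG")]

scores_gts_lstm = triplet_prf(gold, pred_gts_lstm)  # no exact match
scores_ga_dwgt = triplet_prf(gold, pred_ga_dwgt)    # one of two predictions is correct
```

On this sentence GA-DWGT recovers the gold triplet but also emits one spurious triplet, so its recall is 1.0 while its precision is 0.5. Dataset-level scores would pool the counts over all sentences rather than average per-sentence values.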

