
Abstract:

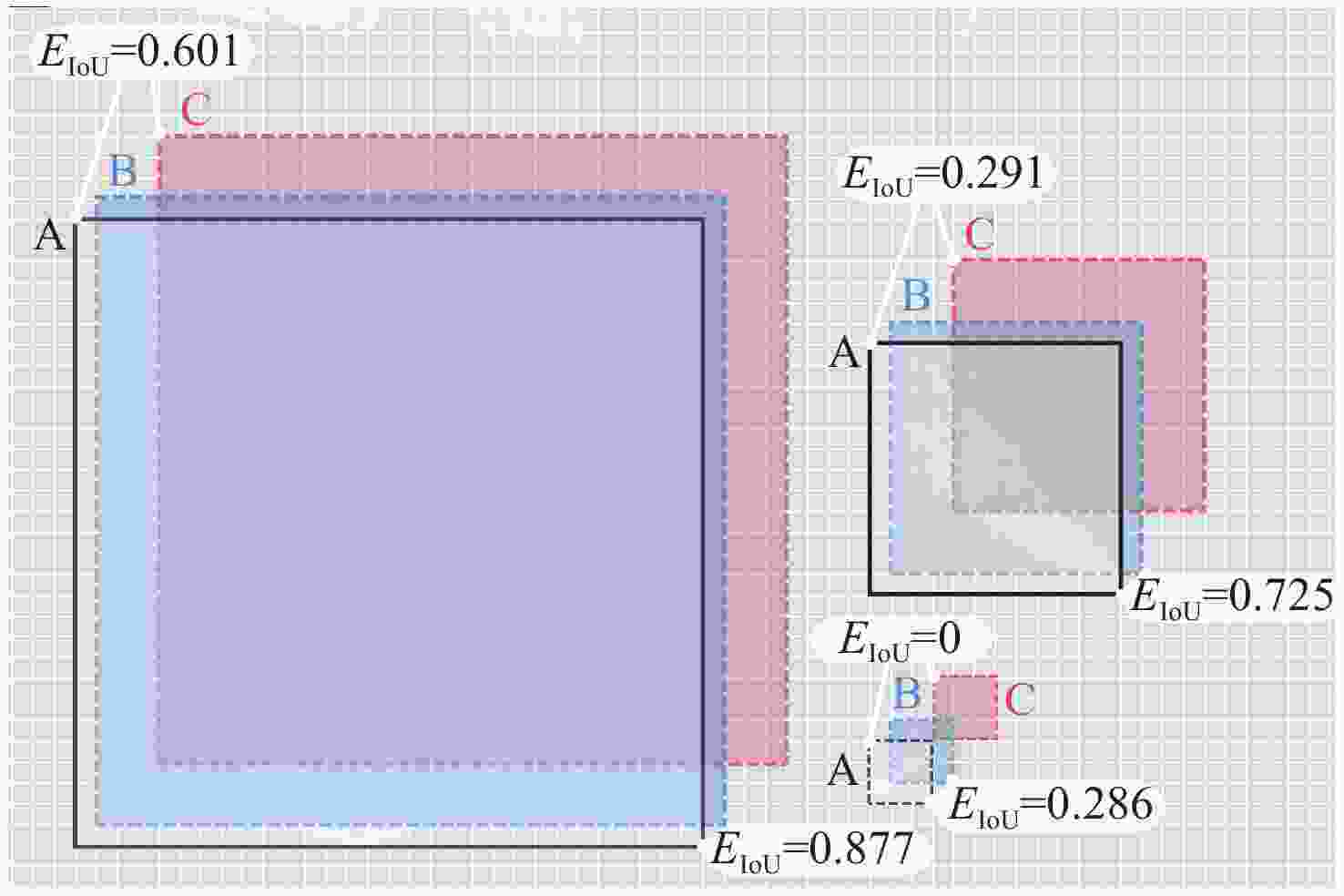

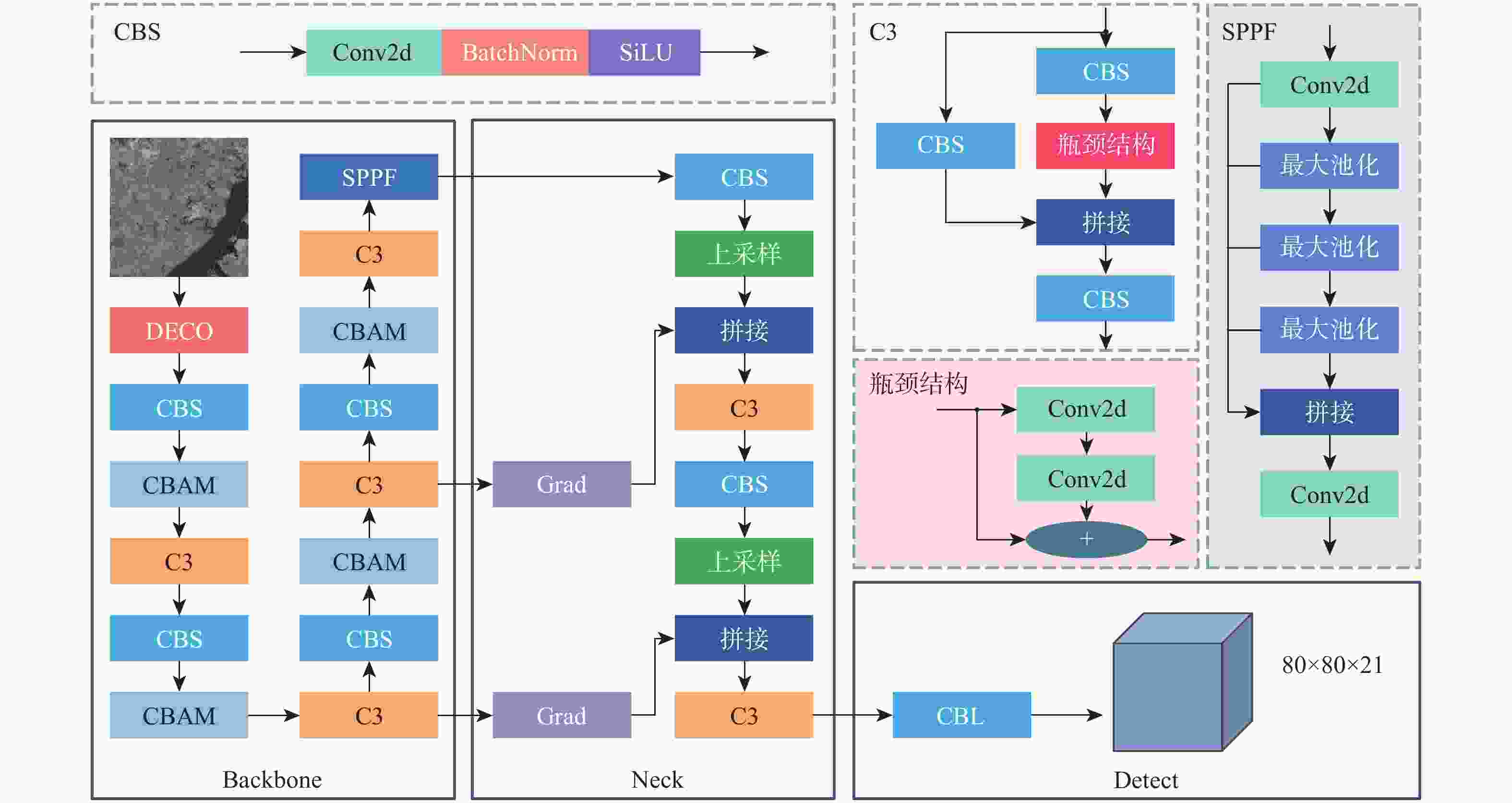

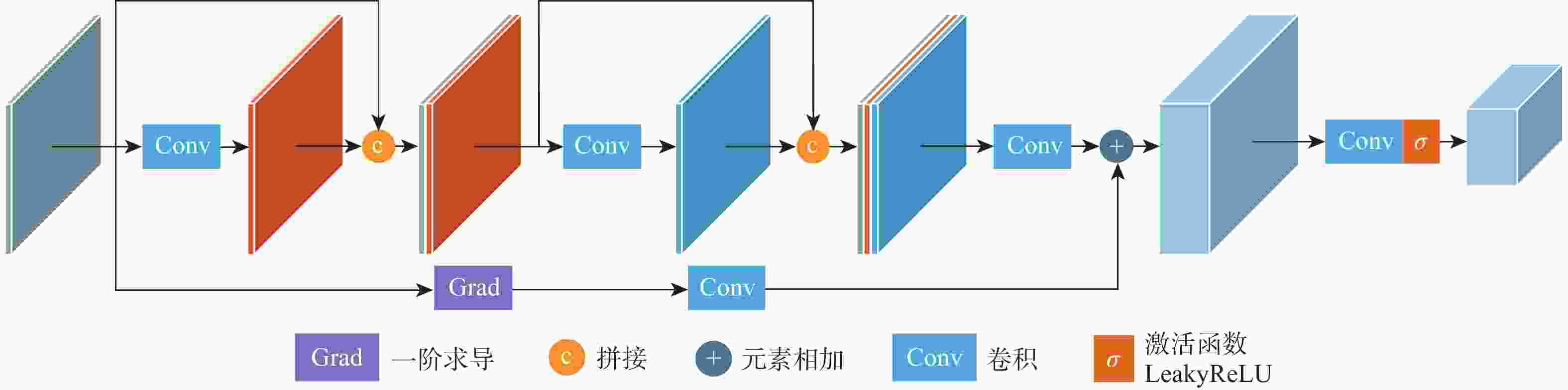

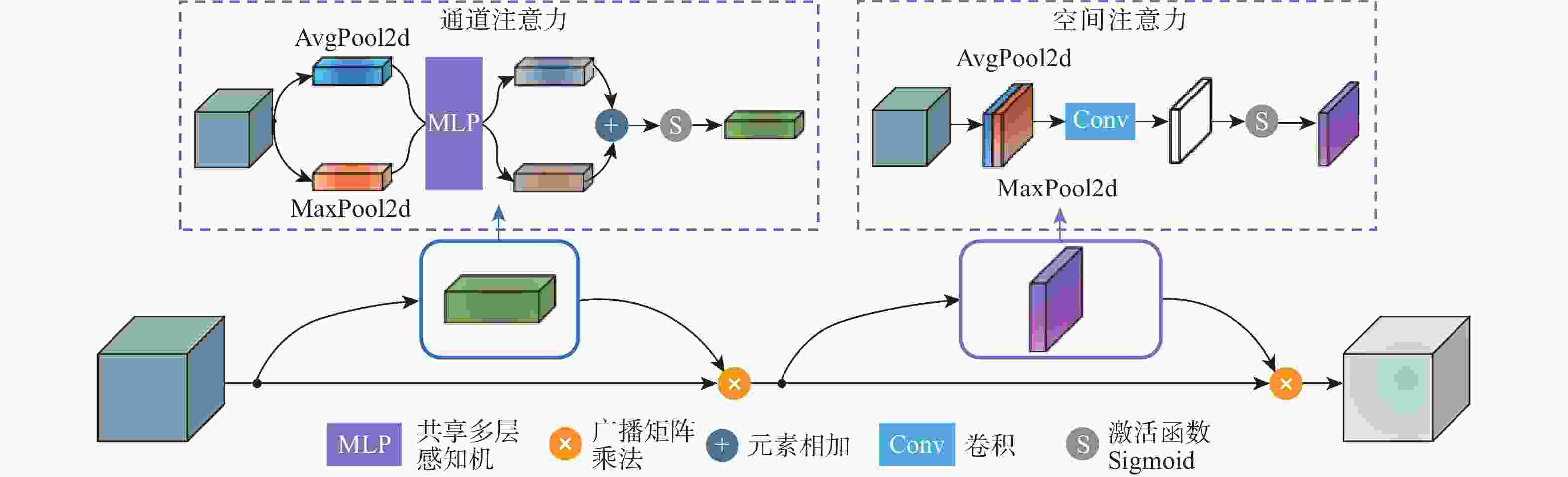

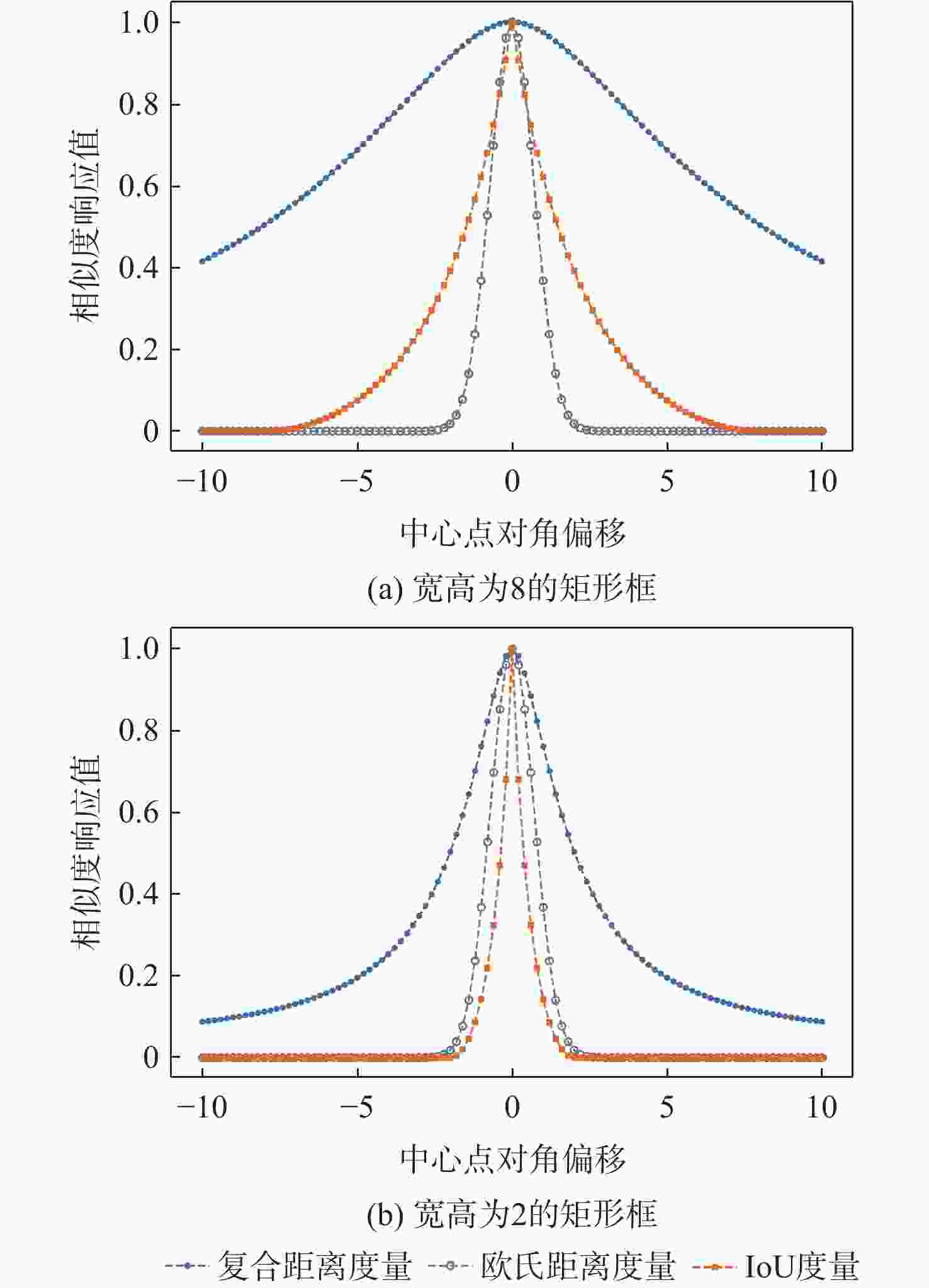

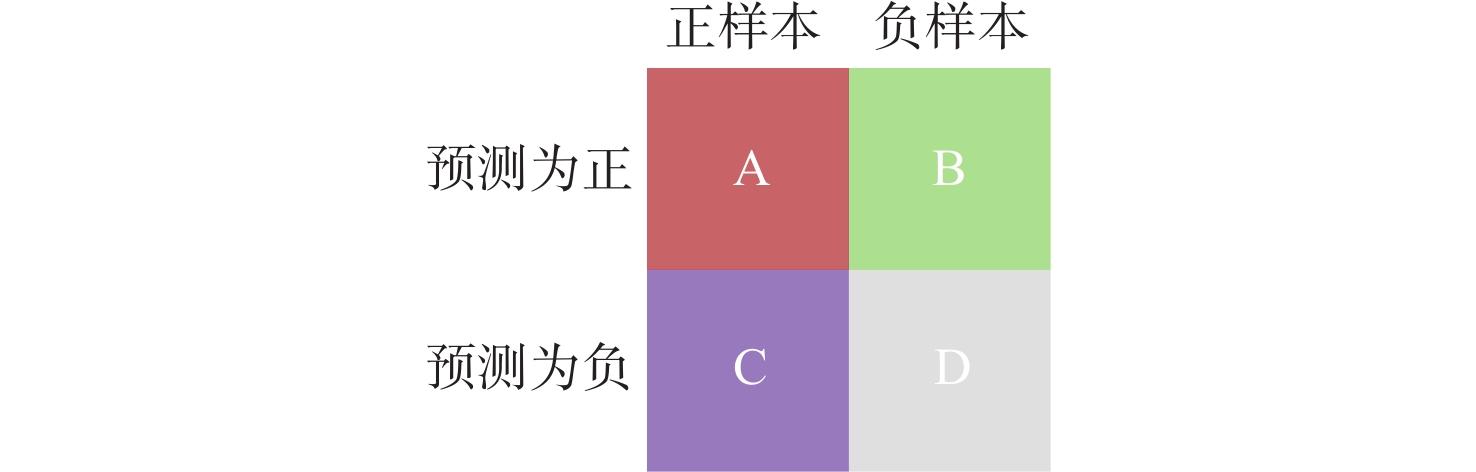

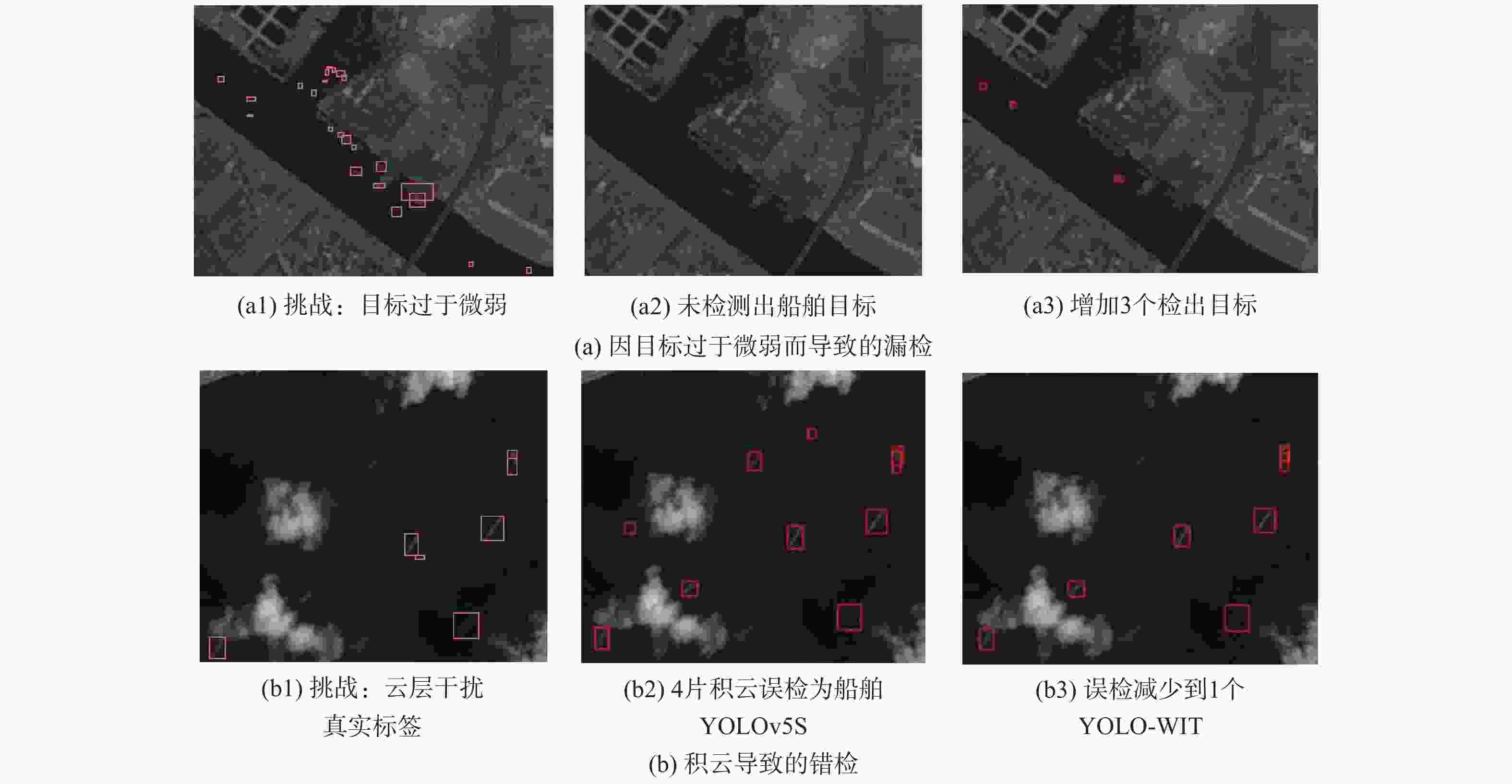

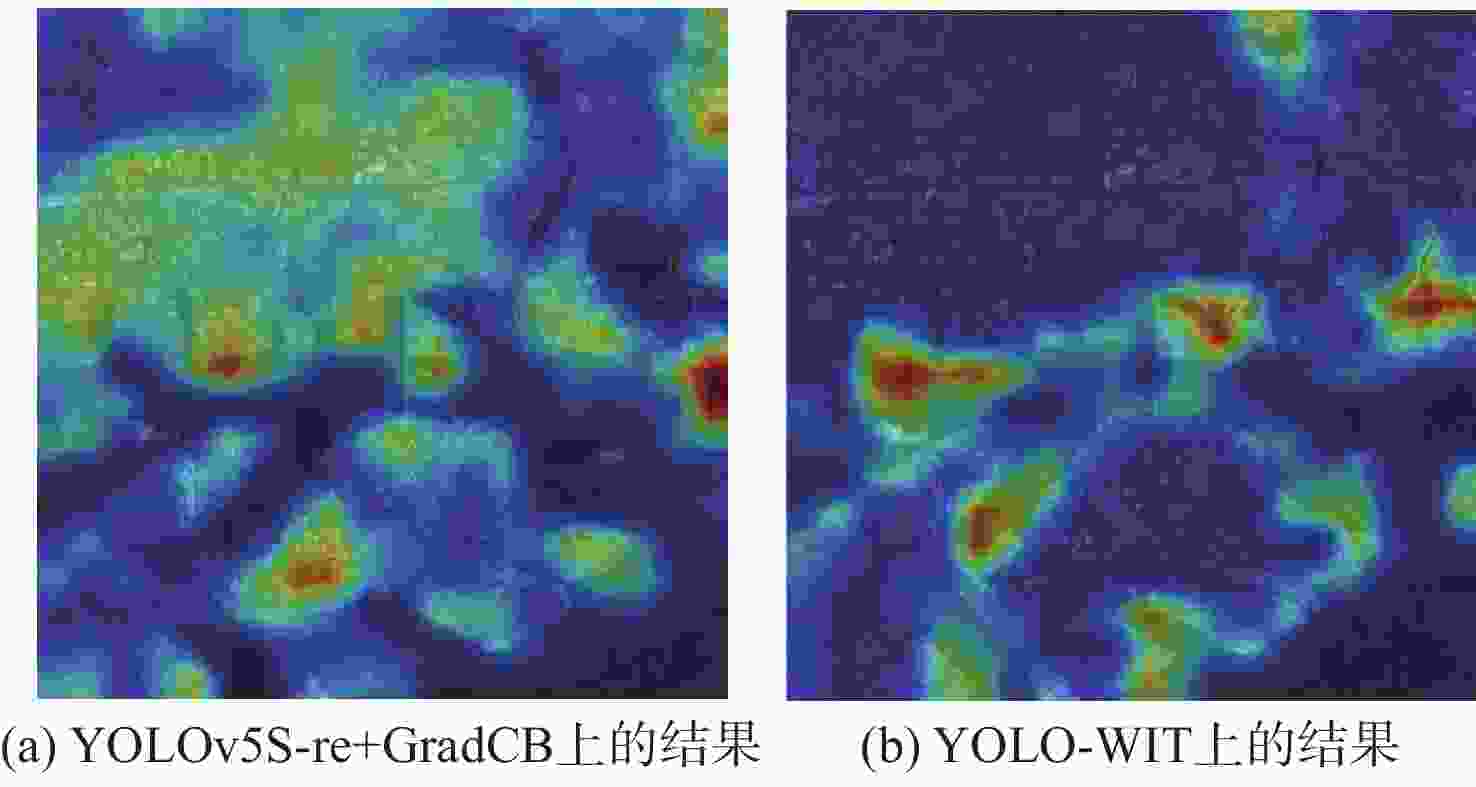

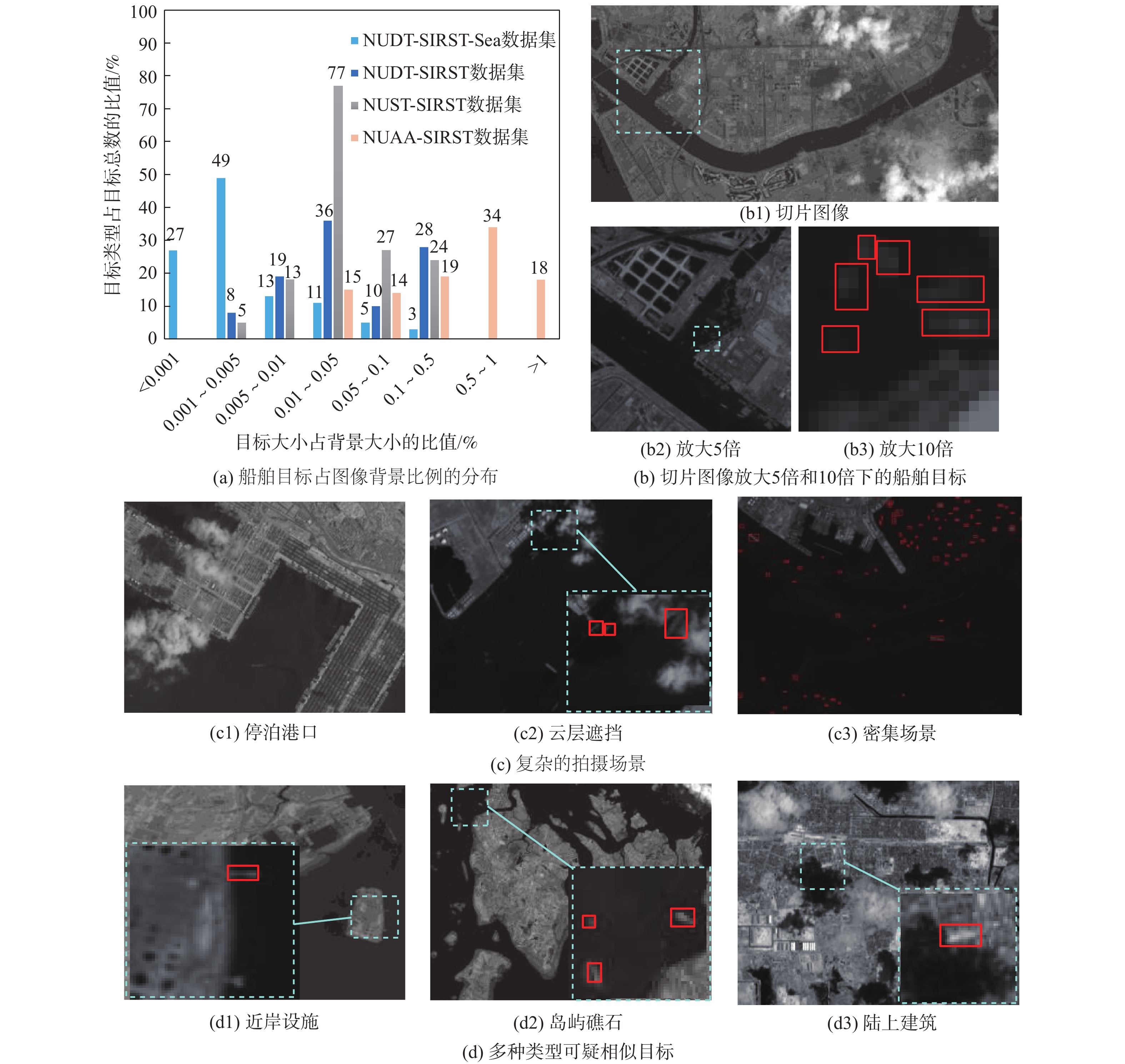

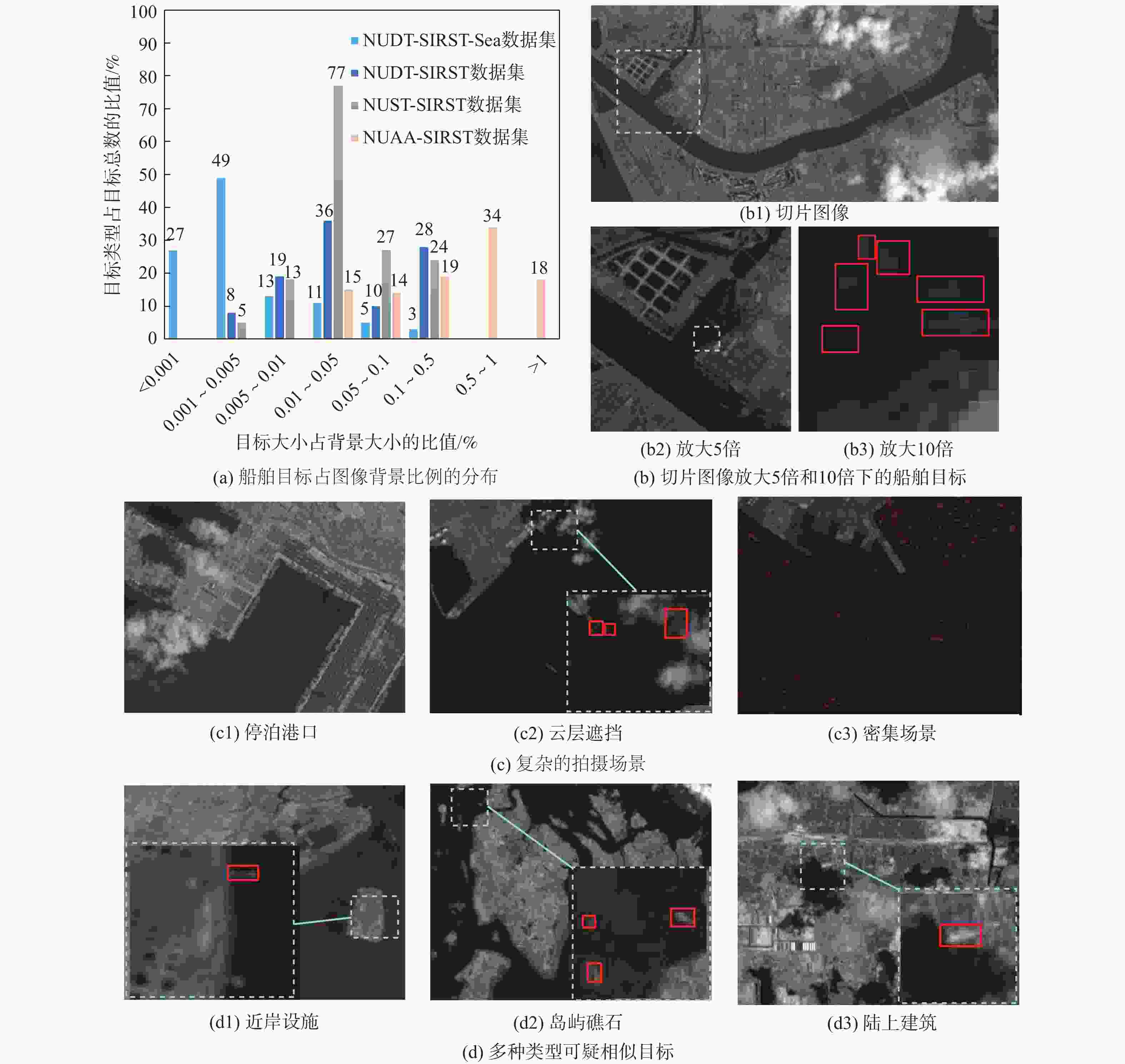

To address the challenges of detecting dim and small targets in infrared remote sensing imagery, this paper proposes YOLO-WIT (YOLO for weak infrared remote sensing ship target), a detection algorithm for dim and small ship targets. First, because dim and small targets tolerate very little positional offset, the detection heads are pruned, which reduces model size while limiting the maximum anchor offset, and a composite distance similarity measure is designed as the loss function to lower the sensitivity of the regression branch and thereby improve localization accuracy. Second, for images in which the background is bright and the target is dark, a dense concat information expansion convolution block (DECO) is designed to retain weak signals and strengthen the perception of weak features. Third, to distinguish ships from interfering objects with similar grayscale amplitude and shape, the Sobel operator is used to compute the first-order derivative of the shallow feature maps so that edge features guide the model's decisions, and a spatio-temporal attention mechanism is applied for feature enhancement. Experiments on the NUDT-SIRST-Sea dataset show that, compared with the baseline model, YOLO-WIT reduces the parameter count by 31.6%, raises $E_{\mathrm{mAP}_{50}}$ by 9 percentage points, and raises $E_{\mathrm{mAP}_{50-95}}$ by 4.9 percentage points. Compared with mainstream detection algorithms, YOLO-WIT requires fewer resources, with a model size of only $9.2\times10^{6}$, and detects dim and small ship targets more effectively.
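The edge cue mentioned in the abstract, a first-order derivative of shallow feature maps obtained with the Sobel operator, can be illustrated with a minimal NumPy sketch. This is a generic Sobel filter, not the authors' implementation: the abstract does not specify the kernel size, the padding, or how the edge maps are fed back into the network, so the 3×3 kernels, the zero padding, and the function names `filter3x3` and `sobel_edge_magnitude` are all assumptions made for illustration.

```python
import numpy as np

# Standard 3x3 Sobel kernels (assumed; the abstract does not state the kernel size).
SOBEL_X = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float32)
SOBEL_Y = SOBEL_X.T


def filter3x3(channel, kernel):
    """Cross-correlate one 2-D channel with a 3x3 kernel, zero-padded to keep its size."""
    h, w = channel.shape
    padded = np.pad(channel, 1, mode="constant")
    out = np.zeros((h, w), dtype=np.float32)
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out


def sobel_edge_magnitude(feature_map):
    """Per-channel gradient magnitude of a (C, H, W) feature map (hypothetical helper)."""
    fm = np.asarray(feature_map, dtype=np.float32)
    gx = np.stack([filter3x3(c, SOBEL_X) for c in fm])  # horizontal derivative
    gy = np.stack([filter3x3(c, SOBEL_Y) for c in fm])  # vertical derivative
    return np.sqrt(gx ** 2 + gy ** 2)


# Toy single-channel "feature map": dark left half, bright right half.
step = np.zeros((1, 6, 6), dtype=np.float32)
step[0, :, 3:] = 1.0
edges = sobel_edge_magnitude(step)
```

On this toy input the response is 4 at the two columns flanking the intensity step and 0 in the flat interior regions, which is the kind of edge signal that could help separate a ship from a blob of similar brightness. Zero padding also produces a response along the image border, so a real pipeline would likely use a different padding mode or mask the border.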


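Table 1. Ablation experiment results

| Algorithm | $E_{\mathrm{precision}}$/% | $E_{\mathrm{recall}}$/% | $E_{\mathrm{mAP}_{50}}$/% | $E_{\mathrm{mAP}_{50-95}}$/% | $N_{\mathrm{parameters}}$ | Floating-point operation speed/($10^{9}$ s$^{-1}$) |
| --- | --- | --- | --- | --- | --- | --- |
| YOLOv5S | 65.4 | 33.6 | 38.7 | 16.4 | $7.02\times10^{6}$ | 15.8 |
| YOLOv5S-re | 66.3 | 38.4 | 44.4 | 19.2 | $4.78\times10^{6}$ | 12.9 |
| YOLOv5S-re+Crad | 70.1 | 35.9 | 45.4 | 19.9 | $4.79\times10^{6}$ | 13.0 |
| YOLOv5S-re+CBAM | 66.9 | 38.3 | 43.7 | 18.7 | $4.79\times10^{6}$ | 12.9 |
| YOLOv5S-re+GradCB | 71.7 | 36.2 | 45.9 | 19.9 | $4.80\times10^{6}$ | 13.0 |
| YOLOv5S-re+DECO | 67.6 | 37.8 | 44.7 | 19.6 | $4.79\times10^{6}$ | 14.6 |
| YOLO-WIT (proposed algorithm) | 75.1 | 35.3 | 47.7 | 21.3 | $4.80\times10^{6}$ | 14.7 |

Note: Bold indicates the best value.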
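The headline figures in the abstract can be checked against the YOLOv5S and YOLO-WIT rows of Table 1. The short calculation below is only a sanity check; it assumes that the parameter reduction is a relative change and that the mAP gains are absolute differences in percentage points, which is the reading that matches the tabulated values. The helper name `relative_reduction` is hypothetical.

```python
def relative_reduction(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


# Values copied from the YOLOv5S (baseline) and YOLO-WIT rows of Table 1.
baseline = {"params": 7.02e6, "map50": 38.7, "map50_95": 16.4}
yolo_wit = {"params": 4.80e6, "map50": 47.7, "map50_95": 21.3}

param_cut = relative_reduction(baseline["params"], yolo_wit["params"])
map50_gain = yolo_wit["map50"] - baseline["map50"]            # percentage points
map50_95_gain = yolo_wit["map50_95"] - baseline["map50_95"]   # percentage points

print(f"parameter reduction: {param_cut:.1f}%")
print(f"mAP50 gain: {map50_gain:.1f} points")
print(f"mAP50-95 gain: {map50_95_gain:.1f} points")
```

Under these assumptions the script reproduces the 31.6% parameter reduction and the 9.0 and 4.9 point gains quoted in the abstract.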

Table 2. Module ablation results

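| Algorithm or modification | $E_{\mathrm{precision}}$/% | $E_{\mathrm{recall}}$/% | $E_{\mathrm{mAP}_{50}}$/% | $E_{\mathrm{mAP}_{50-95}}$/% | $N_{\mathrm{parameters}}$ | Floating-point operation speed/($10^{9}$ s$^{-1}$) |
| --- | --- | --- | --- | --- | --- | --- |
| DECO module | 75.1 | 35.3 | 47.7 | 21.3 | $4.80\times10^{6}$ | 14.7 |
| Backbone without any connection scheme | 71.8 | 36.8 | 46.6 | 20.7 | $4.80\times10^{6}$ | 14.5 |
| Backbone with DenseNet-style connections | 69.2 | 36.9 | 44.8 | 19.7 | $4.80\times10^{6}$ | 14.5 |
| First-order derivative computation removed | 71.4 | 37.1 | 46.6 | 20.3 | $4.80\times10^{6}$ | 14.7 |
| Channel expansion during spatial downsampling | 71.0 | 35.4 | 45.7 | 19.9 | $4.79\times10^{6}$ | 13.1 |

Note: Bold indicates the best value.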

Table 3. Comparative experimental results

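| Algorithm | Backbone | $E_{\mathrm{mAP}_{50}}$/% | $E_{\mathrm{mAP}_{50-95}}$/% | $N_{\mathrm{parameters}}$ | Floating-point operation speed/($10^{9}$ s$^{-1}$) | Model size |
| --- | --- | --- | --- | --- | --- | --- |
| YOLOv5S | CSPDarkNet | 38.7 | 16.4 | $7.010\times10^{6}$ | 15.80 | $13.4\times2^{20}$ |
| YOLO-WIT (proposed algorithm) | CSPDarkNet | 47.7 | 21.3 | $4.800\times10^{6}$ | 14.70 | $9.2\times2^{20}$ |
| YOLOv3 | DarkNet-53 | 24.7 | 10.3 | $6.150\times10^{7}$ | 154.60 | $114.9\times2^{20}$ |
| YOLOv7 | | 22.7 | 9.7 | $3.649\times10^{7}$ | 103.20 | $69.7\times2^{20}$ |
| YOLOv7-Tiny | | 20.0 | 8.09 | $6.010\times10^{6}$ | 13.00 | $45.0\times2^{20}$ |
| YOLOv8n | | 23.9 | 10.8 | $3.010\times10^{6}$ | 8.10 | $5.9\times2^{20}$ |
| YOLOv8s | | 25.1 | 11.5 | $1.110\times10^{7}$ | 28.40 | $21.5\times2^{20}$ |
| YOLOv9-c | | 23.0 | 10.8 | $5.070\times10^{7}$ | 236.60 | $98.0\times2^{20}$ |
| SSD | SSDVGG | 30.0 | 9.6 | $2.388\times10^{7}$ | 137.30 | $182.2\times2^{20}$ |
| Faster-RCNN | ResNet-50 | 2.1 | 1.3 | $4.113\times10^{7}$ | 91.00 | $307.6\times2^{20}$ |
| RetinaNet | ResNet-50 | 8.5 | 2.5 | $3.613\times10^{7}$ | 81.90 | $276.8\times2^{20}$ |
| RT-DETR | ResNet-18 | 22.5 | 8.7 | $2.010\times10^{7}$ | 60.00 | $307.3\times2^{20}$ |
| DETR | ResNet-50 | 0 | 0 | $4.128\times10^{7}$ | 37.10 | $474.1\times2^{20}$ |

Note: Bold indicates the best value.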

Table 4. Generalizability verification experimental results

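| Algorithm | $E_{\mathrm{mAP}_{50}}$/% (NUAA-SIRST) | $E_{\mathrm{mAP}_{50}}$/% (NUDT-SIRST) | $E_{\mathrm{mAP}_{50}}$/% (IRSTD-1k) | $E_{\mathrm{mAP}_{50-95}}$/% (NUAA-SIRST) | $E_{\mathrm{mAP}_{50-95}}$/% (NUDT-SIRST) | $E_{\mathrm{mAP}_{50-95}}$/% (IRSTD-1k) | $N_{\mathrm{parameters}}$ | Floating-point operation speed/($10^{9}$ s$^{-1}$) | Model size |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| YOLOv5S | 79.8 | 96.2 | 71.9 | 28.2 | 74.1 | 30.1 | $7.01\times10^{6}$ | 15.80 | $13.4\times2^{20}$ |
| YOLO-WIT (proposed algorithm) | 87.3 | 98.3 | 72.6 | 32.4 | 76.4 | 32.5 | $4.80\times10^{6}$ | 14.70 | $9.2\times2^{20}$ |
| YOLOv8n | 77.1 | 97.3 | 71.3 | 30.2 | 75.6 | 31.2 | $3.01\times10^{6}$ | 8.10 | $5.9\times2^{20}$ |
| YOLOv8S | 83.0 | 97.8 | 72.3 | 31.4 | 75.9 | 31.8 | $1.11\times10^{7}$ | 28.40 | $21.5\times2^{20}$ |
| YOLOv9-c | 73.8 | 98.7 | 70.2 | 27.2 | 76.5 | 31.9 | $5.07\times10^{7}$ | 236.60 | $98.0\times2^{20}$ |
| RT-DETR | 72.3 | 97.3 | 71.1 | 31.7 | 71.7 | 31.1 | $2.01\times10^{7}$ | 60.00 | $307.3\times2^{20}$ |

Note: Bold indicates the best value.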

