
Abstract:

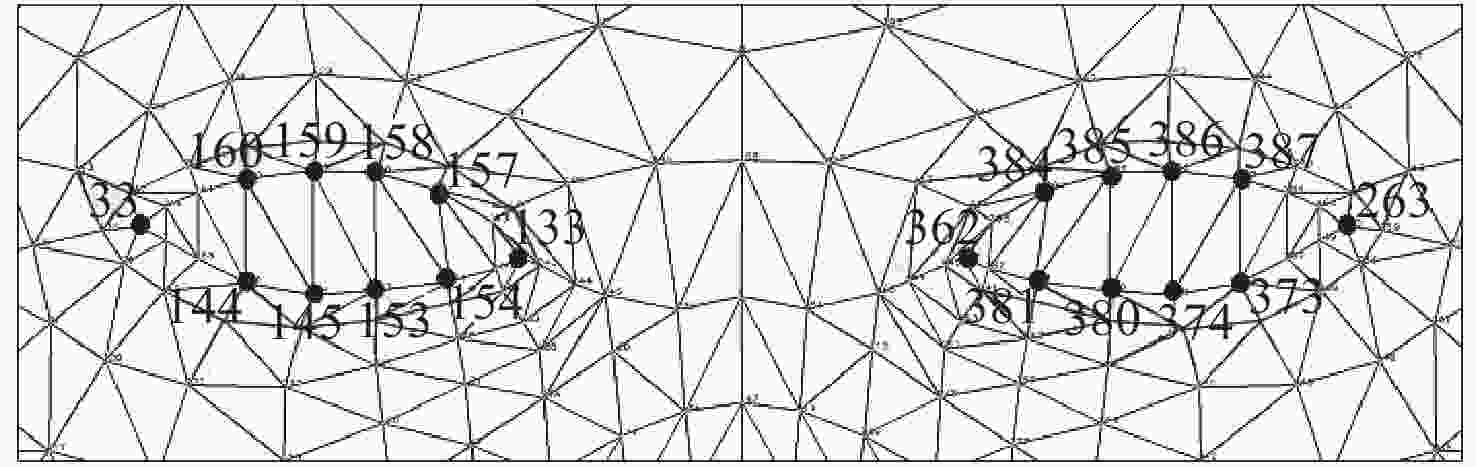

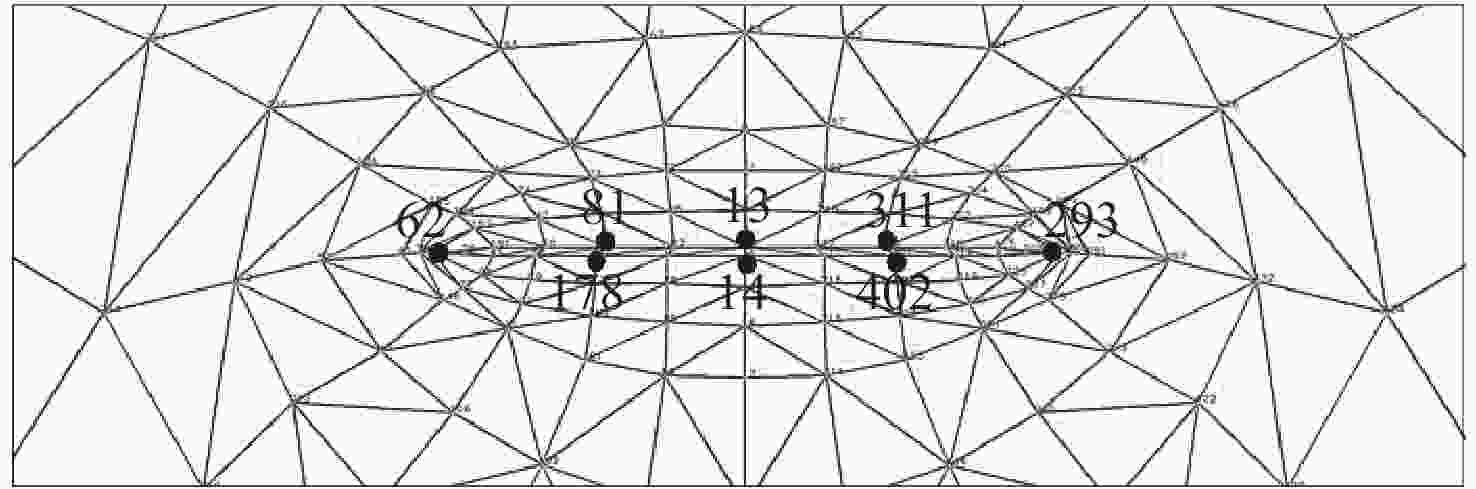

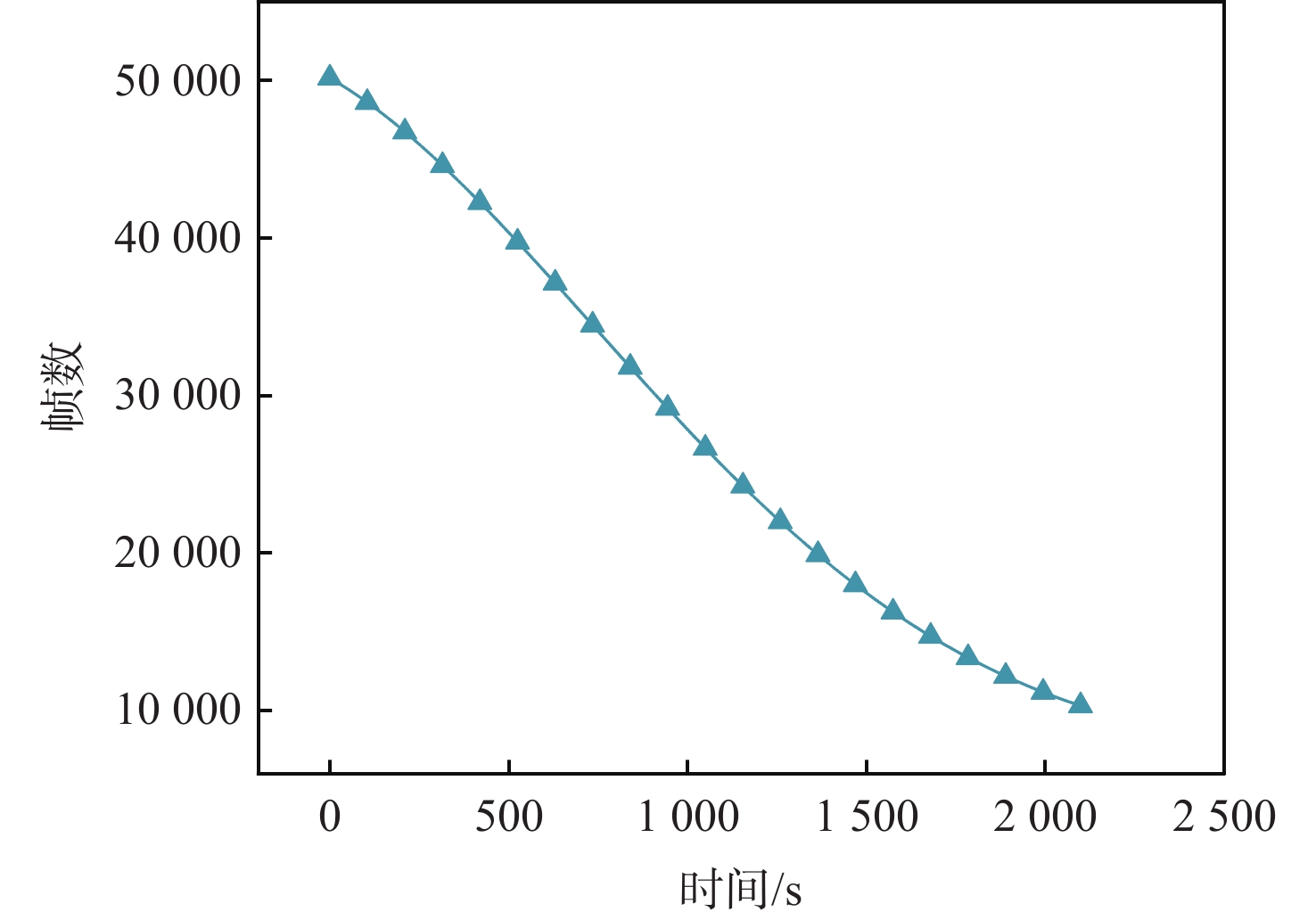

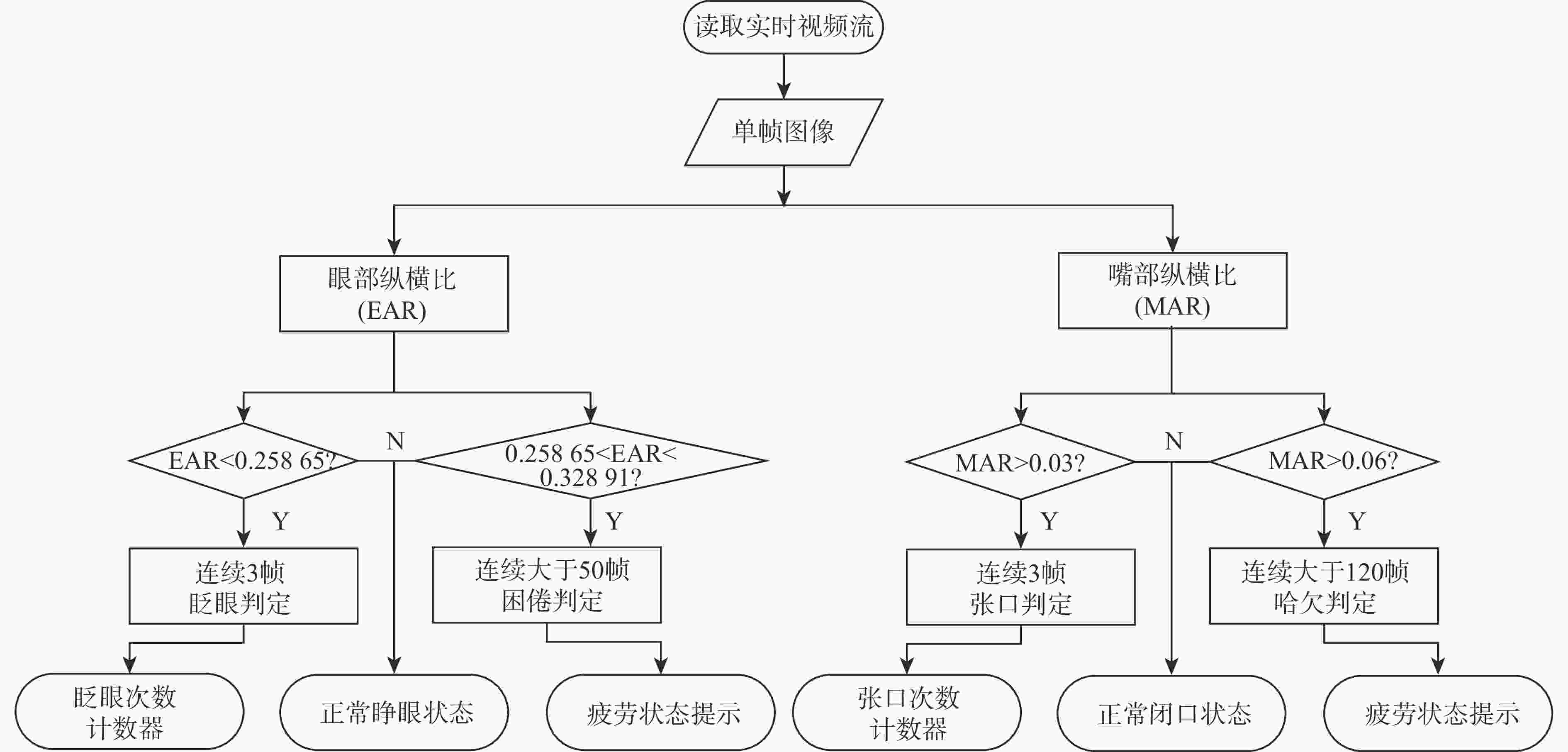

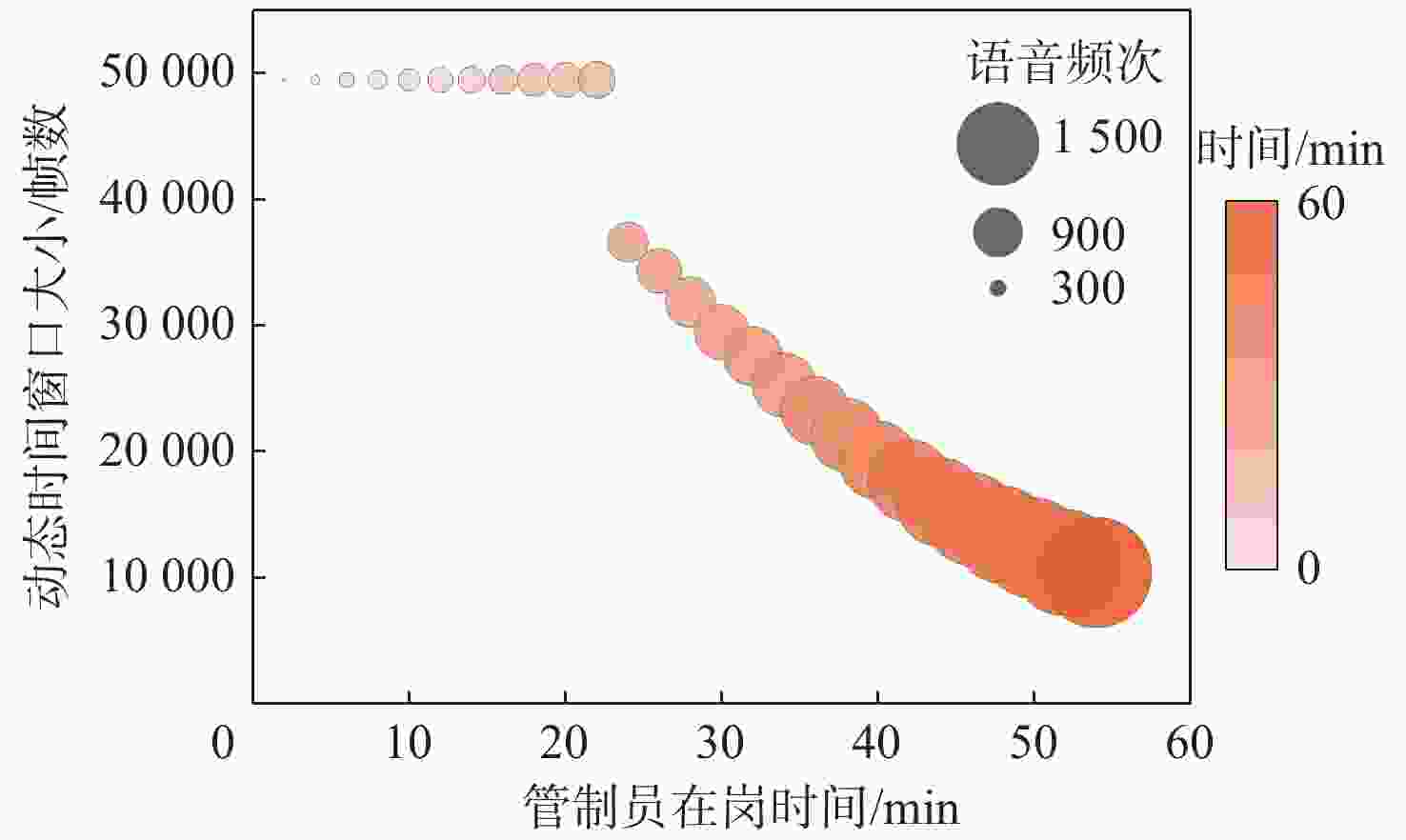

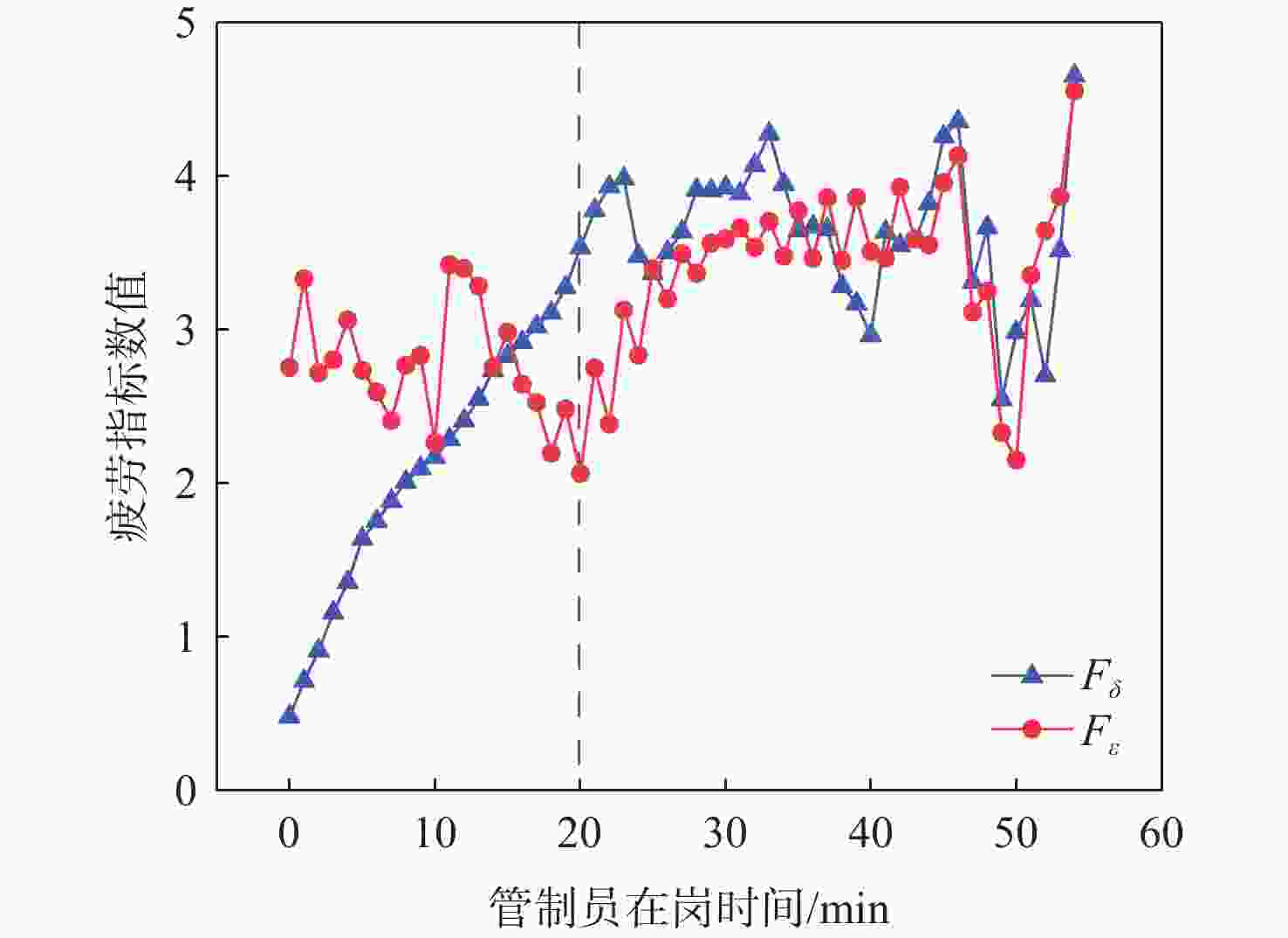

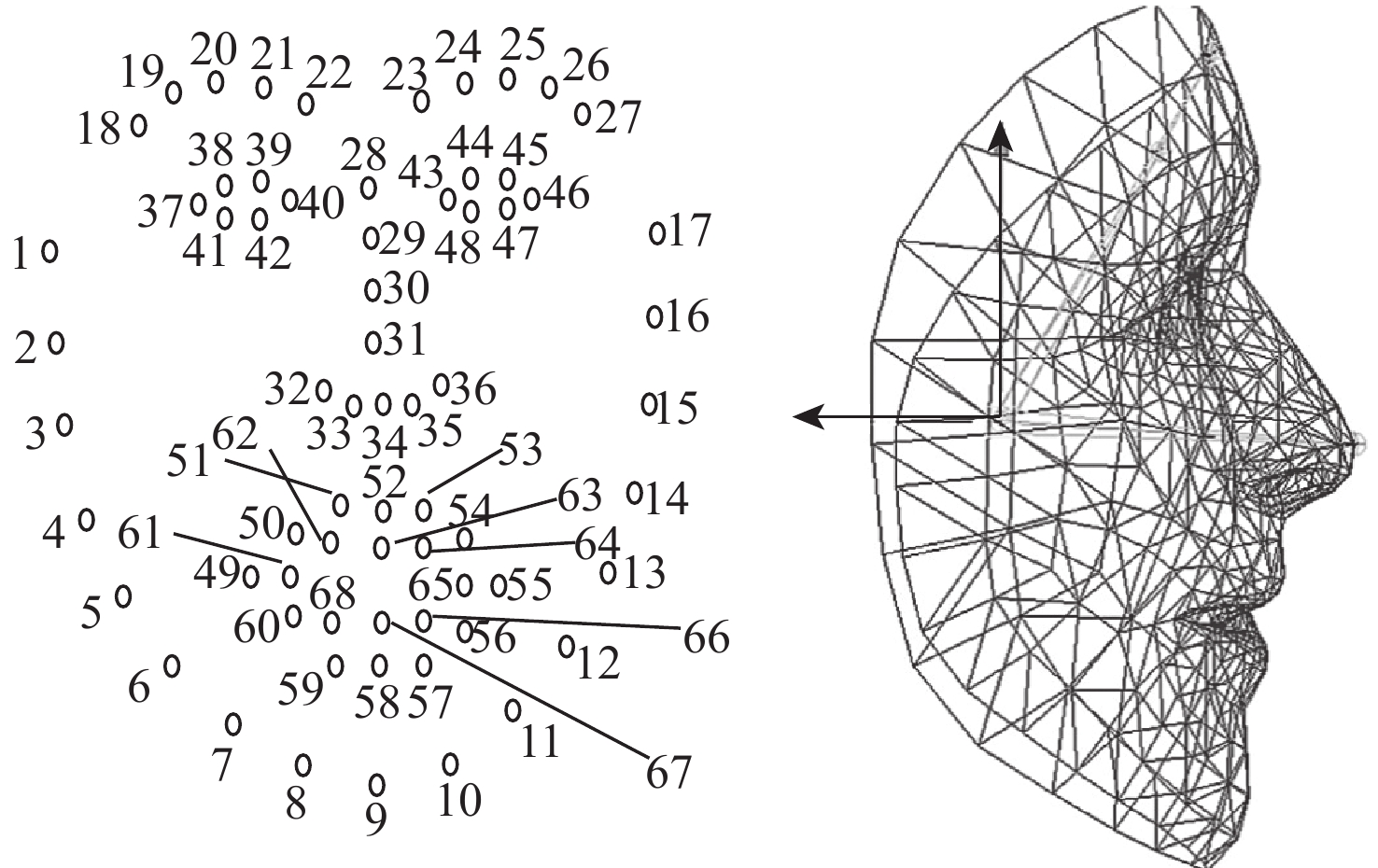

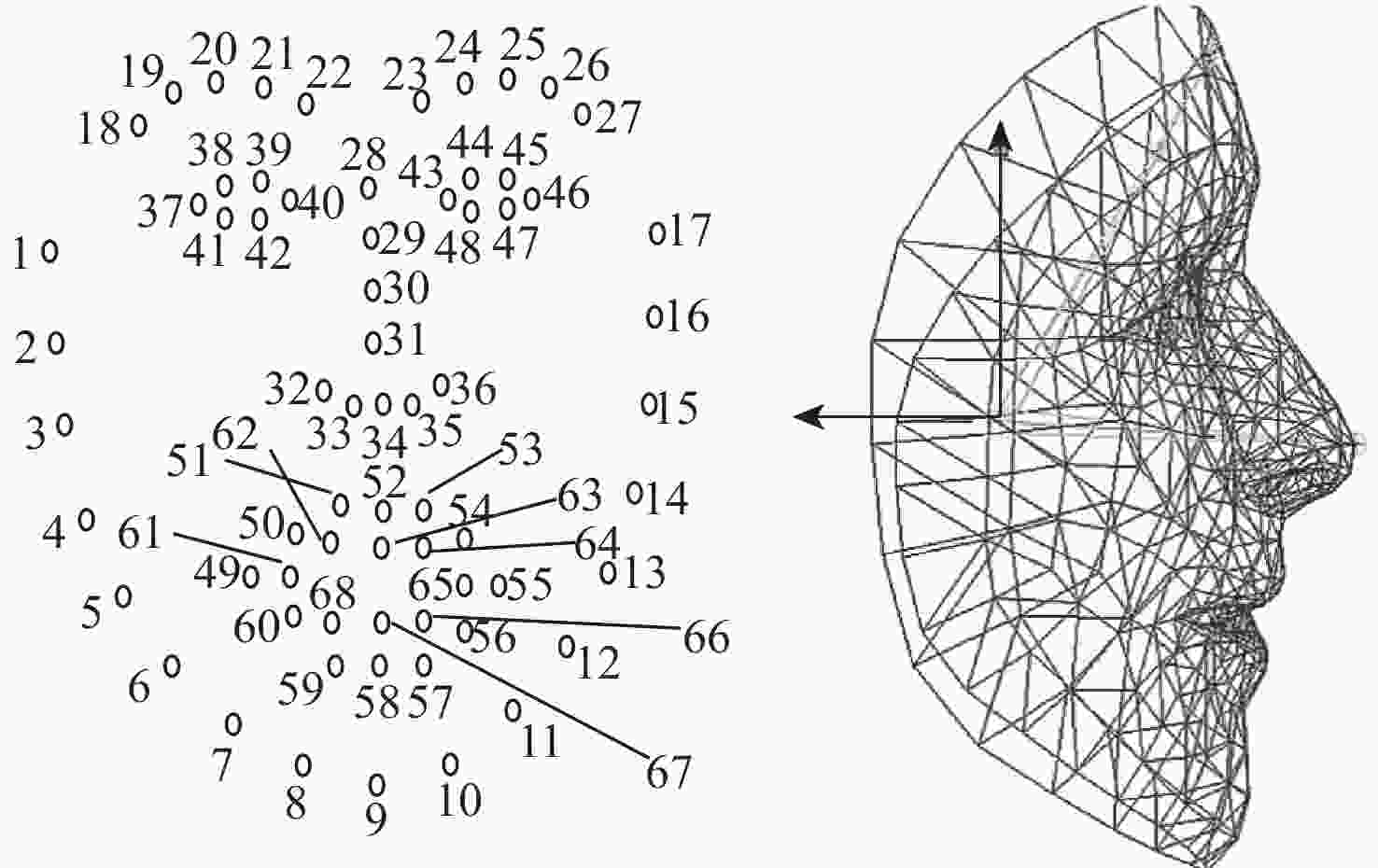

针对现有通过管制员面部信息监测疲劳,极少融合管制真实场景、算法鲁棒性低等特点,提出一种考虑管制员工作特性的疲劳实时判别算法。采用Attention Mesh算法获取面部468点的三维坐标信息,并使用特征匹配法逐样本对眼部与嘴部纵横比阈值进行标定;引入管制员在岗时间、实时陆空通话负荷及疲劳事件发生次数3个指标,将三者通过指数衰减函数动态映射至疲劳监测时间窗口,并通过计算动态衰减时间窗内眨眼频次占比,得出疲劳趋势指标。对某管制单位管制室30位成熟放单管制员班后管制测试的脑电与面部视频数据进行处理,并对通过面部数据得到的疲劳指标

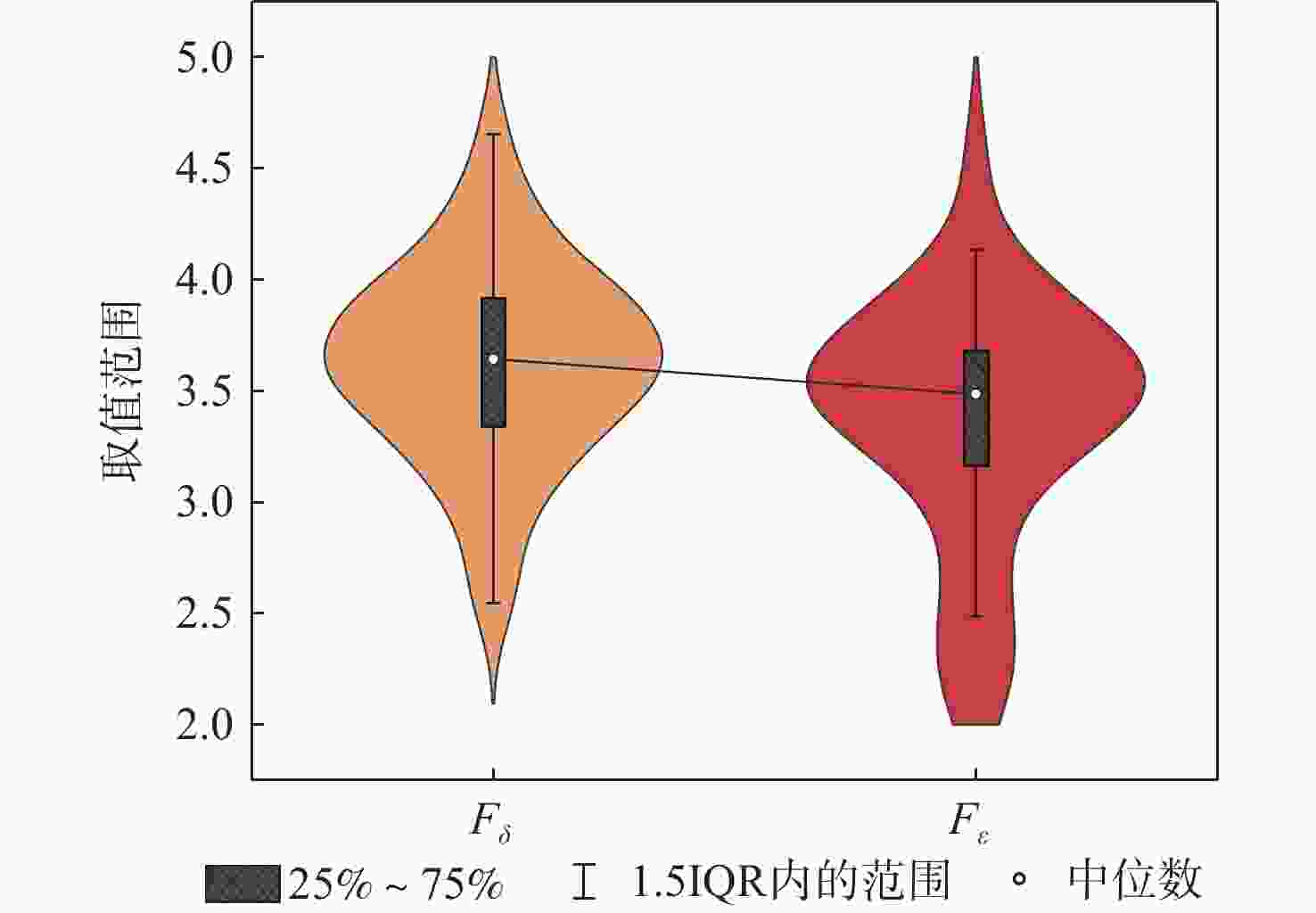

F δ 与脑电疲劳指标F ε 进行时间维度上的相关性分析,结果表明:在30个被试样本的双变量交叉相关性分析结果中,Pearson相关性系数整体介于0.462~0.785之间,Sig.双尾显著性检验均位于0.01级别,相关性显著,验证了所提算法的有效性与可靠性。Abstract:A real-time fatigue discrimination algorithm that takes into account the work characteristics of controllers is proposed in order to address the shortcomings of the current fatigue detection through facial information of controllers, such as the algorithm’s low robustness and infrequent integration of the actual scene of control. Firstly, the Attention Mesh algorithm is used to obtain the 3D coordinate information of 468 points on the face, and the thresholds of eye and mouth aspect ratios are calibrated sample by sample using the feature matching method. Secondly, three indicators are introduced, namely, the controller’s on-duty time, the real-time land and air call load, and the number of fatigue events, and these three indicators are dynamically mapped to the fatigue detection window through the exponential decay function and the fatigue frequency ratio of blinks within the dynamic decay time window is calculated through the calculation of the fatigue frequency ratio of blinks within the dynamic decay time window. The fatigue trend indicator is derived by calculating the percentage of blinking frequency within the dynamic decay time window. Finally, the EEG and facial video data of the post-shift control test of 30 mature release order controllers in the control room of a control unit are processed, and the fatigue indicator

F δ , obtained from the facial data, is correlated with the EEG fatigue indicatorF ε in the time dimension. The findings demonstrated that the validity and reliability of the suggested algorithms were confirmed, and the overall Pearson correlation coefficients in the bivariate cross-correlation analysis results of the 30 subject samples ranged from 0.462 to 0.785. The Sig. two-tailed significance tests were found at the 0.01 level, indicating a significant correlation. -
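The abstract names the building blocks but not their formulas, so the following Python sketch is only an illustration under stated assumptions. The six-point aspect ratio is the commonly used eye aspect ratio form; the function `decayed_window`, its coefficients `k`, the floor `floor_s`, and the closed-frame fraction used as a trend value are all hypothetical and are not taken from the paper.

```python
import math

def aspect_ratio(p1, p2, p3, p4, p5, p6):
    """Six-point aspect ratio on 3D landmarks (x, y, z).

    p1 and p4 are the horizontal corners; (p2, p6) and (p3, p5)
    are the two vertical landmark pairs.
    """
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = 2.0 * math.dist(p1, p4)
    return vertical / horizontal

def decayed_window(base_s, duty_h, load, events,
                   k=(0.05, 0.5, 0.1), floor_s=10.0):
    """Hypothetical mapping of the three indicators to a window length.

    The window shrinks exponentially as time on duty (hours),
    communication load (0..1) and fatigue-event count grow.
    The coefficients in k are illustrative assumptions.
    """
    exponent = k[0] * duty_h + k[1] * load + k[2] * events
    return max(floor_s, base_s * math.exp(-exponent))

def closed_fraction(ratio_series, fps, window_s, closed_threshold):
    """Fraction of frames in the most recent window below the threshold.

    Assumption: this closed-frame share stands in for the paper's
    blink-frequency proportion, whose exact definition is not given.
    """
    n = max(1, int(round(window_s * fps)))
    recent = ratio_series[-n:]
    if not recent:
        return 0.0
    return sum(1 for r in recent if r < closed_threshold) / len(recent)

if __name__ == "__main__":
    window = decayed_window(base_s=60.0, duty_h=2.0, load=0.5, events=3)
    series = [0.30] * 8 + [0.05] * 2
    print(window, closed_fraction(series, fps=1, window_s=10, closed_threshold=0.1))
```

A shorter window under high load makes the trend value react faster to recent closed-eye frames, which is one plausible reading of "dynamic mapping"; the paper itself may weight the indicators differently.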

Table 1. Calibration results for eye and mouth state aspect ratios

| Sample | Mouth (M), open | Mouth (M), closed | Eye (E), open | Eye (E), closed | Eye (E), half-open |
| --- | --- | --- | --- | --- | --- |
| 1 | 0.08343 | 0.00857 | 0.32891 | 0.09235 | 0.25865 |
| 2 | 0.06012 | 0.00936 | 0.29643 | 0.16226 | 0.27624 |
| 3 | 0.07935 | 0.00839 | 0.37525 | 0.07734 | 0.24603 |
| 4 | 0.04742 | 0.00235 | 0.41882 | 0.16548 | 0.25076 |
| 5 | 0.04238 | 0.00688 | 0.40113 | 0.10396 | 0.24975 |
| $\vdots$ | $\vdots$ | $\vdots$ | $\vdots$ | $\vdots$ | $\vdots$ |
| 26 | 0.04287 | 0.00448 | 0.40654 | 0.13943 | 0.22681 |
| 27 | 0.06604 | 0.00697 | 0.45101 | 0.16159 | 0.27371 |
| 28 | 0.04923 | 0.00191 | 0.41533 | 0.12762 | 0.22396 |
| 29 | 0.10738 | 0.00337 | 0.40861 | 0.10779 | 0.24397 |
| 30 | 0.04562 | 0.00496 | 0.36213 | 0.08365 | 0.19603 |
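The eye columns of Table 1 show why thresholds are set per sample: the closed-eye ratio ranges from about 0.08 to about 0.17 across the listed subjects. The sketch below uses a simple midpoint rule between the closed-eye and half-open values. This rule is a stand-in chosen for illustration; it is not the feature matching method the paper uses, and the name `closed_eye_threshold` is hypothetical.

```python
# Eye aspect ratios for samples 1-5 as read from Table 1:
# sample id -> (open, closed, half_open)
EYE_RATIOS = {
    1: (0.32891, 0.09235, 0.25865),
    2: (0.29643, 0.16226, 0.27624),
    3: (0.37525, 0.07734, 0.24603),
    4: (0.41882, 0.16548, 0.25076),
    5: (0.40113, 0.10396, 0.24975),
}

def closed_eye_threshold(closed, half_open):
    """Midpoint between the closed and half-open ratios.

    Illustrative assumption only; the paper calibrates thresholds
    with feature matching, whose details are not given here.
    """
    if closed >= half_open:
        raise ValueError("closed ratio must be below half-open ratio")
    return (closed + half_open) / 2.0

# One threshold per subject instead of a single global cut-off.
thresholds = {
    sample_id: closed_eye_threshold(closed, half_open)
    for sample_id, (_open, closed, half_open) in EYE_RATIOS.items()
}

if __name__ == "__main__":
    for sample_id, value in sorted(thresholds.items()):
        print(sample_id, round(value, 5))
```

Whatever rule is used, a single global threshold would sit close to the closed-eye value of some subjects and far from that of others, which is the robustness problem the per-sample calibration targets.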

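Table 2. Results of correlation analysis between $F_{\delta}$ and $F_{\varepsilon}$

| Subject | Number of cases | Pearson correlation coefficient | Sig. (two-tailed) |
| --- | --- | --- | --- |
| 1 | 36 | 0.462** | $5\times10^{-3}$ |
| 2 | 32 | 0.672** | $2.6\times10^{-5}$ |
| 3 | 40 | 0.591** | $6\times10^{-4}$ |
| 4 | 31 | 0.556** | $1\times10^{-3}$ |
| 5 | 29 | 0.522** | $4\times10^{-3}$ |
| 6 | 34 | 0.712** | $2\times10^{-6}$ |
| 7 | 26 | 0.506** | $8\times10^{-3}$ |
| 8 | 37 | 0.785** | $8.6\times10^{-9}$ |
| 9 | 25 | 0.646** | $4.8\times10^{-4}$ |
| 10 | 27 | 0.536** | $4\times10^{-3}$ |
| 11 | 27 | 0.724** | $2\times10^{-5}$ |
| 12 | 30 | 0.629** | $1.9\times10^{-4}$ |
| 13 | 24 | 0.653** | $5.4\times10^{-4}$ |
| 14 | 26 | 0.561** | $3\times10^{-3}$ |
| 15 | 24 | 0.517** | $1\times10^{-2}$ |
| 16 | 33 | 0.731** | $1\times10^{-6}$ |
| 17 | 28 | 0.623** | $3.9\times10^{-4}$ |
| 18 | 30 | 0.642** | $1.4\times10^{-4}$ |
| 19 | 25 | 0.583** | $2\times10^{-3}$ |
| 20 | 27 | 0.679** | $9.8\times10^{-5}$ |
| 21 | 36 | 0.482** | $3\times10^{-3}$ |
| 22 | 33 | 0.464** | $7\times10^{-3}$ |
| 23 | 29 | 0.651** | $1.3\times10^{-4}$ |
| 24 | 27 | 0.589** | $1\times10^{-3}$ |
| 25 | 35 | 0.703** | $2\times10^{-6}$ |
| 26 | 30 | 0.728** | $5\times10^{-6}$ |
| 27 | 25 | 0.622** | $8.9\times10^{-4}$ |
| 28 | 37 | 0.730** | $2.9\times10^{-7}$ |
| 29 | 23 | 0.561** | $5\times10^{-3}$ |
| 30 | 29 | 0.601** | $5.7\times10^{-4}$ |

Note: ** indicates that the correlation is significant at the 0.01 level (two-tailed).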
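For readers who want to reproduce the kind of figures reported in Table 2, a minimal Python sketch of the Pearson coefficient and its associated t statistic follows. It assumes the significance values come from the standard t-test for a correlation coefficient with n - 2 degrees of freedom, which the source does not state explicitly; the function names are illustrative.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two sequences of equal length >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def t_statistic(r, n):
    """t statistic for H0: rho = 0, with n - 2 degrees of freedom."""
    return r * math.sqrt((n - 2) / (1.0 - r * r))

if __name__ == "__main__":
    # Subject 1 in Table 2: r = 0.462 over 36 cases.
    print(round(t_statistic(0.462, 36), 2))
```

For subject 1 the statistic is about 3.04 with 34 degrees of freedom, above the two-tailed 0.01 critical value of roughly 2.73, which agrees with the significance marking in the table.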

Table 3. Results of the algorithm comparison

| Algorithm | Mean Pearson correlation coefficient |
| --- | --- |
| FT-Only_2D | 0.3529 |
| FT-Only_3D | 0.3816 |
| Proposed algorithm (2D) | 0.5992 |
| Proposed algorithm (3D) | 0.6154 |
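The 3D figure in Table 3 can be checked against Table 2: averaging the 30 per-subject coefficients gives about 0.6154. The short Python sketch below performs that check and computes the relative gain over the FT-Only_3D baseline; the helper `relative_gain` is an illustrative name, and the gain is plain arithmetic on the tabulated values rather than a result reported in the source.

```python
# Per-subject Pearson coefficients copied from Table 2 (subjects 1-30).
PEARSON_3D = [
    0.462, 0.672, 0.591, 0.556, 0.522, 0.712, 0.506, 0.785, 0.646, 0.536,
    0.724, 0.629, 0.653, 0.561, 0.517, 0.731, 0.623, 0.642, 0.583, 0.679,
    0.482, 0.464, 0.651, 0.589, 0.703, 0.728, 0.622, 0.730, 0.561, 0.601,
]

# Mean coefficients copied from Table 3.
TABLE_3 = {
    "FT-Only_2D": 0.3529,
    "FT-Only_3D": 0.3816,
    "proposed_2D": 0.5992,
    "proposed_3D": 0.6154,
}

def relative_gain(new, old):
    """Relative improvement of `new` over `old` as a fraction."""
    return (new - old) / old

mean_3d = sum(PEARSON_3D) / len(PEARSON_3D)
gain_vs_ft_3d = relative_gain(TABLE_3["proposed_3D"], TABLE_3["FT-Only_3D"])

if __name__ == "__main__":
    print(round(mean_3d, 4), round(gain_vs_ft_3d, 3))
```

On these numbers the proposed 3D variant improves the mean correlation by roughly 61% relative to FT-Only_3D, while the step from the 2D to the 3D variant adds only about 0.016 in absolute terms.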

