Dual discriminator fusion of infrared and visible light images for visual saliency enhancement


摘要:

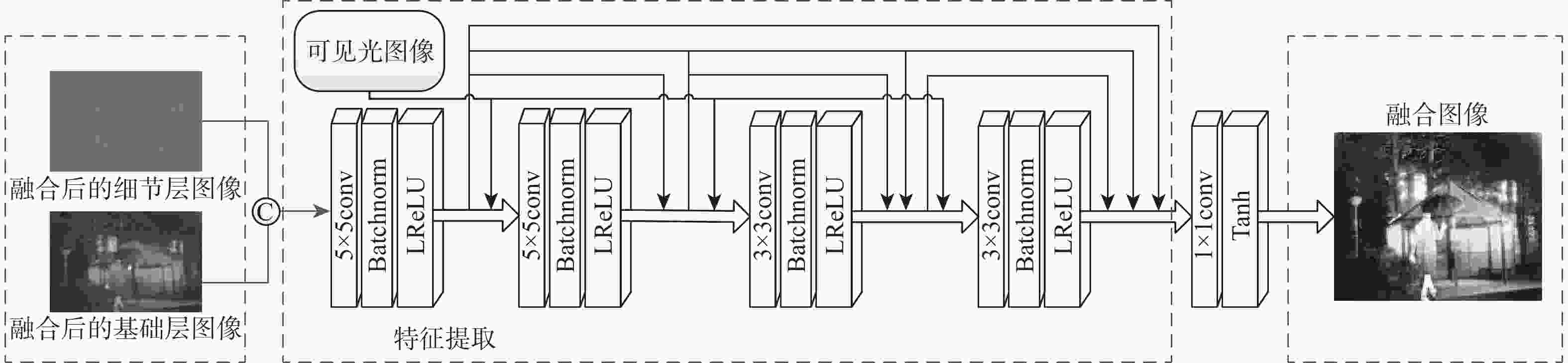

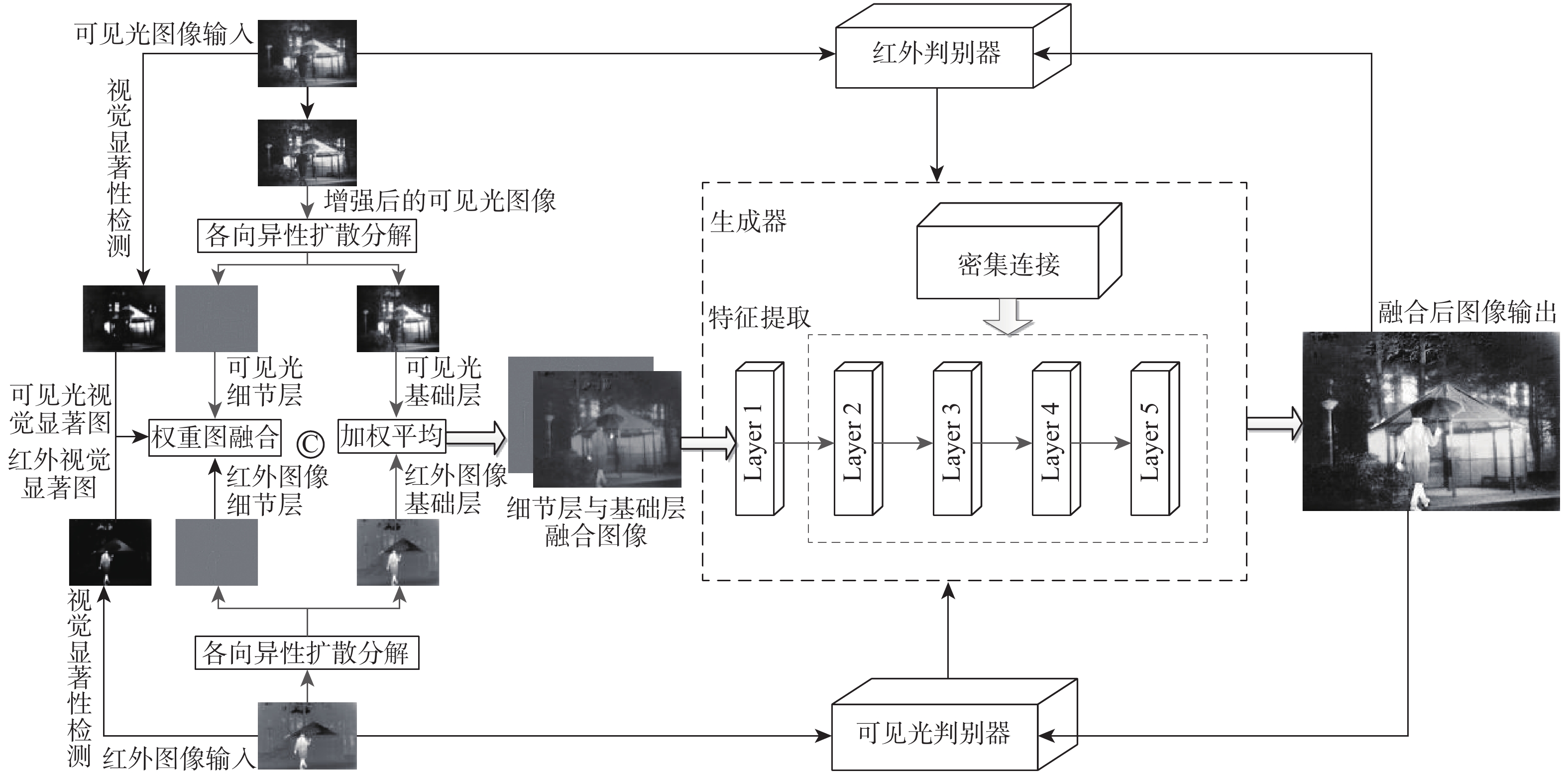

针对红外与可见光图像融合中边缘不清晰、细节缺失等问题,提出了一种视觉显著性增强的双鉴别器融合方法。采用局部自适应对可见光图像进行增强,并采用各向异性扩散对红外与可见光图像分解;通过视觉显著性检测对分解后的细节层图像和基础层图像进行视觉增强;设计密集连接DenseNet生成器模型对视觉增强后图像进行特征学习;通过与双鉴别器博弈对抗得到融合结果。在公开数据集中与10种融合方法进行对比,实验结果表明:所提方法具有更清晰的细节信息,在主客观评估上均优于对比方法,客观评价指标较FusionGAN方法在信息熵、空间频率、结构相似性和标准偏差上分别提高了7.4%、58.8%、25.5%和35.7%。

-

关键词:

- 红外与可见光图像融合 /

- 视觉显著性增强 /

- 各向异性扩散 /

- 双鉴别器 /

- 生成对抗网络

Abstract:In order to solve the problem of unclear edges and missing details in infrared and visible light image fusion, a saliency enhanced dual discriminator generation adversarial infrared and visible light image fusion method is proposed. First, infrared and visible light images are broken down using anisotropic diffusion, while visible light images are improved using local adaptation. Then, visual saliency detection is used to visually enhance the decomposed detail layer image and the base layer image. Next, a dense connected DenseNet generator model is designed to perform feature learning on visually enhanced images. Finally, the fusion result is obtained by competing with the dual discriminator game. Experimental results demonstrate that the suggested approach has more precise information and performs better than the comparison algorithm in both subjective and objective assessments when compared to ten fusion techniques in a public dataset. Compared with the FusionGAN algorithm, the proposed method has improved objective evaluation indicators such as information entropy, spatial frequency, structural similarity, and standard deviation by 7.4%, 58.8%, 25.5%, and 35.7%, respectively.
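The decomposition step named in the abstract can be illustrated with a minimal sketch. It assumes the classic Perona-Malik form of anisotropic diffusion with an exponential conduction function, and takes the base layer as the diffused image and the detail layer as the residual. The function names and the parameters `n_iter`, `kappa`, and `step` are illustrative assumptions, not the authors' settings.

```python
import numpy as np

def anisotropic_diffusion(img, n_iter=10, kappa=30.0, step=0.2):
    """Perona-Malik diffusion (assumed variant): smooths flat regions, keeps strong edges."""
    u = np.asarray(img, dtype=np.float64).copy()
    for _ in range(n_iter):
        # Differences to the four neighbours; edge padding gives zero flux at the borders.
        p = np.pad(u, 1, mode="edge")
        diffs = (
            p[:-2, 1:-1] - u,   # north
            p[2:, 1:-1] - u,    # south
            p[1:-1, :-2] - u,   # west
            p[1:-1, 2:] - u,    # east
        )
        # Conduction coefficient exp(-(d/kappa)^2) is close to 0 across large gradients.
        flux = sum(np.exp(-(d / kappa) ** 2) * d for d in diffs)
        u += step * flux        # step <= 0.25 keeps the 4-neighbour scheme stable
    return u

def decompose(img, **kwargs):
    """Split an image into a smooth base layer and a residual detail layer."""
    base = anisotropic_diffusion(img, **kwargs)
    detail = np.asarray(img, dtype=np.float64) - base
    return base, detail

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    step_edge = np.zeros((32, 32))
    step_edge[:, 16:] = 200.0                      # synthetic vertical edge
    noisy = step_edge + rng.normal(0.0, 5.0, step_edge.shape)
    base, detail = decompose(noisy, n_iter=15)
    print("noise std before/after:", noisy[:, :16].std(), base[:, :16].std())
```

By construction the two layers sum back to the input, so either layer can be processed separately (for example by saliency weighting) without losing information before recombination.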


Table 1. Objective comparison values on TNO

| Method | Information entropy | Mutual information | SSIM | Standard deviation | Spatial frequency | Visual fidelity |
| --- | --- | --- | --- | --- | --- | --- |
| ADF[10] | 7.1617 | 0.6883 | 0.5030 | 38.4506 | 0.0318 | 0.7075 |
| VSMWLS[11] | 7.1748 | 0.6590 | 0.6248 | 40.0635 | 0.0323 | 0.7401 |
| GFCE[13] | 7.1613 | 0.6883 | 0.5030 | 36.4036 | 0.0436 | 0.7241 |
| MGF[12] | 7.2553 | 0.6932 | 0.6123 | 41.847 | 0.0311 | 0.4924 |
| BEMD[23] | 7.3241 | 0.7623 | 0.6538 | 48.0324 | 0.03539 | 0.5624 |
| GANMcC[20] | 7.3960 | 0.7725 | 0.6053 | 42.5729 | 0.0395 | 0.5853 |
| DDcGAN[21] | 6.6324 | 0.5674 | 0.4423 | 31.4367 | 0.0256 | 0.4640 |
| GAN-FM[22] | 7.6654 | 0.4525 | 0.4365 | 52.5466 | 0.0383 | 0.7206 |
| DenseFuse[18] | 7.1360 | 0.6770 | 0.5474 | 35.75 | 0.0309 | 0.6521 |
| FusionGAN[19] | 7.3374 | 0.6424 | 0.5370 | 40.3546 | 0.0311 | 0.5305 |
| Proposed | 7.8787 | 0.6938 | 0.6740 | 54.7573 | 0.0494 | 0.7358 |

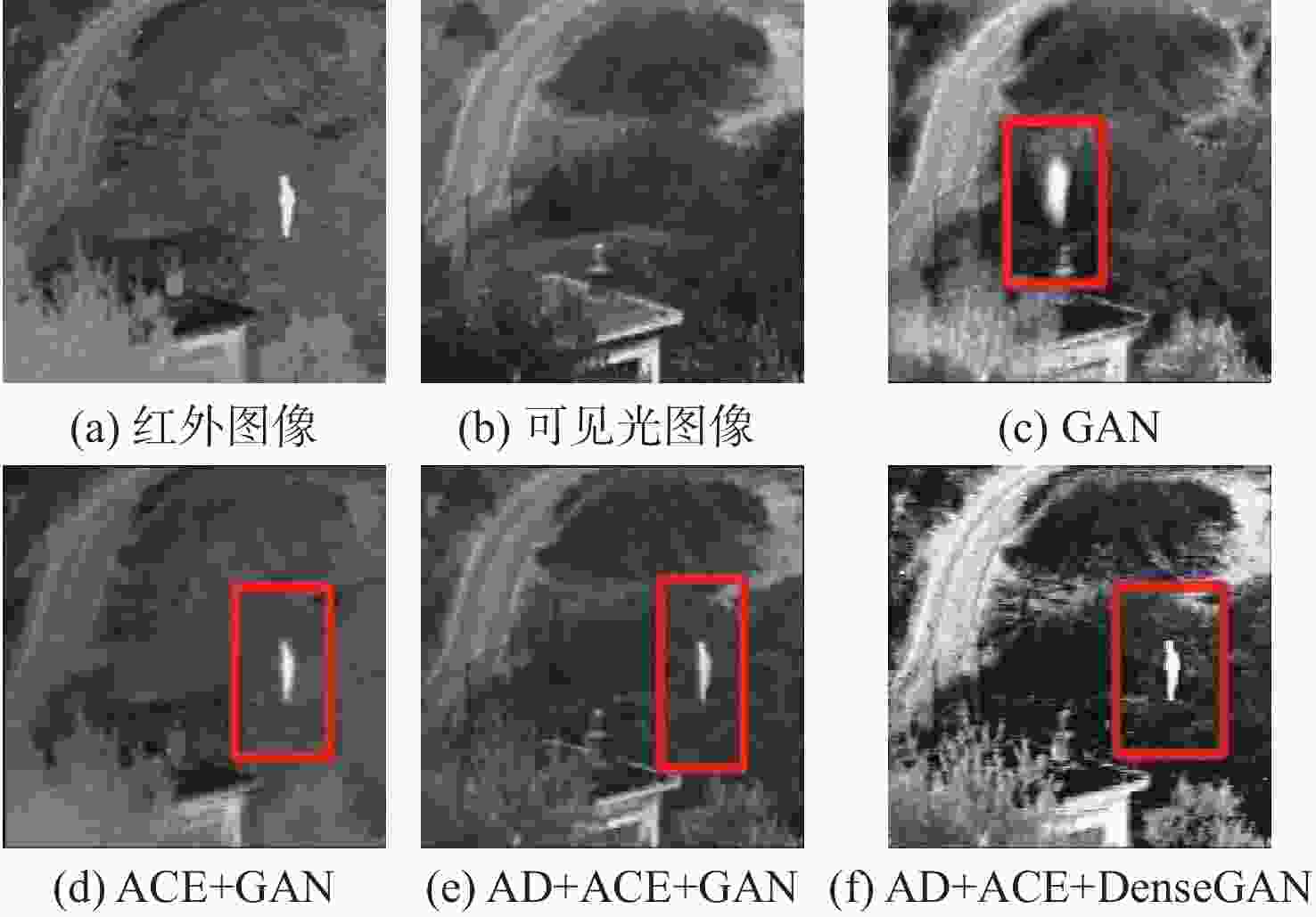

Table 2. Objective indicators of the ablation experiment

| Module | SSIM | Average gradient |
| --- | --- | --- |
| GAN | 0.6944 | 2.084 |
| ACE+GAN | 0.7572 | 2.719 |
| AD+ACE+GAN | 0.8140 | 3.104 |
| AD+ACE+DenseGAN | 0.8386 | 3.592 |
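The percentage gains over FusionGAN quoted in the abstract can be re-derived from the FusionGAN and proposed-method rows of Table 1. The short check below is only a sketch: the helper name `relative_gain` is an assumption, and the numbers are copied from the tables as printed.

```python
def relative_gain(new: float, old: float) -> float:
    """Percentage improvement of `new` over `old`, rounded to one decimal place."""
    return round((new - old) / old * 100.0, 1)

# Values copied from Table 1: metric -> (FusionGAN, proposed method).
table1 = {
    "information entropy": (7.3374, 7.8787),
    "spatial frequency": (0.0311, 0.0494),
    "SSIM": (0.5370, 0.6740),
    "standard deviation": (40.3546, 54.7573),
}

gains = {name: relative_gain(new, old) for name, (old, new) in table1.items()}

for name, pct in gains.items():
    print(f"{name}: +{pct}%")
# information entropy: +7.4%
# spatial frequency: +58.8%
# SSIM: +25.5%
# standard deviation: +35.7%

# The same helper applies to Table 2, e.g. SSIM of the full model versus plain GAN.
ablation_ssim_gain = relative_gain(0.8386, 0.6944)
```

The four printed values match the figures stated in the abstract, which suggests those figures were computed as simple relative differences on the TNO results.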

[1] CHEN Y, WANG Z, LU C T, et al. Detection of railway object intrusion under infrared low light based on multi-feature and attention enhancement network[J]. Journal of Beijing University of Aeronautics and Astronautics, 2023, 49(8): 1884-1894 (in Chinese).

[2] GONZÁLEZ J R, DAMIÃO C, MORAN M, et al. A computational study on the role of parameters for identification of thyroid nodules by infrared images (and comparison with real data)[J]. Sensors, 2021, 21(13): 4459.

[3] MA J Y, MA Y, LI C. Infrared and visible image fusion methods and applications: a survey[J]. Information Fusion, 2019, 45: 153-178.

[4] TU Z Z, LI Z, LI C L, et al. Multi-interactive dual-decoder for RGB-thermal salient object detection[J]. IEEE Transactions on Image Processing, 2021, 30: 5678-5691.

[5] XIAO W X, ZHANG Y F, WANG H B, et al. Heterogeneous knowledge distillation for simultaneous infrared-visible image fusion and super-resolution[J]. IEEE Transactions on Instrumentation and Measurement, 2022, 71: 5004015.

[6] DINH P H. Combining Gabor energy with equilibrium optimizer algorithm for multi-modality medical image fusion[J]. Biomedical Signal Processing and Control, 2021, 68: 102696.

[7] CHEN Y, ZHANG J J, WANG Z. Infrared and visible image fusion based on multi-scale dense attention connection network[J]. Optics and Precision Engineering, 2022, 30(18): 2253-2266 (in Chinese).

[8] WANG Z S, XU J W, JIANG X L, et al. Infrared and visible image fusion via hybrid decomposition of NSCT and morphological sequential toggle operator[J]. Optik, 2020, 201: 163497.

[9] LOU X C, FENG X. Infrared and visible image fusion in latent low rank representation framework based on convolution neural network and guided filtering[J]. Acta Photonica Sinica, 2021, 50(3): 0310004 (in Chinese).

[10] VASU G T, PALANISAMY P. Multi-focus image fusion using anisotropic diffusion filter[J]. Soft Computing, 2022, 26(24): 14029-14040.

[11] MA J L, ZHOU Z Q, WANG B, et al. Infrared and visible image fusion based on visual saliency map and weighted least square optimization[J]. Infrared Physics & Technology, 2017, 82: 8-17.

[12] BAVIRISETTI D P, XIAO G, ZHAO J H, et al. Multi-scale guided image and video fusion: a fast and efficient approach[J]. Circuits, Systems, and Signal Processing, 2019, 38(12): 5576-5605.

[13] ZHOU Z Q, DONG M J, XIE X Z, et al. Fusion of infrared and visible images for night-vision context enhancement[J]. Applied Optics, 2016, 55(23): 6480-6490.

[14] SUN C Q, ZHANG C, XIONG N X. Infrared and visible image fusion techniques based on deep learning: a review[J]. Electronics, 2020, 9(12): 2162.

[15] LI J, ZHU J M, LI C, et al. CGTF: convolution-guided Transformer for infrared and visible image fusion[J]. IEEE Transactions on Instrumentation and Measurement, 2022, 71: 5012314.

[16] WANG Z S, WANG J Y, WU Y Y, et al. UNFusion: a unified multi-scale densely connected network for infrared and visible image fusion[J]. IEEE Transactions on Circuits and Systems for Video Technology, 2022, 32(6): 3360-3374.

[17] LI H, WU X J, KITTLER J. RFN-Nest: an end-to-end residual fusion network for infrared and visible images[J]. Information Fusion, 2021, 73: 72-86.

[18] LI H, WU X J. DenseFuse: a fusion approach to infrared and visible images[J]. IEEE Transactions on Image Processing, 2019, 28(5): 2614-2623.

[19] MA J Y, YU W, LIANG P W, et al. FusionGAN: a generative adversarial network for infrared and visible image fusion[J]. Information Fusion, 2019, 48: 11-26.

[20] MA J Y, ZHANG H, SHAO Z F, et al. GANMcC: a generative adversarial network with multiclassification constraints for infrared and visible image fusion[J]. IEEE Transactions on Instrumentation and Measurement, 2020, 70: 5005014.

[21] MA J Y, XU H, JIANG J J, et al. DDcGAN: a dual-discriminator conditional generative adversarial network for multi-resolution image fusion[J]. IEEE Transactions on Image Processing, 2020, 29: 4980-4995.

[22] ZHANG H, YUAN J T, TIAN X, et al. GAN-FM: infrared and visible image fusion using GAN with full-scale skip connection and dual Markovian discriminators[J]. IEEE Transactions on Computational Imaging, 2021, 7: 1134-1147.

[23] CUI X R, SHEN T, HUANG J L, et al. Infrared and visible image fusion based on BEMD and improved visual saliency[J]. Infrared Technology, 2020, 42(11): 1061-1071 (in Chinese).

[24] YE X Y, GAO S L, LI F M. ACE-STDN: an infrared small target detection network with adaptive contrast enhancement[J]. Journal of Infrared and Millimeter Waves, 2023, 42(5): 701-708.

[25] JOO M G, LEE K T, SANG P, et al. Laser-generated focused ultrasound transmitters with frequency-tuned outputs over sub-10-MHz range[J]. Applied Physics Letters, 2019, 115(15): 154103.
